ActionParty: The AI Bridging Video Games to Drone Swarm Control

A new AI model, ActionParty, tackles multi-agent action binding in generative video games, offering a blueprint for intuitively commanding complex drone swarms with game-like precision and reliability.

TL;DR: Researchers have developed ActionParty, an AI model that finally cracks the problem of simultaneously controlling multiple independent agents in generative video environments. This breakthrough in "action binding" means we're a significant step closer to intuitively commanding complex drone swarms with game-like precision, making multi-drone operations far more accessible and reliable.

Commanding the Swarm: A New Era for Drone AI

For years, the dream of commanding a drone swarm like a general overseeing troops on a battlefield has felt just out of reach. We've seen impressive choreographed light shows, but truly dynamic, intuitive control over a group of drones, each executing specific, independent tasks, remains a significant challenge. This isn't about flying one drone and having others mimic it; it's about telling a red drone to scout left while a blue drone secures an objective, all in real-time. A new paper, "ActionParty: Multi-Subject Action Binding in Generative Video Games," introduces an AI model that directly addresses this hurdle, promising a future where drone swarm operations are as fluid and responsive as playing your favorite real-time strategy game.

Why Swarms Get Confused: The "Action Binding" Problem

Current generative AI models, particularly those built on diffusion techniques for video generation, are powerful. They can create realistic, interactive simulated environments, which researchers call "world models." The catch? They typically shine in single-agent scenarios. Ask them to simulate one drone moving, and they do well. Ask them to simulate five drones, each with a distinct, user-defined action, and things fall apart.

The core issue is "action binding." These models struggle to consistently associate a specific action (e.g., "move right") with its corresponding subject (e.g., "the red triangle"). The result is a chaotic mess where actions are ignored, applied to the wrong subject, or mixed up entirely.

This fundamental limitation bottlenecks complex multi-drone operations, which demand precise individual control within a collective. Developing robust, dynamic multi-drone behaviors is costly and time-consuming, and it often produces systems that lack the accuracy and adaptability real-world scenarios need. Consider a search and rescue swarm in which you can't reliably tell one drone from another, or can't get the drones to execute distinct commands without confusion. That's the problem ActionParty aims to solve.

Inside ActionParty: How AI Keeps Track of Every Drone

ActionParty tackles this "action binding" problem head-on by introducing two key innovations: subject state tokens and a spatial biasing mechanism. Think of subject state tokens as dedicated, persistent latent variables that capture the unique state of each individual subject (or drone, in our case) in the scene—its position, orientation, and identity. These aren't just pixels; they're abstract representations that the AI uses to keep track of who's who and what they're doing.
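
To make the idea concrete, here is a minimal Python sketch of the information one subject state token is meant to carry. In ActionParty these tokens are learned latents inside the model. The `SubjectState` class and the `to_token` packing below are hypothetical illustrations that assume a 2D scene, with identity encoded as a one-hot index and heading as an angle.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SubjectState:
    """Hypothetical per-subject state: identity, position, and orientation."""
    subject_id: int
    x: float
    y: float
    heading_deg: float  # 0 = facing +x, counter-clockwise positive


def to_token(state: SubjectState, num_subjects: int) -> list[float]:
    """Pack one subject's state into a flat feature vector.

    A real model learns this representation; the hand-built vector only shows
    which pieces of information the token is supposed to hold."""
    identity = [1.0 if i == state.subject_id else 0.0 for i in range(num_subjects)]
    rad = math.radians(state.heading_deg)
    # Encode heading as (cos, sin) so 0 and 360 degrees map to the same value.
    return identity + [state.x, state.y, math.cos(rad), math.sin(rad)]


states = [SubjectState(0, 2.0, 3.0, 0.0), SubjectState(1, 5.0, 1.0, 90.0)]
tokens = [to_token(s, num_subjects=len(states)) for s in states]
```

The identity slots never change between frames, while the position and heading slots do. That split is what lets a token answer both "who is this?" and "where is it now?".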

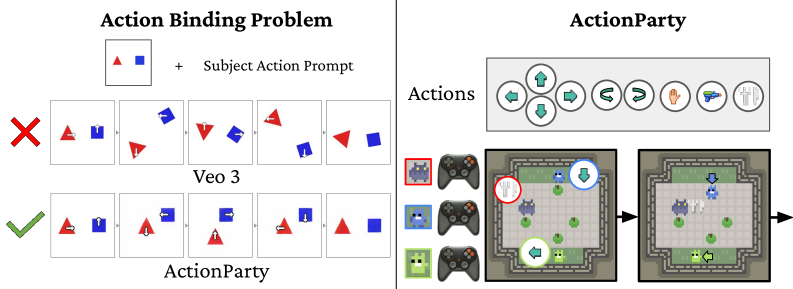

Figure 1: Left: A typical failure of text-to-video models when attempting multi-subject action binding, where actions are misattributed. Right: ActionParty successfully controls multiple subjects with distinct actions.

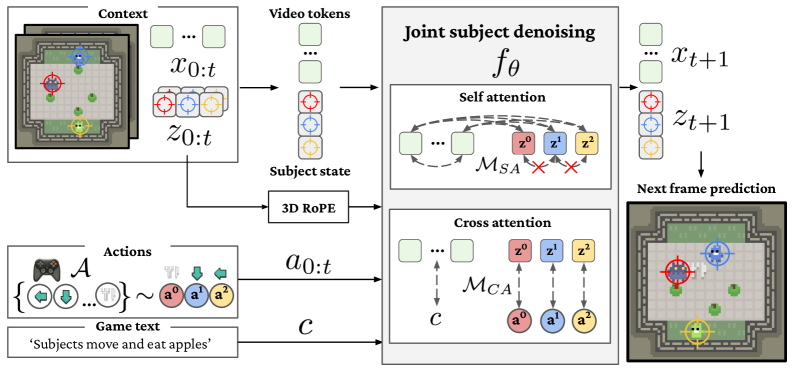

The model works by taking initial video frames and the current subject states as context, along with specific action inputs for each subject and a text description of the environment. It then generates the next video frame. The core of ActionParty is a Diffusion Transformer (DiT). This DiT jointly models both the global video latents (what the entire scene looks like) and the individual subject state tokens.

Figure 2: The ActionParty pipeline. Contextual video frames and subject states are processed by a Diffusion Transformer (DiT) to generate the next frame, conditioned on individual action inputs and a scene description.
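
The autoregressive loop described above can be sketched as follows. This is a toy built on stated assumptions. `toy_world_model` is a hypothetical stand-in that moves grid subjects directly instead of running a Diffusion Transformer. The `window` parameter is an assumed limit on how many past frames are fed back as context. What the sketch does show is the interface: context frames, current subject states, one action per subject, and a scene description go in, and one new frame plus updated states come out.

```python
# Toy types (hypothetical): a "frame" is a character grid, a state is a (row, col) cell.
Frame = list[list[str]]
State = tuple[int, int]

MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1), "stay": (0, 0)}


def toy_world_model(context: list[Frame], states: list[State],
                    actions: list[str], text: str) -> tuple[Frame, list[State]]:
    """Hypothetical stand-in for the generative model. It applies each subject's
    own action to that subject's state and redraws the grid. ActionParty would
    instead denoise the next frame with its DiT. `text` is ignored here."""
    h, w = len(context[-1]), len(context[-1][0])
    new_states = []
    for (r, c), action in zip(states, actions):
        dr, dc = MOVES[action]
        # Clamp so subjects stay inside the grid.
        new_states.append((min(max(r + dr, 0), h - 1), min(max(c + dc, 0), w - 1)))
    frame = [["." for _ in range(w)] for _ in range(h)]
    for i, (r, c) in enumerate(new_states):
        frame[r][c] = str(i)
    return frame, new_states


def rollout(model, first_frame: Frame, states: list[State],
            action_seq: list[list[str]], text: str, window: int = 4):
    """Autoregressive loop: each step sees at most `window` recent frames plus
    the current subject states, and produces one new frame."""
    context = [first_frame]
    for actions in action_seq:  # one action per subject per step
        frame, states = model(context[-window:], states, actions, text)
        context.append(frame)
    return context, states


first = [["0", ".", "."], [".", ".", "."], [".", ".", "1"]]
frames, final_states = rollout(toy_world_model, first, [(0, 0), (2, 2)],
                               [["right", "up"], ["down", "up"]], "two agents on a grid")
```

The states returned by one step become the input to the next, so any binding error compounds over the rollout. This is why stable accuracy across autoregressive steps matters.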

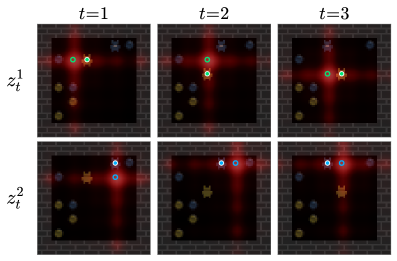

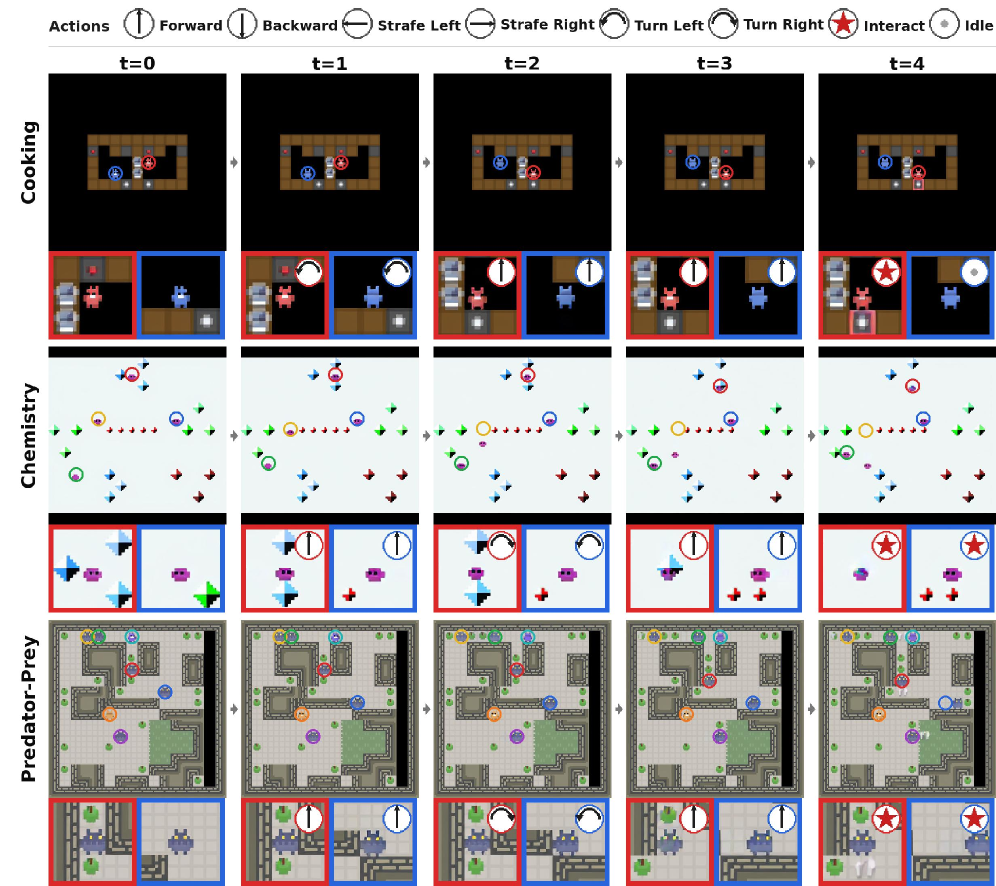

Within each DiT block, two specialized attention mechanisms come into play. First, a self-attention block with 3D RoPE (Rotary Positional Embedding) biasing (Figure 3) renders the subject states into the pixels of the video frame. This mechanism ensures that each subject's state information shapes its own visual representation in the generated video.

Figure 3: Self-attention with subject-video RoPE bias helps render subject states accurately into the video frame.
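
Rotary embeddings rotate query and key vectors by angles proportional to their positions. As a result, the attention score depends on the two positions only through their difference. The sketch below implements standard RoPE split across three axes (time, row, column) in plain Python. This article does not spell out how ActionParty assigns coordinates to subject tokens. The example assumes, hypothetically, that a subject token receives the spatiotemporal coordinates of its subject, so it scores highest against video patches at the same location.

```python
import math


def rotate_pairs(vec, pos, base=10000.0):
    """Standard 1D rotary embedding: rotate each (even, odd) pair of entries
    by the angle pos * base**(-2k/d), where k is the pair index."""
    d = len(vec)
    out = []
    for i in range(0, d, 2):
        theta = pos * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = vec[i], vec[i + 1]
        out += [x0 * c - x1 * s, x0 * s + x1 * c]
    return out


def rope_3d(vec, t, y, x):
    """3D RoPE: split the vector into three equal chunks (each of even length)
    and rotate them by the time, row, and column coordinate respectively."""
    n = len(vec) // 3
    return (rotate_pairs(vec[:n], t) + rotate_pairs(vec[n:2 * n], y)
            + rotate_pairs(vec[2 * n:], x))


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


# Hypothetical subject token placed at (t=2, row=5, col=5).
q = [1.0] * 12
same_place = dot(rope_3d(q, 2, 5, 5), rope_3d(q, 2, 5, 5))  # patch at the subject
elsewhere = dot(rope_3d(q, 2, 5, 5), rope_3d(q, 2, 8, 9))   # patch 3 rows, 4 cols away
```

Because only relative offsets matter, moving both the subject and the patch by the same amount leaves the score unchanged. This is a convenient property when subjects move from frame to frame.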

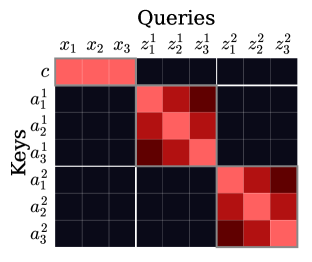

Second, a cross-attention block (Figure 4) with an explicit subject-action binding mechanism is used to update the subject states themselves based on the new action inputs. Crucially, the model specifically links each agent's action to its respective subject state token, preventing the actions from getting confused or misapplied. This disentanglement of global video rendering from individual action-controlled subject updates is what allows ActionParty to maintain identity consistency and accurate action following for multiple agents simultaneously.

Figure 4: Cross-attention bias explicitly links individual actions to their corresponding subject states, crucial for accurate action binding.
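
The binding idea can be illustrated with masked cross-attention in plain Python. This is a minimal sketch, not the paper's implementation. It assumes one query per subject-state token and tags each conditioning token with the subject that owns it (or `None` for shared tokens such as the scene text). A hard mask then blocks every subject from attending to another subject's action. The caption above describes the mechanism as a cross-attention bias; a `-inf` mask is the simplest extreme of such a bias.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]  # exp(-inf) evaluates to 0.0
    total = sum(exps)
    return [e / total for e in exps]


def bound_cross_attention(queries, keys, values, owners):
    """Single-head cross-attention from subject-state tokens to conditioning tokens.

    queries[i]: query vector of subject-state token i
    keys[j], values[j]: key and value vectors of conditioning token j
    owners[j]: index of the subject that token j belongs to, or None if shared
    Binding mask: subject i sees only its own action tokens and shared tokens.
    Each subject needs at least one allowed token."""
    d = len(queries[0])
    out = []
    for i, q in enumerate(queries):
        scores = []
        for k, owner in zip(keys, owners):
            score = sum(a * b for a, b in zip(q, k)) / math.sqrt(d)
            allowed = owner is None or owner == i
            scores.append(score if allowed else float("-inf"))
        weights = softmax(scores)
        out.append([sum(w * v[c] for w, v in zip(weights, values))
                    for c in range(len(values[0]))])
    return out


# Token 0 is shared scene text, token 1 is subject 0's action, token 2 is subject 1's.
queries = [[1.0, 0.0], [0.0, 1.0]]
keys = [[1.0, 1.0], [0.5, 0.0], [0.0, 0.5]]
owners = [None, 0, 1]
values_a = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
values_b = [[0.0, 0.0], [1.0, 0.0], [0.0, -5.0]]  # only subject 1's action changed
out_a = bound_cross_attention(queries, keys, values_a, owners)
out_b = bound_cross_attention(queries, keys, values_b, owners)
```

With this mask, changing subject 1's action cannot alter subject 0's update. That is the property that keeps actions from being misattributed.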

The Proof: ActionParty's Performance Under Pressure

ActionParty isn't just a theoretical concept; it delivers quantifiable results. The researchers rigorously evaluated their model on the challenging Melting Pot benchmark, a suite of diverse multi-agent environments. Here's what they found:

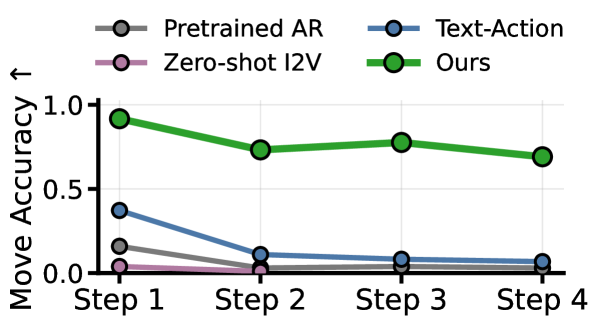

- Multi-Agent Control: The researchers present ActionParty as the first video world model capable of controlling up to seven players simultaneously, across 46 diverse environments. This is a significant leap beyond previous single-agent or loosely controlled multi-agent systems.

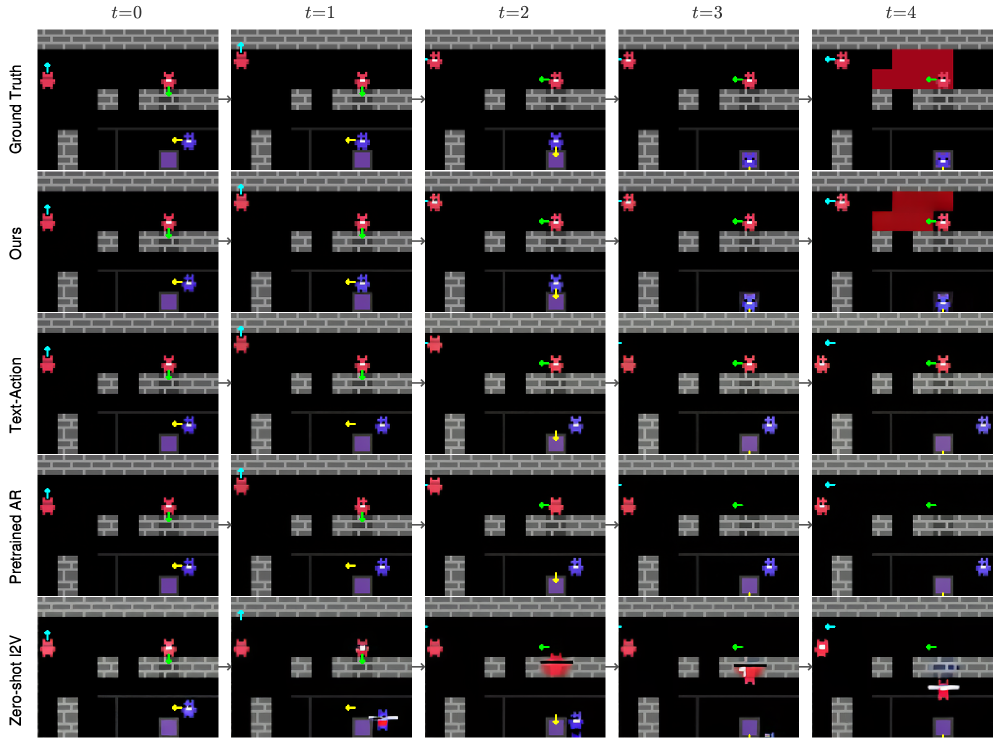

- Action-Following Accuracy: The model shows significant improvements in action-following accuracy compared to baselines. While other models quickly degrade, ActionParty maintains stable action binding across multiple autoregressive rollout steps (Figure 6).

- Identity Consistency: ActionParty also achieved superior identity consistency, meaning it keeps track of which agent is which throughout complex interactions, even as they move and interact. This is vital for reliable multi-agent operations.

- Robust Autoregressive Tracking: The system can robustly track subjects through complex interactions over time, predicting future states and frames based on continuous action inputs.

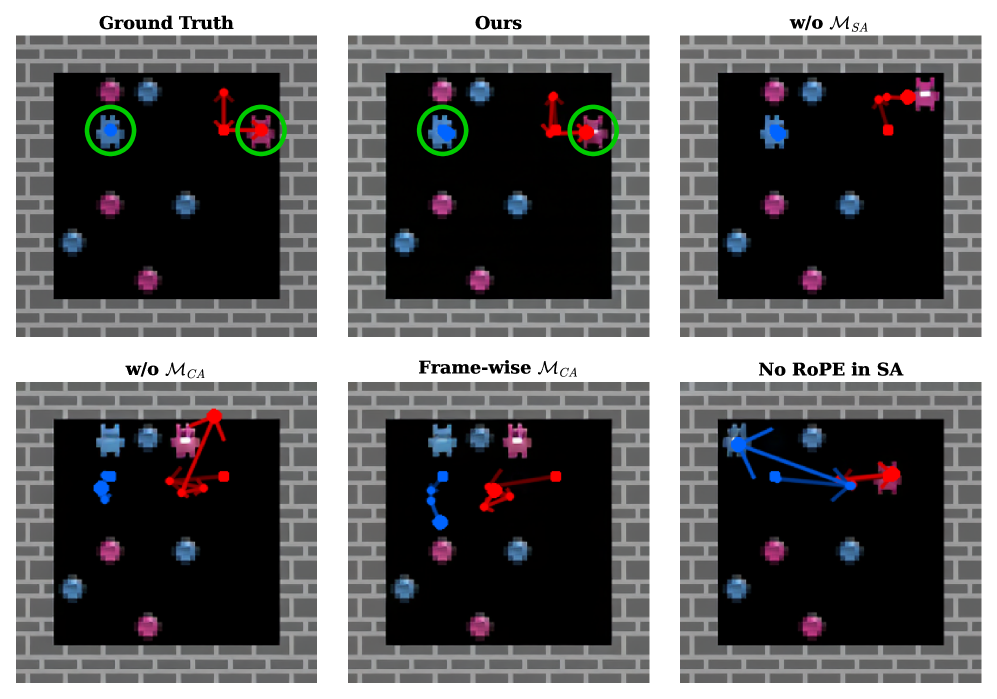

- Qualitative Superiority: Visual comparisons (Figure 5) clearly show ActionParty following ground truth actions precisely, whereas baselines fail to associate actions correctly or maintain consistent subject identities. Ablation studies (Figure 8) further confirmed that removing ActionParty's core components, the subject state tokens and the spatial biasing mechanism, leads directly to a loss of multi-character control.

Figure 5: Qualitative comparison showing ActionParty (ours) accurately following ground truth actions for multiple subjects, unlike baseline methods.

Figure 6: Movement accuracy (MA) over autoregressive steps. ActionParty maintains high accuracy over time, while baselines rapidly degrade.
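
This article does not formally define movement accuracy, so the function below is a hypothetical reading of the metric. At each autoregressive step, it computes the fraction of subjects whose tracked displacement matches the move they were commanded. It assumes grid positions (for example, read off subject predictions like the circles in Figure 7) and a five-action vocabulary. The paper's exact metric may differ.

```python
# Hypothetical action vocabulary: (row delta, column delta) per action.
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1), "stay": (0, 0)}


def movement_accuracy(prev_pos, next_pos, actions):
    """Fraction of subjects whose observed displacement equals their commanded move."""
    hits = 0
    for (r0, c0), (r1, c1), action in zip(prev_pos, next_pos, actions):
        hits += (r1 - r0, c1 - c0) == MOVES[action]
    return hits / len(actions)


def ma_curve(tracks, action_seq):
    """tracks[t] holds every subject's tracked position at step t.
    Returns one MA value per autoregressive step, as plotted over time."""
    return [movement_accuracy(tracks[t], tracks[t + 1], action_seq[t])
            for t in range(len(action_seq))]


tracks = [[(0, 0), (2, 2)], [(0, 1), (1, 2)], [(0, 1), (0, 2)]]
actions = [["right", "up"], ["down", "up"]]
curve = ma_curve(tracks, actions)  # subject 0 ignores "down" at step 2
```

A model with weak binding tends to show this curve sliding downward as errors in earlier frames feed into later ones.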

Figure 7: ActionParty successfully generates frames and tracks subject predictions (circles) for 2-, 4-, and 7-player scenarios, demonstrating robust control across varying complexities.

Figure 8: Ablation studies confirm the necessity of ActionParty's introduced components for successful multi-character control.

Beyond Single Drones: The Future of Swarm Operations

This research marks a significant advance for anyone building or operating drone swarms. The ability to precisely and intuitively control multiple agents simultaneously, without confusion, transforms the potential of multi-drone operations.

- Intuitive Swarm Control: Forget tedious pre-programming or complex individual control interfaces. An operator could use a tablet or even gestures to direct a swarm, assigning specific roles and paths to individual drones or sub-groups, much like commanding units in a real-time strategy game. A single high-level command like "Drone 1, investigate that anomaly; Drone 2, provide overwatch" could become directly actionable and reliable.

- Complex Mission Execution: This enables dynamic, on-the-fly task allocation for missions like search and rescue, precision agriculture, large-scale construction site monitoring, or logistics. A swarm could autonomously adapt to changing conditions, with individual drones performing distinct yet coordinated actions, from mapping specific zones to delivering payloads or providing sensory input.

- Enhanced Situational Awareness: By maintaining consistent identities and tracking individual drone states, operators gain clearer situational awareness. They know exactly what each drone is doing and where it is, reducing the cognitive load and potential for error in high-stakes scenarios.

- Reduced Operator Workload: Automating the "action binding" means operators can focus on higher-level strategic goals rather than micromanaging individual drone movements. This shifts the paradigm from piloting to commanding, making complex operations accessible to fewer, more efficient human supervisors.
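
Before a command reaches a model like ActionParty, an interface has to bind each clause to a specific drone. The parser below is a hypothetical front end that handles only the rigid "Drone N, task; Drone M, task" phrasing from the example above. It refuses clauses it cannot attribute rather than guessing, which mirrors the binding problem at the language level. Turning each free-text task into per-step actions is a separate problem.

```python
import re

# Hypothetical command grammar: "Drone <id>, <task>" clauses separated by ";".
CLAUSE = re.compile(r"\s*Drone\s+(\d+)\s*,\s*(.+?)\s*$", re.IGNORECASE)


def bind_commands(utterance: str) -> dict[int, str]:
    """Map each drone id to its task, rejecting ambiguous input."""
    tasks: dict[int, str] = {}
    for clause in utterance.split(";"):
        if not clause.strip():
            continue  # tolerate a trailing semicolon
        m = CLAUSE.match(clause)
        if m is None:
            raise ValueError(f"cannot bind clause to a drone: {clause!r}")
        drone_id = int(m.group(1))
        if drone_id in tasks:
            raise ValueError(f"drone {drone_id} was given two tasks")
        tasks[drone_id] = m.group(2)
    return tasks


tasks = bind_commands("Drone 1, investigate that anomaly; Drone 2, provide overwatch")
```

Raising an error on an unattributed clause is a deliberate choice: silently dropping or reassigning a task would reproduce exactly the misattribution the model is meant to avoid.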

Grounding the Dream: Real-World Hurdles for Swarm AI

While ActionParty is a significant step forward, its current limitations and the path to real-world drone deployment warrant consideration.

First, the evaluation was conducted on the Melting Pot benchmark, which involves 2D grid-world environments. Translating this to the chaotic, physics-driven 3D reality of actual drones presents a substantial sim-to-real gap. Real drones operate under complex aerodynamic forces, battery constraints, and contend with environmental factors like wind, rain, and obstacles, none of which are explicitly modeled in Melting Pot. Integrating realistic drone physics and sensor models would be the next critical step.

Second, generative AI models, especially Diffusion Transformers, are computationally intensive. Training ActionParty likely requires significant GPU clusters, and even real-time inference for a dynamic swarm on edge AI hardware like NVIDIA Jetson or Qualcomm Snapdragon could be challenging. Optimizing the model for lower latency and reduced computational footprint will be essential for practical deployment on resource-constrained drones.

Third, ActionParty primarily addresses the execution of actions. It takes action inputs and generates the corresponding video. However, the problem of generating those intelligent, high-level action commands for a swarm remains. This would likely require another AI layer, perhaps one that translates human language instructions or strategic goals into discrete actions for each drone. For example, the related work "Beyond Referring Expressions: Scenario Comprehension Visual Grounding" hints at how an AI could interpret nuanced commands like "investigate the area where the anomaly occurred," which would then need to be translated into specific move_forward, turn_left actions for individual drones.
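
As a deliberately simple illustration of that final translation step, the planner below turns a target grid cell into a sequence of `move_forward`, `turn_left`, and `turn_right` actions for one drone. The action names follow the examples in the paragraph above; `turn_right` is an added assumption. The obstacle-free grid and the greedy x-then-y strategy are also hypothetical. A real swarm planner would need collision avoidance and coordination between drones.

```python
HEADINGS = ["N", "E", "S", "W"]  # clockwise order; N is +y, E is +x


def plan_actions(pos, heading, target):
    """Hypothetical greedy planner on an obstacle-free grid: correct x first, then y.
    Returns a list of 'turn_left', 'turn_right', and 'move_forward' actions."""
    x, y = pos
    tx, ty = target
    actions = []

    def face(desired):
        nonlocal heading
        while heading != desired:
            clockwise_turns = (HEADINGS.index(desired) - HEADINGS.index(heading)) % 4
            if clockwise_turns == 3:  # one left turn beats three right turns
                actions.append("turn_left")
                heading = HEADINGS[(HEADINGS.index(heading) - 1) % 4]
            else:
                actions.append("turn_right")
                heading = HEADINGS[(HEADINGS.index(heading) + 1) % 4]

    if tx != x:
        face("E" if tx > x else "W")
        actions.extend(["move_forward"] * abs(tx - x))
    if ty != y:
        face("N" if ty > y else "S")
        actions.extend(["move_forward"] * abs(ty - y))
    return actions


plan = plan_actions((0, 0), "N", (2, 1))
```

Each drone would get its own plan, and each step of every plan would become one per-subject action input of the kind ActionParty consumes.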

Finally, while the paper demonstrates control for up to seven agents, real-world drone swarms can involve dozens or even hundreds of units. Scaling ActionParty to manage such large numbers of autonomous agents while maintaining performance and reliability is an open question. There's also the challenge of robustness to real-world communication latency, sensor noise, and potential drone failures, which are not typically simulated in generative game environments.

Building Your Own Swarm Brain: Not Yet for the Garage

For the average hobbyist or even a small-scale builder, directly replicating ActionParty in its current form is likely out of reach. This isn't a project you can tackle with off-the-shelf Raspberry Pi or ArduPilot components. The model requires significant AI/ML expertise, substantial computational resources for training (think multiple high-end GPUs), and potentially large synthetic datasets for fine-tuning.

However, the underlying concepts and potentially open-source implementations (if released) could inspire future, more accessible projects. As edge AI hardware becomes more powerful and efficient, and as pre-trained models become more readily available, simplified versions or specialized applications of this technology might become feasible for advanced hobbyists to experiment with smaller drone groups. For now, this is squarely in the realm of well-funded research labs and specialized engineering teams.

The Broader AI Ecosystem Powering Swarms

This work doesn't exist in a vacuum. It builds on, and is complemented by, several other advances. For instance, creating the realistic, dynamic simulation environments needed to train and test advanced drone swarm control systems like ActionParty is a challenge in itself. The "Generative World Renderer" paper by Huang et al. proposes methods to build realistic generative world renderers from AAA games, offering a promising sandbox for developing and refining these complex AI behaviors before they ever touch a real drone.

Furthermore, effectively commanding a swarm requires more than just action execution; it demands intelligent interpretation of user intent. "Beyond Referring Expressions: Scenario Comprehension Visual Grounding" by He et al. directly addresses this, enabling AI agents to go beyond simple object references to truly understand complex, contextual commands. This capability would be crucial for translating a human operator's nuanced instructions into the precise action inputs ActionParty needs.

As these swarms become more autonomous and undertake longer, more intricate missions, managing their collective and individual memories becomes critical. "Novel Memory Forgetting Techniques for Autonomous AI Agents" by Fofadiya and Tiwari explores how to keep an AI agent's memory relevant and efficient, discarding outdated data while retaining critical information. This would be essential for sustained, coherent multi-drone operations in which drones need to remember past observations or task assignments.

And while ActionParty focuses on control, individual drones still need to perceive their environment effectively. "Steerable Visual Representations" by Ruthardt et al. could allow each drone in a swarm to dynamically focus its visual attention on specific, relevant cues, enhancing the swarm's overall situational awareness and task execution.

A New Era of Swarm Control is Dawning

ActionParty represents a tangible stride towards a future where drone swarms are not just collections of autonomous units, but rather cohesive, intelligent entities controlled with an unprecedented level of intuition and precision. It's a crucial step in transforming complex multi-drone operations from intricate programming challenges into dynamic, game-like command scenarios.

Paper Details

Title: ActionParty: Multi-Subject Action Binding in Generative Video Games
Authors: Alexander Pondaven, Ziyi Wu, Igor Gilitschenski, Philip Torr, Sergey Tulyakov, Fabio Pizzati, Aliaksandr Siarohin
Published: April 2026
arXiv: 2604.02330

Written by

The Flight Desk

Sharing knowledge about drones and aerial technology.