AirGuard: Radar-AI Fusion for Smarter Drone vs. Bird Identification

A new system, AirGuard, leverages integrated sensing and communications (ISAC) with radar and deep learning to precisely differentiate between UAVs and birds, enhancing airspace safety and management.

TL;DR: AirGuard is a simulated system that uses radar-like signals and AI to tell the difference between drones and birds. By analyzing signal echoes, it extracts unique "fingerprints" for each, which a neural network then uses to classify targets, promising more precise airspace monitoring.

Decoding the Skies: Knowing Your Airborne Neighbors

It's a common scenario: a drone is flying, and suddenly, a bird appears, prompting a swift maneuver or a moment of concern. Collision avoidance is a fundamental challenge. But what if a drone could not just detect an object, but identify whether it's a feathered friend or another piece of airborne technology? That's the central concept behind "AirGuard," a new system proposed by Luo et al. This research addresses the critical problem of differentiating between UAVs and birds, not solely for collision avoidance, but for a more nuanced understanding of our shared airspace.

The Ambiguity Above: Why Identification Matters

Current drone sensing typically relies on basic obstacle detection, proximity sensors, or general radar. While these methods are effective for avoiding something, they often lack critical context. A drone might swerve to avoid a bird, consuming energy and deviating from its path, even when a less aggressive maneuver would suffice if it knew it was a small, predictable bird rather than a faster, heavier drone. This ambiguity creates inefficiencies and can introduce unnecessary risk in complex environments. More advanced systems might use cameras, but vision-based identification struggles with range, lighting, and occlusions, adding significant processing overhead and latency. What's needed is a robust, all-weather solution that can provide precise identification without overburdening the drone's computational resources.

AirGuard's Secret: Turning Radar Echoes into Unique IDs

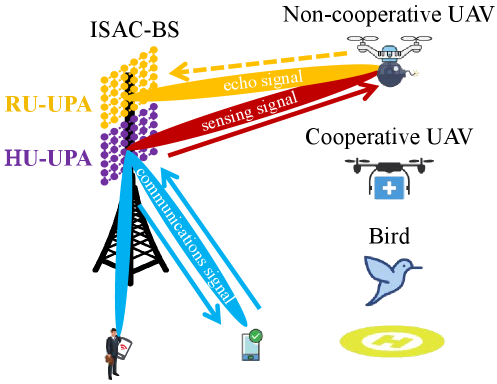

AirGuard tackles this challenge by leveraging Integrated Sensing and Communications (ISAC) principles. A drone emits a radar signal and then listens closely to the echoes. The key isn't merely detecting the echo, but extracting subtle patterns unique to the object's physical characteristics and motion.

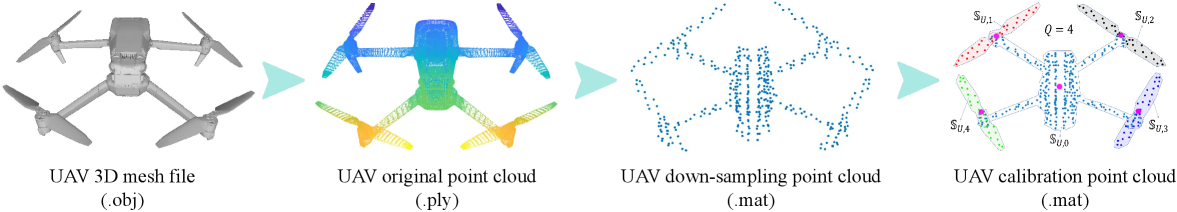

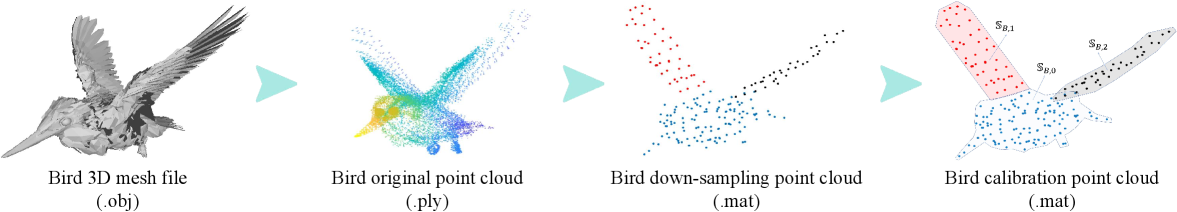

The process begins with detailed mathematical models for both UAVs and birds, defining how their movements and structures reflect radio waves.

Figure 1: ISAC scenario for low-altitude target monitoring.

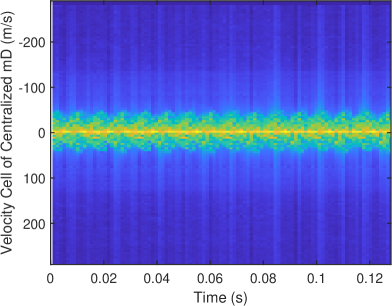

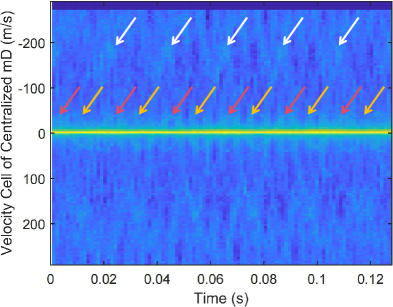

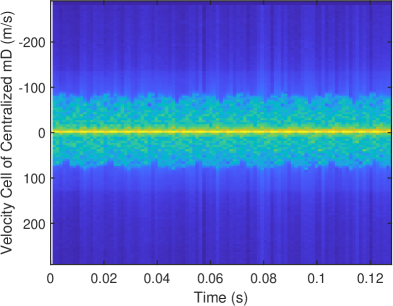

From these simulated echoes, AirGuard extracts two crucial data types: the centralized micro-Doppler (cmD) spectrum and the high resolution range profile (HRRP).

- The cmD spectrum captures the fine-grained frequency shifts caused by the target's internal movements – think of a bird's flapping wings versus a drone's rotating propellers. These create distinct "micro-Doppler" signatures.

- The HRRP provides a high-resolution "radar image" of the target's physical structure along the range dimension, revealing its shape and size profile.
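
To make the two features above more concrete, here is a minimal sketch in standard-library Python. It is not the paper's signal model: the rotating blade-tip scatterer, the stepped-frequency waveform, and every name and parameter (`rotor_echo`, `micro_doppler_spectrogram`, `stepped_frequency_echo`, `range_profile`, the window and hop sizes) are assumptions made for illustration. It also assumes that "centralized" means the target's bulk Doppler has already been removed so that the body sits at zero Doppler, which is one plausible reading rather than a definition taken from the paper.

```python
import cmath
import math

C = 3.0e8  # speed of light in m/s


def dft(x, inverse=False):
    """Naive O(N^2) discrete Fourier transform; adequate for short frames."""
    n = len(x)
    sign = 1.0 if inverse else -1.0
    out = []
    for k in range(n):
        acc = sum(x[m] * cmath.exp(sign * 2j * math.pi * k * m / n) for m in range(n))
        out.append(acc / n if inverse else acc)
    return out


def rotor_echo(n_samples, prf, wavelength, blade_len, rot_hz):
    """Slow-time echo of a static body plus one rotating blade-tip scatterer."""
    echo = []
    for i in range(n_samples):
        t = i / prf
        # Radial displacement of the blade tip as it rotates.
        dr = blade_len * math.sin(2 * math.pi * rot_hz * t)
        tip = 0.5 * cmath.exp(-4j * math.pi * dr / wavelength)
        # The body is modelled as a constant return, i.e. zero Doppler
        # (bulk motion is assumed to be removed already).
        echo.append(1.0 + tip)
    return echo


def micro_doppler_spectrogram(echo, win=64, hop=32):
    """Short-time Fourier transform magnitude; zero Doppler is column win // 2."""
    hann = [0.5 - 0.5 * math.cos(2 * math.pi * i / (win - 1)) for i in range(win)]
    frames = []
    for start in range(0, len(echo) - win + 1, hop):
        seg = [echo[start + i] * hann[i] for i in range(win)]
        spec = [abs(v) for v in dft(seg)]
        # Swap halves so negative Doppler is on the left (an "fftshift").
        frames.append(spec[win // 2:] + spec[:win // 2])
    return frames


def stepped_frequency_echo(ranges_m, amps, f0, df, n_steps):
    """Echo of point scatterers sampled at frequencies f0, f0 + df, ..."""
    return [
        sum(a * cmath.exp(-4j * math.pi * (f0 + k * df) * r / C)
            for r, a in zip(ranges_m, amps))
        for k in range(n_steps)
    ]


def range_profile(freq_samples):
    """HRRP magnitude: inverse DFT across the frequency steps."""
    return [abs(v) for v in dft(freq_samples, inverse=True)]
```

Each row of the spectrogram is one time frame and each column one Doppler bin, so stacking the rows gives the kind of image a CNN can consume. For the range profile, the bin spacing is c / (2 · n_steps · df); with the illustrative values of 64 steps of 10 MHz that works out to roughly 0.23 m per bin.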

Figure 2: Process diagram to obtain the UAV calibration point cloud.

Figure 4: Process diagram to obtain the bird calibration point cloud.

These two data types are then converted into image representations. This conversion is strategic because neural networks, specifically Convolutional Neural Networks (CNNs), excel at image recognition. AirGuard employs a dual feature fusion network, which takes both the cmD spectrum image and the HRRP image as inputs. This dual input enables the network to combine structural (HRRP) and kinematic (cmD) information for more robust classification.
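
The fusion idea can be sketched as a forward pass with two small branches whose features are concatenated before a shared classifier. This is only an illustration of feature-level fusion, not the network from the paper: the layer sizes, the single conv, ReLU, and pooling stage per branch, the random untrained weights, and all names (`branch_features`, `fuse_and_classify`, `random_params`) are hypothetical, and it uses plain Python lists rather than a deep learning framework.

```python
import math
import random


def conv2d_valid(image, kernel):
    """Single-channel 'valid' 2D cross-correlation, as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
         for c in range(out_w)]
        for r in range(out_h)
    ]


def branch_features(image, kernels):
    """One branch: conv -> ReLU -> global average pooling, one value per kernel."""
    feats = []
    for kernel in kernels:
        fmap = conv2d_valid(image, kernel)
        relu = [max(0.0, v) for row in fmap for v in row]
        feats.append(sum(relu) / len(relu))
    return feats


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_and_classify(cmd_image, hrrp_image, params):
    """Concatenate both branches' features, then apply a linear layer and softmax."""
    fused = (branch_features(cmd_image, params["cmd_kernels"])
             + branch_features(hrrp_image, params["hrrp_kernels"]))
    logits = [sum(w * f for w, f in zip(row, fused)) + b
              for row, b in zip(params["fc_weights"], params["fc_bias"])]
    return fused, softmax(logits)


def random_params(n_kernels=4, ksize=3, n_classes=2, seed=0):
    """Untrained placeholder weights; a real model would learn these from data."""
    rng = random.Random(seed)

    def kernel():
        return [[rng.uniform(-1, 1) for _ in range(ksize)] for _ in range(ksize)]

    return {
        "cmd_kernels": [kernel() for _ in range(n_kernels)],
        "hrrp_kernels": [kernel() for _ in range(n_kernels)],
        "fc_weights": [[rng.uniform(-1, 1) for _ in range(2 * n_kernels)]
                       for _ in range(n_classes)],
        "fc_bias": [0.0] * n_classes,
    }
```

Keeping separate kernels per branch lets each one specialize, in principle, on its own image type, while the shared final layer sees kinematic and structural features side by side. With random weights the output probabilities are meaningless; the point is only the data flow.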

Figure 5: (a)

Figure 6: (b)

Figure 7: (c)

Figures 5, 6, and 7 illustrate examples of these cmD and HRRP images, showcasing the distinct patterns the CNN learns to differentiate. The network was trained and validated on a dataset of 237,600 simulated cmD and HRRP image samples, exposing it to a wide variety of target characteristics.

Simulated Success: Promising Early Results

The paper demonstrates AirGuard's effectiveness through simulation. The abstract does not report specific accuracy figures for bird vs. UAV classification; the authors state only that the system's "effectiveness has been demonstrated by simulation results." That wording suggests the classifier separates the two classes well within the simulated environment, though it leaves the margin unquantified. Even so, it is an important first step in establishing the feasibility of using these combined radar features for classification.

Why This Matters: Real-World Impact for Drones

Consider a future where your drone isn't just avoiding a "blip" but knows it's a pigeon or a DJI Mavic. This isn't merely academic; it has practical implications for:

- Smarter Collision Avoidance: Drones could apply different avoidance strategies based on the target. A slow, predictable bird might warrant a gentle altitude change, while an oncoming drone requires a more aggressive, immediate evasion. This optimizes flight paths and battery life.

- Airspace Management: For fleet operators or UTM (Unmanned Traffic Management) systems, knowing the composition of airborne objects is crucial. Are those unauthorized drones or just local wildlife? AirGuard could provide that intelligence.

- Security and Surveillance: Identifying rogue drones near sensitive areas becomes much easier if you can filter out natural avian activity.

- Environmental Monitoring: For drones used in wildlife observation, distinguishing between other UAVs and the target species (or just non-target birds) prevents false positives and improves data quality.
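
As a rough illustration of the first point in the list above, class-dependent avoidance could look something like the following. Everything here is hypothetical: the `Detection` fields, the maneuver names, and the confidence and range thresholds are placeholders chosen for the example, not values from the paper or from any flight stack.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    label: str          # "bird" or "uav", as produced by a classifier
    confidence: float   # classifier probability for that label, 0..1
    range_m: float      # distance to the target in metres


def choose_maneuver(det, min_confidence=0.8, near_range_m=30.0):
    """Pick an avoidance action; thresholds are illustrative placeholders."""
    if det.label not in ("bird", "uav"):
        raise ValueError(f"unknown label: {det.label!r}")
    if det.confidence < min_confidence or det.range_m < near_range_m:
        # Uncertain or already close: fall back to the conservative behaviour.
        return "aggressive_evasion"
    if det.label == "bird":
        return "gentle_altitude_change"
    return "aggressive_evasion"
```

The design choice worth noting is the fallback: a gentler maneuver is only selected when the classifier is confident and the target is still far away, so a misclassification costs efficiency rather than safety.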

The Unflown Reality: Limitations and Future Challenges

While promising, AirGuard, in its current state, remains a simulated proof-of-concept. Several factors require consideration before real-world deployment:

- Simulation vs. Reality: The 237,600 training samples are simulated. Real-world cmD and HRRP signatures can be far more complex and noisy, affected by weather, target aspect angles, and environmental clutter. Generating a robust real-world dataset will be a massive undertaking.

- Hardware Integration: The paper discusses an ISAC system. While the concept is sound, integrating a compact, lightweight, and low-power radar system capable of generating these high-resolution profiles onto a mini-drone presents a significant engineering challenge.

- Computational Load: Running a CNN on a drone's embedded processor, especially for real-time identification, will require efficient hardware (e.g., dedicated AI accelerators) and optimized models.

- Distinguishing Between Drone Types: The paper focuses on UAV vs. Bird. What about differentiating between various types of UAVs? Or small insects that might have similar radar signatures to birds? The classification capabilities may need to expand.

- Communication Range and Speed: The "communications" part of ISAC isn't detailed regarding how it aids classification or if it's purely for data offloading. The latency from sensing to decision is critical for fast-moving objects.
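
The latency concern in the last point comes down to simple arithmetic: while the system is still deciding, the target keeps closing. The sketch below uses made-up numbers purely to show the relationship; the function names and example values are assumptions, not figures from the paper.

```python
import math


def distance_closed_m(closing_speed_mps, latency_s):
    """Distance the gap shrinks while sensing and classification are running."""
    return closing_speed_mps * latency_s


def reaction_margin_s(range_m, closing_speed_mps, latency_s):
    """Time left to maneuver once a decision is available."""
    if closing_speed_mps <= 0:
        return math.inf  # target is not approaching
    return range_m / closing_speed_mps - latency_s
```

For instance, at a hypothetical closing speed of 30 m/s and a sensing-to-decision latency of 0.25 s, the gap shrinks by 7.5 m before any maneuver begins; from an initial range of 60 m that leaves 1.75 s to react.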

A Hobbyist's Dream? Not Yet.

In short, no. Replicating AirGuard is not a weekend project for a hobbyist. The core components involve:

- Specialized Radar Hardware: This requires sophisticated compact radar capable of emitting and receiving signals suitable for cmD and HRRP extraction, far beyond standard hobbyist sensors.

- Signal Processing Expertise: Extracting cmD and HRRP from raw radar echoes demands advanced signal processing algorithms, typically implemented in MATLAB or Python with specialized libraries.

- Deep Learning Development: While CNNs are accessible, training a robust model necessitates substantial computational resources and a massive, well-labeled dataset (which, for now, remains simulated).

- Open Source: The paper does not mention any open-source code or datasets, which is typical for initial research papers.

This firmly resides in the realm of academic and industrial research, requiring significant investment in hardware, data collection, and specialized engineering.

Beyond Identification: AirGuard in a Connected Drone Ecosystem

AirGuard's identification capabilities feed directly into a broader ecosystem of drone autonomy. Once a drone identifies a threat, it must act decisively and safely. This is where related research becomes crucial. For example, "A Feasibility-Enhanced Control Barrier Function Method for Multi-UAV Collision Avoidance" by Zhong et al. (https://arxiv.org/abs/2603.13103) outlines the next logical step: how drones can reliably avoid those identified threats, especially in crowded, multi-drone environments. AirGuard identifies what an object is, and control barrier functions dictate how to safely maneuver.

Furthermore, a drone's understanding of its environment benefits significantly from persistent world modeling. "Towards Spatio-Temporal World Scene Graph Generation from Monocular Videos" by Peddi et al. (https://arxiv.org/abs/2603.13185) explores how drones can construct richer, 3D models of their surroundings. This enhances the ability to predict trajectories and plan safer avoidance paths, even when objects are temporarily out of sight – a vital complement to AirGuard's instantaneous identification.

Finally, effective avoidance necessitates comprehensive awareness. While focused on ground robots, "Panoramic Multimodal Semantic Occupancy Prediction for Quadruped Robots" by Zhao et al. (https://arxiv.org/abs/2603.13108) underscores the need for 360° perception. Drones also require this robust occupancy prediction to map the space around identified objects, ensuring safe passage even in challenging conditions. AirGuard identifies; panoramic occupancy mapping helps the drone understand the context of that identification within its immediate surroundings.

Accurately differentiating a drone from a bird using radar and AI would be a significant step for drone safety and air traffic management. The challenge now lies in carrying this simulation-validated concept into the messy, unpredictable reality of our skies.

Paper Details

Title: AirGuard: UAV and Bird Recognition Scheme for Integrated Sensing and Communications System

Authors: Hongliang Luo, Zhonghua Chu, Tengyu Zhang, Chuanbin Zhao, Bo Lin, Feifei Gao

Published: March 2026 (arXiv)

arXiv: 2603.13112

Written by Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.