Taming 6G's Near-Field: JSSAnet for Ultra-Reliable Drone Communication

A new paper introduces JSSAnet, a neural network designed for efficient near-field channel estimation in 6G's extremely large-scale antenna arrays, promising robust, ultra-fast data links for drones.

TL;DR: This research introduces JSSAnet, a neural network designed to significantly improve near-field channel estimation for 6G communication using Extremely Large-Scale Antenna Arrays (ELAA). It tackles the complex near-field challenges by partitioning channels and applying a novel joint spatial attention mechanism, promising highly reliable and ultra-fast data links essential for advanced drone operations.

The New Wireless Frontier for Drones

For drone operators, builders, and engineers, robust communication isn't just a feature; it's the backbone of autonomy and reliability. As we push towards 6G, the promise of unheard-of data rates and ultra-low latency comes with a catch: the physics of massive antenna arrays creates complex "near-field" communication challenges. This paper dives into a smart solution, JSSAnet, aiming to make those theoretical 6G speeds a practical reality for drones, unlocking new levels of swarm coordination and real-time data processing.

Why Near-Field Comms are a Headache

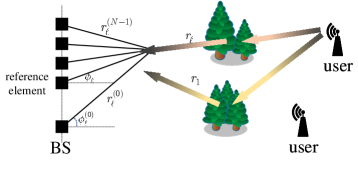

Current 5G systems are impressive, but 6G aims higher, leveraging ELAA (Extremely Large-Scale Antenna Arrays) with hundreds or thousands of antennas. With an aperture that large, the Rayleigh distance (roughly 2D²/λ for array aperture D and wavelength λ) marks the edge of the near field, and it stretches to tens or even hundreds of meters, so many users sit inside it. There, electromagnetic waves no longer arrive as simple flat planes (far-field). Instead, they arrive with spherical wavefronts (near-field), as conceptually illustrated in Figure 1. The result is a channel whose phase varies non-linearly across the array, which makes it much harder to predict and manage.

Figure 1: The near-field uplink channel with spherical waves and several scatterers.
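
To make the near-field boundary concrete, here is a small NumPy sketch (not from the paper). It computes the Rayleigh distance of a uniform linear array and compares a planar-wave steering vector with an exact spherical-wave one. The array size (256 half-wavelength-spaced antennas) and the helper names are assumptions. The 60 GHz carrier, the spatial angle sin(π/12), and the 15 m distance are borrowed from the Figure 3 caption.

```python
import numpy as np

C_LIGHT = 3e8  # speed of light in m/s

def rayleigh_distance(n_ant: int, fc: float) -> float:
    """Classic near-field boundary 2*D^2/lambda for a half-wavelength ULA (assumed array)."""
    lam = C_LIGHT / fc
    aperture = (n_ant - 1) * lam / 2  # D: distance between the outermost antennas
    return 2 * aperture**2 / lam

def far_field_steering(n_ant: int, theta: float) -> np.ndarray:
    """Planar-wave steering vector; theta is the spatial angle sin(phi)."""
    n = np.arange(n_ant) - (n_ant - 1) / 2  # antenna indices centred on the array
    return np.exp(1j * np.pi * n * theta) / np.sqrt(n_ant)

def near_field_steering(n_ant: int, theta: float, r: float, fc: float) -> np.ndarray:
    """Spherical-wave steering vector for a source at spatial angle theta and range r."""
    lam = C_LIGHT / fc
    delta = (np.arange(n_ant) - (n_ant - 1) / 2) * lam / 2  # antenna positions (m)
    r_n = np.sqrt(r**2 + delta**2 - 2 * r * delta * theta)  # exact range to each antenna
    return np.exp(-1j * 2 * np.pi * (r_n - r) / lam) / np.sqrt(n_ant)

def correlation(u: np.ndarray, v: np.ndarray) -> float:
    """Normalized magnitude of the inner product |u^H v| / (||u|| ||v||)."""
    return float(np.abs(np.vdot(u, v)) / (np.linalg.norm(u) * np.linalg.norm(v)))

N, FC, THETA = 256, 60e9, np.sin(np.pi / 12)
print(f"Rayleigh distance: {rayleigh_distance(N, FC):.1f} m")
far = far_field_steering(N, THETA)
for r in (15.0, 1e5):
    near = near_field_steering(N, THETA, r, FC)
    print(f"r = {r:>8.0f} m: planar/spherical correlation = {correlation(far, near):.3f}")
```

Under these assumptions, the Rayleigh distance comes out at about 162.6 m. A drone 15 m away is therefore deep in the near field, and the planar model matches its true wavefront poorly. At 100 km, the two vectors are almost identical.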

Existing channel estimation methods struggle with this near-field effect, leading to inefficient communication, wasted power, and slower data rates. This isn't just an academic problem; for drones, it means less reliable control links, slower data offloading, and limitations on how many drones can operate in a dense environment without interference. Think of trying to coordinate a swarm of inspection drones or deliver high-fidelity sensor data in real-time – current methods simply aren't up to the task without significant computational overhead or accuracy compromises.

How JSSAnet Cracks the Code

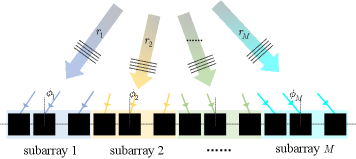

The core innovation of JSSAnet is a theory-guided approach to tame the near-field complexity. The authors propose a piecewise Fourier representation that breaks the complex near-field channel into smaller, more manageable "subchannels." Each subchannel can then be mapped to the angular domain using a Discrete Fourier Transform (DFT). As shown in Figure 2, the ELAA is divided into M subarrays. Within each subarray the wavefronts are approximately planar, while across subarrays they remain spherical. This partitioning lets the system approximate the spherical wavefronts far more effectively.

Figure 2: The ELAA is partitioned uniformly into M subarrays. The EM waves impinge on the subarray with planar wavefront, while the wavefronts between subarrays exhibit spherical waves.
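
The partition-then-DFT step can be sketched as follows. This is an illustrative reading of the piecewise Fourier representation, not the authors' code. It assumes a single-path near-field channel on a 256-antenna half-wavelength ULA at 60 GHz, M = 4 equal subarrays, and a unitary DFT per subchannel. The helper names are hypothetical.

```python
import numpy as np

C_LIGHT = 3e8

def near_field_steering(n_ant, theta, r, fc):
    """Spherical-wave steering vector for a half-wavelength ULA (assumed channel model)."""
    lam = C_LIGHT / fc
    delta = (np.arange(n_ant) - (n_ant - 1) / 2) * lam / 2
    r_n = np.sqrt(r**2 + delta**2 - 2 * r * delta * theta)
    return np.exp(-1j * 2 * np.pi * (r_n - r) / lam) / np.sqrt(n_ant)

def piecewise_dft(h: np.ndarray, M: int) -> np.ndarray:
    """Split h into M equal subchannels and map each one to the angular domain."""
    if h.size % M:
        raise ValueError("number of antennas must be divisible by M")
    blocks = h.reshape(M, h.size // M)               # row m = antennas of subarray m
    return np.fft.fft(blocks, axis=1, norm="ortho")  # unitary DFT per subchannel

def inverse_piecewise_dft(X: np.ndarray) -> np.ndarray:
    """Undo piecewise_dft: inverse DFT per row, then re-concatenate the subarrays."""
    return np.fft.ifft(X, axis=1, norm="ortho").reshape(-1)

def top_k_energy(X: np.ndarray, k: int = 3) -> np.ndarray:
    """Fraction of each subchannel's energy held by its k strongest angular bins."""
    p = np.abs(X) ** 2
    return np.sort(p, axis=1)[:, -k:].sum(axis=1) / p.sum(axis=1)

h = near_field_steering(256, np.sin(np.pi / 12), 15.0, 60e9)
X = piecewise_dft(h, M=4)  # shape (4, 64): one angular-domain block per subchannel
print("top-3 energy per subchannel:", np.round(top_k_energy(X), 3))
print("top-3 energy, whole array:  ", np.round(top_k_energy(piecewise_dft(h, M=1)), 3))
```

Because each per-subchannel DFT is unitary, the representation is lossless and exactly invertible. What matters is how concentrated each angular block is, since that sparsity is what sparsity-aware estimators exploit.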

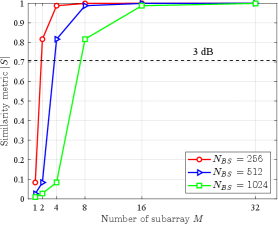

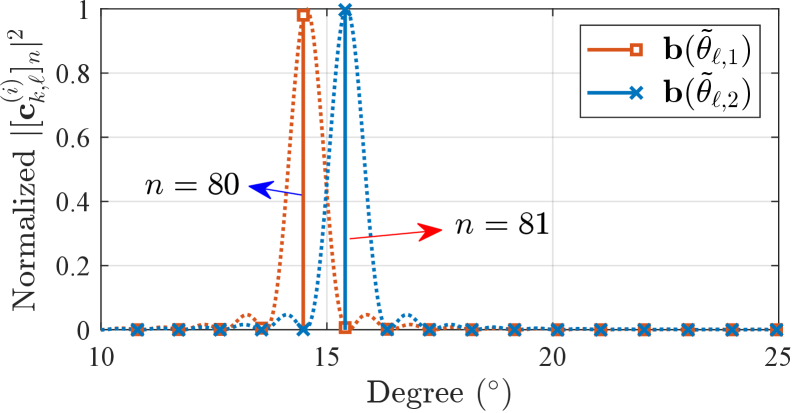

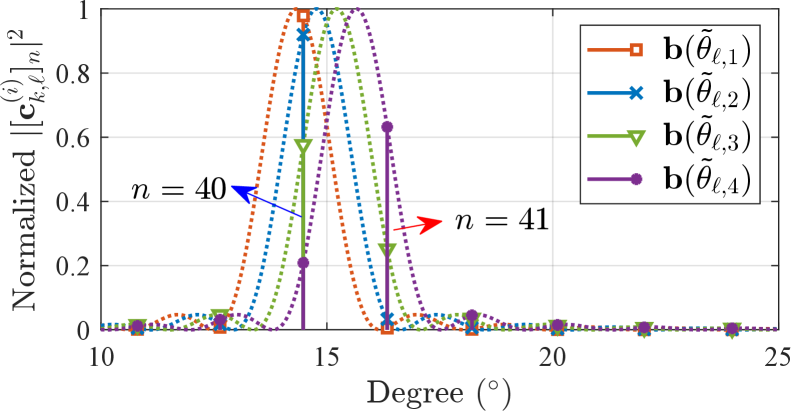

This partitioning strategy is critical because it addresses the fundamental difference between far-field (planar waves) and near-field (spherical waves) propagation. The paper provides a detailed analysis of the similarity between these spherical waves and their planar approximations, as shown in Figure 3. This similarity metric guides how effectively the channel can be segmented. Figures 4 and 5 further illustrate this partitioning concept for different numbers of subarrays (M=2 and M=4), demonstrating the method's flexibility in handling various array configurations.

Figure 3: The similarity metric between b(θ) and a(θ₀,r₀). The carrier frequency f_c, the spatial AoA θ₀ and distance r₀ are 60 GHz, sin(π/12) and 15 meters, respectively.

Figure 4: (a) M=2

Figure 5: (b) M=4
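
The trend behind Figures 3 to 5 can be checked numerically. The sketch below assumes that the similarity metric is the normalized inner product |b(θ)^H a| / ||a||, where b(θ) is a planar steering vector and a is a near-field segment. For each subarray, it takes the maximum over a fine grid of θ, then averages across subarrays. The paper's exact metric may differ, and N = 256 is an assumption. f_c = 60 GHz, θ₀ = sin(π/12) and r₀ = 15 m follow the Figure 3 caption.

```python
import numpy as np

C_LIGHT = 3e8

def near_field_steering(n_ant, theta, r, fc):
    """Spherical-wave steering vector for a half-wavelength ULA (assumed model)."""
    lam = C_LIGHT / fc
    delta = (np.arange(n_ant) - (n_ant - 1) / 2) * lam / 2
    r_n = np.sqrt(r**2 + delta**2 - 2 * r * delta * theta)
    return np.exp(-1j * 2 * np.pi * (r_n - r) / lam) / np.sqrt(n_ant)

def subarray_similarity(a: np.ndarray, M: int, grid: np.ndarray) -> float:
    """Mean over subarrays of max_theta |b(theta)^H a_m| / ||a_m|| (assumed metric)."""
    n_sub = a.size // M
    # Planar (far-field) codebook for one subarray, one row per candidate theta.
    B = np.exp(1j * np.pi * np.outer(grid, np.arange(n_sub))) / np.sqrt(n_sub)
    best = []
    for m in range(M):
        a_m = a[m * n_sub:(m + 1) * n_sub]
        corr = np.abs(B.conj() @ a_m) / np.linalg.norm(a_m)
        best.append(corr.max())
    return float(np.mean(best))

a = near_field_steering(256, np.sin(np.pi / 12), 15.0, 60e9)
grid = np.linspace(-1.0, 1.0, 4001)  # candidate spatial angles
sims = {M: subarray_similarity(a, M, grid) for M in (1, 2, 4, 8)}
for M, s in sims.items():
    print(f"M = {M}: planar-approximation similarity = {s:.4f}")
```

Under these assumptions, the similarity climbs toward 1 as M grows: smaller subarrays see nearly planar wavefronts. The cost is coarser angular resolution per subchannel (64 or 32 DFT bins instead of 256), so M cannot simply be made as large as possible.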

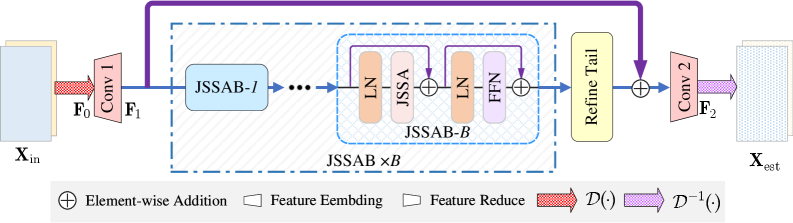

Once partitioned, the JSSAnet (Joint Subchannel-Spatial-Attention Network) gets to work. This neural network is designed to extract spatial features from both within each subchannel (intra-subchannel) and between them (inter-subchannel). The overall structure of the proposed JSSAnet for near-field channel estimation is depicted in Figure 6.

Figure 6: (a) The structure of the proposed JSSAnet for near-field channel estimation.

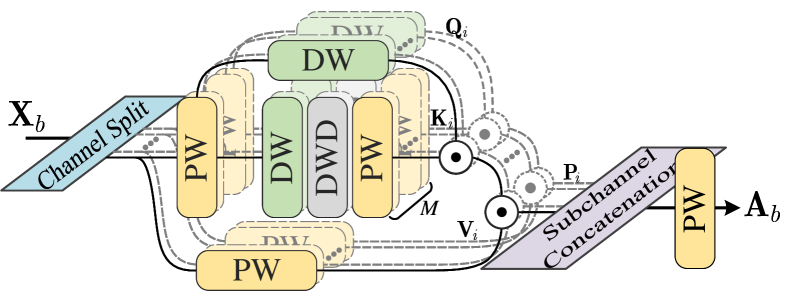

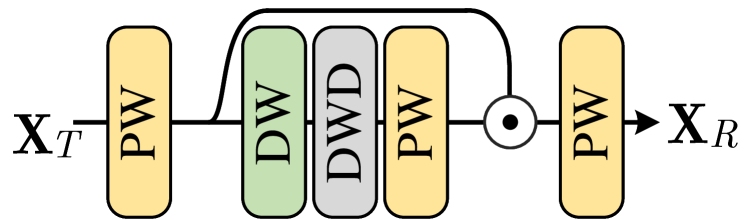

The design of this attention mechanism isn't arbitrary; it's guided by derived theoretical bounds that help mitigate DFT quantization loss and ensure accurate approximation. A key component is the JSSA layer within an attention block, which assigns independent and adaptive spatial attention weights to each subchannel in parallel. This means the network can dynamically focus on the most relevant parts of the channel data, as detailed in the Joint Subchannel-Spatial-Attention (JSSA) Module shown in Figure 7.

Figure 7: (b) Joint Subchannel-Spatial-Attention (JSSA) Module
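
The idea of independent, adaptive attention weights per subchannel can be illustrated with a toy NumPy module. This is a hypothetical simplification for intuition, not the JSSA layer from the paper. Each subchannel m gets its own small 1-D kernel W[m] and bias b[m], randomly initialized here instead of trained. Each kernel produces a sigmoid attention map over that subchannel's angular bins and reweights its own features, and all subchannels are processed in one vectorized pass. It needs NumPy 1.20 or newer for `sliding_window_view`.

```python
import numpy as np

def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))

def subchannel_spatial_attention(X: np.ndarray, W: np.ndarray, b: np.ndarray):
    """Toy per-subchannel spatial attention (hypothetical, for illustration only).

    X: (M, K) real features, one row per subchannel (e.g. angular-bin magnitudes)
    W: (M, k) one independent kernel per subchannel, k odd
    b: (M,)   one bias per subchannel
    Returns (reweighted features, attention map), both of shape (M, K).
    """
    k = W.shape[1]
    Xp = np.pad(X, ((0, 0), (k // 2, k // 2)))  # 'same' zero padding along the bins
    windows = np.lib.stride_tricks.sliding_window_view(Xp, k, axis=1)  # (M, K, k)
    # Cross-correlate every subchannel with its own kernel, all in parallel.
    logits = np.einsum("mij,mj->mi", windows, W) + b[:, None]
    A = sigmoid(logits)                          # adaptive weights in (0, 1)
    return A * X, A

rng = np.random.default_rng(0)
M, K, k = 4, 64, 7
X = np.abs(rng.standard_normal((M, K)))          # stand-in angular-domain features
W = rng.standard_normal((M, k)) / k              # hypothetical per-subchannel kernels
b = np.zeros(M)
Y, A = subchannel_spatial_attention(X, W, b)
print(Y.shape, float(A.min()), float(A.max()))
```

Each row only ever sees its own kernel and its own features, so perturbing one subchannel leaves the others' outputs untouched: that is the "independent" part. The weights are "adaptive" because they depend on the input itself.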

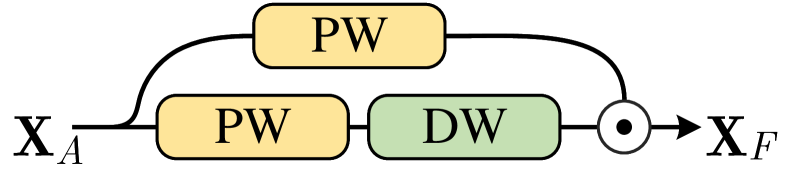

Following this, a feed-forward network (FFN) further refines the residual non-linear dependencies across these subchannels, as illustrated in Figure 8. Finally, a Refine Tail module (Figure 9) processes the output to produce the final channel estimate. The JSSA map itself is computed linearly, as an element-wise product that combines large-kernel convolutions (DLKC). This preserves strong contextual learning without excessive computational burden.

Figure 8: (c) Feed-forward network (FFN)

Figure 9: (d) Refine Tail
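
The paper describes the JSSA map only as an element-wise product that combines large-kernel convolutions (DLKC), so the exact layer is not reproduced here. As an assumption, the sketch below borrows the well-known large-kernel attention pattern from the Visual Attention Network, applied in 1-D over angular bins. That pattern chains a depthwise convolution, a dilated depthwise convolution, and a pointwise channel mix, then uses the result to gate the input element-wise. A residual position-wise FFN follows. Kernel sizes 5 and 7 with dilation 3 are illustrative choices.

```python
import numpy as np

def depthwise_conv1d(x: np.ndarray, kernels: np.ndarray, dilation: int = 1) -> np.ndarray:
    """Depthwise 1-D cross-correlation with 'same' zero padding.

    x: (C, K) features; kernels: (C, k) with k odd, one kernel per channel.
    """
    K = x.shape[1]
    k = kernels.shape[1]
    pad = dilation * (k - 1) // 2
    xp = np.pad(x, ((0, 0), (pad, pad)))
    out = np.zeros(x.shape)
    for j in range(k):  # add one (possibly dilated) tap at a time
        out += kernels[:, j:j + 1] * xp[:, j * dilation:j * dilation + K]
    return out

def large_kernel_attention(x, k_dw, k_dil, w_pw, dilation=3):
    """Decomposed large-kernel map (assumed LKA-style), applied as an element-wise gate."""
    a = depthwise_conv1d(x, k_dw)                      # local context
    a = depthwise_conv1d(a, k_dil, dilation=dilation)  # long-range, sparse taps
    attn = w_pw @ a                                    # pointwise (1x1) channel mixing
    return x * attn, attn

def ffn(x, w1, w2):
    """Position-wise feed-forward block with a residual connection."""
    return x + w2 @ np.maximum(w1 @ x, 0.0)

rng = np.random.default_rng(1)
C, K = 4, 64
x = rng.standard_normal((C, K))
y, _ = large_kernel_attention(x, rng.standard_normal((C, 5)),
                              rng.standard_normal((C, 7)), rng.standard_normal((C, C)))
z = ffn(y, rng.standard_normal((2 * C, C)), rng.standard_normal((C, 2 * C)))

# Receptive field check: a unit impulse spreads over 5 + (7 - 1) * 3 = 23 bins.
impulse = np.zeros((1, K))
impulse[0, K // 2] = 1.0
_, attn = large_kernel_attention(impulse, np.ones((1, 5)), np.ones((1, 7)), np.eye(1))
print(z.shape, int(np.count_nonzero(attn)))
```

The decomposition is the point. The two depthwise stages reach a 23-bin receptive field with 5 + 7 = 12 multiply-adds per bin per channel, instead of 23 for a dense kernel of the same span.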

Essentially, JSSAnet learns to "see" the near-field channel's intricate structure, even with its spherical wavefronts and non-linearities, and then intelligently allocates resources to estimate it accurately.

The Numbers Speak for Themselves

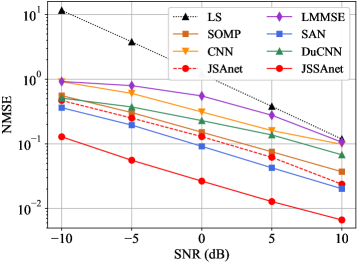

The numerical results from the paper confirm that JSSAnet isn't just a theoretical exercise; it delivers tangible performance improvements. The authors show that embedding sparsity information into the attention network is indeed effective.

- Superior Estimation Performance: JSSAnet consistently achieves superior channel estimation performance compared to existing methods across various Signal-to-Noise Ratio (SNR) levels.
- Reduced Normalized Mean Square Error (NMSE): As depicted in Figure 10, JSSAnet shows a lower NMSE (which means better accuracy) across a range of SNR values, especially at higher SNRs where traditional methods plateau. For example, at an SNR of 10 dB, JSSAnet shows a significant improvement in NMSE over schemes like Angular-DFT and Sparse-CS.
- Robustness: The performance holds even with varying distances (e.g., r uniformly distributed between 10 and 20 meters), which is crucial for dynamic drone environments.

Figure 10: NMSE performance comparison of different schemes in different SNRs, where r∼𝒰(10,20) m and L=6.
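
NMSE, the metric in Figure 10, is easy to compute yourself. The sketch below makes several assumptions:

- NMSE = E[||ĥ - h||² / ||h||²], reported in dB.
- A 256-antenna half-wavelength ULA at 60 GHz.
- L = 6 paths with CN(0, 1) gains and spatial angles uniform in [-1, 1].
- Ranges r ∼ U(10, 20) m, as in the caption.
- SNR defined per realization.
- A trivial least-squares estimate: with identity pilots, ĥ is just the noisy observation.

It is a baseline for intuition, not a reproduction of any curve in the paper, and the channel generator is hypothetical.

```python
import numpy as np

C_LIGHT = 3e8

def near_field_steering(n_ant, theta, r, fc):
    """Spherical-wave steering vector for a half-wavelength ULA."""
    lam = C_LIGHT / fc
    delta = (np.arange(n_ant) - (n_ant - 1) / 2) * lam / 2
    r_n = np.sqrt(r**2 + delta**2 - 2 * r * delta * theta)
    return np.exp(-1j * 2 * np.pi * (r_n - r) / lam) / np.sqrt(n_ant)

def random_channel(rng, n_ant=256, n_paths=6, fc=60e9):
    """Multipath near-field channel: sum of L spherical-wave paths (assumed model)."""
    h = np.zeros(n_ant, dtype=complex)
    for _ in range(n_paths):
        gain = (rng.standard_normal() + 1j * rng.standard_normal()) / np.sqrt(2)
        h += gain * near_field_steering(n_ant, rng.uniform(-1, 1), rng.uniform(10, 20), fc)
    return h

def nmse_db(h_hat: np.ndarray, h: np.ndarray) -> float:
    """Mean of per-realization ||h_hat - h||^2 / ||h||^2, in dB. Rows = realizations."""
    ratio = np.sum(np.abs(h_hat - h) ** 2, axis=1) / np.sum(np.abs(h) ** 2, axis=1)
    return float(10 * np.log10(ratio.mean()))

rng = np.random.default_rng(0)
snr_db, trials = 10.0, 500
H = np.stack([random_channel(rng) for _ in range(trials)])  # (trials, N)
# Per-element noise variance so that ||h||^2 / E||n||^2 equals the target SNR.
noise_var = np.sum(np.abs(H) ** 2, axis=1, keepdims=True) / H.shape[1] / 10 ** (snr_db / 10)
noise = np.sqrt(noise_var / 2) * (rng.standard_normal(H.shape) + 1j * rng.standard_normal(H.shape))
H_ls = H + noise  # LS estimate with identity pilots
print(f"LS NMSE at {snr_db:.0f} dB SNR: {nmse_db(H_ls, H):.2f} dB")
```

Under these assumptions, the LS baseline lands at roughly -SNR dB, about -10 dB here. Structured estimators aim to beat that line by exploiting channel properties such as sparsity.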

These metrics indicate a more precise and reliable understanding of the communication channel, which should translate into better data throughput and connection stability.

Why it Matters for Drones

This research matters for anyone building or operating advanced drones. For autonomous UAVs and drone swarms, 6G communication powered by something like JSSAnet means:

- Hyper-Reliable Control Links: Imagine mission-critical operations where a dropped packet could mean mission failure. JSSAnet's improved channel estimation provides the foundation for ultra-reliable low-latency communication (URLLC), making drone control virtually unshakeable, even in complex near-field environments like urban canyons or industrial facilities.
- Ultra-Fast Data Transfer: High-resolution sensors, LiDAR data, and 4K video feeds from drones can be streamed in real-time without bottlenecks. This is crucial for applications like precision agriculture, infrastructure inspection, or search and rescue, where immediate data analysis is critical.
- Advanced Swarm Coordination: The ability to accurately estimate near-field channels means drone swarms can communicate more effectively amongst themselves and with a central controller. This enables tighter coordination, faster decision-making, and more complex collective behaviors, moving beyond simple leader-follower dynamics to truly autonomous, distributed intelligence.
- Edge AI Enablement: With higher bandwidth and reliability, drones can offload more raw sensor data to edge computing nodes or even the cloud for heavier AI processing, reducing onboard computational requirements and extending flight times. Conversely, more complex AI models can be downloaded to the drone quickly.

The Road Ahead: Limitations and Next Steps

While JSSAnet offers a significant leap, it's important to be realistic about its current stage and inherent challenges:

- Computational Overhead: Neural networks, especially those with attention mechanisms, can be computationally intensive. While the paper mentions DLKC helps, deploying such a system on resource-constrained drone hardware will require careful optimization. Power consumption for real-time inference on a drone is a significant consideration.
- Training Data Requirements: Like all deep learning models, JSSAnet requires substantial, diverse training data. Collecting realistic near-field channel data for ELAA configurations in varied environments (urban, rural, indoor) is a massive undertaking. The paper's numerical results use simulated data, which is a common starting point but doesn't fully capture real-world complexity.
- Dynamic Environments: Drones are inherently mobile. While the paper shows robustness to varying distances, rapid changes in orientation, speed, and surrounding obstacles (multipath fading) can make real-time channel estimation even more challenging. The current model might need further adaptations for extreme dynamism.
- Hardware Integration: This is a theoretical and algorithmic paper. The practical challenges of integrating ELAA with the necessary processing power onto a drone, managing weight, power draw, and thermal dissipation, are substantial engineering hurdles that extend beyond the scope of this research. It assumes ELAA is already deployed.

DIY Feasibility: Not Yet, But Keep Watching

For the average drone hobbyist or small builder, directly replicating 6G ELAA hardware and implementing JSSAnet from scratch is not feasible today. This is cutting-edge wireless research requiring specialized RF hardware, massive antenna arrays, and significant computational resources for model training and inference. Think university labs and large R&D divisions, not a garage workshop.

However, the principles are important. Understanding how 6G is evolving means you'll be better prepared for future drone communication modules. If open-source implementations of near-field channel estimation algorithms or JSSAnet variants become available for SDR platforms like USRP or LimeSDR, then experimenting with scaled-down versions might become possible. For now, consider this a glimpse into the future capabilities that commercial 6G modules will eventually offer, rather than a build-it-yourself project.

Beyond the Wires: Related Innovations

The implications of enhanced 6G communication, as proposed by JSSAnet, extend far beyond just faster connections. Consider how this impacts drone autonomy. Papers like "Chameleon: Episodic Memory for Long-Horizon Robotic Manipulation" by Guo et al. highlight the need for sophisticated onboard intelligence. Ultra-reliable, high-bandwidth communication would allow drones to share complex 'episodic memories' within a swarm or offload memory processing to ground stations, enabling more advanced, long-duration tasks that currently strain onboard resources.

Furthermore, the data drones collect will be richer and more immediate. "The role of spatial context and multitask learning in the detection of organic and conventional farming systems based on Sentinel-2 time series" by Hemmerling et al. underscores the demand for high-resolution spatial data in applications like precision agriculture. With JSSAnet-enabled 6G, drones could transmit vast amounts of hyper-spectral or LiDAR data in real-time, drastically improving the efficiency and impact of such monitoring tasks, making drone-based data collection more competitive with satellite systems.

Finally, when we transmit massive amounts of visual data, its quality for human or AI interpretation is paramount. The work on "Vision-Language Models vs Human: Perceptual Image Quality Assessment" by Mehmood et al. becomes directly relevant. As 6G facilitates the streaming of high-fidelity video, understanding and ensuring its perceptual quality for the end user (whether an operator or an AI processing the stream) will be critical, complementing the low-level channel estimation with high-level data utility.

The Future of Drone Connectivity

JSSAnet is a serious stride towards making 6G's ambitious promises a reality for drones, laying the groundwork for a future where autonomous aerial systems are more connected, intelligent, and capable than ever before.

Paper Details

Title: JSSAnet: Theory-Guided Subchannel Partitioning and Joint Spatial Attention for Near-Field Channel Estimation
Authors: Zhiming Zhu, Shu Xu, Chunguo Li, Yongming Huang, Luxi Yang
Published: Preprint
arXiv: 2603.24505

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.