Chameleon: Giving Drones Episodic Memory for Complex Missions

This research introduces Chameleon, an episodic memory system enabling robots to recall fine-grained past interactions to overcome perceptual aliasing and reliably complete long-horizon tasks.

TL;DR:

Chameleon introduces a novel episodic memory system for robots, allowing them to recall fine-grained past interactions to overcome perceptual aliasing and reliably complete long-horizon tasks. This approach significantly improves decision-making in complex environments where identical observations can arise from different histories.

Beyond Real-Time: Drones That Remember

Advanced drone missions aren't just about seeing in real-time anymore. They demand a deep understanding of past events and their context. Imagine a drone inspecting a sprawling industrial facility where countless pipes or valves look exactly alike, or a delivery drone weaving through a bustling city. For these machines to truly operate autonomously over long periods, they need to recall specific details of what they've encountered and done. That's where researchers from Google DeepMind and other institutions come in. They're tackling this challenge head-on with Chameleon, an episodic memory system designed to help robots learn from every interaction, addressing a crucial bottleneck in long-horizon robotic manipulation.

The Memory Gap: Why Robots Forget Important Details

At the heart of complex robotic tasks lies a problem called "perceptual aliasing." It's when a robot encounters the same visual input at different times, yet the correct action hinges entirely on its past experiences. Picture a classic shell game: all the cups look identical, but only one conceals the prize. For a drone, this might mean distinguishing between two identical-looking power lines, knowing only one needs inspection because of a prior fault report, or navigating through a dense forest past similar-looking obstacles. Most current robotic memory systems fall short here. They tend to compress sensory data into broad semantic traces or rely on simple similarity-based retrieval. This approach often discards the crucial, fine-grained perceptual cues necessary to tell the difference between situations that look identical but demand different responses. The result? Unreliable decision-making and a cap on a robot's ability to complete complex, multi-step missions without constant human oversight.
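
To make the shell-game picture concrete, here's a tiny Python sketch. It is not code from the paper; the class and function names (`ShellGame`, `memoryless_policy`, `TrackingPolicy`, `play`) are made up for illustration. It shows two different swap histories that end in the exact same observation, so a policy that only looks at the current frame cannot be right in both cases, while one that remembers the swaps can.

```python
from dataclasses import dataclass


@dataclass
class ShellGame:
    """Three identical cups; a cube sits under one of them."""
    cube_position: int  # index 0..2 of the cup hiding the cube

    def swap(self, i: int, j: int) -> None:
        # Swapping two cups moves the cube if it is under either of them.
        if self.cube_position == i:
            self.cube_position = j
        elif self.cube_position == j:
            self.cube_position = i

    def observe(self) -> tuple:
        # At decision time every cup looks the same, whatever happened before.
        return ("cup", "cup", "cup")


def memoryless_policy(observation: tuple) -> int:
    # Sees only the current frame, so it must give the same answer
    # for every history that ends in this observation.
    return 0


@dataclass
class TrackingPolicy:
    """Remembers where the cube started and replays every swap it saw."""
    believed_position: int

    def update(self, i: int, j: int) -> None:
        if self.believed_position == i:
            self.believed_position = j
        elif self.believed_position == j:
            self.believed_position = i

    def act(self, observation: tuple) -> int:
        # The observation carries no information; the answer comes from memory.
        return self.believed_position


def play(start: int, swaps: list) -> tuple:
    """Return (observation, true position, memoryless guess, tracked guess)."""
    game = ShellGame(cube_position=start)
    tracker = TrackingPolicy(believed_position=start)
    for i, j in swaps:
        game.swap(i, j)
        tracker.update(i, j)
    obs = game.observe()
    return obs, game.cube_position, memoryless_policy(obs), tracker.act(obs)


if __name__ == "__main__":
    print(play(0, [(0, 1), (1, 2)]))
    print(play(0, [(1, 2), (0, 1)]))
```

Both runs end with an identical observation but a different cube position. The memoryless guess is the same in both, so it has to be wrong at least once, which is the gap an episodic memory is meant to close.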

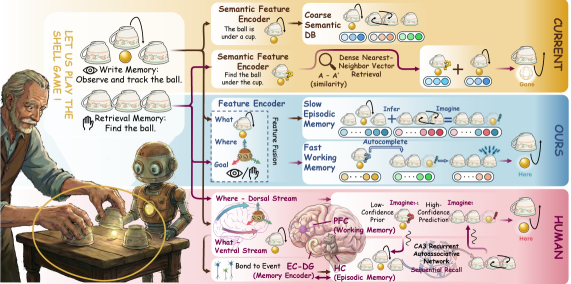

Figure 1: The classic shell game illustrates perceptual aliasing, where identical observations require memory of past interactions for correct decision-making. Chameleon aims to solve this.

Building a Robot's Mind: Chameleon's Approach to Episodic Memory

Chameleon confronts perceptual aliasing by taking cues from human episodic memory—specifically, our Entorhinal Cortex–Hippocampus–Prefrontal Cortex (EC–HC–PFC) system. Rather than just compressing observations, Chameleon prioritizes preserving "disambiguating fine-grained perceptual cues" by writing "geometry-grounded multimodal tokens" into its memory. This is a critical difference: it's not merely what the robot observed, but where and how it observed it, embedding spatial context directly into the memory trace. The system operates via a clear Perception → Memory → Policy pipeline. First, its Perception module, featuring a "dorsal stream" for spatial processing, generates view-consistent tokens from both front and hand RGB camera inputs. This initial phase is essential for cutting down ambiguity before memory even begins to form. As Figure 5 illustrates, this dorsal stream ensures attention locks onto the correct target, guided by end-effector geometry and epipolar feasibility, stopping attention from scattering across irrelevant distractors.
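
The write-up mentions "epipolar feasibility" without spelling it out, and the paper's actual formulation isn't reproduced here. As a rough illustration only, the sketch below uses the textbook two-view epipolar constraint: a point seen in one camera can only match points on a specific line in the other. It assumes the two cameras differ by a pure translation (no rotation) and that image points are in normalized coordinates; every name in it (`epipolar_residual`, `to_hand_frame`, and so on) is hypothetical.

```python
def cross(a, b):
    """3D cross product."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def project(point):
    """Project a 3D point to normalized image coordinates (x/z, y/z, 1)."""
    x, y, z = point
    return (x / z, y / z, 1.0)


def to_hand_frame(point, translation):
    """Express a front-camera point in the hand-camera frame.

    Assumption for this sketch: the frames differ by translation only.
    """
    return tuple(p + t for p, t in zip(point, translation))


def epipolar_residual(x_front, x_hand, translation):
    """|x_hand . (t x x_front)|, which is zero for a geometrically valid match.

    With no rotation the essential matrix reduces to the cross-product
    matrix of the translation, so the constraint is a single triple product.
    """
    return abs(dot(x_hand, cross(translation, x_front)))


def is_feasible(x_front, x_hand, translation, tol=1e-6):
    return epipolar_residual(x_front, x_hand, translation) < tol


if __name__ == "__main__":
    t = (0.1, 0.0, 0.0)                      # hypothetical camera offset
    target = (0.2, 0.1, 1.0)                 # 3D point in the front-camera frame
    x_front = project(target)
    x_hand_true = project(to_hand_frame(target, t))
    x_hand_distractor = project((0.3, 0.4, 1.0))  # a different object in the hand view
    print(is_feasible(x_front, x_hand_true, t))
    print(is_feasible(x_front, x_hand_distractor, t))
```

A check like this is one way attention across two views could be kept off look-alike distractors: candidates in the second view that violate the constraint cannot be the same physical point.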

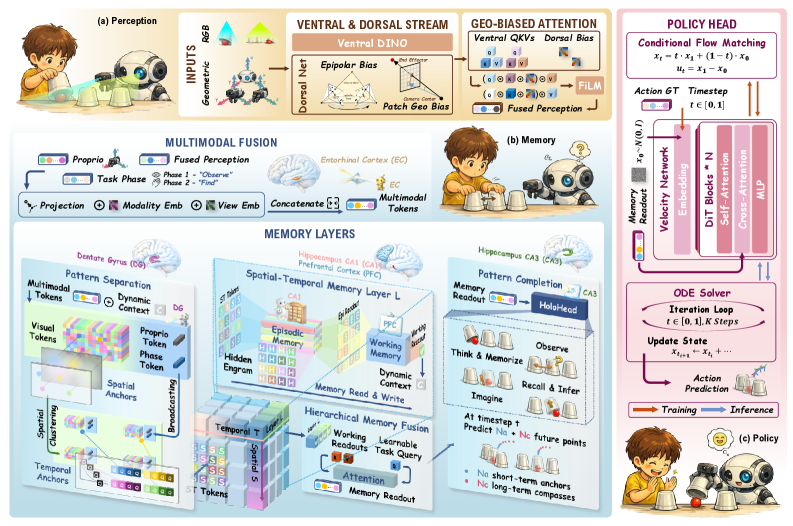

Figure 7: An overview of Chameleon's closed-loop perception-memory-policy pipeline, emphasizing geometry-grounded tokens and imagination-style rollout supervision.

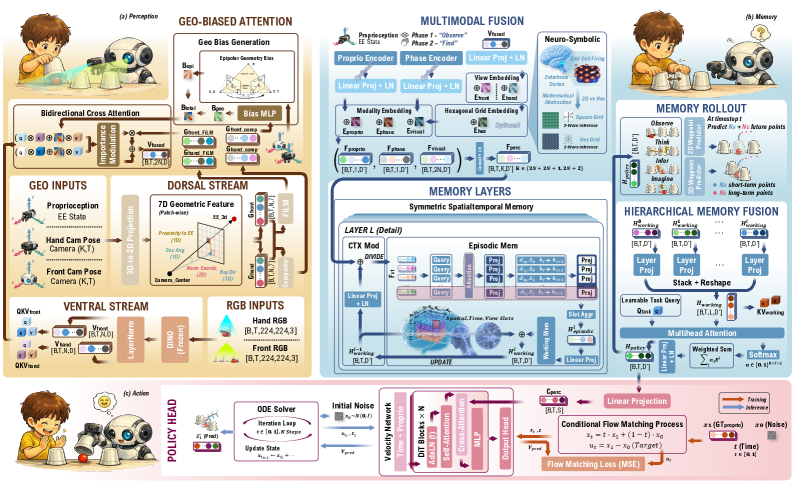

These tokens then flow into the Memory module, which links an episodic state with a working state. The real innovation here is a "differentiable memory stack" that learns "relevance-based recall" through HoloHead rollout training. This isn't a simple lookup table; the system actively learns what to commit to memory and how to leverage it to forecast future states. The memory readout, a compact recurrent decision state, then guides the Policy module.
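
What might "relevance-based recall" look like in its simplest form? The sketch below is a generic soft-attention read over a handful of memory slots, written in plain Python. It is an assumption-laden stand-in, not Chameleon's differentiable memory stack or its HoloHead training: the function names, the dot-product scoring, and the `temperature` parameter are all illustrative choices.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def recall(query, keys, values, temperature=1.0):
    """Soft read from memory.

    Each slot has a key (what it is about) and a value (what was stored).
    Slots whose keys align with the query get more weight in the readout.
    Every step is smooth, which is what lets gradients flow through recall
    in a learned system.
    """
    scores = [dot(query, key) / temperature for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    readout = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return readout, weights


if __name__ == "__main__":
    # Three stored events with hypothetical 2D keys and values.
    keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
    values = [[10.0, 0.0], [0.0, 10.0], [-10.0, 0.0]]
    readout, weights = recall([5.0, 0.0], keys, values)
    print(weights)
    print(readout)
```

The contrast with a lookup table is that nothing here is a hard index: the query decides how much each stored event contributes, and in a trained system both the keys and the query would be learned.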

Figure 2: The detailed architecture of Chameleon, from perception to policy, highlighting the role of the memory module in bridging observation and action.

Finally, the Policy, relying exclusively on this memory readout, employs conditional flow matching to generate a future end-effector pose trajectory for execution. This complete closed-loop system empowers the robot to forge a robust, history-dependent grasp of its surroundings, which is vital for navigating situations that look confusingly similar.
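
For readers who haven't met conditional flow matching, here's a minimal sketch of the general idea using the common straight-line probability path. Whether Chameleon uses this exact path or loss isn't stated in this article, so treat it as an assumption. The "pose" is just a 3D position, the condition is a stand-in for the memory readout, and `oracle_velocity` is a hand-written substitute for a trained network.

```python
import random


def interpolate(x0, x1, t):
    """Point on the straight line from noise x0 (t=0) to target x1 (t=1)."""
    return [(1 - t) * a + t * b for a, b in zip(x0, x1)]


def target_velocity(x0, x1):
    """Velocity of the straight-line path; it is constant in t."""
    return [b - a for a, b in zip(x0, x1)]


def flow_matching_loss(predict_velocity, x0, x1, t, condition):
    """Mean squared error between predicted and target velocity at time t."""
    xt = interpolate(x0, x1, t)
    v_pred = predict_velocity(xt, t, condition)
    v_true = target_velocity(x0, x1)
    return sum((p - q) ** 2 for p, q in zip(v_pred, v_true)) / len(v_true)


def sample(predict_velocity, x0, condition, steps=10):
    """Generate an output by Euler-integrating the velocity field from t=0 to 1."""
    x = list(x0)
    dt = 1.0 / steps
    for k in range(steps):
        v = predict_velocity(x, k * dt, condition)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


def oracle_velocity(x, t, condition):
    """Stand-in for a trained network (an assumption of this sketch).

    Here the condition is simply the pose we want to reach, so the ideal
    field points straight at it, scaled by the time remaining.
    """
    return [(c - xi) / (1.0 - t) for c, xi in zip(condition, x)]


if __name__ == "__main__":
    random.seed(0)
    goal_pose = [0.4, -0.1, 0.3]                 # hypothetical end-effector position
    noise = [random.gauss(0.0, 1.0) for _ in goal_pose]
    print(flow_matching_loss(oracle_velocity, noise, goal_pose, 0.3, goal_pose))
    print(sample(oracle_velocity, noise, goal_pose))
```

In training, the loss would be averaged over many noise samples, times, and demonstrations; at run time only `sample` is needed. Because the policy's sole input is the condition, anything the memory readout fails to encode is invisible to it.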

Figure 5: The dorsal stream's role in disambiguating observations at the point of memory formation, crucial for accurate spatial tracking and decision-making.

Proof in the Pudding: Real-World Performance Under Ambiguity

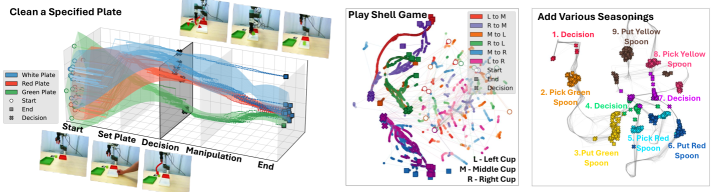

To truly test Chameleon, the researchers put it through its paces using a UR5e robot and a brand-new dataset they developed: Camo-Dataset. This dataset was specifically crafted for scenarios demanding episodic recall, spatial tracking, and sequential manipulation amidst perceptual aliasing. It features three tough tasks:

- Clean a Specified Plate: The robot has to remember which plate the user pointed to, even when three identical-looking plates are on the table at decision time.

- Play Shell Game: The robot tracks a cube hidden under one of three identical cups.

- Add Various Seasonings: The robot needs to recall which specific spoon it picked up earlier when confronted with three similar spoons.

Figure 3: Examples of the challenging, perceptually aliased tasks used in the Camo-Dataset to test Chameleon's episodic memory capabilities.

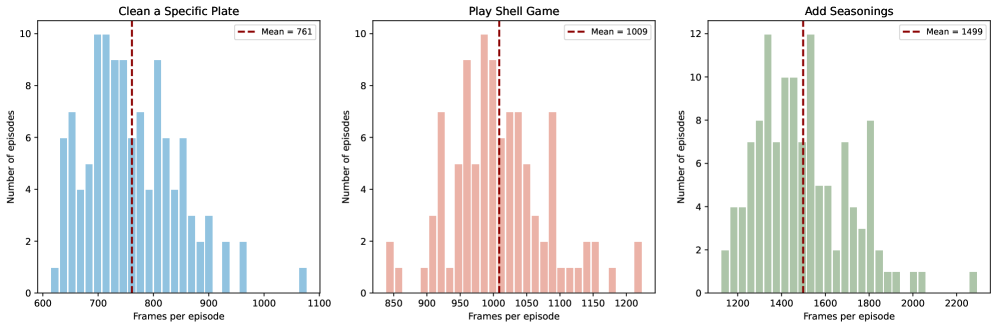

In every one of these tasks, Chameleon consistently and significantly boosted decision reliability and long-horizon control compared to leading baseline methods. The crucial takeaway is its knack for keeping a clear distinction between decision-relevant states, even when visuals are ambiguous. Figure 4, which displays UMAP projections, vividly shows how Chameleon's internal representation (h_t) separates history-dependent states, creating distinct clusters for different underlying decision targets even when observations look identical. This "pattern separation" is precisely what's required to conquer perceptual aliasing. What's more, HoloHead waypoint predictions, which guide the policy, stayed consistent with the hidden target even under ambiguity (Figure 6). This suggests that Chameleon's memory readout (h_t) effectively enables "pattern completion" from partial cues, meaning it can deduce the correct state even with incomplete sensory input. These tasks are no walk in the park either, with mean episode lengths spanning from 761 to 1499 frames (Figure 8), showcasing the system's resilience over prolonged interactions.

Figure 4: UMAP projections illustrate Chameleon's ability to separate distinct history-dependent states internally, even when external observations are identical.

Figure 6: Predictive rollouts confirm Chameleon's capacity for pattern completion, maintaining accurate predictions despite ambiguous decision-time observations.

Figure 8: The Camo-Dataset features long-duration episodes, demonstrating the need for robust memory over extended interactions.

Flight Paths to the Future: Impact on Drone Autonomy

This research marks a significant leap for drone autonomy, especially in complex, real-world settings. For hobbyists and engineers developing advanced drones, Chameleon's methodology means crafting systems that are genuinely intelligent, rather than merely reactive.

- Persistent Inspection: Imagine a drone meticulously inspecting a sprawling solar farm or a multi-story building. It could remember which panels it has already checked, which zones demand closer examination based on prior observations, or even track subtle changes over time—even if individual panels or structural elements appear identical.

- Complex Search & Rescue: In a disaster area, a drone could recall the precise last known location of a target, distinguish between similar piles of rubble it has already searched, or follow a survivor's movement even if they briefly vanish behind an obstruction.

- Autonomous Delivery & Logistics: For package deliveries, a drone could remember the exact porch it needs to approach among a row of identical-looking houses, or track a specific recipient in a bustling outdoor area.

- Dynamic Environment Navigation: A drone could navigate a dense forest, recalling the precise path it took through a particular cluster of trees, or track a moving object through a visually confusing environment like tall grass, even if its line of sight is temporarily blocked.

- Swarm Coordination: Within a drone swarm, individual units could share and update their episodic memories, fostering more robust collective intelligence and task completion.

The power to recall fine-grained, geometry-grounded context means drones can transcend basic object recognition. They can now understand and act upon the history of their interactions with the environment. This capability is paramount for reliable decision-making in long-duration missions where environments are frequently dynamic and visually ambiguous.

The Road Ahead: Where Chameleon Still Needs to Fly

While Chameleon marks a substantial stride forward, it's crucial to recognize its current scope and inherent limitations.

- Fixed-Base Manipulation: The experiments were carried out using a UR5e manipulator, which is a robot arm fixed to a base. Adapting this technology to mobile platforms like drones introduces layers of complexity, including ego-motion estimation, dynamic flight control, and managing vastly larger operational areas. A drone's spatial context is inherently far more dynamic than that of a stationary arm.

- Computational Overhead: Although not explicitly detailed in the paper's abstract, memory systems can be computationally demanding, especially those designed to retain fine-grained details and involving differentiable stacks. For compact drones with stringent power and weight limitations, optimizing this for on-board processing presents a significant hurdle.

- Scalability to Vast Environments: The Camo-Dataset tasks, while intricate, are confined to relatively small workspaces. Scaling episodic memory to manage environments spanning kilometers, or to recall interactions over days or weeks, introduces a completely different order of magnitude for memory storage and retrieval efficiency.

- Novelty Generalization: The paper primarily focuses on scenarios where the robot has encountered similar objects or tasks during its training. While it adeptly handles perceptual aliasing, its ability to generalize to entirely novel objects or completely unfamiliar long-horizon tasks remains an open question. Further investigation is needed into how well its memory structures can adapt to entirely new experiences.

Hobbyist Hacking: Can You Build Your Own Chameleon?

For the average hobbyist, replicating Chameleon right now is, frankly, impractical. The system relies on advanced deep learning architectures, a bespoke dataset (Camo-Dataset), and demands a sophisticated robotic platform like the UR5e equipped with multiple cameras. The core HoloHead training and conditional flow matching aren't readily available, off-the-shelf tools. Plus, the code isn't open-source, which is common for cutting-edge research from powerhouses like Google DeepMind. However, the concepts themselves are incredibly valuable. Hobbyists can certainly draw inspiration from ideas like "geometry-grounded tokens" and "episodic memory" when crafting their own drone control systems. Pondering how to embed spatial context and historical events into a drone's decision-making, even with more straightforward models, offers a rich learning opportunity. For example, building simple memory maps that track object IDs and their states could be a foundational step toward this kind of intelligence.
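
As a starting point for that last idea, here's a minimal sketch of a memory map in plain Python. It is a hobby-scale illustration, nowhere near Chameleon's learned memory. The names (`Observation`, `MemoryMap`) and the example panel IDs are invented, and it assumes you can assign a stable ID to each object from where it is (for instance a GPS grid cell), since identical-looking objects cannot be told apart by appearance.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Observation:
    object_id: str    # assumed to come from position, not appearance
    position: tuple   # (x, y, z) in a fixed world frame, metres
    state: str        # e.g. "unchecked", "ok", "needs_followup"
    time_s: float     # mission time of the observation, seconds


@dataclass
class MemoryMap:
    """Keeps the full history per object, not just the latest state."""
    history: dict = field(default_factory=dict)  # object_id -> list of Observation

    def record(self, obs: Observation) -> None:
        self.history.setdefault(obs.object_id, []).append(obs)

    def last_known(self, object_id: str) -> Optional[Observation]:
        events = self.history.get(object_id)
        return events[-1] if events else None

    def objects_in_state(self, state: str) -> list:
        """IDs whose most recent observation has the given state."""
        return sorted(
            oid for oid, events in self.history.items()
            if events[-1].state == state
        )


if __name__ == "__main__":
    memory = MemoryMap()
    memory.record(Observation("panel_A1", (0.0, 0.0, 1.5), "unchecked", 0.0))
    memory.record(Observation("panel_A2", (2.0, 0.0, 1.5), "unchecked", 1.0))
    memory.record(Observation("panel_A1", (0.0, 0.0, 1.5), "needs_followup", 12.0))
    print(memory.objects_in_state("needs_followup"))
    print(memory.last_known("panel_A2"))
```

Keeping the whole history instead of overwriting state is the small nod to "episodic" memory here: the drone can answer not only "what is the state of panel A1?" but also "when did it change?".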

Broader Horizons: Related Work Enhancing Robot Intelligence

Chameleon's emphasis on structured memory for long-horizon tasks aligns well with other ongoing research in robotic perception and understanding.

For example, the quest to build a robust environmental understanding is also explored in papers like "EndoVGGT: GNN-Enhanced Depth Estimation for Surgical 3D Reconstruction." While its focus is on surgical robots, EndoVGGT's reliance on GNNs for precise 3D perception of deformable objects underscores the fundamental need for rich, dependable spatial data, which is precisely the kind of input the "geometry-grounded tokens" in Chameleon's perception module depend on. Without such accurate 3D understanding, forming truly meaningful memories would be far more challenging.

Likewise, an episodic memory system truly shines when it can interpret sequences of events. "CliPPER: Contextual Video-Language Pretraining on Long-form Intraoperative Surgical Procedures for Event Recognition" addresses this head-on, aiming to extract significant events and their context from lengthy video streams using video-language models. CliPPER's techniques could potentially offer the high-level event abstraction that Chameleon's memory could then anchor with fine-grained perceptual cues, allowing drones to translate continuous sensory input into actionable "memories."

Finally, though it comes from remote sensing rather than robotics, "The role of spatial context and multitask learning in the detection of organic and conventional farming systems based on Sentinel-2 time series" offers a real-world application illustrating the power of spatial and temporal reasoning. That paper highlights how grasping "spatial context" and employing "multitask learning" over time enhances classification. For a drone equipped with Chameleon-style memory, this could translate to recalling specific field boundaries, areas of interest, or task-specific objectives across multiple flights, showing how advanced memory can facilitate complex, sustained tasks on a grander scale.

These interconnected efforts collectively underscore the increasing importance of contextual, time-aware intelligence in autonomous systems.

A Smarter Generation of Autonomous Systems

Chameleon brings us closer to a future where drones don't merely react to the immediate present. Instead, they intelligently learn and act based on a rich history of experience, unlocking genuine long-term autonomy in complex, often ambiguous environments.

Paper Details

Title: Chameleon: Episodic Memory for Long-Horizon Robotic Manipulation

Authors: Xinying Guo, Chenxi Jiang, Hyun Bin Kim, Ying Sun, Yang Xiao, Yuhang Han, Jianfei Yang

Published: Submitted on 26 Mar 2024

arXiv: 2603.24576

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.