Elastic AI for Drones: Smarter, Faster, and More Efficient

ELIT could let drones dynamically adjust their AI processing, improving efficiency, adaptability, and mission performance in real-world scenarios.

TL;DR: The Elastic Latent Interface Transformer (ELIT) lets a single diffusion transformer adjust how much computation it uses at inference time, with reported gains in both efficiency and image quality. For drones, this could mean smarter, more adaptable onboard AI that scales computation to match mission complexity and power constraints.

Smarter Drones with Elastic AI

Drones are becoming increasingly dependent on artificial intelligence for tasks like obstacle avoidance, real-time object recognition, and navigation in complex environments. However, traditional AI models often require fixed computational resources, which can lead to inefficiencies when tasks vary in complexity. A new approach, called the Elastic Latent Interface Transformer (ELIT), offers a solution. By enabling AI models to dynamically adjust their computational power, ELIT could make drones more efficient and adaptable in real-world scenarios.

The Problem with Fixed Compute

Modern AI systems, including diffusion transformers (DiTs), spend a fixed amount of compute for a given input size: the image is split into patches, each patch becomes a token, and the transformer processes every token at every layer. As resolution grows, the token count, and with it the computational demand, rises sharply. For drones, this is a significant challenge. Limited battery life and onboard hardware make it difficult to meet real-time processing demands without sacrificing performance or efficiency. Current models also spend as much effort on less critical parts of the input, such as background regions in images, as on the parts that matter. For drones, which must process information quickly and efficiently, this waste can be a major limitation.
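To see why compute grows so quickly with resolution, here is a back-of-envelope token count for a latent-space DiT. It assumes the common DiT-XL/2 setup (a VAE that downsamples by 8x, followed by 2x2 patches); other configurations change the constants but not the trend. Self-attention compares every token with every other token, so that part of the cost grows with the square of the token count.

```python
def dit_token_count(image_px: int, vae_downsample: int = 8, patch_size: int = 2) -> int:
    """Tokens a latent-space DiT processes for a square image (assumed DiT-XL/2-style defaults)."""
    latent_side = image_px // vae_downsample      # e.g. 256 px -> 32x32 latent grid
    tokens_per_side = latent_side // patch_size   # 2x2 patches -> 16x16 tokens
    return tokens_per_side ** 2


for px in (256, 512):
    n = dit_token_count(px)
    # Self-attention scores every token pair, so pairwise work grows as n^2.
    print(f"{px}px: {n} tokens, {n * n} attention pairs")
```

Doubling the side length from 256 to 512 pixels quadruples the tokens (256 to 1,024) and multiplies the attention pairs by 16. This coupling between image size and compute is exactly what ELIT sets out to break.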

How ELIT Works

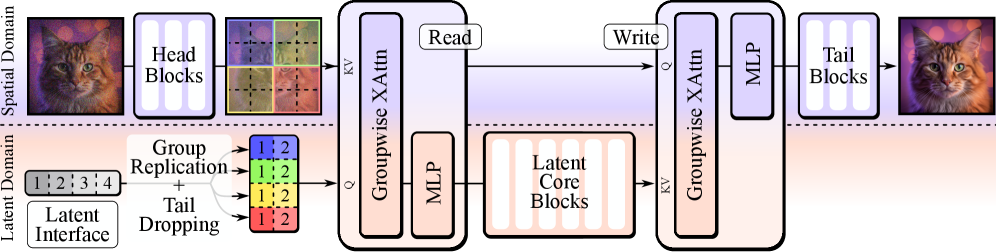

ELIT modifies diffusion transformers by decoupling image size from compute requirements, which lets users control how much processing is spent during inference. At the core of ELIT is the “latent interface,” a set of learnable latent tokens that sits between the image tokens and the main transformer blocks. Two lightweight cross-attention layers, Read and Write, move information from the image tokens into the latents and back again. The expensive transformer computation therefore runs on the latents rather than on every image token, letting the model concentrate compute on the most informative parts of the image.
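The Read/Write idea can be sketched with plain single-head attention in NumPy. This is a simplified illustration, not the paper's implementation: it assumes no learned query/key/value projections, no normalization, a single head, and an identity function standing in for the core transformer blocks (`core_fn` is a hypothetical placeholder). The point it shows is structural: the core only ever sees the latents, so its cost depends on how many latents there are, not on the image resolution.

```python
import numpy as np


def cross_attention(queries: np.ndarray, context: np.ndarray) -> np.ndarray:
    """Single-head scaled dot-product attention of `queries` over `context` (no learned projections)."""
    scale = 1.0 / np.sqrt(queries.shape[-1])
    scores = (queries @ context.T) * scale
    scores = scores - scores.max(axis=-1, keepdims=True)     # subtract row max for numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)  # softmax over context tokens
    return weights @ context


def latent_interface_forward(image_tokens: np.ndarray, latents: np.ndarray, core_fn) -> np.ndarray:
    """Read -> core -> Write, each with a residual connection."""
    # Read: every latent attends over all image tokens and gathers information.
    latents = latents + cross_attention(latents, image_tokens)
    # Core: the expensive blocks see only the latents, so their cost scales with len(latents).
    latents = core_fn(latents)
    # Write: every image token attends over the updated latents to pull results back.
    return image_tokens + cross_attention(image_tokens, latents)


rng = np.random.default_rng(0)
image_tokens = rng.normal(size=(1024, 64))  # e.g. a 32x32 token grid with width 64
latents = rng.normal(size=(128, 64))        # these would be learnable parameters in a real model
out = latent_interface_forward(image_tokens, latents, core_fn=lambda z: z)
print(out.shape)  # (1024, 64)
```

Because the output always has one row per image token, the same interface works whether the core runs on 128 latents or only the first 32.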

A key innovation of ELIT is that its latents are ordered by importance. By randomly dropping the “tail” latents during training, ELIT learns to store the global structure of an image in the earliest latents and finer details in later ones. At inference time, dropping tail latents therefore lowers compute while preserving the overall image, and details are refined only when the budget allows. This lets one model trade compute for quality smoothly instead of requiring a separate model for each budget.
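Why does random tail dropping produce an importance ordering? A quick simulation helps. The paper's exact sampling schedule is not described in this article, so this sketch assumes each training step keeps a uniformly random number of leading latents between a hypothetical `min_active` and the full count. Counting how often each latent position takes part shows that the head latents are trained on every step while tail latents are trained only occasionally, which pushes the essential information toward the front.

```python
import random


def sample_num_active(num_latents: int, min_active: int, rng: random.Random) -> int:
    """Assumed schedule: keep a uniformly random-length prefix of the latents (bounds inclusive)."""
    return rng.randint(min_active, num_latents)


def latent_usage(num_latents: int = 8, min_active: int = 2, steps: int = 10_000, seed: int = 0) -> list:
    """Fraction of simulated training steps in which each latent position is active."""
    rng = random.Random(seed)
    usage = [0] * num_latents
    for _ in range(steps):
        k = sample_num_active(num_latents, min_active, rng)
        for i in range(k):  # only the head latents [0, k) participate this step; the tail is dropped
            usage[i] += 1
    return [count / steps for count in usage]


print([round(u, 2) for u in latent_usage()])
```

The first `min_active` positions are always active, and usage can only fall from there toward roughly 1/7 for the last position under this assumed uniform schedule.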

The ELIT architecture enhances a standard DiT with a latent interface layer and lightweight Read/Write cross-attention, enabling dynamic compute adjustments.

Results That Matter

ELIT delivers tangible improvements in both efficiency and quality. Across the datasets and tasks evaluated in the paper, it consistently outperforms standard diffusion transformer baselines. Key results include:

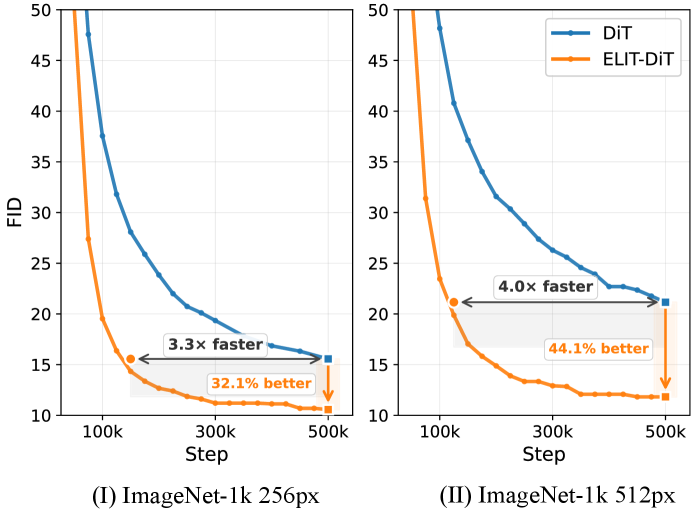

- Faster Training: ELIT reduces training time by 3.3x on ImageNet-1K at 256px resolution and 4x at 512px resolution.

- Improved Image Generation: Improved FID (Fréchet Inception Distance) by 35.3% and FDD (Fréchet DINOv2 Distance) by 39.6% on ImageNet-1K at 512px. Lower is better for both metrics.

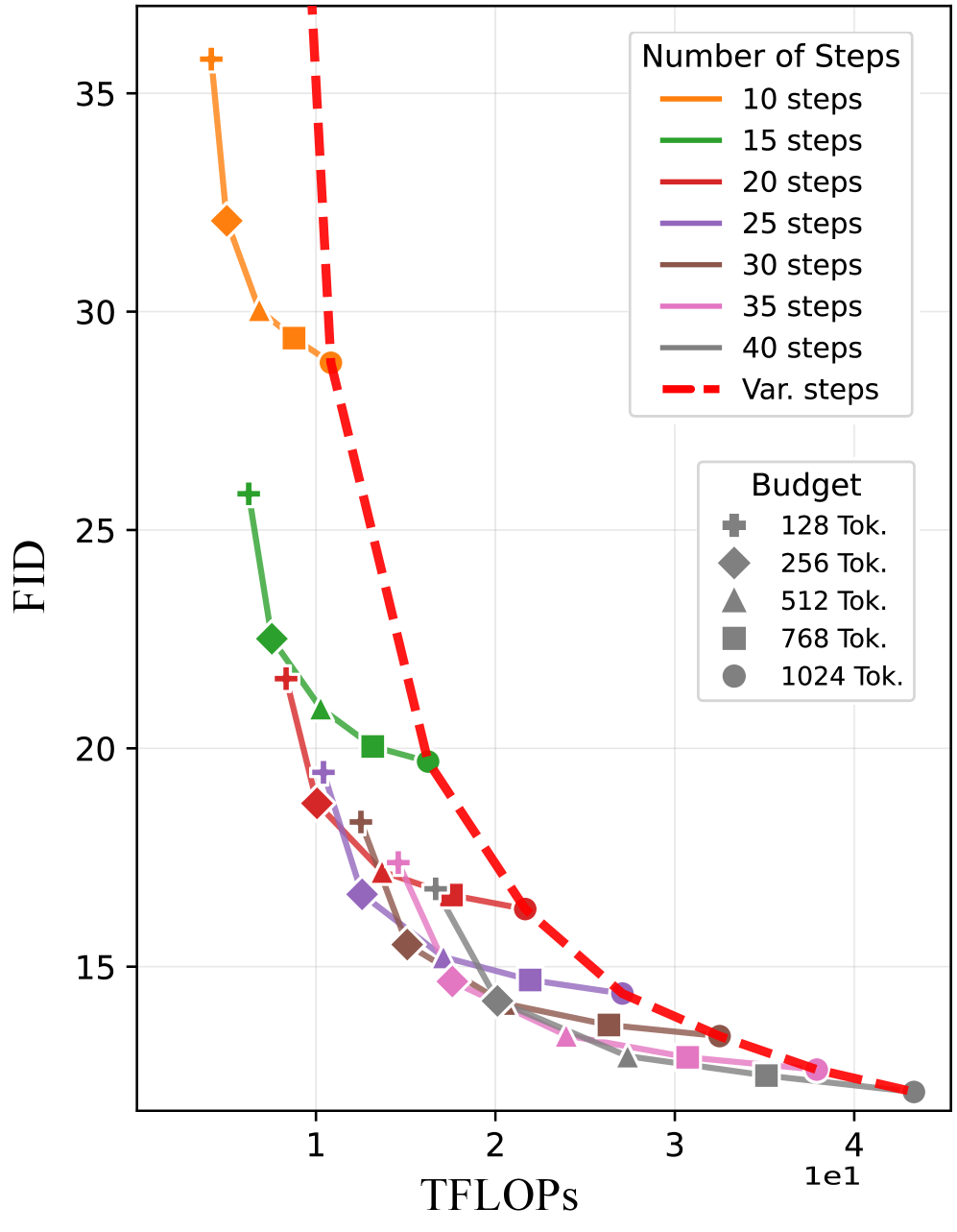

- Flexible Inference: Compute budgets can be adjusted at inference time by reducing the number of latent tokens, lowering FLOPs and inference time while largely maintaining quality.

- Cost Savings: A “cheap” classifier-free guidance (CCFG) strategy reduced generation costs by 33% while improving image quality.
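FID and FDD are both Fréchet distances between Gaussians fitted to feature embeddings of real and generated images: Inception-v3 features for FID, DINOv2 features for FDD. The distance is d² = ‖μa − μb‖² + Tr(Σa + Σb − 2(Σa Σb)^½), so lower is better. The NumPy sketch below computes it from two feature matrices; feature extraction is omitted, and the random arrays stand in for real embeddings.

```python
import numpy as np


def _sqrtm_psd(mat: np.ndarray) -> np.ndarray:
    """Square root of a symmetric positive semi-definite matrix via eigendecomposition."""
    vals, vecs = np.linalg.eigh(mat)
    vals = np.clip(vals, 0.0, None)            # drop tiny negative round-off
    return (vecs * np.sqrt(vals)) @ vecs.T     # V diag(sqrt(vals)) V^T


def frechet_distance(feats_a: np.ndarray, feats_b: np.ndarray) -> float:
    """Fréchet distance between Gaussians fitted to two feature sets (rows are samples)."""
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    cov_a = np.cov(feats_a, rowvar=False)
    cov_b = np.cov(feats_b, rowvar=False)
    # Tr((cov_a cov_b)^(1/2)) equals the sum of square roots of the eigenvalues of the
    # symmetric PSD matrix sqrt(cov_a) @ cov_b @ sqrt(cov_a), which is numerically safer.
    root_a = _sqrtm_psd(cov_a)
    cross_eigs = np.clip(np.linalg.eigvalsh(root_a @ cov_b @ root_a), 0.0, None)
    trace_sqrt = float(np.sqrt(cross_eigs).sum())
    mean_term = float(np.sum((mu_a - mu_b) ** 2))
    return mean_term + float(np.trace(cov_a) + np.trace(cov_b)) - 2.0 * trace_sqrt


rng = np.random.default_rng(0)
real = rng.normal(size=(500, 4))
print(frechet_distance(real, real + 1.0))
```

Shifting every feature by 1.0 in four dimensions leaves the covariance unchanged, so the distance reduces to the squared mean shift, 4.0 (up to floating-point error).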

ELIT achieves a better quality-to-compute tradeoff compared to simply reducing sampling steps.
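The “Flexible Inference” result can be made concrete with a standard back-of-envelope FLOP estimate for transformer blocks: about 24·n·d² for the projections and a 4x-wide MLP, plus 4·n²·d for attention, where n is the token count and d the width. The sketch assumes DiT-XL-like dimensions (28 blocks, width 1152) and illustrative latent counts, and it covers only the core blocks running on latents; it ignores the Read/Write layers and any blocks that still operate on image tokens.

```python
def core_transformer_flops(num_latents: int, width: int, depth: int) -> int:
    """Rough forward-pass FLOPs for `depth` standard transformer blocks over `num_latents` tokens.

    Per block: 24*n*d^2 for the QKV/output projections and a 4x-wide MLP,
    plus 4*n^2*d for the attention score and value matmuls
    (a multiply-add counted as 2 FLOPs).
    """
    n, d = num_latents, width
    per_block = 24 * n * d**2 + 4 * n**2 * d
    return depth * per_block


# Assumed DiT-XL-like dimensions; the latent counts below are illustrative only.
full = core_transformer_flops(256, width=1152, depth=28)
for n in (256, 192, 128, 64):
    ratio = core_transformer_flops(n, width=1152, depth=28) / full
    print(f"{n:>3} latents -> {ratio:.2f} of full-budget core FLOPs")
```

Halving the latents cuts core compute to slightly under half, because the quadratic attention term shrinks by 4x while the linear terms shrink by 2x.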

Why This Matters for Drones

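For drones, ELIT could unlock new levels of intelligence and efficiency without requiring heavy-duty hardware. The paper evaluates ELIT on image generation, so the applications below would require adapting the same idea to perception and planning models. Here’s how it could be applied: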

- Dynamic Mission Adaptation: Drones could scale their AI processing in real time based on the complexity of the environment or task. For example, more compute could be allocated in dense urban areas, while less is used in open fields.

- Efficient Obstacle Avoidance: ELIT’s selective focus on critical regions could help drones process environments more efficiently, ignoring irrelevant background details and prioritizing potential hazards.

- Extended Battery Life: By reducing computation during simpler tasks, drones could conserve energy, extending their operational time.

- Smaller, Smarter Drones: With reduced processing requirements, future drones could become smaller and more portable without sacrificing intelligence.
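To make “Dynamic Mission Adaptation” concrete, here is a hypothetical budget policy. Nothing like it appears in the paper: the function name, the candidate latent budgets, the 20% battery threshold, and the idea of a scalar scene-complexity score in [0, 1] are all illustrative assumptions about how an elastic model might be driven onboard.

```python
def choose_latent_budget(scene_complexity: float, battery_fraction: float,
                         budgets: tuple = (64, 128, 192, 256)) -> int:
    """Hypothetical policy mapping scene complexity and battery level to a latent budget."""
    if not (0.0 <= scene_complexity <= 1.0 and 0.0 <= battery_fraction <= 1.0):
        raise ValueError("scene_complexity and battery_fraction must be in [0, 1]")
    # More complex scenes (dense urban areas) get more latents; open fields get fewer.
    index = round(scene_complexity * (len(budgets) - 1))
    # On a low battery, cap the budget to stretch flight time.
    if battery_fraction < 0.2:
        index = min(index, 1)
    return budgets[index]


print(choose_latent_budget(0.0, 0.9))  # open field, full battery
print(choose_latent_budget(1.0, 0.9))  # dense city, full battery
print(choose_latent_budget(1.0, 0.1))  # dense city, nearly empty battery
```

A real system would derive the complexity score from sensor data and tune the thresholds per airframe; the point is only that an elastic model turns compute into a knob the flight controller can set.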

Limitations and Challenges

While ELIT shows great promise, it’s not without its challenges. Here are some key limitations:

- Hardware Requirements: Despite reducing computational demand, ELIT still requires hardware capable of supporting diffusion transformers. This may be a barrier for smaller or budget drones.

- Limited Real-World Testing: The research evaluates ELIT on benchmark datasets like ImageNet-1K. It has not yet been tested in real-world drone environments, which are often more chaotic and varied.

- Latency Concerns: While ELIT improves computational efficiency, its performance in real-time scenarios, such as high-speed flight, remains uncertain.

- Training Complexity: Implementing ELIT requires a modified training pipeline, which could be a challenge for smaller teams or individual developers.
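The latency concern is easy to quantify: a drone keeps moving while it waits for a result, so the distance flown “blind” is speed × latency. The sketch picks the largest latent budget whose latency keeps that distance under a safety margin. The latency table, the function names, and the 0.6 m margin are hypothetical placeholders; real numbers would have to be measured on the target hardware.

```python
def blind_distance_m(speed_mps: float, latency_ms: float) -> float:
    """Distance travelled while waiting for one inference result."""
    return speed_mps * latency_ms / 1000.0


def largest_safe_budget(latency_ms_by_budget: dict, speed_mps: float, max_blind_m: float):
    """Largest latent budget whose latency keeps the blind distance within the margin, else None."""
    safe = [budget for budget, latency in latency_ms_by_budget.items()
            if blind_distance_m(speed_mps, latency) <= max_blind_m]
    return max(safe) if safe else None


# Hypothetical per-budget latencies in milliseconds (placeholders, not measurements).
latencies = {64: 20.0, 128: 35.0, 256: 70.0}
print(largest_safe_budget(latencies, speed_mps=15.0, max_blind_m=0.6))  # fast flight
print(largest_safe_budget(latencies, speed_mps=5.0, max_blind_m=0.6))   # slow flight
```

With these placeholder numbers, fast flight forces a smaller budget, while slow flight can afford the full one. That is the kind of trade-off an elastic model would make possible at runtime.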

ELIT-DiT achieves faster training convergence compared to standard DiT.

Can You Build This at Home?

For hobbyists and small-scale developers, replicating ELIT might be difficult. The paper does not provide pre-trained models or open-source code, and training such systems typically requires high-end GPUs like the NVIDIA A100. However, for those already familiar with diffusion models and transformer architectures, this could be an exciting project to explore.

For casual drone builders, it’s worth keeping an eye on whether this technology makes its way into commercial drone AI systems. Elastic compute of this kind could eventually appear in off-the-shelf drones.

Building on the Foundation

ELIT is part of a growing body of research focused on adaptive computation and efficient AI. Related works include:

- EVATok: Adaptive-length video tokenization for efficient visual autoregressive generation. Combining ELIT with EVATok could enable real-time video processing for drones.

- Video Streaming Thinking: Explores real-time reasoning over streaming video in video large language models (VideoLLMs). ELIT could make such systems viable for drones.

- AutoGaze: Investigates selective attention mechanisms in video understanding. Pairing this with ELIT could further refine how drones focus on critical parts of a scene.

A New Horizon for Smarter Drones

ELIT represents a significant step forward in adaptive AI, with the potential to make UAVs smarter, more efficient, and more adaptable. While challenges remain, the potential benefits, from improved obstacle avoidance to extended battery life, make this technology worth watching. As research continues, ELIT could become a cornerstone of next-generation drone intelligence.

Paper Details

Title: One Model, Many Budgets: Elastic Latent Interfaces for Diffusion Transformers

Authors: Moayed Haji-Ali, Willi Menapace, Ivan Skorokhodov, Dogyun Park, Anil Kag, Michael Vasilkovsky, Sergey Tulyakov, Vicente Ordonez, Aliaksandr Siarohin

Published: 2026

arXiv: 2603.12245

Related Papers

- EVATok: Adaptive Length Video Tokenization for Efficient Visual Autoregressive Generation

- Video Streaming Thinking: VideoLLMs Can Watch and Think Simultaneously

- Attend Before Attention: Efficient and Scalable Video Understanding via Autoregressive Gazing

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.