MegaFlow: Next-Gen Motion Sensing for Autonomous Drones

MegaFlow is an AI model for zero-shot, large-displacement optical flow that could significantly improve motion sensing on drones. It perceives large, fast-moving objects and complex environments with strong zero-shot accuracy, promising more robust drone navigation and interaction.

TL;DR: MegaFlow is a new AI model that could drastically improve how autonomous drones "see" motion. By leveraging powerful pre-trained vision models, it accurately tracks fast, large movements and generalizes to new environments without scenario-specific training (zero-shot). This means drones could navigate complex, dynamic spaces and avoid obstacles far more reliably than before.

Autonomous drones need to understand motion – not just their own, but everything moving around them. A new paper introduces MegaFlow, an AI model that significantly advances optical flow estimation. This represents a substantial improvement in how drones could perceive and react to dynamic environments, moving beyond the often-fragile motion sensing of the past.

The Blurry Vision Problem: Why Current Drones Struggle

Current optical flow methods, which estimate pixel-level motion between video frames, face significant limitations. They often depend on iterative local searches or extensive, task-specific fine-tuning. This approach works adequately for small, predictable movements or when pre-trained for a very specific scenario. However, when a drone encounters large, rapid movements – like a bird darting past at high speed, or navigating a chaotic construction site – these methods frequently fail. They struggle with 'large displacement' motion and, critically, don't generalize well to completely new environments (the 'zero-shot' problem). This means a drone trained in a simulated city might perform poorly in a real forest, leading to navigation errors, collisions, or inefficient path planning. The outcome? Less reliable autonomy and more manual intervention, which undermines the goal of an autonomous system.

Smarter Eyes: How Pre-Trained Models Boost Drone Perception

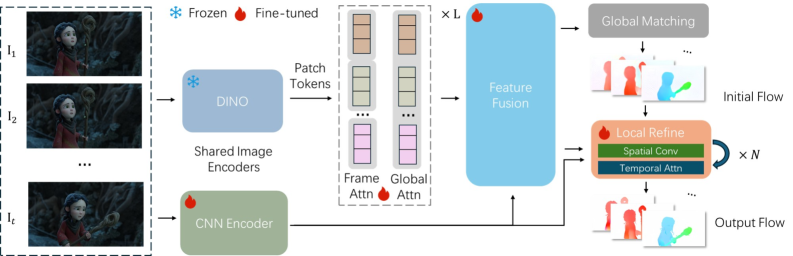

MegaFlow takes a different approach. Instead of building a complex, task-specific neural network from scratch, it leverages the power of existing, highly capable pre-trained vision models. Imagine giving a drone incredibly well-developed 'eyes' and then teaching it to interpret subtle shifts in what it sees. Specifically, MegaFlow adapts pre-trained Vision Transformer (ViT) features, which are already excellent at understanding global patterns in images.

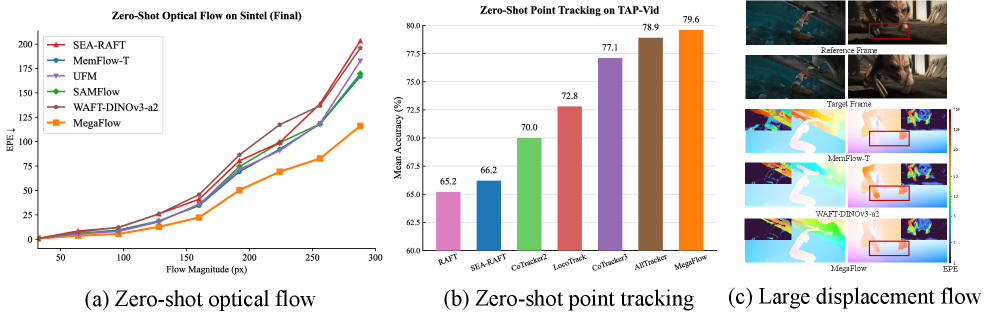

Figure 1: MegaFlow consistently achieves low End-Point Error (EPE), especially for large displacements, and shows superior zero-shot point tracking.

The core idea is to treat flow estimation as a global matching problem. Methods built on iterative local search can get stuck in local minima when pixels move farther between frames than their search reaches. Because MegaFlow matches using global ViT features, it can 'see' across the entire frame and pair up points that have moved significantly, which is what lets it handle large displacements. After this initial global matching, a few lightweight iterative refinements recover sub-pixel accuracy. The pipeline is designed to be efficient and, crucially, accepts variable-length video inputs without architectural changes. This flexibility is a significant advantage for drone applications, where video streams vary.
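The global-matching idea can be sketched in a few lines. This is a generic soft-argmax matcher of the kind used by global-matching flow methods, written under stated assumptions: random arrays stand in for backbone features, `global_match_flow` and `temperature` are hypothetical names, and nothing here reproduces MegaFlow's actual architecture. Every location in frame 1 is scored against every location in frame 2, so a match can be found no matter how far a pixel moved.

```python
import numpy as np


def global_match_flow(feat1: np.ndarray, feat2: np.ndarray, temperature: float = 0.1) -> np.ndarray:
    """Coarse flow from frame 1 to frame 2 via global (all-pairs) feature matching.

    feat1, feat2: (H, W, C) feature maps, e.g. from a frozen ViT backbone (assumed).
    Returns an (H, W, 2) flow field in feature-grid pixels, ordered (dx, dy).
    """
    h, w, c = feat1.shape
    f1 = feat1.reshape(-1, c)
    f2 = feat2.reshape(-1, c)
    # L2-normalize so each dot product is a cosine similarity.
    f1 = f1 / (np.linalg.norm(f1, axis=1, keepdims=True) + 1e-8)
    f2 = f2 / (np.linalg.norm(f2, axis=1, keepdims=True) + 1e-8)
    # All-pairs correlation: every location in frame 1 against every location in frame 2.
    corr = (f1 @ f2.T) / temperature  # shape (H*W, H*W)
    # Row-wise softmax turns similarities into a matching distribution.
    corr -= corr.max(axis=1, keepdims=True)
    prob = np.exp(corr)
    prob /= prob.sum(axis=1, keepdims=True)
    # (x, y) coordinates of every grid location, shape (H*W, 2).
    ys, xs = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    coords = np.stack([xs.ravel(), ys.ravel()], axis=1).astype(np.float64)
    # Soft-argmax: expected match position minus source position gives the flow.
    matched = prob @ coords
    return (matched - coords).reshape(h, w, 2)


# Usage: shift random "features" 2 px to the right and recover the motion.
rng = np.random.default_rng(0)
frame1 = rng.standard_normal((6, 8, 128))
frame2 = np.roll(frame1, shift=2, axis=1)
flow = global_match_flow(frame1, frame2, temperature=0.05)
print(flow[0, :6, 0].round(2))  # dx for columns whose match did not wrap around
```

The correlation matrix has (H·W)² entries, so seeing the whole frame costs quadratically in resolution; the global stage finds the right neighborhood even for large motion, and a cheaper local stage can then add sub-pixel precision.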

Figure 2: MegaFlow's pipeline uses frozen DINO and trainable CNN features, alternating frame and global attention, followed by iterative refinement for sub-pixel accuracy. It handles variable-length inputs seamlessly.
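Alternating frame and global attention also explains why clip length can vary. The stripped-down sketch below uses single-head attention with no learned projections, normalization, or MLP (all assumed simplifications), random tokens in place of the frozen DINO and CNN features, and a hypothetical `alternating_attention` name; it is not MegaFlow's architecture. Frame attention is batched over frames, while global attention flattens all T×N tokens so each token sees the whole clip. No weight shape depends on T.

```python
import numpy as np


def self_attention(x: np.ndarray) -> np.ndarray:
    """Single-head attention without learned projections, over axis -2 of x (..., L, C)."""
    scores = x @ np.swapaxes(x, -1, -2) / np.sqrt(x.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ x


def alternating_attention(tokens: np.ndarray, num_blocks: int = 2) -> np.ndarray:
    """tokens: (T, N, C) for T frames with N patch tokens each.

    No parameter depends on T, so the same function accepts clips of any length.
    """
    t, n, c = tokens.shape
    x = tokens
    for _ in range(num_blocks):
        # Frame attention: batched over T, tokens only see tokens from their own frame.
        x = x + self_attention(x)
        # Global attention: flatten the clip so every token sees every frame.
        x = x + self_attention(x.reshape(1, t * n, c)).reshape(t, n, c)
    return x


rng = np.random.default_rng(0)
for num_frames in (2, 5):  # the same code handles a frame pair or a longer clip
    out = alternating_attention(rng.standard_normal((num_frames, 16, 32)))
    print(num_frames, out.shape)
```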

Performance That Delivers: The Numbers Behind MegaFlow

MegaFlow isn't just a clever idea; it delivers concrete results. The paper presents compelling performance across multiple benchmarks, particularly highlighting its zero-shot capabilities. Zero-shot means it's tested on data it has never specifically seen during training, which is vital for real-world drone deployment.

- Optical Flow: On benchmarks like Sintel (Final), MegaFlow achieves the lowest End-Point Error (EPE), with its advantage becoming even more pronounced for large displacements. This indicates improved tracking of fast-moving objects or of scenes where the drone itself is moving quickly.

- Point Tracking: MegaFlow also demonstrates state-of-the-art zero-shot performance on long-range point tracking benchmarks like TAP-Vid. This suggests it can accurately follow specific features or objects over extended periods, even with occlusions or significant changes.

- Generalization: The model generalizes remarkably well to diverse video scenes, including Full HD resolution videos, without specific fine-tuning. This highlights its robust transferability.
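The metrics quoted above are simple to compute. EPE is the mean Euclidean distance between predicted and ground-truth flow vectors; a large-displacement score restricts that mean to fast-moving pixels (the Sintel leaderboard, for example, reports speed bands such as s40+ for pixels moving more than 40 px). For point tracking, TAP-Vid's position accuracy averages, over thresholds of 1, 2, 4, 8 and 16 pixels, the fraction of visible points predicted within each threshold. The sketch below is a minimal NumPy version of those definitions; it omits TAP-Vid's occlusion accuracy and Average Jaccard, and the function names are hypothetical.

```python
import numpy as np


def end_point_error(flow_pred, flow_gt, min_magnitude=None):
    """Mean Euclidean distance between predicted and ground-truth flow, (H, W, 2) arrays.

    If min_magnitude is given, only pixels whose ground-truth motion exceeds it
    are scored, approximating a large-displacement subset.
    """
    err = np.linalg.norm(flow_pred - flow_gt, axis=-1)
    if min_magnitude is not None:
        err = err[np.linalg.norm(flow_gt, axis=-1) > min_magnitude]
    return float(err.mean()) if err.size else float("nan")


def delta_avg(pred_pts, gt_pts, visible, thresholds=(1, 2, 4, 8, 16)):
    """TAP-Vid-style position accuracy for point tracks.

    pred_pts, gt_pts: (P, T, 2) pixel positions; visible: (P, T) boolean mask.
    For each threshold, take the fraction of visible points predicted closer than
    that many pixels, then average over thresholds.
    """
    dist = np.linalg.norm(pred_pts - gt_pts, axis=-1)[visible]
    return float(np.mean([(dist < t).mean() for t in thresholds]))


# Usage: one fast pixel (50 px motion) predicted with a 5 px error, the rest perfect.
gt = np.zeros((4, 4, 2))
gt[0, 0] = (50.0, 0.0)
pred = gt.copy()
pred[0, 0] = (53.0, 4.0)
print(end_point_error(pred, gt))        # 0.3125 (5 px spread over 16 pixels)
print(end_point_error(pred, gt, 40.0))  # 5.0 (only the fast pixel is scored)
```

The example shows why a large-displacement breakdown matters: a single badly tracked fast pixel barely moves the overall average but dominates the fast-motion score.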

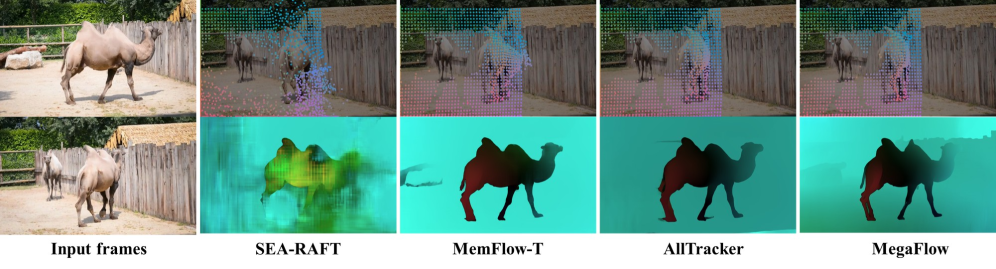

Figure 3: MegaFlow produces more accurate and temporally consistent long-range tracks and flow estimates on the DAVIS benchmark compared to other leading methods.

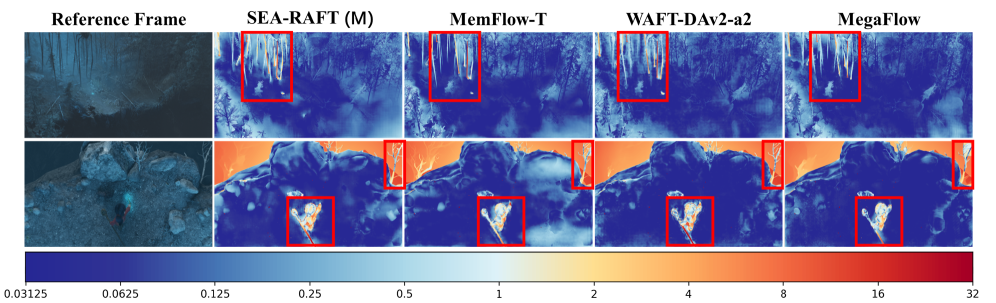

Figure 4: MegaFlow outperforms prior methods on the Spring benchmark, generalizing well to Full HD resolution and preserving both local and global motion details.

Figure 5: Unlike methods using isolated frame pairs, MegaFlow's expanded multi-frame context generates stable and accurate motion boundaries, reducing temporal inconsistencies and occlusion artifacts.

Impact on Autonomy: What MegaFlow Means for Drones

This technology holds significant implications for autonomous drones. Consider a drone performing high-speed infrastructure inspection, where components vibrate rapidly, or flying through a forest with unpredictably swaying branches. MegaFlow's ability to accurately perceive large, complex motion without prior training for that exact scenario could enable new levels of autonomy and safety.

Specifically, this means:

- Enhanced Obstacle Avoidance: Drones could more reliably detect and track fast-moving obstacles like other drones, birds, or thrown objects, significantly reducing collision risks.

- Precise Navigation in Dynamic Environments: Navigating complex industrial sites, disaster zones, or dense urban areas with unpredictable human and vehicle movement becomes far more feasible. Accurate optical flow provides better odometry and localization, even when GPS is denied or unreliable.

- Improved Object Interaction and Tracking: For drones performing tasks like delivery, surveillance, or cinematic filming, the ability to track objects with high precision over long durations, even with occlusions, is invaluable. This could lead to more stable following shots or more robust package delivery to moving targets.

- Better Swarm Coordination: If each drone in a swarm has a clearer, more adaptable understanding of its environment's motion, the entire swarm can coordinate more effectively, avoiding collisions and maintaining formations even in challenging conditions.
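Two classic flow-to-control relations illustrate how better flow could feed obstacle avoidance and odometry. The sketch below assumes idealized geometry and is not taken from the paper: time to contact from the divergence of the flow field when flying straight at a fronto-parallel surface (radial flow whose divergence equals 2 divided by the time to contact in frames), and ground speed from a downward-facing pinhole camera at a known height with a small-angle gyro correction. Function names and sign conventions are hypothetical.

```python
import numpy as np


def time_to_contact(flow: np.ndarray, dt: float) -> float:
    """Seconds until contact with a surface straight ahead, from per-frame flow (H, W, 2).

    Assumes pure forward translation toward a fronto-parallel surface, where the
    flow is radial and its divergence equals 2 / (time to contact in frames).
    """
    du_dx = np.gradient(flow[..., 0], axis=1)
    dv_dy = np.gradient(flow[..., 1], axis=0)
    divergence = float(np.mean(du_dx + dv_dy))  # per frame
    if divergence <= 0:
        return float("inf")  # non-positive divergence: not approaching
    return 2.0 * dt / divergence


def ground_speed(mean_flow_px, dt, height_m, focal_px, gyro_rate=0.0):
    """Apparent ground speed (m/s) along one image axis, for a downward-facing camera.

    mean_flow_px: average flow along that axis per frame (px). The sign follows the
    image flow; the drone itself moves the opposite way.
    gyro_rate: body rotation rate (rad/s) that shifts the image along the same axis,
    signed so that positive rotation produces positive flow (an assumed convention).
    Small-angle approximation: rotation contributes about focal_px * gyro_rate * dt px.
    """
    rotational_px = focal_px * gyro_rate * dt
    translational_px = mean_flow_px - rotational_px
    # Pinhole model: ground displacement = pixel displacement * height / focal length.
    return translational_px * height_m / (focal_px * dt)


# Usage: synthetic expanding flow (5% radial growth per frame at 30 fps).
h, w = 48, 64
ys, xs = np.mgrid[0:h, 0:w].astype(float)
radial = np.stack([0.05 * (xs - w / 2), 0.05 * (ys - h / 2)], axis=-1)
print(time_to_contact(radial, dt=1 / 30))  # about 0.667 s (20 frames at 30 fps)
print(ground_speed(10.0, dt=0.02, height_m=5.0, focal_px=400.0))  # about 6.25 m/s
```

Both quantities are computed directly from flow vectors, so flow errors on fast or large motion translate straight into errors in the estimated time to contact and velocity.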

Where MegaFlow Still Needs Work: Current Limitations

No research is perfect, and MegaFlow, while impressive, has its boundaries. The paper doesn't deeply explore the computational cost or power requirements, which are critical for resource-constrained drones. While it leverages pre-trained models, the inference speed on embedded hardware like an NVIDIA Jetson or Qualcomm Snapdragon Flight remains a key question for real-time deployment. We also don't see extensive testing in extreme low-light or adverse weather conditions (fog, heavy rain), which significantly challenge any vision system.
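The cost concern can be made concrete with back-of-envelope arithmetic. The figures below are not from the paper, which does not report them. They assume a naive, fully materialized all-pairs correlation volume at a hypothetical feature stride of 8 (common in RAFT-style matchers; DINO-family ViTs use patch sizes such as 8, 14 or 16 pixels), and the helper names are hypothetical. The second helper shows how far a drone flies while waiting for a result.

```python
def all_pairs_corr_bytes(width_px: int, height_px: int, stride: int, bytes_per_value: int = 4) -> int:
    """Memory of one dense all-pairs correlation volume for a single frame pair."""
    tokens = (width_px // stride) * (height_px // stride)
    return tokens * tokens * bytes_per_value


def blind_distance_m(speed_mps: float, latency_s: float) -> float:
    """Distance a drone covers between capturing a frame and receiving its flow result."""
    return speed_mps * latency_s


full_hd = all_pairs_corr_bytes(1920, 1080, stride=8)
print(f"{full_hd / 1e9:.1f} GB")               # 4.2 GB in float32 for one frame pair
print(f"{blind_distance_m(15.0, 0.2):.1f} m")  # 3.0 m at 15 m/s with 200 ms latency
```

Under these assumptions, feature stride, chunked computation, and reduced precision all matter on embedded boards, and every 100 ms of latency at 15 m/s means another 1.5 m flown on stale information.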

The authors acknowledge that, although their approach excels at zero-shot generalization, it still relies on a few iterative refinement steps. These steps are lightweight, but they still add computation time compared with purely feed-forward models. Pushing toward even faster, single-pass flow estimation on embedded systems would be the next logical step for widespread real-world drone deployment. Finally, integrating this motion sensing with higher-level semantic understanding is crucial: knowing what is moving is often as important as knowing how it moves.
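The refinement stage stays lightweight because each step only compares a pixel with a small window around its current match. The sketch below is loosely modeled on the local correlation lookups of RAFT-style methods but replaces any learned update with a plain soft-argmax, so it illustrates the cost structure rather than MegaFlow's update operator; `refine_flow`, `radius`, `iters`, and `temperature` are hypothetical.

```python
import numpy as np


def refine_flow(feat1, feat2, flow, radius=2, iters=3, temperature=0.05):
    """Iteratively refine flow (H, W, 2) by searching only a (2r+1)^2 window per pixel.

    feat1, feat2: (H, W, C) feature maps. Each iteration re-centres the window on the
    current match and takes a soft-argmax over it, which yields sub-pixel positions.
    """
    h, w, c = feat1.shape
    f1 = feat1 / (np.linalg.norm(feat1, axis=-1, keepdims=True) + 1e-8)
    f2 = feat2 / (np.linalg.norm(feat2, axis=-1, keepdims=True) + 1e-8)
    ys, xs = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
    offsets = np.arange(-radius, radius + 1)
    oy, ox = np.meshgrid(offsets, offsets, indexing="ij")
    oy, ox = oy.ravel(), ox.ravel()  # K = (2r+1)^2 window offsets
    flow = np.asarray(flow, dtype=np.float64)
    for _ in range(iters):
        # Integer centre of the current match, kept inside the image.
        cx = np.clip(np.rint(xs + flow[..., 0]), 0, w - 1).astype(int)
        cy = np.clip(np.rint(ys + flow[..., 1]), 0, h - 1).astype(int)
        # Candidate positions in the local window, each of shape (H, W, K).
        px = np.clip(cx[..., None] + ox, 0, w - 1)
        py = np.clip(cy[..., None] + oy, 0, h - 1)
        # Cosine similarity between each pixel and its K candidates.
        scores = np.einsum("hwc,hwkc->hwk", f1, f2[py, px]) / temperature
        scores -= scores.max(axis=-1, keepdims=True)
        prob = np.exp(scores)
        prob /= prob.sum(axis=-1, keepdims=True)
        # Soft-argmax within the window gives the refined (sub-pixel) match.
        flow = np.stack([(prob * px).sum(-1) - xs, (prob * py).sum(-1) - ys], axis=-1)
    return flow


# Usage: start from zero flow; the true 2 px shift lies inside the radius-2 window.
rng = np.random.default_rng(0)
frame1 = rng.standard_normal((6, 8, 64))
frame2 = np.roll(frame1, shift=2, axis=1)
refined = refine_flow(frame1, frame2, np.zeros((6, 8, 2)), radius=2, iters=2)
print(refined[0, :6, 0].round(2))
```

Per iteration, each pixel scores (2r+1)² candidates instead of all H·W locations: on a 240×135 feature grid (32,400 locations) with r = 4, that is 81 comparisons instead of 32,400. The total still grows with the number of iterations, which is the overhead a single-pass design would remove.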

DIY Feasibility: Getting Your Hands on MegaFlow

For hobbyists and builders, replicating MegaFlow would be challenging but not impossible. The model relies on powerful pre-trained Vision Transformers, specifically DINO features. Although the code is open-source (the project page is linked in the abstract), training such a model from scratch typically requires substantial GPU resources. Running inference, however, could be feasible on higher-end embedded AI boards. If the authors release pre-trained models and optimized inference pipelines (e.g., ONNX- or TensorRT-compatible exports), hobbyists with a Jetson Orin or similar hardware could experiment with integrating it into their drone platforms. The ability to handle variable-length inputs without architectural changes is a significant advantage for real-world testing and integration.
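On a drone, variable-length input handling mostly shows up as buffering: a live stream can be fed to a clip-based model in overlapping windows that grow from two frames up to a chosen maximum. The sketch below assumes a hypothetical `model` callable that takes a list of frames and returns one flow result per consecutive pair; `FlowStream` is likewise hypothetical, and MegaFlow's real inference interface may differ.

```python
from collections import deque
from typing import Any, Callable, Deque, List, Optional


class FlowStream:
    """Feeds a live camera stream to a clip-based flow model in overlapping windows.

    `model` is a hypothetical callable: given a list of >= 2 frames, it returns one
    flow result per consecutive frame pair (len(frames) - 1 results).
    """

    def __init__(self, model: Callable[[List[Any]], List[Any]], max_frames: int = 4) -> None:
        self.model = model
        self.buffer: Deque[Any] = deque(maxlen=max_frames)  # oldest frame drops automatically

    def push(self, frame: Any) -> Optional[Any]:
        """Add the newest frame; return flow for the latest pair once two frames exist."""
        self.buffer.append(frame)
        if len(self.buffer) < 2:
            return None
        flows = self.model(list(self.buffer))  # clip length grows from 2 up to max_frames
        return flows[-1]


# Usage with a stand-in model that just reports which frame pairs it was given.
clip_lengths = []


def fake_model(frames):
    clip_lengths.append(len(frames))
    return list(zip(frames[:-1], frames[1:]))


stream = FlowStream(fake_model, max_frames=4)
results = [stream.push(i) for i in range(6)]
print(results)       # [None, (0, 1), (1, 2), (2, 3), (3, 4), (4, 5)]
print(clip_lengths)  # [2, 3, 4, 4, 4]
```

The window size trades temporal context against per-frame compute; with a model that accepts any clip length, the same code runs from the first frame pair without a separate warm-up path.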

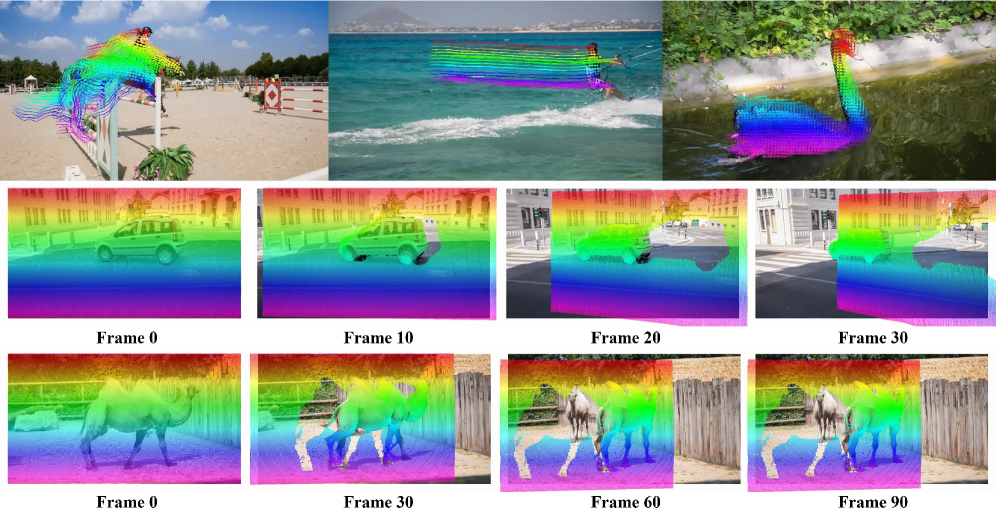

Figure S1: After TSHK-stage training, MegaFlow achieves strong zero-shot tracking on the TAP-Vid dataset, enabling both dense tracking and stable long-range point trajectories.

Figure S2: After TSHK-stage training, MegaFlow generalizes well to diverse video scenes, demonstrating stable large displacement optical flow prediction.

Figure S3: MegaFlow's qualitative performance on Sintel is superior to other methods like SEA-RAFT, MemFlow, and WAFT-DINOv3-a2.

Beyond Motion: Towards Truly Intelligent Drones

MegaFlow's precise motion sensing is a vital component, but it's not the entire solution. For a truly intelligent drone, this data needs integration with broader environmental understanding. For instance, the 'MuRF: Unlocking the Multi-Scale Potential of Vision Foundation Models' paper addresses robust object recognition across varying scales. A drone combining MegaFlow's motion perception with MuRF's multi-scale object understanding would possess a far more complete and adaptable perception system.

Then there's the challenge of consistent understanding. Once MegaFlow provides accurate motion data, approaches like the one in 'R-C2: Cycle-Consistent Reinforcement Learning Improves Multimodal Reasoning' could help keep the drone's interpretation of its environment robust and internally consistent, reducing the chance that contradictory information leads to poor decisions. Beyond recognizing what something is, a drone also benefits from understanding compositional concepts, like 'a red car on a bridge,' and from generalizing to new situations, as explored in 'No Hard Negatives Required: Concept Centric Learning Leads to Compositionality without Degrading Zero-shot Capabilities of Contrastive Models.' MegaFlow handles the 'how it moves'; these others address the 'what it is' and 'what it means.'

This zero-shot, large displacement optical flow isn't merely an incremental improvement; it's a foundational capability that makes more advanced, reliable autonomous drone operations genuinely possible. The future of drone autonomy relies on this kind of robust, adaptable 'seeing,' not just flying.

Paper Details

Title: MegaFlow: Zero-Shot Large Displacement Optical Flow
Authors: Dingxi Zhang, Fangjinhua Wang, Marc Pollefeys, Haofei Xu
Published: Submitted to arXiv, March 2026
arXiv: 2603.25739

Written by

Mini Drone Shop AI
Sharing knowledge about drones and aerial technology.