Pointy: Lightweight AI for Sharper 3D Drone Vision on Less Data

A new transformer architecture, Pointy, processes 3D point clouds with significantly less training data than larger models, offering efficient and accurate perception for autonomous drones.

Your Drone's Next Vision Upgrade Just Got Leaner

TL;DR: Researchers have unveiled Pointy, a lightweight transformer architecture designed for 3D point cloud processing. It delivers perception accuracy on par with much larger, data-intensive foundation models, yet requires significantly less training data and computational power. This marks a substantial step forward for equipping resource-constrained drones with advanced 3D 'eyes'.

Autonomous drones need to see the world in 3D, not merely generate a static map. They must understand their surroundings, detect obstacles, and navigate intricate, dynamic environments. For hobbyists building smarter drones and engineers deploying these systems in real-world scenarios, this perception capability is essential. The primary hurdle? Robust 3D perception typically requires powerful, data-hungry AI models, vast training datasets, and considerable onboard processing power. But what if you could achieve top-tier perception without that computational weight and data overhead?

The Weight of Advanced 3D AI

Today's most advanced AI for 3D perception, particularly the "foundation models," are powerful but come with significant demands. They typically require extensive pre-training on millions of data points, images, and even text samples. This massive data appetite directly leads to high computational needs, large model sizes, and substantial power consumption – all significant hurdles for deployment on small, battery-powered drones. For many of us, training or even running these colossal models on a drone's onboard computer is simply impractical due to limitations in weight, power, and cost. We need intelligent, efficient solutions, not just bigger ones.

Pointy's Smart, Streamlined Design

Pointy addresses this challenge directly by reimagining the transformer architecture for point clouds. Rather than simply scaling up data and model size, the researchers prioritized an efficient design and a carefully chosen training approach. It's a transformer-based backbone specifically engineered for 3D point cloud processing, built to be both lightweight and highly effective.

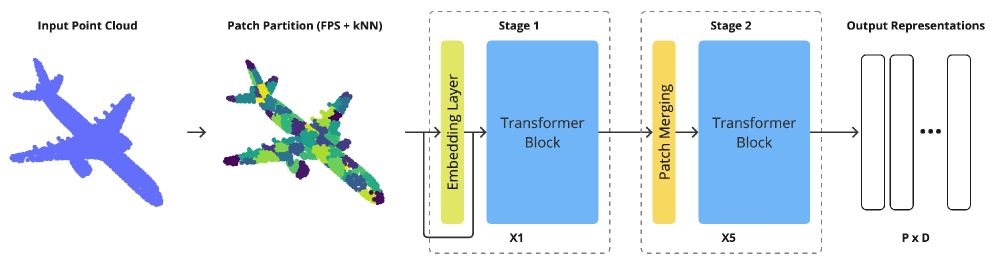

Figure 1: The Pointy architecture, a transformer backbone for point cloud processing. The model takes raw point cloud data as input and applies patch partitioning using Farthest Point Sampling (FPS) and k-Nearest Neighbors (kNN). Raw point features are preserved through residual connections alongside learned patch embeddings in the PointNet-based embedding layer. Token merging operations between adjacent tokens follow each transformer block. This hierarchical design enables local and global feature learning through progressive patch merging. The final output is a P×D representation, where P is the number of patches and D is the embedding dimension.


In essence, Pointy takes raw point cloud data as input. It then employs techniques such as Farthest Point Sampling (FPS) and k-Nearest Neighbors (kNN) to intelligently divide these points into patches. Importantly, it maintains raw point features via residual connections and utilizes learned patch embeddings (inspired by PointNet). The architecture features a hierarchical design with token merging between transformer blocks, enabling it to learn both fine local details and broader global contextual features efficiently. This 'tokenizer-free' method stands out as a key innovation, simplifying the processing pipeline and reducing overhead.
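The patch partitioning and merging steps described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names, the patch count (64), the neighborhood size (32), and the pairwise-averaging merge rule are all assumptions made for the example, and the learned PointNet-style embedding is replaced by a simple stand-in.

```python
import numpy as np


def farthest_point_sampling(points, num_centers, seed=0):
    """Greedily pick indices of `num_centers` points that are far apart."""
    n = points.shape[0]
    rng = np.random.default_rng(seed)
    chosen = np.empty(num_centers, dtype=np.int64)
    chosen[0] = rng.integers(n)  # random starting point
    # Squared distance from each point to its nearest chosen center so far.
    min_dist = np.full(n, np.inf)
    for i in range(1, num_centers):
        diff = points - points[chosen[i - 1]]
        min_dist = np.minimum(min_dist, np.einsum("ij,ij->i", diff, diff))
        chosen[i] = int(np.argmax(min_dist))
    return chosen


def knn_patches(points, center_idx, k):
    """Group each center with its k nearest points; returns (P, k) indices."""
    centers = points[center_idx]                                    # (P, 3)
    d2 = ((centers[:, None, :] - points[None, :, :]) ** 2).sum(-1)  # (P, N)
    return np.argsort(d2, axis=1)[:, :k]


def merge_adjacent_tokens(tokens):
    """Halve the token count by averaging adjacent pairs (assumed merge rule)."""
    p, d = tokens.shape
    if p % 2 != 0:
        raise ValueError("token count must be even")
    return tokens.reshape(p // 2, 2, d).mean(axis=1)


rng = np.random.default_rng(42)
cloud = rng.normal(size=(2048, 3))                   # synthetic point cloud

center_idx = farthest_point_sampling(cloud, num_centers=64)
patches = knn_patches(cloud, center_idx, k=32)       # (64, 32) point indices

# Stand-in for a learned patch embedding: mean offset from the patch center.
tokens = (cloud[patches] - cloud[center_idx][:, None, :]).mean(axis=1)  # (64, 3)
merged = merge_adjacent_tokens(tokens)               # (32, 3)
```

FPS spreads patch centers evenly over the shape, kNN gives each center a local neighborhood, and repeated merging shrinks the token sequence so later transformer blocks see a coarser, more global view.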

Performance That Defies Its Size

This isn't merely theoretical; Pointy delivers concrete results. The paper highlights several compelling comparisons:

- Data Efficiency: Pointy was trained using only 39,000 point clouds. This is a stark contrast to other foundation models, which often demand upwards of 200,000 samples, or even millions when incorporating multi-modal data (images, text).

- Outperforms Larger Models: Despite its minimal training data footprint, Pointy surpassed several larger foundation models that had been exposed to significantly more training samples.

- Approaches State-of-the-Art: It achieved results nearly matching the state-of-the-art from models that processed over a million multi-modal samples, underscoring the effectiveness of its efficient architecture and training approach.

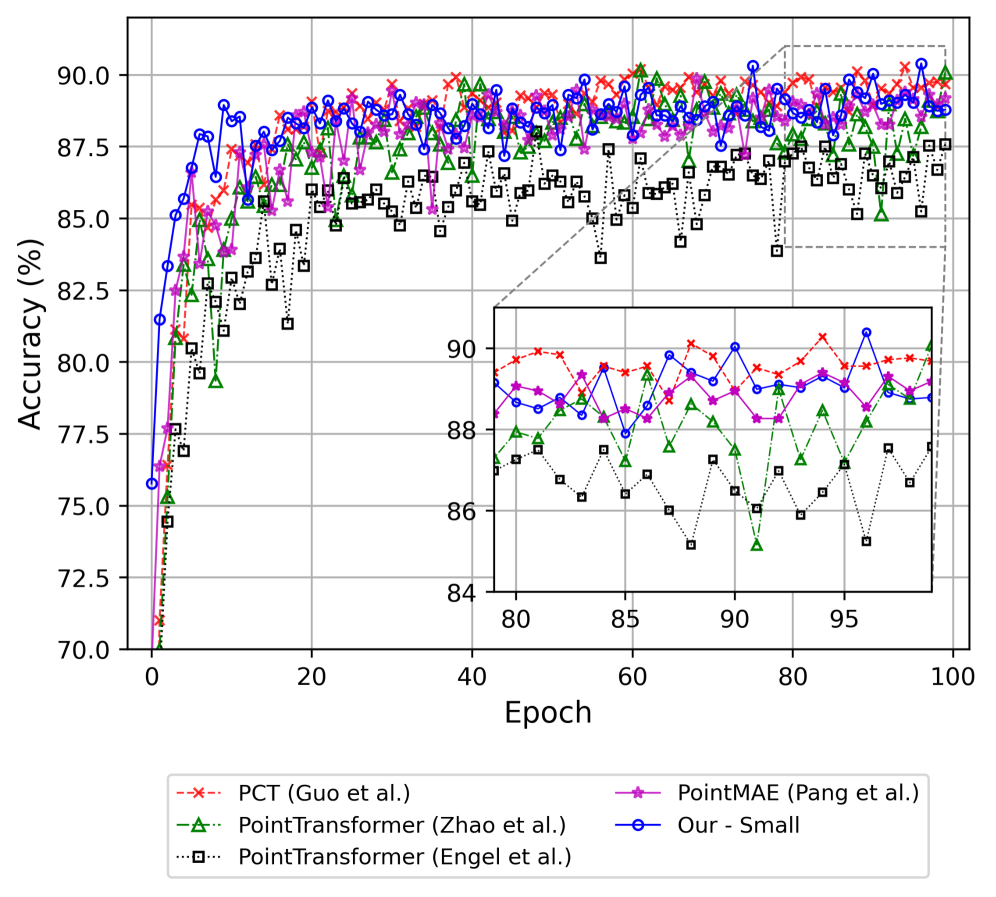

- Classification Accuracy: On the

ModelNet40dataset, Pointy recorded an 89.3% classification accuracy for 2048 input points after just 30 epochs of training under standardized conditions.

Figure 2: (a) ModelNet40


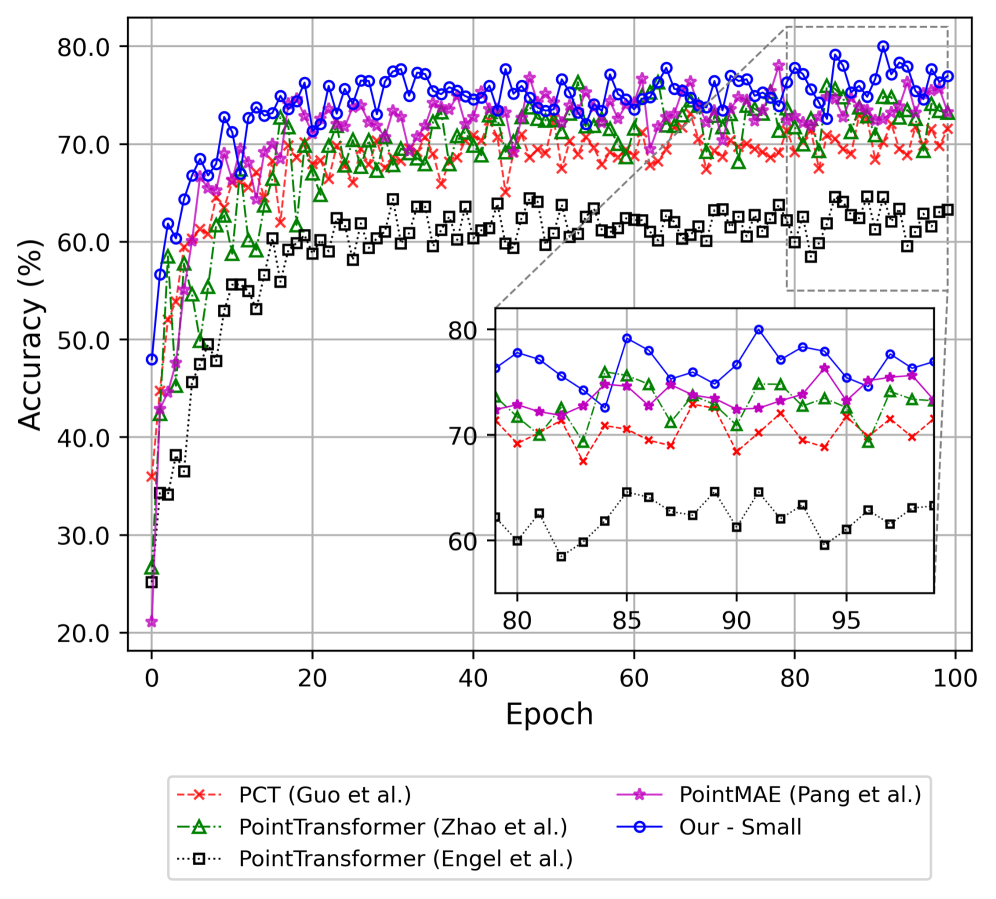

Figure 3: (b) ScanObjectNN


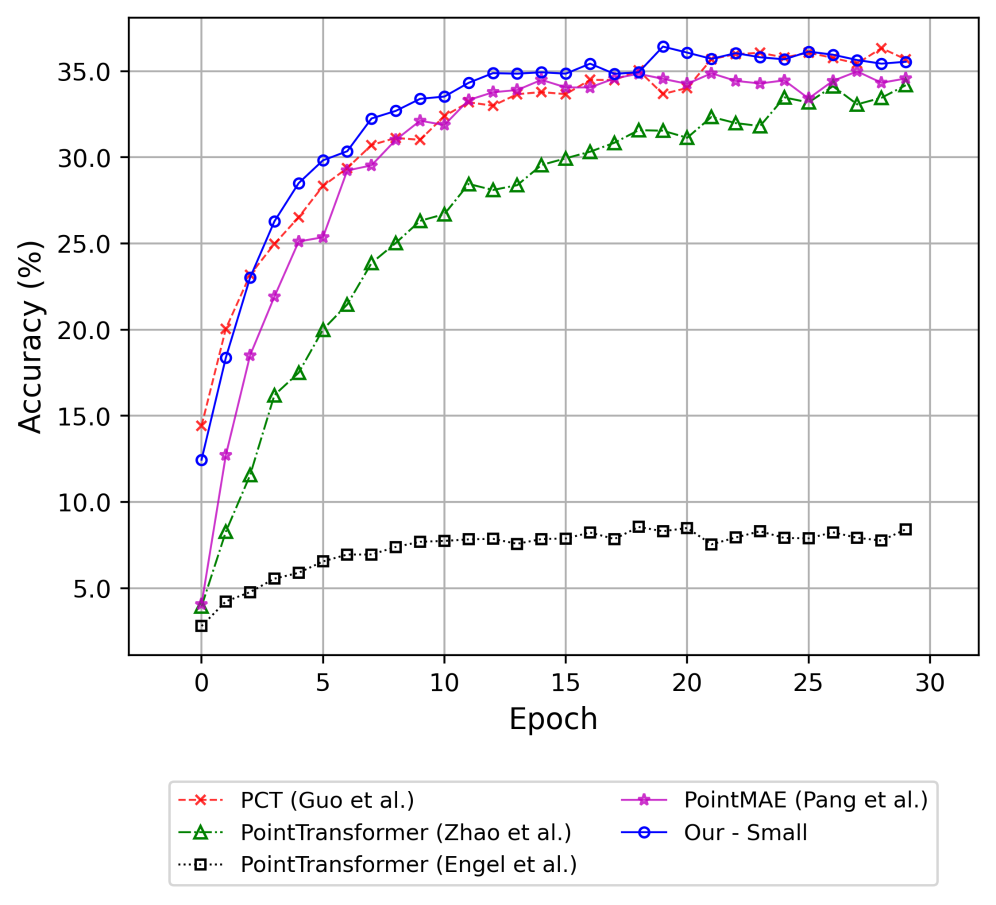

Figure 4: (c) Objaverse-LVIS


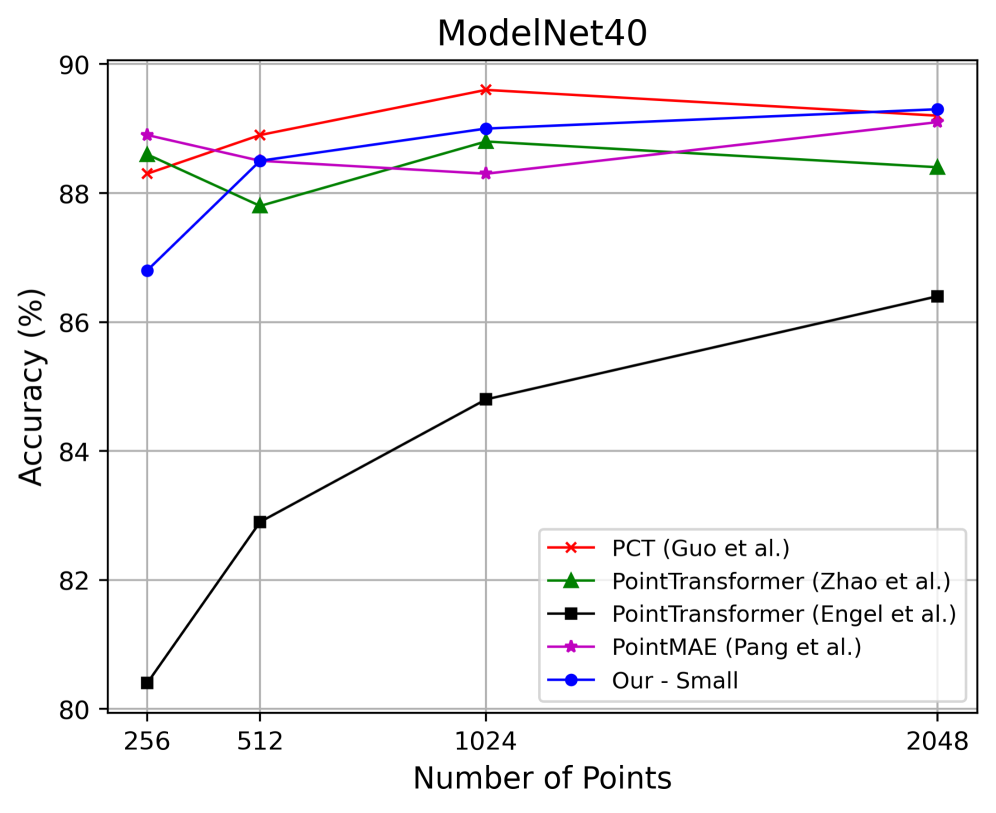

While some models, like PCT, demonstrate superior performance at lower point cloud sizes (e.g., 256-1024 points, as illustrated in Figure 5), Pointy remains highly competitive, particularly as the point count increases, showcasing robust overall performance with its streamlined design.

Figure 5: Classification accuracy on ModelNet40 as a function of input point cloud size. Models were trained for 30 epochs under identical conditions, with results showing the peak accuracy achieved. While PCT demonstrates superior performance in the 256-1024 point range, Pointy achieves competitive results and attains 89.3% for 2048 points.
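To run a similar comparison on your own data, one simple approach is to subsample each cloud to several sizes and score a classifier at each size. The sketch below assumes uniform random subsampling, which may differ from the paper's protocol; `subsample`, `accuracy_by_size`, and `dummy_classifier` are hypothetical helpers, and the classifier is a placeholder rather than Pointy itself.

```python
import numpy as np


def subsample(points, num_points, rng):
    """Uniformly pick `num_points` points without replacement."""
    idx = rng.choice(points.shape[0], size=num_points, replace=False)
    return points[idx]


def accuracy_by_size(classify, clouds, labels, sizes, seed=0):
    """Return {size: accuracy} for a callable mapping a cloud to a label."""
    rng = np.random.default_rng(seed)
    targets = np.array(labels)
    results = {}
    for n in sizes:
        preds = np.array([classify(subsample(c, n, rng)) for c in clouds])
        results[n] = float(np.mean(preds == targets))
    return results


def dummy_classifier(cloud):
    """Placeholder model: always predicts class 0."""
    return 0


rng = np.random.default_rng(0)
clouds = [rng.normal(size=(2048, 3)) for _ in range(4)]  # synthetic clouds
labels = [0, 1, 0, 0]

results = accuracy_by_size(dummy_classifier, clouds, labels, [256, 512, 1024, 2048])
print(results)
```

Swapping `dummy_classifier` for a real model's prediction function gives an accuracy-versus-point-count curve for your own sensor data.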


Why Pointy Matters for Your Drone Projects

This research bears directly on the future of autonomous drones. If Pointy's results carry over to onboard hardware, you could equip a drone with sophisticated 3D perception without a supercomputer onboard or an endless, costly supply of training data. Consider the implications:

- Smarter, Lighter Drones: A reduced computational load allows for smaller, lighter, and more power-efficient onboard processors. This directly translates to extended flight times, increased payload capacity, and lower overall drone costs.

- Enhanced Autonomy: Improved 3D perception enables more reliable obstacle avoidance, precise navigation in GPS-denied environments, and accurate object recognition for critical tasks like inspection or delivery.

- Faster Development: Requiring less data for training makes it quicker and simpler for developers and researchers to adapt these models to specific drone applications, even with limited custom datasets.

- Edge AI for Drones: This innovation brings advanced AI capabilities from the cloud directly to the drone's 'edge,' facilitating real-time decision-making where low latency is paramount.

Where Pointy Still Needs to Grow

While Pointy represents a significant leap forward, it's crucial to acknowledge its current limitations. The model, like many perception systems, excels at understanding the 3D environment but doesn't inherently handle acting within it. Real-world drone deployment introduces several complexities not fully addressed by this work:

- Dynamic Environments: The benchmarks reported here (ModelNet40, ScanObjectNN, Objaverse-LVIS) are object-level datasets and might not fully capture the chaotic, ever-changing nature of real-world drone operations, including moving obstacles, fluctuating lighting conditions, and sensor noise.

- Generalization to Novel Objects: Despite its efficiency, Pointy's ability to generalize to entirely new objects or heavily occluded scenes encountered in unpredictable environments will require further rigorous testing.

- Sensor Integration: The paper primarily focuses on point cloud processing. Integrating this capability with other sensor modalities, such as thermal imaging or optical flow, will be the next logical step towards a more complete drone perception system.

- Beyond Perception: Pointy provides the 'eyes,' but a drone still requires the 'brain' to interpret this visual information for complex decision-making and robust control, particularly in safety-critical applications.

Getting Started: DIY Feasibility

For builders and hobbyists, there's good news: the authors have made Pointy remarkably accessible. The full implementation, including code, pre-trained models, and training protocols, is openly available on GitHub. If you have access to a modern GPU (such as an NVIDIA RTX series card), you can readily experiment with this architecture. This open-source release significantly lowers the barrier to entry for integrating advanced 3D perception into your own drone projects or exploring its capabilities firsthand.

The Bigger Picture: Beyond Just Seeing

Pointy's efficiency in 3D perception lays a foundation for a broader scope of autonomous capabilities. Once a drone can reliably 'see' and interpret its world in three dimensions, the next challenges shift towards understanding environmental dynamics, predicting changes, and making proactive decisions. This work could complement other recent research across robotics and AI:

- Action Reasoning: Papers like "DynVLA: Learning World Dynamics for Action Reasoning in Autonomous Driving" by Shang et al. (https://arxiv.org/abs/2603.11041) focus on predicting environmental changes and determining appropriate actions. Pointy could supply DynVLA with the precise 3D input needed for effective reasoning, allowing drones to not just perceive, but to anticipate.

- Reliable Control: "PPGuide: Steering Diffusion Policies with Performance Predictive Guidance" by Wang et al. (https://arxiv.org/abs/2603.10980) explores methods to ensure robotic actions are reliable and error-free. Pointy's enhanced perception, combined with DynVLA's reasoning, would greatly benefit from PPGuide's framework for executing actions safely and robustly in dynamic drone operations.

- Physical Interaction: Research such as "Learning Adaptive Force Control for Contact-Rich Sample Scraping with Heterogeneous Materials" by Cetin et al. (https://arxiv.org/abs/2603.10979) demonstrates drones moving beyond passive observation to active interaction. With Pointy enabling precise 3D understanding, drones could perform complex, contact-rich tasks like environmental sampling or intricate infrastructure manipulation.

- Advanced Sensing: And it's not solely about geometry. "Neural Field Thermal Tomography" by Zhong et al. (https://arxiv.org/abs/2603.11045) introduces a method for 3D reconstruction of material properties using thermal data. This expands the definition of '3D vision' for drones, allowing them to infer hidden material characteristics for non-destructive evaluation and opening up high-value inspection applications.

Pointy offers a compelling path toward more capable, autonomous drones by making advanced 3D perception practical for real-world deployment. This work is not just about refining models; it's about empowering a new generation of drone applications by making high-performance AI accessible.

Paper Details

Title: Pointy - A Lightweight Transformer for Point Cloud Foundation Models

Authors: Konrad Szafer, Marek Kraft, Dominik Belter

Published: March 2026

arXiv: 2603.10963

Related Papers:

- DynVLA: Learning World Dynamics for Action Reasoning in Autonomous Driving

- PPGuide: Steering Diffusion Policies with Performance Predictive Guidance

- Learning Adaptive Force Control for Contact-Rich Sample Scraping with Heterogeneous Materials

- Neural Field Thermal Tomography: A Differentiable Physics Framework for Non-Destructive Evaluation

Written by Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.