Smart Drones: Never Lost, Even in Featureless Voids

New research allows drones to autonomously explore challenging, feature-sparse environments by actively seeking out visual cues and optimizing their flight path to maintain reliable localization and mapping.

TL;DR: Autonomous drones typically struggle in environments lacking visual features, leading to localization errors and failed missions. This new approach enables drones to actively seek out and prioritize visually rich areas and optimize their camera's orientation to maintain stable tracking, resulting in significantly more reliable exploration and mapping in tough conditions.

Navigating the Blank Canvas

For anyone building or operating autonomous drones, the challenge of navigating vast, empty spaces is a familiar headache. Think of an industrial warehouse with blank walls, a long, featureless tunnel, or even a sterile laboratory environment. These are places where a drone needs to go, but current autonomous exploration methods often hit a wall – literally, in some cases. This paper, "Perception-Aware Autonomous Exploration in Feature-Limited Environments," tackles this head-on, presenting a robust framework that allows stereo-equipped UAVs to not just explore, but to think about how they're exploring, ensuring they don't lose their way even when there's seemingly nothing to 'see'.

The Unseen Pitfalls of Blind Exploration

Most autonomous exploration strategies prioritize one thing: covering as much unknown space as possible, as quickly as possible. This seems logical, but it overlooks a critical dependency: the drone's ability to know where it is. Visual-inertial odometry (VIO), the backbone of many drone navigation systems, relies heavily on tracking distinct visual features in the environment. When these features are sparse or absent – like a smooth, untextured wall – VIO performance degrades rapidly.

This degradation isn't just an inconvenience; it's a mission-killer. Odometry drift accumulates, maps become corrupted, and the drone can get lost, leading to mission failure or even crashes. Most coverage-driven methods don't account for this visual dependency, essentially sending drones blind into areas where their primary navigation sensor is hobbled. The core problem isn't just where to go, but how to get there without losing reliable localization.

Guiding Light: How Drones Learn to 'See'

The researchers propose a hierarchical perception-aware exploration framework that explicitly links exploration progress with the drone's ability to maintain stable visual feature tracking. It's a two-pronged attack on the feature-limited problem, designed to keep the drone's VIO robust.

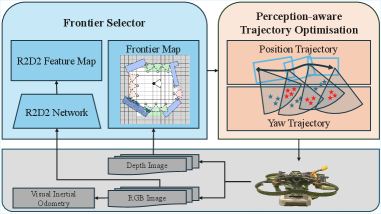

Figure 2: The perception-aware exploration framework consists of two main components: a frontier selector and perception-aware trajectory optimization.

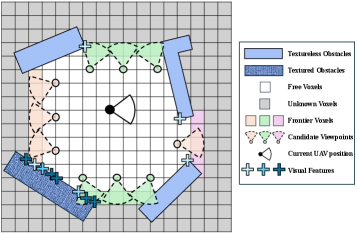

First, there's a frontier selector. Instead of just picking the closest unknown area (a 'frontier'), this system evaluates candidate frontiers based on their expected feature quality. It builds a global feature map, identifying areas with higher texture and more stable visual cues. When deciding where to go next, it prioritizes subgoals that offer better visual information, even if they're not the absolute closest unexplored point. This choice keeps the drone heading towards areas where it is more likely to localize reliably.

Figure 3: The frontier selector evaluates candidate viewpoints based on expected feature quality (blue intensity), preferring locations with stronger visual support even if farther away.
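To make the trade-off concrete, here is a minimal Python sketch of a feature-aware frontier score. It is not the paper's implementation: the `Frontier` class, the `(x, y, quality)` feature tuples, the range cutoff, and the weights `w_quality` and `w_distance` are all assumptions chosen for illustration. The only idea it borrows is that a frontier with stronger expected visual support can beat a closer one.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Frontier:
    name: str
    position: Tuple[float, float]  # (x, y) in metres


# Hypothetical feature-map entry: (x, y, quality), with quality >= 0.
Feature = Tuple[float, float, float]


def expected_feature_quality(viewpoint: Tuple[float, float],
                             features: List[Feature],
                             max_range: float = 5.0) -> float:
    """Assumed proxy: sum the quality of mapped features within sensing range."""
    total = 0.0
    for fx, fy, quality in features:
        if math.hypot(fx - viewpoint[0], fy - viewpoint[1]) <= max_range:
            total += quality
    return total


def select_frontier(drone_xy: Tuple[float, float],
                    frontiers: List[Frontier],
                    features: List[Feature],
                    w_quality: float = 1.0,
                    w_distance: float = 0.5) -> Frontier:
    """Pick the frontier with the best quality-minus-travel-cost score."""
    def score(frontier: Frontier) -> float:
        distance = math.hypot(frontier.position[0] - drone_xy[0],
                              frontier.position[1] - drone_xy[1])
        quality = expected_feature_quality(frontier.position, features)
        return w_quality * quality - w_distance * distance

    return max(frontiers, key=score)


# Toy scene: two textured landmarks sit near the far frontier only.
features = [(7.0, 1.0, 2.0), (8.0, 0.0, 2.0)]
frontiers = [
    Frontier("near_blank_wall", (2.0, 0.0)),
    Frontier("far_textured_corner", (6.0, 0.0)),
]
best = select_frontier((0.0, 0.0), frontiers, features)
print(best.name)  # far_textured_corner
```

Setting `w_quality=0.0` turns this back into a plain nearest-frontier picker, which is a handy way to compare the two behaviours on the same map.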

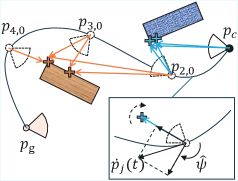

Second, and equally crucial, is the perception-aware trajectory optimization. Once a path is chosen, the drone doesn't just fly straight. It actively optimizes its continuous yaw trajectory. This means the drone can turn its camera to keep known, high-quality features within its field of view, even as it moves. By compensating for relative angular velocity, it maintains stable feature tracks, preventing the blurring and loss of features that often plague VIO in motion. This is a subtle but powerful enhancement, ensuring that even if features are scarce, the ones it has are used optimally.

Figure 4: The yaw angle trajectory optimization dynamically adjusts the drone's orientation to maintain covisible feature sets, compensating for relative angular velocity to keep features in view.
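The yaw idea can also be sketched in a few lines. The paper optimizes a continuous yaw trajectory; the version below is a deliberately simplified stand-in that discretizes yaw into bins and uses dynamic programming to pick, at each waypoint, a heading that keeps mapped features inside the camera's field of view while penalizing large yaw changes. The function names, the 2D geometry, the bin count, and the `w_smooth` weight are all assumptions for illustration, and the smoothness penalty is only a rough proxy for the angular-velocity compensation described above.

```python
import math


def wrap(angle):
    """Wrap an angle to the interval [-pi, pi)."""
    return (angle + math.pi) % (2 * math.pi) - math.pi


def visible_count(pos, yaw, features, half_fov):
    """Count features whose bearing lies within the horizontal field of view."""
    count = 0
    for fx, fy in features:
        bearing = math.atan2(fy - pos[1], fx - pos[0])
        if abs(wrap(bearing - yaw)) <= half_fov:
            count += 1
    return count


def plan_yaw(waypoints, features, n_bins=16,
             half_fov=math.radians(45), w_smooth=0.5):
    """Choose one yaw per waypoint: maximize visible features minus yaw change."""
    yaws = [wrap(2 * math.pi * k / n_bins) for k in range(n_bins)]
    # best[k] = best accumulated score of any yaw sequence ending in bin k.
    best = [visible_count(waypoints[0], y, features, half_fov) for y in yaws]
    back = []  # back[i][k] = predecessor bin of k when arriving at waypoint i + 1
    for pos in waypoints[1:]:
        new_best, pointers = [], []
        for y in yaws:
            def arrival(j, y=y):
                return best[j] - w_smooth * abs(wrap(y - yaws[j]))
            prev = max(range(n_bins), key=arrival)
            reward = visible_count(pos, y, features, half_fov)
            new_best.append(arrival(prev) + reward)
            pointers.append(prev)
        best = new_best
        back.append(pointers)
    # Backtrack from the best final bin to recover the yaw sequence.
    k = max(range(n_bins), key=lambda j: best[j])
    path = [k]
    for pointers in reversed(back):
        k = pointers[k]
        path.append(k)
    path.reverse()
    return [yaws[k] for k in path]


# Toy flight: fly along the x-axis past a small textured patch on the left.
waypoints = [(0.0, 0.0), (2.0, 0.0), (4.0, 0.0), (6.0, 0.0), (8.0, 0.0)]
features = [(4.0, 1.0), (4.0, 1.2), (4.0, 0.8)]
yaw_plan = plan_yaw(waypoints, features)
for wp, yaw in zip(waypoints, yaw_plan):
    seen = visible_count(wp, yaw, features, math.radians(45))
    print(wp, round(math.degrees(yaw), 1), seen)
```

In this toy run the planned heading starts out pointing ahead of the drone and swings backwards as it passes the patch, so the features stay in view for the whole flight. A real system would need 3D geometry, yaw-rate limits, and a continuous optimizer rather than fixed bins.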

The Numbers Don't Lie: Better Tracking, Deeper Exploration

The researchers put their method to the test in both simulated environments with varying texture levels (Figure 5 in the paper) and real-world indoor scenarios featuring largely textureless walls. The results are compelling:

- Superior Feature Tracking: The system consistently maintained a higher number of tracked visual features per frame compared to baselines that ignored feature quality or didn't optimize yaw. This is evident in Figure 7, showing significantly more tracked features throughout the exploration progress.

Figure 7: This graph clearly shows the proposed method (Perception-Aware) maintaining a higher count of tracked visual features throughout the exploration process, indicating more robust VIO.

- Reduced Odometry Drift: By keeping VIO stable, the accumulation of localization errors was significantly curtailed.

- Increased Coverage: The perception-aware drone achieved, on average, a 30% higher coverage of the environment before its odometry error exceeded specified thresholds. This means it could explore more space reliably before getting lost.
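The coverage metric in the last bullet is easy to misread, so here is one way it could be computed from a flight log. This is an assumed reading of "coverage before odometry error exceeds a threshold", not the paper's evaluation code, and the two logs below are made-up numbers for illustration only, not results from the paper.

```python
def coverage_before_drift(log, error_threshold):
    """Return the highest coverage reached before odometry error first exceeds the threshold.

    log: time-ordered list of (coverage_fraction, odometry_error_m) samples.
    """
    reached = 0.0
    for coverage, error in log:
        if error > error_threshold:
            break  # localization is no longer trusted from this sample on
        reached = max(reached, coverage)
    return reached


# Synthetic logs (illustrative values only).
baseline_log = [(0.10, 0.02), (0.30, 0.10), (0.50, 0.35), (0.70, 0.60)]
aware_log = [(0.10, 0.02), (0.30, 0.05), (0.50, 0.12), (0.65, 0.28), (0.80, 0.40)]

threshold_m = 0.3  # hypothetical drift budget in metres
print(coverage_before_drift(baseline_log, threshold_m))
print(coverage_before_drift(aware_log, threshold_m))
```

The point of measuring it this way is that raw coverage alone rewards a drone that keeps mapping after it is already lost; cutting the log at the drift threshold only counts the space explored while the map can still be trusted.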

In real-world experiments, the drone successfully explored rooms with mostly blank walls, actively turning to keep an eye on textured obstacles or corners to ensure reliable state estimation. See Figure 10 for a visual of this in action.

Figure 10: Real-world demonstration shows the drone's trajectory and camera orientations (a), its active tracking of features (b), and the global feature map guiding its perception-aware exploration (c).

Beyond the Lab: Real-World Impact

This research is a significant step forward for autonomous drone capabilities. For industrial applications, such as inspecting large, empty warehouses, tunnels, or infrastructure, this means drones can perform complex mapping and monitoring tasks with far greater reliability. Search and rescue operations in visually challenging environments – like collapsed buildings or barren landscapes – could benefit immensely from a drone that won't easily lose its bearings. It expands the operational envelope for current VIO-dependent UAVs, making them viable in a whole new class of difficult environments.

The Road Ahead: Where This Approach Stumbles

While promising, this method isn't without its limitations. The primary one is its inherent reliance on visual features. If an environment is truly featureless or completely dark, or if surfaces are perfectly uniform and reflective, even the most perception-aware system will struggle. The approach also currently assumes a static environment for its global feature map; dynamic changes in the scene could pose challenges for map maintenance.

Furthermore, continuously optimizing yaw and maintaining a global feature map adds computational overhead. For smaller drones with limited onboard processing power (e.g., lower-end NVIDIA Jetson variants), balancing that extra computation against power draw and flight time remains a critical engineering challenge. The paper primarily demonstrates the method with stereo cameras; integrating other sensor modalities like LiDAR might offer even greater robustness, especially in low-light or textureless conditions, though feature quality would have to be assessed differently.

Can You Build This?

Directly replicating this research for a hobbyist might be a stretch, as it involves advanced algorithms for state estimation, mapping, and sophisticated path/yaw planning. You'd need a drone equipped with a high-quality stereo camera and a powerful onboard computing platform capable of running complex VIO algorithms, map maintenance, and real-time trajectory optimization – likely something like an NVIDIA Jetson Orin Nano or NX.

The underlying concepts, however, are invaluable. For advanced builders and university projects using ROS, the principles of prioritizing visually rich subgoals and actively stabilizing feature tracks can inspire custom navigation stacks. While the specific code might not be open-source yet, the detailed methodology provides a roadmap for those looking to push the boundaries of drone autonomy in challenging environments.

Building on Giants: Related Innovations

The ability to explore intelligently in feature-limited environments doesn't exist in a vacuum. It relies on fundamental advancements across robotics. For instance, a drone's stable flight is paramount for clear sensor data. Papers like "Optimal control of differentially flat underactuated planar robots in the perspective of oscillation mitigation" (arXiv:2603.15528) highlight strategies for mitigating oscillations in underactuated systems. Stable motion directly translates to cleaner VIO inputs, making the job of feature tracking and odometry even more reliable, especially when features are scarce.

As drones become more sophisticated, they'll move beyond simple exploration to higher-level understanding. If a drone uses Vision-Language Models (VLMs) to interpret its surroundings, ensuring those interpretations are trustworthy becomes vital. "Anatomy of a Lie: A Multi-Stage Diagnostic Framework for Tracing Hallucinations in Vision-Language Models" (arXiv:2603.15557) addresses the critical issue of VLM 'hallucinations.' A perception-aware drone needs to not only 'see' reliably but also 'understand' reliably, adding another layer of trust to its autonomous operations.

Finally, intelligent exploration is often a precursor to interaction. Once a drone can confidently navigate and map a complex, featureless space, the next logical step is to perform tasks within it. This is where research like "Towards Generalizable Robotic Manipulation in Dynamic Environments" (arXiv:2603.15620) comes into play. It introduces Vision-Language-Action (VLA) models for robotic manipulation in dynamic environments, extending the story from a drone that can explore intelligently to one that can also understand and interact with a changing world after its initial reconnaissance.

Paper Details

Title: Perception-Aware Autonomous Exploration in Feature-Limited Environments

Authors: Moji Shi, Rajitha de Silva, Hang Yu, Riccardo Polvara, Marija Popović

Published: March 2026 (based on arXiv ID)

arXiv: 2603.15605

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.