TETO: Event Cameras Finally Master Real-World Motion for Drones

Researchers developed TETO, a new framework enabling event cameras to achieve state-of-the-art motion estimation and frame interpolation using minimal real-world data, overcoming the long-standing sim-to-real gap.

TL;DR: TETO is a new framework that teaches event cameras to track motion and predict optical flow with state-of-the-art accuracy, using only 25 minutes of unannotated real-world video. This bypasses the typical reliance on flawed synthetic data, making event cameras far more practical for real-world drone applications.

Unlocking Superhuman Perception for Drones

Event cameras are fascinating sensors. Unlike traditional cameras that capture frames, they record individual pixel brightness changes with microsecond precision. This design gives them a natural advantage for capturing rapid motion and performing well in difficult lighting – precisely what drones require. The challenge, however, has been teaching them to "see" motion accurately in the real world. This often meant relying on huge, costly synthetic datasets that simply don't reflect reality. A new paper introducing TETO changes this, making accurate motion perception from event cameras genuinely practical.

The Real-World Motion Problem

For years, the potential of event cameras for high-speed, low-latency motion perception was evident. However, converting those raw "events" into useful motion data, such as optical flow or object trajectories, posed a significant deep learning challenge. Most existing solutions relied heavily on massive synthetic datasets (like those generated by V2E or Voltmeter). While synthetic data offers perfect ground truth, it comes with a major flaw: the "sim-to-real gap."

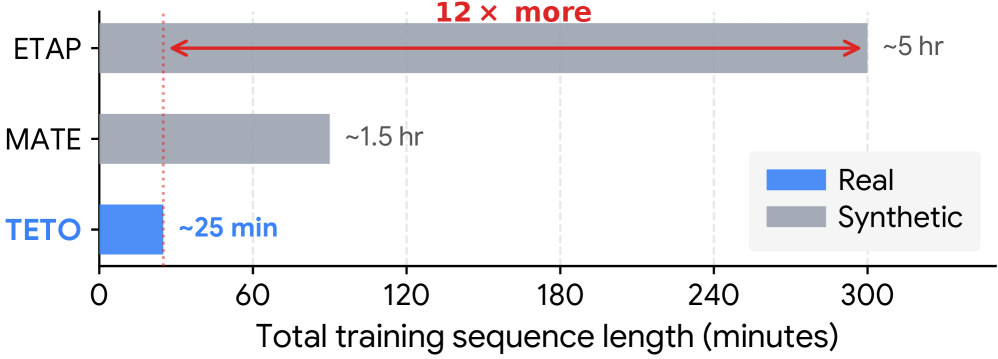

Figure 1: Training data scale of event-based trackers.

This gap is more than a minor inconvenience; it's a fundamental problem. Synthetic events often don't behave like real-world events. They can exhibit unrealistic periodic artifacts or different statistical distributions, as Figure 2 clearly shows. Training models on this flawed data results in systems that perform poorly when faced with the unpredictable, noisy reality of actual drone flight. A drone navigation system built on data that doesn't quite reflect the real world is a recipe for instability and failure.

![Real event vs. synthetic event analysis. (a) Inter-Event Interval (IEI) distributions show that real events concentrate in short intervals with rapid decay, while synthetic events exhibit long tails and periodic artifacts. (b) The appearance of real and synthetic events differs substantially, with synthetic events from V2E [hu2021v2e] and Voltmeter [lin2022dvs] exhibiting artifacts not present in real-world recordings.](https://qawnehlcoileybzacnvr.supabase.co/storage/v1/object/public/article-covers/figures/teto-event-cameras-finally-master-real-world-motion-for-drones-mn5r9ck9/fig-1.png)

Figure 2: Real event vs. synthetic event analysis. (a) Inter-Event Interval (IEI) distributions show that real events concentrate in short intervals with rapid decay, while synthetic events exhibit long tails and periodic artifacts. (b) The appearance of real and synthetic events differs substantially, with synthetic events from V2E [hu2021v2e] and Voltmeter [lin2022dvs] exhibiting artifacts not present in real-world recordings.
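For readers who want to run the comparison in panel (a) on their own recordings, the inter-event interval is simple to compute. The sketch below assumes events arrive as `(t_us, x, y, polarity)` tuples and that intervals are measured per pixel. Both are illustrative assumptions, since the article does not say how the paper defines them.

```python
from collections import defaultdict


def inter_event_intervals(events):
    """Return per-pixel gaps (in microseconds) between consecutive events.

    `events` holds (t_us, x, y, polarity) tuples. This layout is an
    assumption for illustration, not a format defined by the paper.
    """
    last_seen = {}
    intervals = []
    for t_us, x, y, _polarity in sorted(events):
        key = (x, y)
        if key in last_seen:
            intervals.append(t_us - last_seen[key])
        last_seen[key] = t_us
    return intervals


def histogram(intervals, bin_width_us):
    """Count intervals per bin; keys are bin indices (interval // bin width)."""
    counts = defaultdict(int)
    for dt in intervals:
        counts[dt // bin_width_us] += 1
    return dict(sorted(counts.items()))


# Tiny hand-made stream: one busy pixel and one pixel with a long gap.
events = [
    (0, 5, 5, 1), (40, 5, 5, 1), (90, 5, 5, -1),
    (10, 7, 2, 1), (1010, 7, 2, -1),
]
ieis = inter_event_intervals(events)
print(ieis)                  # [40, 50, 1000]
print(histogram(ieis, 100))  # {0: 2, 10: 1}
```

Plotting such histograms for a real recording and a simulated one side by side is one way to look for the long tails and periodic spikes the figure describes.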

Moreover, collecting and annotating real-world event camera data for motion estimation is incredibly difficult and time-consuming. This combination of sim-to-real issues and annotation bottlenecks has prevented event cameras from reaching their full potential in real-world applications like autonomous drones.

How TETO Teaches Event Cameras to See

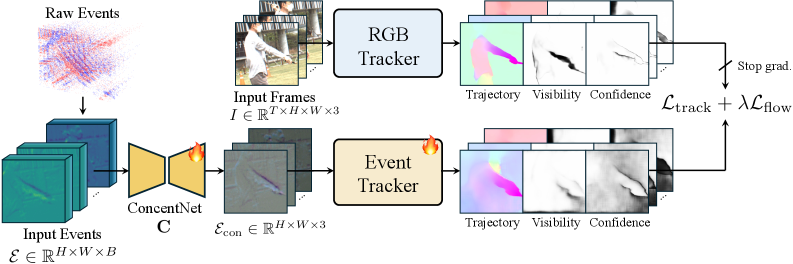

The TETO framework, short for "Tracking Events with Teacher Observation," directly addresses this issue with an ingenious teacher-student learning approach. The core idea is to harness the robust tracking abilities of a pre-trained RGB camera tracker (the "teacher") to educate an event camera network (the "student") on how to interpret motion. Crucially, it accomplishes this using only about 25 minutes of unannotated real-world event camera recordings.

Here's how it works:

- Teacher's Guidance: A well-established

RGBtracker, already proficient in recognizing motion patterns from vastRGBdatasets, generates "pseudo-labels" for motion (point trajectories and optical flow) based on synchronizedRGBframes. - Event Condensation: The raw data from the event camera (streams of individual events) is fed into a "concentration network." This network aggregates multi-scale events into a more manageable,

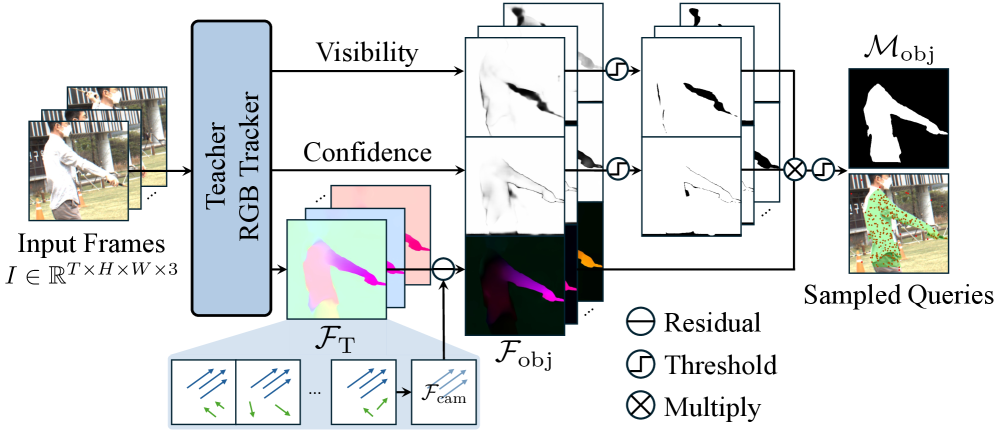

3-channelrepresentation that the student network can process. - Intelligent Sampling: This is where TETO truly shines. Drones are constantly moving, and this "ego-motion" can easily dominate the event stream. To prevent the student from exclusively learning ego-motion, TETO employs a "motion-aware data curation and query sampling strategy." It identifies independently moving objects by estimating global ego-motion via

RANSACand then calculating residual flow. Queries are then oversampled from these independently moving regions, ensuring the student learns to track actual objects, not just the drone's own movement.

Figure 3: Object motion query sampling. Given teacher-predicted optical flow, we estimate a global affine model via RANSAC and compute residual flow to identify independently moving regions. Queries are oversampled from these regions to prevent bias toward dominant ego-motion patterns.
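The sampling idea in Figure 3 can be sketched in plain Python. This is a minimal illustration, not the authors' code: the flow samples are `(x, y, u, v)` tuples, and the threshold, iteration count, and `boost` weight are hypothetical values chosen for the toy data.

```python
import random


def fit_affine(three):
    """Fit u = a*x + b*y + c and v = d*x + e*y + f through exactly 3 samples."""
    (x1, y1, u1, v1), (x2, y2, u2, v2), (x3, y3, u3, v3) = three
    det = x1 * (y2 - y3) - y1 * (x2 - x3) + (x2 * y3 - x3 * y2)
    if abs(det) < 1e-9:
        return None  # collinear points do not define a unique affine model

    def solve(r1, r2, r3):  # Cramer's rule for one flow component
        a = (r1 * (y2 - y3) - y1 * (r2 - r3) + (r2 * y3 - r3 * y2)) / det
        b = (x1 * (r2 - r3) - r1 * (x2 - x3) + (x2 * r3 - x3 * r2)) / det
        c = (x1 * (y2 * r3 - y3 * r2) - y1 * (x2 * r3 - x3 * r2)
             + r1 * (x2 * y3 - x3 * y2)) / det
        return a, b, c

    return solve(u1, u2, u3), solve(v1, v2, v3)


def residual(model, sample):
    """Magnitude of the flow left over after removing the global model."""
    (a, b, c), (d, e, f) = model
    x, y, u, v = sample
    return ((u - (a * x + b * y + c)) ** 2 + (v - (d * x + e * y + f)) ** 2) ** 0.5


def ransac_affine(samples, iters=200, thresh=0.5, seed=0):
    """Keep the 3-point affine fit that explains the most flow samples."""
    rng = random.Random(seed)
    best_model, best_inliers = None, -1
    for _ in range(iters):
        model = fit_affine(rng.sample(samples, 3))
        if model is None:
            continue
        inliers = sum(residual(model, s) < thresh for s in samples)
        if inliers > best_inliers:
            best_model, best_inliers = model, inliers
    return best_model


def sample_queries(samples, model, n, thresh=0.5, boost=10.0, seed=0):
    """Draw n queries, weighting high-residual (independently moving) points."""
    rng = random.Random(seed)
    weights = [boost if residual(model, s) >= thresh else 1.0 for s in samples]
    return rng.choices(samples, weights=weights, k=n)


# Toy 10x10 flow field: smooth ego-motion plus a 2x2 independently moving patch.
samples = []
for y in range(10):
    for x in range(10):
        if 2 <= x <= 3 and 2 <= y <= 3:
            samples.append((x, y, 5.0, 5.0))
        else:
            samples.append((x, y, 1.0 + 0.01 * x, -0.5 + 0.02 * y))

model = ransac_affine(samples)
moving = [s for s in samples if residual(model, s) >= 0.5]
queries = sample_queries(samples, model, n=1000)
share = sum(q in moving for q in queries) / len(queries)
print(len(moving), round(share, 2))
```

In this toy scene the moving patch covers 4% of the pixels, yet it receives a much larger share of the queries. That is the bias correction the caption describes.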

Figure 4: Overall architecture. The concentration network aggregates multi-scale events into a 3-channel representation. Pseudo-labels for tracking and optical flow are provided by the teacher tracker.
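To give a feel for what a 3-channel event representation might look like, here is a hand-crafted stand-in. It is not the learned concentration network from the paper. It assumes "multi-scale" refers to temporal windows of different lengths, which the article does not specify, and the window sizes and the function name `three_scale_frame` are hypothetical.

```python
def three_scale_frame(events, width, height, t_end_us,
                      windows_us=(1_000, 10_000, 100_000)):
    """Accumulate signed polarity into one channel per temporal window.

    Assumption: each channel sums the events that fall inside a short,
    medium, or long window ending at t_end_us. The real network learns
    its aggregation; this fixed rule only illustrates the output shape.
    """
    frame = [[[0.0] * width for _ in range(height)] for _ in windows_us]
    for t_us, x, y, polarity in events:
        age = t_end_us - t_us
        if age < 0:
            continue  # event lies after the reference time
        for channel, window in enumerate(windows_us):
            if age < window:
                frame[channel][y][x] += polarity
    return frame


events = [(99_500, 1, 0, 1), (95_000, 1, 0, 1), (50_000, 0, 1, -1)]
frame = three_scale_frame(events, width=2, height=2, t_end_us=100_000)
print(frame[0][0][1], frame[1][0][1], frame[2][1][0])  # 1.0 2.0 -1.0
```

The result is indexed as `frame[channel][y][x]`, so it has the same layout as a 3-channel image and can be fed to networks that expect one.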

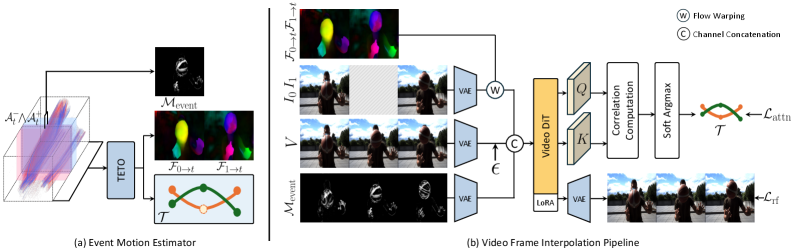

The student network, trained with these pseudo-labels and intelligent sampling, learns to jointly predict both point trajectories and dense optical flow directly from event streams. This motion information is then utilized as "explicit motion priors" to condition a pre-trained video diffusion transformer, enabling high-quality frame interpolation.
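As a rough sketch of what training against pseudo-labels could look like, the snippet below combines a trajectory term and a flow term into one objective. The article does not give the paper's actual loss, so the L1 track error, the end-point flow error, the visibility mask, and the `flow_weight` parameter are all assumptions made for illustration.

```python
import math


def track_loss(student_tracks, teacher_tracks, visible):
    """Mean L1 distance over points the teacher marks as visible (assumed mask)."""
    total, count = 0.0, 0
    for s_track, t_track, vis in zip(student_tracks, teacher_tracks, visible):
        for (sx, sy), (tx, ty), is_visible in zip(s_track, t_track, vis):
            if is_visible:
                total += abs(sx - tx) + abs(sy - ty)
                count += 1
    return total / max(count, 1)


def flow_loss(student_flow, teacher_flow):
    """Mean end-point error between two dense flow fields of (u, v) pairs."""
    errors = [
        math.hypot(su - tu, sv - tv)
        for s_row, t_row in zip(student_flow, teacher_flow)
        for (su, sv), (tu, tv) in zip(s_row, t_row)
    ]
    return sum(errors) / len(errors)


def distillation_loss(student, teacher, flow_weight=1.0):
    """Joint objective: student predictions vs. teacher pseudo-labels."""
    return (track_loss(student["tracks"], teacher["tracks"], teacher["visible"])
            + flow_weight * flow_loss(student["flow"], teacher["flow"]))


teacher = {
    "tracks": [[(0.0, 0.0), (1.0, 1.0)]],   # one point over two frames
    "visible": [[True, False]],             # second frame is occluded
    "flow": [[(1.0, 0.0), (0.0, 0.0)]],     # 1x2 flow field
}
student = {
    "tracks": [[(0.5, 0.0), (9.0, 9.0)]],
    "flow": [[(1.0, 0.0), (3.0, 4.0)]],
}
print(distillation_loss(student, teacher))  # 0.5 + 2.5 = 3.0
```

No human annotation appears anywhere in this objective: every target comes from the RGB teacher, which is why unannotated recordings are enough.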

Figure 5: Video frame interpolation architecture. (a) Motion signals from TETO and event motion mask construction. (b) Overall pipeline with latent-space flow warping and trajectory-guided attention supervision.
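Figure 5 mentions latent-space flow warping. The article does not describe the operation further, so the following is only a generic sketch of flow-based warping on a single-channel 2D grid. The function name, the border clamping, and the choice of backward (rather than forward) warping with bilinear sampling are assumptions.

```python
def warp_backward(feature, flow):
    """Sample `feature` at (x + u, y + v) for every output pixel (x, y).

    feature[y][x] is a float and flow[y][x] is a (u, v) pair in pixels.
    Coordinates outside the grid are clamped to the border.
    """
    height, width = len(feature), len(feature[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            u, v = flow[y][x]
            sx = min(max(x + u, 0.0), width - 1)
            sy = min(max(y + v, 0.0), height - 1)
            x0, y0 = int(sx), int(sy)
            x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
            ax, ay = sx - x0, sy - y0
            # Bilinear blend of the four neighbouring values.
            top = feature[y0][x0] * (1 - ax) + feature[y0][x1] * ax
            bottom = feature[y1][x0] * (1 - ax) + feature[y1][x1] * ax
            out[y][x] = top * (1 - ay) + bottom * ay
    return out


feature = [[0.0, 1.0, 2.0],
           [3.0, 4.0, 5.0]]
shift_right = [[(1.0, 0.0)] * 3 for _ in range(2)]
half_pixel = [[(0.5, 0.0)] * 3 for _ in range(2)]
print(warp_backward(feature, shift_right))       # [[1.0, 2.0, 2.0], [4.0, 5.0, 5.0]]
print(warp_backward(feature, half_pixel)[0][0])  # 0.5
```

In a real pipeline the same sampling would run on multi-channel latent tensors on the GPU. The per-pixel loop here only makes the arithmetic visible.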

Impressive Results with Minimal Data

TETO isn't just a clever concept. It reports leading results on point tracking, optical flow, and frame interpolation, despite the small amount of training data.

- Point Tracking: TETO achieves state-of-the-art performance on the EVIMO2 dataset for point tracking, a crucial capability for robust object following and localization.

- Optical Flow: It also sets a new state of the art for optical flow estimation on the DSEC dataset. Accurate optical flow is fundamental for tasks like collision avoidance and visual odometry.

- Data Efficiency: Perhaps the most striking aspect is that it achieves these results using orders of magnitude less training data than previous methods that relied on synthetic datasets. This dramatically reduces the cost and complexity of developing event-based vision systems.

- Frame Interpolation: The high-fidelity motion estimation directly translates to superior frame interpolation quality on the BS-ERGB and HQ-EVFI benchmarks. This means smoother, more realistic video output, even when working with sparse event data.

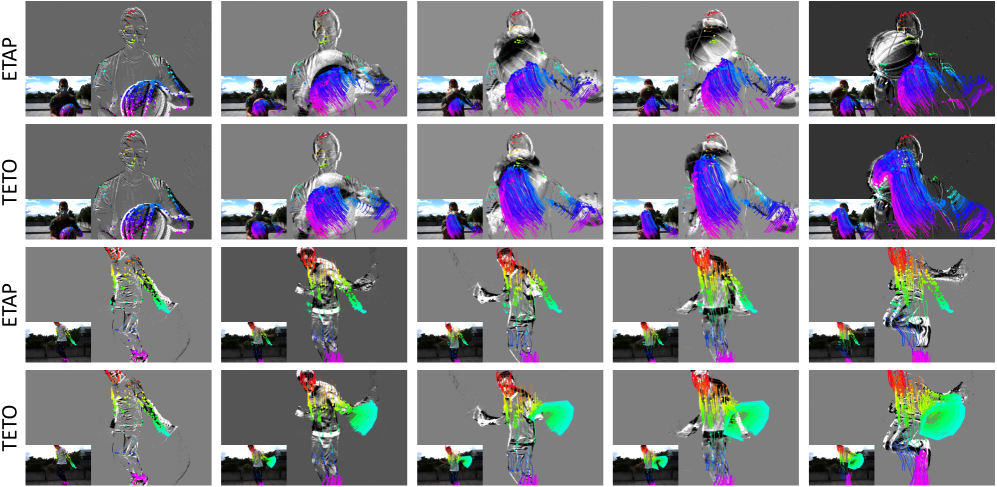

Qualitative examples from the paper (Figures 6, 7, 8, 9) demonstrate TETO's ability to maintain accurate tracks even under challenging conditions such as rapid motion, significant illumination changes, and scenes with multiple moving objects – situations where traditional RGB systems often struggle.

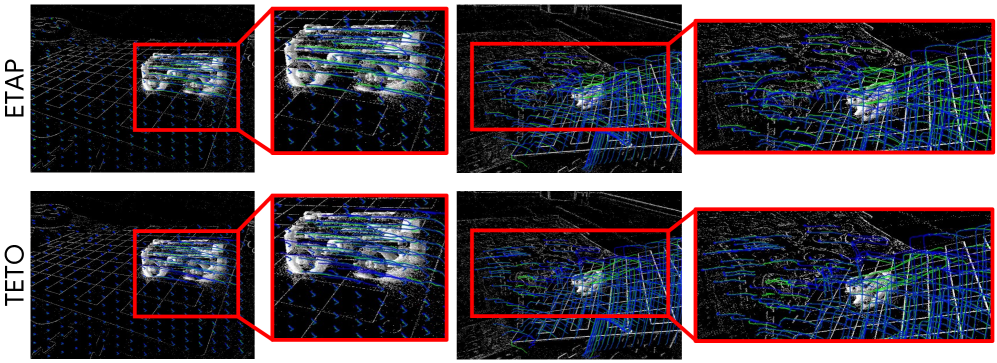

Figure 6: Qualitative Comparison. Ground-truth trajectories are shown in green and model predictions in blue.

Figure 7: Qualitative comparison under dynamic motion.

![Robust tracking in extreme conditions on BS-ERGB (Top) and EVFI-LL (Bottom) [zhang2024sim].](https://qawnehlcoileybzacnvr.supabase.co/storage/v1/object/public/article-covers/figures/teto-event-cameras-finally-master-real-world-motion-for-drones-mn5r9ck9/fig-7.png)

Figure 8: Robust tracking in extreme conditions on BS-ERGB (Top) and EVFI-LL (Bottom) [zhang2024sim].

Figure 9: Qualitative comparison under dynamic motion.

Why This Matters for Drones

For drone enthusiasts, builders, and engineers, TETO represents a significant leap towards equipping autonomous flight with truly superhuman perception.

- Enhanced Autonomy: Consider a drone that can track a small, fast-moving object (like another drone, a bird, or a person) with exceptional precision, even if it rapidly crosses the frame or briefly disappears behind an obstacle. TETO's robust point tracking and optical flow capabilities make this possible for advanced object following, swarm coordination, and dynamic obstacle avoidance.

- Robust Navigation in Any Condition: Event cameras excel where RGB cameras falter: in low light, high dynamic range environments (imagine flying from deep shadow into bright sunlight), and at extreme speeds. TETO's ability to extract accurate motion in these conditions means drones can operate more reliably and safely across diverse real-world settings, from dimly lit warehouses to bright outdoor landscapes.

- Precision and Stability: Improved motion estimation directly translates to more stable flight, more accurate position holding, and finer control for delicate tasks such as close-up structural inspection or precise landings in confined spaces.

- Foundation for Advanced AI: The high-fidelity motion data TETO provides offers rich input for higher-level AI tasks. For instance, it could feed into systems like AgentRVOS, as explored in a related paper by Jin et al., enabling a drone to segment and track objects based on natural language queries with far greater reliability. This precise motion understanding would facilitate more robust "reasoning over object tracks" for zero-shot referring video object segmentation.

- Physical Interaction: When combined with other sensors like tactile feedback, TETO's precise, low-latency motion data could empower drones to perform complex physical interactions. The 'VTAM: Video-Tactile-Action Models for Complex Physical Interaction Beyond VLAs' paper by Yuan et al. highlights the necessity of such granular data for achieving delicate grasping, perching, or contact-based inspection. TETO could provide a critical piece of that puzzle.

- Superior Environmental Mapping: Accurate ego-motion and object motion are fundamental for constructing precise 3D maps of environments. With TETO, drones could generate much more accurate and generalized 3D occupancy maps, even in dynamic urban settings, as suggested by the 'OccAny: Generalized Unconstrained Urban 3D Occupancy' paper by Cao and Vu. This is vital for advanced path planning and obstacle avoidance in complex environments.

Limitations & What's Still Needed

While TETO marks a significant leap forward, it's not a magic bullet.

- Teacher Dependency: TETO still relies on a robust, pre-trained RGB teacher. The event student's performance is inherently tied to the quality of the RGB pseudo-labels. If the RGB tracker struggles in specific conditions, the event tracker could inherit those weaknesses.

- Event Camera Limitations: Event cameras excel at capturing motion but don't provide texture or color information. While superb for speed and dynamic range, they lack the rich visual details that RGB cameras offer. For tasks requiring object recognition based on appearance, they still need to be paired with other sensors.

- Data Diversity: Although 25 minutes of unannotated data is impressive, the diversity of that data remains crucial. If the training data doesn't encompass a wide range of motion patterns and environments, the model might not generalize perfectly to entirely novel scenarios.

- On-Drone Compute: Deploying complex deep learning models for motion estimation on resource-constrained drones demands careful optimization. The paper doesn't detail the computational overhead or power consumption of TETO on embedded hardware, which is a key factor for practical drone integration.

- Lack of Open-Source: The paper makes no immediate mention of an open-source release, so practical adoption by hobbyists or smaller teams would require substantial effort to reimplement the framework.

DIY Feasibility

For the average hobbyist, replicating TETO from scratch would be a significant undertaking. While event cameras like DVS sensors are commercially available (though still pricier than typical RGB cameras), implementing the teacher-student framework and its sophisticated data curation strategy demands deep learning expertise, substantial computational resources for training, and access to a robust RGB tracker. Without readily available code or pre-trained models, this technology remains firmly in the research and advanced development realm, rather than a weekend DIY project. However, TETO's results clearly demonstrate what's possible and will undoubtedly influence future open-source efforts.

Beyond the Frame

TETO moves event cameras significantly closer to becoming standard, reliable sensors for autonomous drones. By effectively closing the sim-to-real gap and drastically reducing data requirements, it brings the promise of truly high-speed, robust motion perception into much sharper focus. The future of drone autonomy will undoubtedly depend on sensors that can perceive beyond the limitations of traditional frames, and TETO represents a powerful stride in that direction.

Paper Details

Title: TETO: Tracking Events with Teacher Observation for Motion Estimation and Frame Interpolation

Authors: Jini Yang, Eunbeen Hong, Soowon Son, Hyunkoo Lee, Sunghwan Hong, Sunok Kim, Seungryong Kim

Published: March 2026 (arXiv v1)

arXiv: 2603.23487

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.