Beyond Just Seeing: Drones Teach AI to 'Think' Like Structural Engineers

New research introduces DefectBench, a rigorous benchmark evaluating Large Multimodal Models (LMMs) for autonomous building inspection. It reveals LMMs excel at understanding structural pathologies but struggle with precise localization, while showing promise for zero-shot defect segmentation.

TL;DR: Large Multimodal Models are getting surprisingly good at understanding what a building defect is and how it impacts structural integrity. While they still struggle with pinpointing where a defect is with metric precision, new zero-shot segmentation capabilities mean drones could identify novel flaws without prior training data.

Automated building inspection is a critical, often tedious task that drones are well suited to transform. For too long, however, drone-based AI systems have been limited to merely 'seeing' defects. They can spot a crack, but grasping its implications has traditionally been reserved for human engineers. A new paper, "Can Large Multimodal Models Inspect Buildings? A Hierarchical Benchmark for Structural Pathology Reasoning," addresses this gap directly, probing what AI-powered drones can actually understand about a building's structural health. This isn't just about better cameras or longer flight times; it's about equipping drones with the cognitive tools to interpret the complex visual information they gather, moving them from simple data collection to intelligent diagnosis.

The Problem with Just 'Seeing'

Traditional computer vision models like YOLO or Mask R-CNN are highly effective at one task: localization. They can draw a bounding box around a crack or segment it at the pixel level with impressive accuracy. The challenge is their inherent passivity. These models don't grasp context; they don't understand why a crack matters, or whether it's connected to another structural element. This absence of visual understanding and topological awareness means such models generalize poorly. To them, a crack is just a crack; they cannot interpret it as a symptom of a larger structural issue, nor can they assess its severity from an engineering perspective. For drone operations, this translates to high post-processing costs: even with highly accurate detection, human experts must still review every anomaly and interpret the data. This limitation has hindered the development of truly autonomous, intelligent inspection, keeping human engineers tethered to desks reviewing endless images rather than focusing on higher-level problem-solving.

A New Yardstick for AI Understanding

To bridge this gap, researchers introduced DefectBench, the first multi-dimensional benchmark designed to rigorously evaluate Large Multimodal Models (LMMs) in the high-stakes domain of civil engineering. They recognized that moving beyond simple detection required testing LMMs on their ability to reason and interpret, not merely recognize. This shift in focus is crucial because structural integrity isn't just about identifying a flaw, but understanding its nature, location, and potential impact on the entire structure.

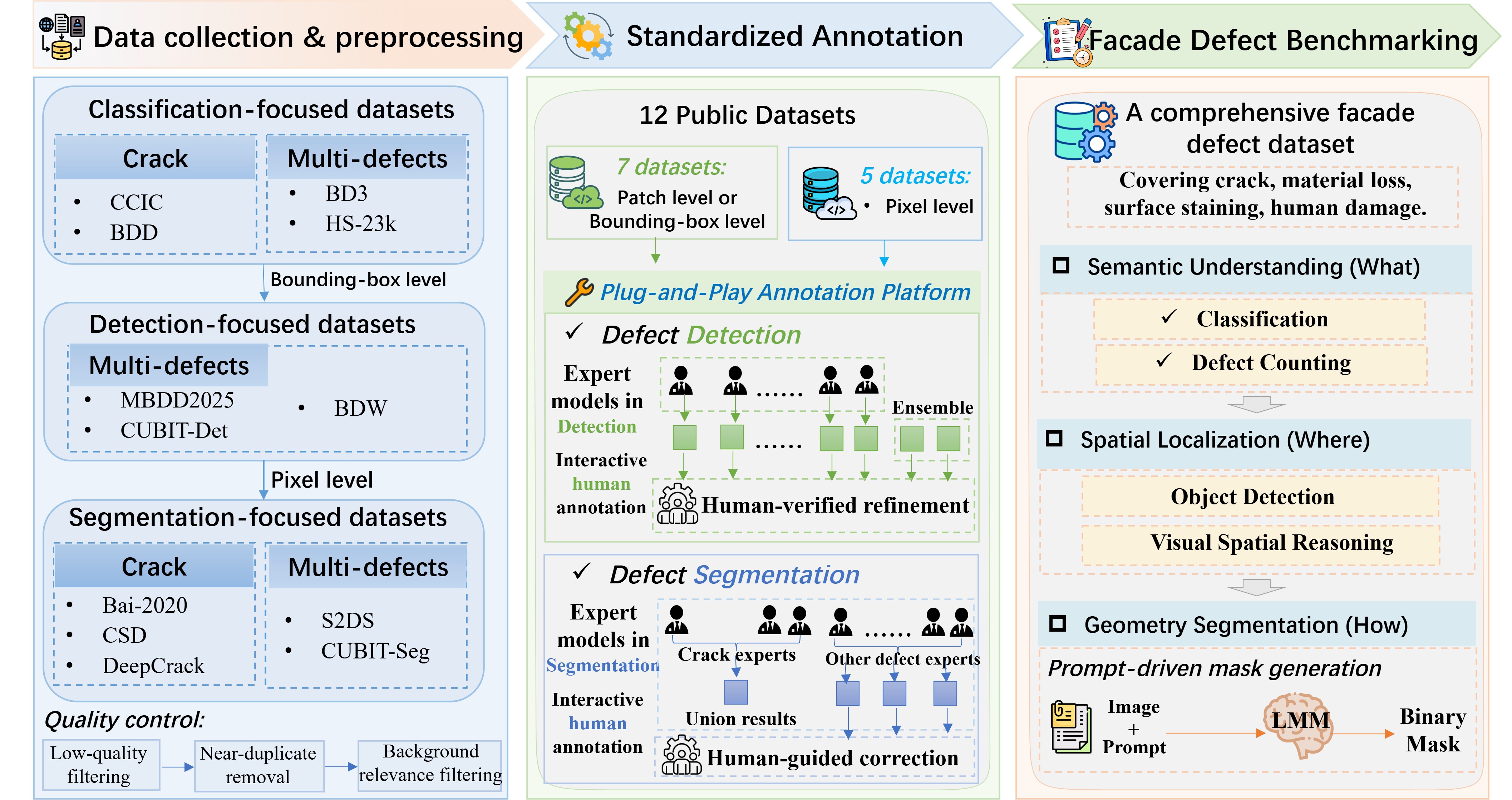

Their approach involved a clever human-in-the-loop semi-automated annotation framework. This framework leverages expert proposals, which are subsequently verified by human structural engineers. This meticulous process unified 12 fragmented datasets into a single, standardized, hierarchical ontology of building defects. It's like creating a common language for LMMs to understand structural pathologies, categorized from superficial damage to critical structural failures, ensuring a consistent and comprehensive understanding across various defect types and severities.
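To make the idea of a unified ontology concrete, here is a minimal Python sketch of how labels from separate datasets might be mapped onto one shared hierarchy. Everything in it is an illustrative assumption: the dataset names, raw labels, tier names, and the `unify` helper are hypothetical and are not taken from the paper or from DefectBench itself.

```python
from typing import NamedTuple, Optional


class UnifiedLabel(NamedTuple):
    tier: str         # hypothetical top level, e.g. "superficial" or "structural"
    defect_type: str  # hypothetical leaf category


# Hypothetical mapping: (source dataset, raw label) -> unified label.
# A real ontology would be defined and verified by structural engineers.
LABEL_MAP = {
    ("dataset_a", "crack"): UnifiedLabel("structural", "crack"),
    ("dataset_b", "fissure"): UnifiedLabel("structural", "crack"),
    ("dataset_b", "paint_peel"): UnifiedLabel("superficial", "peeling"),
    ("dataset_c", "spall"): UnifiedLabel("structural", "spalling"),
}


def unify(dataset: str, raw_label: str) -> Optional[UnifiedLabel]:
    """Return the unified label, or None if the pair is not in the mapping."""
    return LABEL_MAP.get((dataset, raw_label.strip().lower()))


if __name__ == "__main__":
    print(unify("dataset_b", "Fissure"))  # same unified label as dataset_a's "crack"
    print(unify("dataset_x", "crack"))    # unknown source: flag for human review
```

Returning `None` for unmapped labels, rather than guessing, mirrors the human-in-the-loop idea: anything the mapping cannot resolve is left for an expert to decide.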

Figure 1. Overview of the DefectBench construction and evaluation framework.


DefectBench evaluates LMMs across three escalating cognitive dimensions:

- Semantic Perception: Can the LMM correctly identify what the defect is (e.g., spalling, efflorescence, crack)? This tests the model's ability to classify and understand the nature of the damage.

- Spatial Localization: Can it accurately pinpoint where the defect is in a metric sense? This goes beyond simple bounding boxes, demanding precise coordinates for practical applications.

- Generative Geometry Segmentation: Can it precisely delineate the shape and extent of the defect, even for novel types, without specific training? This is a powerful capability, allowing for detailed mapping of damage.
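The three dimensions above map naturally onto three familiar kinds of score. The sketch below is an assumption about how one might measure each of them (classification accuracy, bounding-box IoU, and mask IoU); the article does not state which metrics DefectBench actually uses, and the function names are hypothetical.

```python
def accuracy(predicted, truth):
    """Semantic perception: fraction of defect labels that match."""
    return sum(p == t for p, t in zip(predicted, truth)) / len(truth)


def box_iou(a, b):
    """Spatial localization: IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def mask_iou(a, b):
    """Segmentation: IoU of two masks given as sets of (row, col) pixels."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


if __name__ == "__main__":
    print(accuracy(["crack", "spalling"], ["crack", "efflorescence"]))
    print(box_iou((0, 0, 2, 2), (1, 1, 3, 3)))
    print(mask_iou({(0, 0), (0, 1)}, {(0, 1), (1, 1)}))
```

Scoring the three dimensions separately is what lets a benchmark show a model that names a defect correctly while still placing it poorly.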

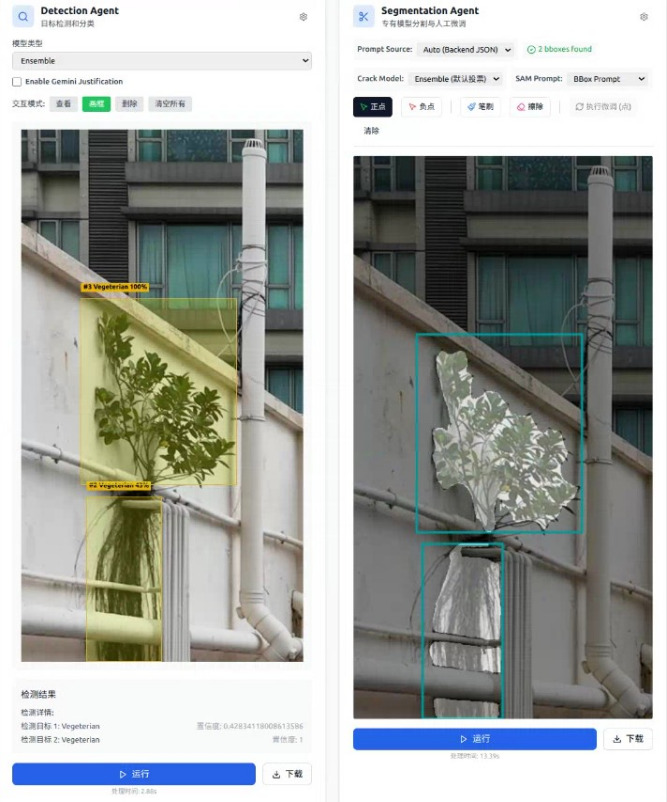

Such a multi-faceted evaluation is crucial. It moves beyond a simple 'correct/incorrect' assessment, delving into the nuances of an LMM's understanding, providing a much richer picture of their capabilities and shortcomings. To facilitate this, the researchers also built a plug-and-play annotation platform (Figure 4), which could prove a valuable tool for anyone looking to build similar datasets, further democratizing the creation of high-quality training data.

Figure 4. UI for plug-and-play annotation platform.


The Results: Smart, But Still Needs Directions

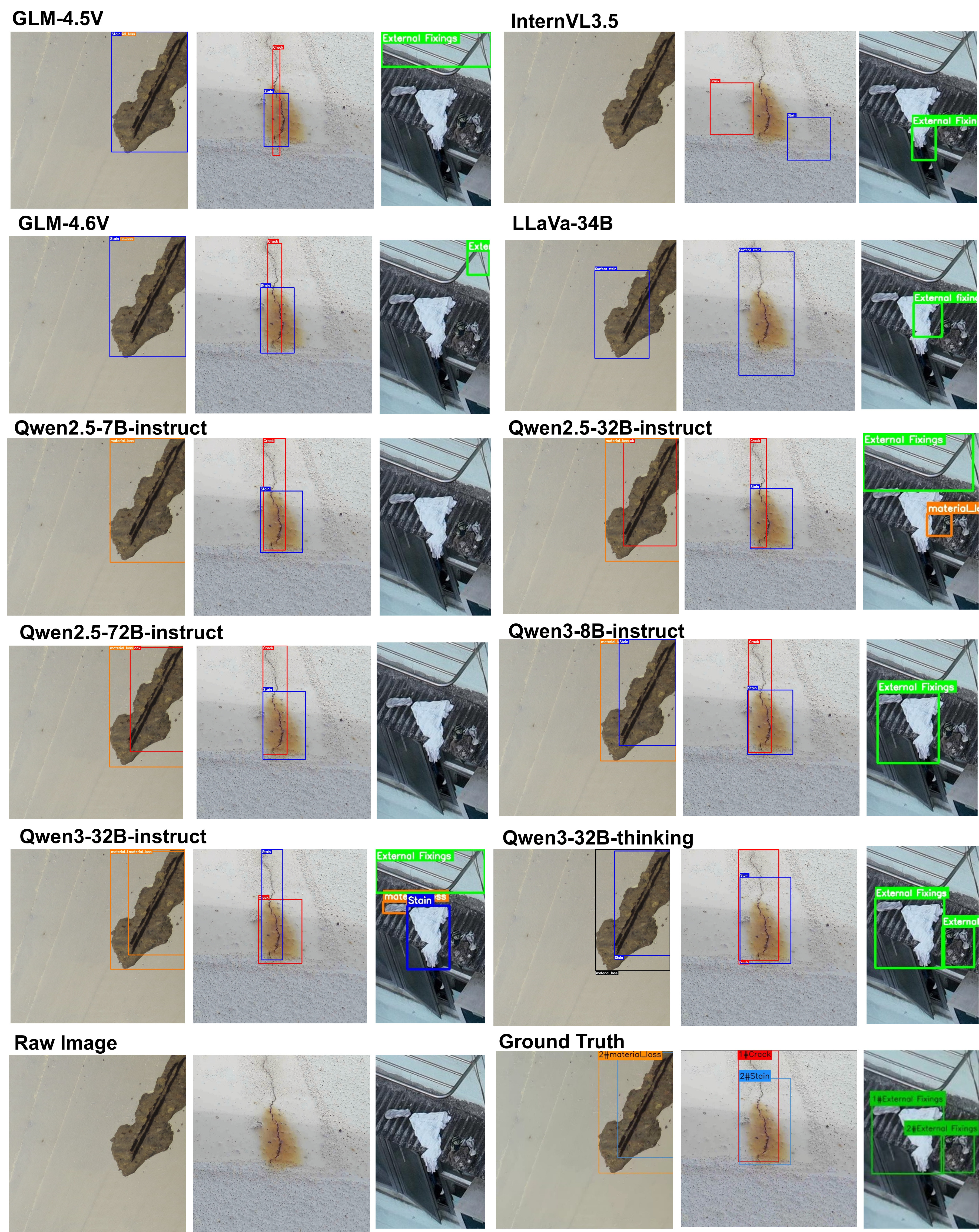

The extensive experiments involved testing 18 state-of-the-art (SOTA) LMMs. The findings present a mixed picture, yet offer highly insightful takeaways for drone practitioners:

- Topological Awareness & Semantic Understanding (The 'What' and 'How'): LMMs demonstrated exceptional capabilities here. They could effectively diagnose defects, understanding their type and potential implications. This indicates LMMs can grasp the meaning of a defect in a way traditional models cannot, moving beyond mere pattern recognition to a more conceptual understanding of structural health.

- Metric Localization Precision (The 'Where'): Here, current LMMs fall short. While they know what a defect is, precisely locating it in 3D space with metric accuracy remains a significant deficiency. This precision is critical for real-world repair and maintenance, where knowing a crack is 'somewhere on the wall' isn't nearly as useful as knowing its exact coordinates for a repair crew.

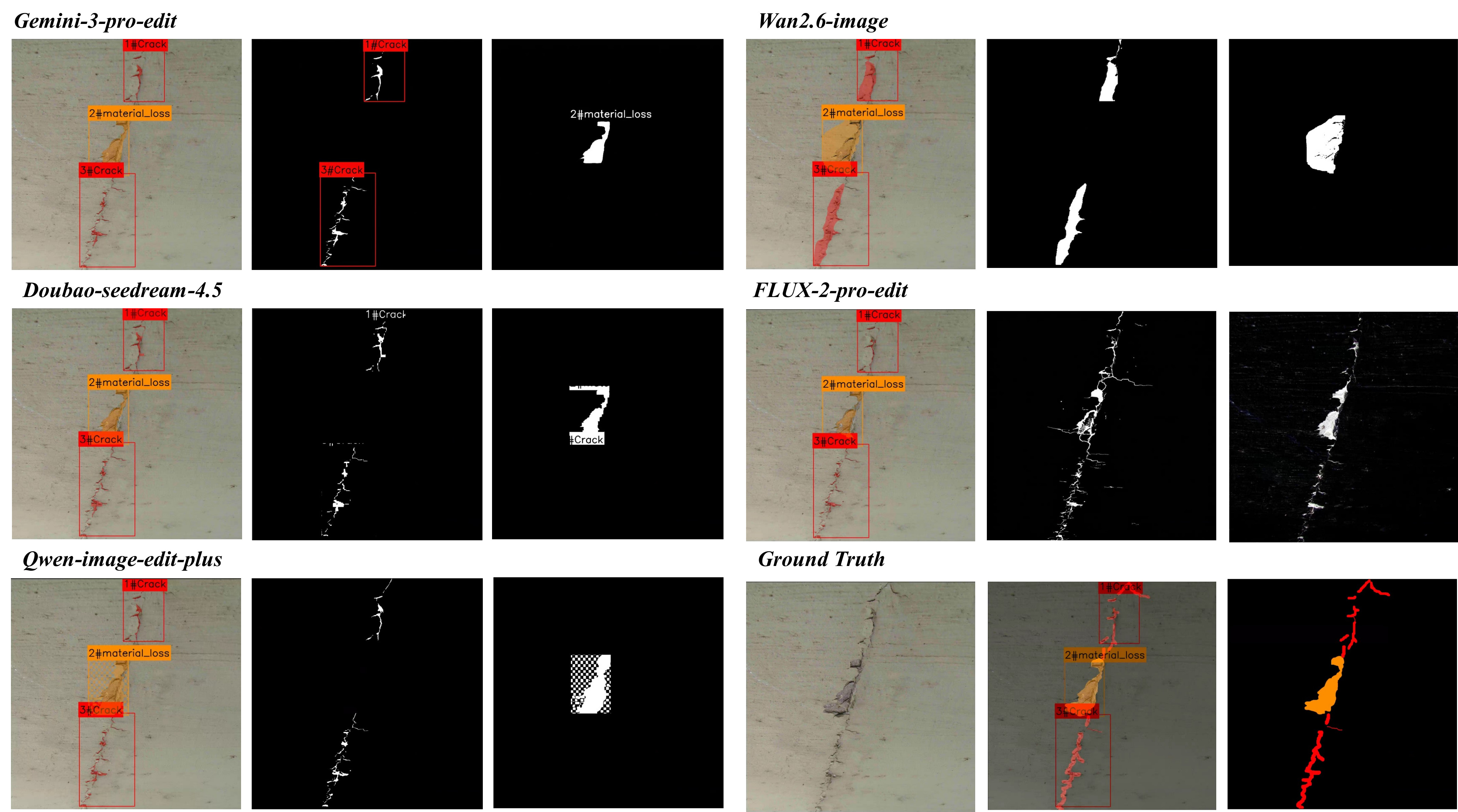

- Zero-Shot Generative Segmentation: This marks a significant advancement. The study validates that general-purpose foundation models can perform generative segmentation (drawing precise outlines of defects) and even rival specialized supervised networks without domain-specific training. This capability means an LMM could identify and segment a completely new type of structural flaw it's never encountered, offering a powerful tool for proactive maintenance and the early detection of unforeseen issues.

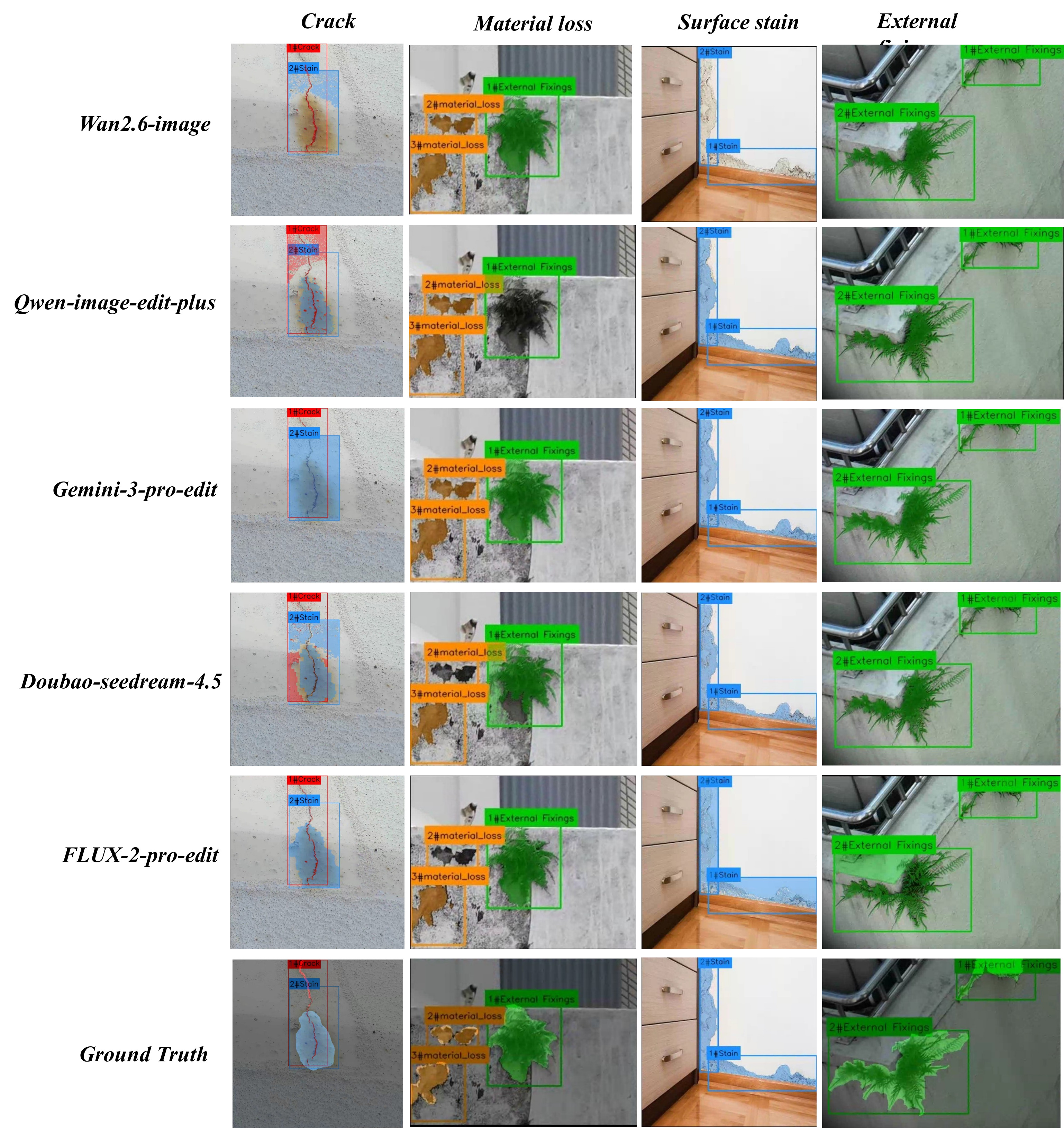

Figure 5. Comparative defect detection results of different LMMs on facade images.


Why This Matters for Your Drones

This research represents a significant stride towards truly autonomous and intelligent drone inspection. Consider a drone that doesn't just capture images, but actively reasons about a building's structural health. With these LMMs, your drone could:

- Prioritize Inspections: Identify critical defects needing immediate attention versus minor cosmetic issues, allowing engineers to allocate resources more efficiently.

- Generate Smarter Reports: Provide not just images, but also a preliminary diagnosis of the defect type and its potential severity, based on structural topology, significantly reducing the manual effort in report generation.

- Adapt Inspection Paths: If an LMM identifies an anomaly, it could direct the drone to perform a more detailed, focused inspection of that area, perhaps flying closer or from a different angle, optimizing data collection in real-time.

- Identify Novel Threats: The zero-shot segmentation capability means your drone could flag entirely new types of structural issues, offering unprecedented early warning systems for emerging problems that no human has explicitly trained it to look for.
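As a rough illustration of the "Prioritize Inspections" idea, the following Python sketch sorts findings so that the most severe, most confident ones reach an engineer first. The `Finding` structure, the severity labels, and the ranking rule are all hypothetical; in practice they would come from however an LMM's output is parsed, and nothing here is prescribed by the paper.

```python
from dataclasses import dataclass

# Hypothetical severity scale; lower rank means more urgent.
SEVERITY_RANK = {"critical": 0, "moderate": 1, "cosmetic": 2}


@dataclass
class Finding:
    image_id: str
    defect_type: str
    severity: str      # assumed to be parsed from the model's diagnosis
    confidence: float  # assumed model confidence in [0, 1]


def prioritize(findings):
    """Order findings by severity first, then by descending confidence."""
    unknown_rank = len(SEVERITY_RANK)  # unrecognized severities sort last
    return sorted(
        findings,
        key=lambda f: (SEVERITY_RANK.get(f.severity, unknown_rank), -f.confidence),
    )


if __name__ == "__main__":
    queue = prioritize([
        Finding("img_01", "efflorescence", "cosmetic", 0.9),
        Finding("img_02", "crack", "critical", 0.6),
        Finding("img_03", "spalling", "critical", 0.8),
    ])
    for f in queue:
        print(f.image_id, f.defect_type, f.severity)
```

A review queue like this keeps the human engineer in charge of the final call while cutting the time spent on cosmetic issues.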

This transforms drones from mere data collectors into active diagnostic agents, providing actionable intelligence directly from the field. The goal is to build drones that can assist, not just observe, structural engineers, freeing them to focus on complex problem-solving and decision-making.

Figure 2. Visualization of generative instability and instruction alignment failures in zero-shot segmentation.


Limitations & What's Still Missing

While promising, this research highlights several areas for improvement before full real-world deployment:

- Metric Localization: The LMMs' weakness in precise 'where' localization presents a significant hurdle. For repairs or detailed structural analysis, knowing a defect is within a meter isn't sufficient; centimeter accuracy is often required. Achieving this often relies on accurate SLAM systems and robust GPS or visual odometry from the drone itself, which are separate but critical components for practical drone inspection.

- Generative Instability: As Figure 2 shows, generative segmentation isn't perfect. LMMs can still hallucinate or misinterpret instructions, leading to inaccurate defect outlines. Improving the robustness and instruction alignment of these models is crucial to prevent false positives or missed critical areas, which could have serious consequences in structural assessment.

- Real-world Environment Constraints: The DefectBench dataset, while comprehensive, remains curated. Real-world conditions such as varying lighting, weather, vegetation, or complex facade geometries can significantly challenge LMM performance. Further testing in diverse, uncontrolled environments is therefore necessary to ensure the models are robust enough for practical applications beyond laboratory settings.

- Computational Overhead: LMMs are computationally intensive. Running these models in real-time on a drone, especially for generative tasks, demands significant onboard processing power. This introduces challenges related to weight, power consumption, and thermal management for drone platforms, pushing the boundaries of edge AI capabilities. Efficient model architectures or robust edge AI solutions are essential to make these intelligent drones a reality without sacrificing flight time or payload capacity.
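To get a feel for what centimeter accuracy demands, the sketch below converts a localization error in pixels into an error in metres using the standard ground sampling distance (GSD) relation for a pinhole camera. It assumes the camera faces the facade squarely at a known distance; the camera numbers are made-up examples, not values from the paper, and the function names are hypothetical.

```python
def ground_sampling_distance(sensor_width_mm, focal_length_mm,
                             distance_m, image_width_px):
    """Metres of facade covered by one pixel (pinhole model, fronto-parallel view)."""
    return (sensor_width_mm * distance_m) / (focal_length_mm * image_width_px)


def pixel_error_to_metres(pixel_error, gsd_m_per_px):
    """Convert a localization error in pixels to metres on the facade."""
    return pixel_error * gsd_m_per_px


if __name__ == "__main__":
    # Example numbers chosen only for easy arithmetic (assumptions):
    # 10 mm sensor width, 10 mm focal length, 2 m from the wall, 1000 px wide image.
    gsd = ground_sampling_distance(10.0, 10.0, 2.0, 1000)
    print(f"GSD: {gsd * 1000:.1f} mm per pixel")
    # A box that is off by 25 px then corresponds to roughly 5 cm on the wall.
    print(f"25 px error: {pixel_error_to_metres(25, gsd) * 100:.1f} cm")
```

The same pixel error grows linearly with stand-off distance, which is one reason metric localization depends as much on the drone's positioning as on the model.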

Figure 3. Cross-model qualitative evaluation of generative geometry segmentation across multiple facade defect categories.


DIY Feasibility for the Hobbyist Builder

The good news for builders and hobbyists is that the DefectBench database and evaluation framework are open-source. This means you can download the high-quality, human-verified dataset and use it to train or fine-tune your own computer vision models. While training a SOTA LMM from scratch is beyond most hobbyist setups, experimenting with smaller, open-source LMMs like LLaVA or MiniGPT-4 on this dataset is entirely feasible. You could also use the provided annotation tools to start creating your own custom datasets for specific inspection tasks relevant to your drone projects, contributing to the broader research community.

This work provides a solid foundation, but it's important to remember that LMMs are just one piece of the puzzle for truly autonomous drones. Before an LMM can even begin to reason about structural pathologies, the drone needs to navigate precisely and build accurate maps. This is where research like "HortiMulti: A Multi-Sensor Dataset for Localisation and Mapping in Horticultural Polytunnels" becomes critical, highlighting the need for robust SLAM in challenging environments like dense vegetation or indoor spaces. Furthermore, processing the vast amounts of video data captured by drones for LMM understanding requires efficient methods, as explored in "Adaptive Greedy Frame Selection for Long Video Understanding," to avoid overwhelming onboard processors. For actual onboard intelligence, the principles discussed in "TinyML Enhances CubeSat Mission Capabilities" regarding edge AI are directly applicable, aiming to bring complex reasoning closer to the drone itself, reducing latency and reliance on cloud processing. Ultimately, the goal is for the drone to not just identify issues but to self-refine its inspection strategies, a concept explored in "The Robot's Inner Critic: Self-Refinement of Social Behaviors through VLM-based Replanning," allowing for truly adaptive and intelligent missions.

Each of these areas complements the core challenge of teaching LMMs to understand complex visual information, paving the way for a new generation of smart inspection drones.

This research firmly shifts the conversation from merely detecting flaws to intelligently diagnosing them. The future of drone inspection isn't just about better cameras or flight times; it's about equipping our drones with the ability to reason, interpret, and truly understand the world they're inspecting, transforming them into indispensable partners for structural engineers.

Paper Details

Title: Can Large Multimodal Models Inspect Buildings? A Hierarchical Benchmark for Structural Pathology Reasoning

Authors: Hui Zhong, Yichun Gao, Luyan Liu, Hai Yang, Wang Wang, Haowei Zhang, Xinhu Zheng

Published: March 2026

arXiv: 2603.20148

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.