Explaining AI Decisions in Drones with UNBOX

UNBOX uses language and diffusion models to explain black-box AI decisions, enhancing trust and accountability in drone systems.

TL;DR:

UNBOX is a framework designed to explain black-box visual models without needing direct access to their internals. By combining language models and diffusion models, it generates human-readable descriptions of AI decision-making, paving the way for more trustworthy drone systems.

Can Drones Explain Their Decisions?

Drones are becoming smarter and more autonomous, handling tasks like navigation, obstacle avoidance, and object detection with minimal human input. But as these systems grow more complex, they also become less transparent. Why does a drone choose one path over another? Why does it flag a specific object? These are questions that current AI systems often can’t answer.

Enter UNBOX, a new framework that aims to explain the decision-making of black-box AI models. By combining large language models with text-to-image diffusion models, UNBOX probes how a vision model interprets the world using only the model’s outputs, even when its internal workings are inaccessible. That could change how operators interact with and trust AI-powered drones.

The Challenge: Decoding the Black Box

Many drones rely on proprietary AI models for tasks like visual recognition and decision-making. These so-called "black-box" models are notoriously opaque, offering little to no insight into how they arrive at their conclusions. This lack of transparency poses challenges for debugging, understanding biases, and ensuring safety.

Existing methods for interpreting AI decisions often require access to the internal workings of the model, such as its neural network weights or training data. However, such access is frequently restricted due to proprietary constraints. UNBOX addresses this issue by providing explanations without needing to look inside the black box.

How UNBOX Works

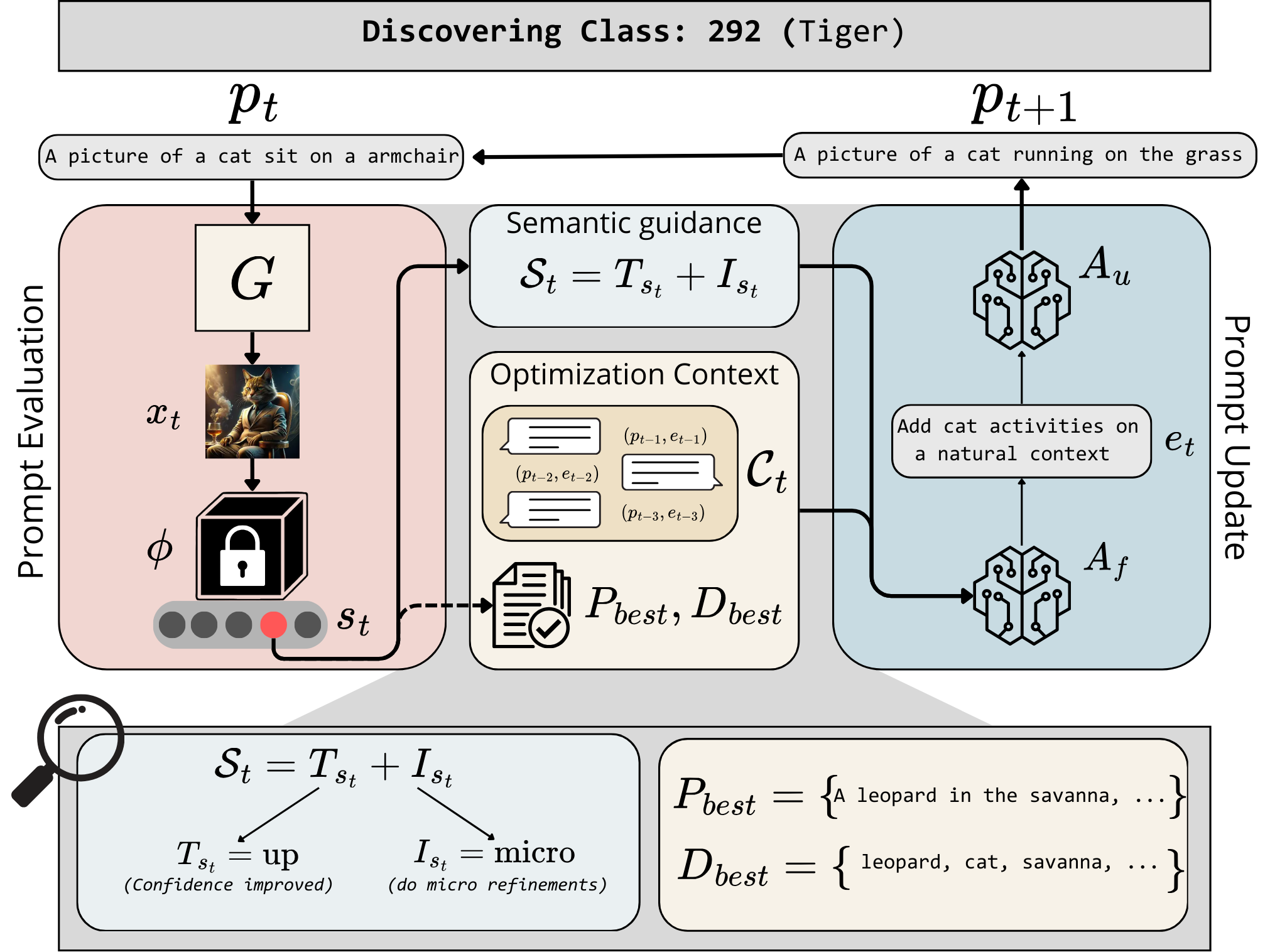

UNBOX approaches the problem of AI interpretability by using a combination of natural language and image generation models. Here’s a breakdown of its process:

- Text Prompt Creation: UNBOX uses a Large Language Model (LLM) to generate text prompts based on the target concept or class. For example, if the goal is to understand how a drone identifies a tree, the LLM creates descriptive prompts related to trees.

- Image Generation: These prompts are then converted into images using a text-to-image diffusion model. The generated images are designed to represent the concept in a way that the black-box model can process.

- Feedback Loop: The generated images are fed into the black-box model, which evaluates how well they match the target concept. Based on the model’s output, the LLM refines the prompts to improve their alignment with the model’s understanding.

- Human-Readable Explanations: The final, refined prompts are used to create natural language descriptions that explain the black-box model’s decision-making process.

Overview of the UNBOX framework. It combines text-to-image generation and language models to interpret black-box AI systems.
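The four steps above can be sketched as a short loop in Python. This is a minimal illustration under stated assumptions, not the authors’ implementation: `propose`, `generate`, and `score` are hypothetical wrappers around the LLM, the diffusion model, and the black-box model, and the toy versions below exist only so the example runs without any real model.

```python
from typing import Callable, List, Tuple

Scored = List[Tuple[str, float]]


def refine_prompts(
    concept: str,
    propose: Callable[[str, Scored], List[str]],  # hypothetical LLM wrapper
    generate: Callable[[str], object],            # hypothetical diffusion wrapper
    score: Callable[[object], float],             # hypothetical black-box query
    rounds: int = 3,
    keep: int = 2,
) -> Scored:
    """Return the highest-scoring (prompt, score) pairs after `rounds` iterations."""
    best: Scored = []
    for _ in range(rounds):
        # The proposer receives the current best prompts and scores as feedback.
        candidates = propose(concept, best)
        scored = {p: score(generate(p)) for p in candidates}
        # Merge with earlier results (deduplicated by prompt) and keep the top few.
        merged = {**dict(best), **scored}
        best = sorted(merged.items(), key=lambda item: item[1], reverse=True)[:keep]
    return best


# Toy stand-ins so the sketch runs without any model; all behavior is made up.
ATTRIBUTES = ["on a branch", "near water", "in flight", "at sunset"]


def toy_propose(concept: str, feedback: Scored) -> List[str]:
    base = feedback[0][0] if feedback else concept
    return [f"{base}, {a}" for a in ATTRIBUTES if a not in base]


def toy_generate(prompt: str) -> str:
    return prompt  # the "image" is just the prompt text here


def toy_score(image: str) -> float:
    # Pretend the black box reacts most strongly to water, then to flight.
    return 0.2 + (0.5 if "water" in image else 0.0) + (0.2 if "flight" in image else 0.0)


if __name__ == "__main__":
    results = refine_prompts("a bird", toy_propose, toy_generate, toy_score, rounds=2)
    for prompt, value in results:
        print(f"{value:.2f}  {prompt}")
```

In this toy run the surviving prompts all mention water, which is the kind of signal a human-readable explanation would then be built from. Passing the three components in as callables keeps the loop independent of any particular LLM, diffusion model, or vision API.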


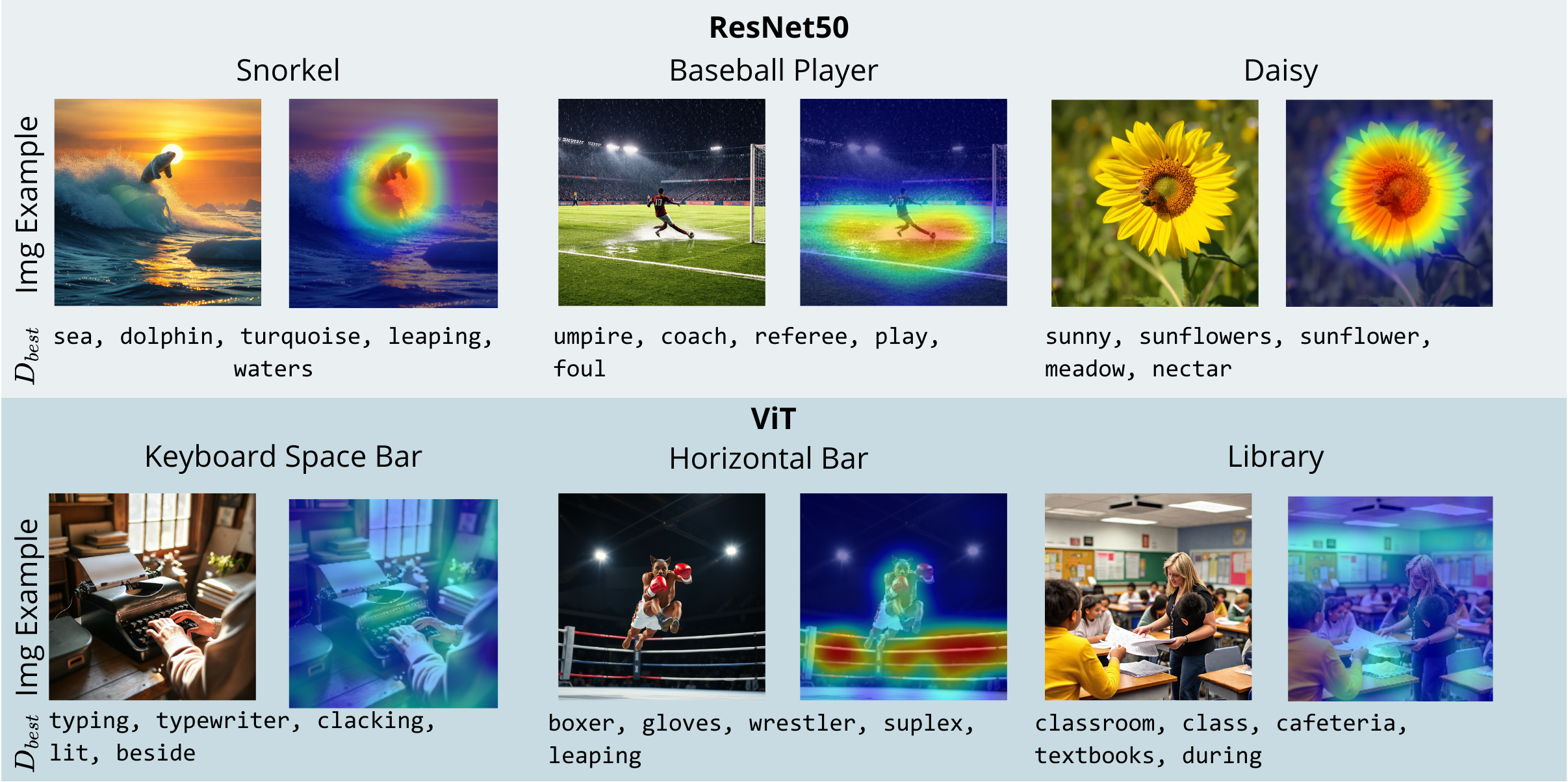

One standout feature of UNBOX is its ability to identify spurious correlations in AI models. For instance, it can reveal if a model mistakenly associates birds with water or identifies people based on irrelevant background features.

Examples of how UNBOX identifies non-causal correlations in AI decision-making.
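One simple way to probe for this kind of correlation with query access alone is to render a context with no target object in it and check whether the black box still reports the target class. The sketch below assumes a hypothetical `score_prompt` function that turns a prompt into an image and returns the black-box score for the target class; the probe itself is an illustration, not the procedure from the paper, and the toy scorer is made up.

```python
from typing import Callable, Dict, Iterable


def spurious_contexts(
    score_prompt: Callable[[str], float],  # hypothetical: prompt -> image -> target-class score
    contexts: Iterable[str],
    template: str = "{context}, empty scene with no animals",
    threshold: float = 0.5,
) -> Dict[str, float]:
    """Return contexts that trigger the target class even though no target object is shown."""
    flagged = {}
    for context in contexts:
        value = score_prompt(template.format(context=context))
        if value >= threshold:
            flagged[context] = value
    return flagged


def toy_bird_score(prompt: str) -> float:
    # Made-up black box for the class "bird" that has learned to rely on water.
    return 0.6 if "lake" in prompt else 0.1


if __name__ == "__main__":
    print(spurious_contexts(toy_bird_score, ["a lake", "a city street", "a forest"]))
```

A context that is flagged here is a candidate for a non-causal cue and would still need a human to confirm it.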


Does UNBOX Deliver?

UNBOX has shown promising results when compared to traditional white-box interpretability methods that require full access to AI systems. Here are some key takeaways:

- Accuracy: UNBOX’s text-based explanations align closely with human understanding, as confirmed by semantic fidelity tests.

- Bias Detection: The framework successfully identified biases and spurious correlations in datasets like ImageNet-1K and CelebA.

- Performance: Despite its black-box constraints, UNBOX performs comparably to state-of-the-art interpretability methods that require internal model access.

Instead of relying solely on numerical accuracy metrics, the researchers focused on evaluating the semantic alignment and interpretability quality of UNBOX’s outputs.

Why This Matters for Drones

UNBOX has significant implications for drone technology, particularly in areas where trust and accountability are critical. Here’s how it could make a difference:

- Safer Operations: By explaining why it recognizes certain obstacles or waypoints, a drone equipped with UNBOX could help operators debug and trust its decisions.

- Bias Identification: UNBOX can uncover biases in a drone’s AI, such as difficulties in recognizing objects in specific environments, enabling developers to address these issues.

- Enhanced Human Interaction: In tasks like delivery or inspections, drones could provide plain-language explanations of their actions, fostering transparency and trust.

For example, in a search-and-rescue mission, a drone could explain why it flagged a particular area for human presence, allowing operators to verify its findings more effectively.

Limitations of UNBOX

While UNBOX is a promising framework, it’s not without its challenges. Here are some of its limitations:

- Resource-Intensive: The reliance on LLMs and diffusion models requires significant computational resources, making real-time applications challenging for drones with limited processing power.

- Dependency on External Tools: UNBOX depends on external models like Stable Diffusion and GPT-4, which may not always be accessible or cost-effective.

- No Model Improvement: While UNBOX can diagnose issues in black-box models, it doesn’t directly improve their performance or address the biases it identifies.

- Limited Testing: The framework has been tested on general datasets like ImageNet and CelebA, but its performance on drone-specific tasks, such as aerial imagery, remains unproven.
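A quick count of model calls gives a feel for the resource cost. The function below is a back-of-the-envelope sketch: it assumes one LLM call per concept per refinement round and one black-box query per generated image, and the example settings are illustrative rather than taken from the paper.

```python
def query_budget(concepts: int, rounds: int, prompts_per_round: int, images_per_prompt: int) -> dict:
    """Count model calls for a full run, assuming one LLM call per concept per round."""
    images = concepts * rounds * prompts_per_round * images_per_prompt
    return {
        "llm_calls": concepts * rounds,
        "images_generated": images,
        "black_box_queries": images,  # every generated image is scored once
    }


if __name__ == "__main__":
    # Illustrative settings only; none of these numbers come from the paper.
    print(query_budget(concepts=10, rounds=5, prompts_per_round=8, images_per_prompt=4))
```

Because the image count is a product of all four settings, even modest values add up quickly, which is why this kind of analysis is better suited to offline auditing than to onboard, real-time use.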

Can You Build It?

For hobbyists and developers interested in experimenting with UNBOX, here’s what you’ll need:

- A black-box vision model, such as AWS Rekognition or Google Cloud Vision.

- A text-to-image diffusion model like Stable Diffusion.

- A powerful LLM, such as GPT-4 or an open-source alternative like LLaMA.
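Hosted vision services usually return a list of labels with confidences rather than a single number, so a first building block is a small adapter that turns a response into one score for the target concept. The sketch below assumes a response shaped like `{"Labels": [{"Name": ..., "Confidence": ...}]}` with confidences from 0 to 100; that shape is an assumption for illustration, so check your provider’s documentation for the actual format.

```python
from typing import Any, Dict


def target_score(response: Dict[str, Any], target: str) -> float:
    """Return the confidence in [0, 1] for `target`, or 0.0 if the label is absent."""
    for label in response.get("Labels", []):
        if str(label.get("Name", "")).lower() == target.lower():
            return float(label.get("Confidence", 0.0)) / 100.0
    return 0.0


if __name__ == "__main__":
    # Hand-written example response; not real service output.
    example = {"Labels": [{"Name": "Tree", "Confidence": 92.0},
                          {"Name": "Plant", "Confidence": 80.0}]}
    print(target_score(example, "tree"))
    print(target_score(example, "car"))
```

Returning 0.0 for a missing label keeps the score usable as feedback for prompt refinement even when the service does not mention the target at all.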

While the framework’s codebase isn’t publicly available yet, replicating it would require expertise in AI pipelines, prompt engineering, and cloud computing. It’s a challenging but not impossible task.

The Bigger Picture

UNBOX is part of a broader movement toward making AI systems more interpretable and accountable. It complements other vision-language model (VLM) research, such as:

- FVG-PT: A method for fine-tuning vision-language models for specific tasks, which could be combined with UNBOX for optimized performance and interpretability.

- ER-Pose: A framework for real-time human pose estimation, where UNBOX could explain why certain movements are flagged.

- FOMO-3D: A system for detecting rare 3D objects, where UNBOX could clarify how and why a drone identifies these objects.

Together, these advancements could significantly enhance the capabilities of AI-powered drones.

Final Thoughts

UNBOX represents a step forward in making AI systems more transparent and trustworthy. While it’s not yet ready for widespread deployment, its ability to explain AI decision-making could change how we interact with drones and other autonomous systems. As research in this area continues, we may soon see drones that can articulate their decisions in ways humans can understand, an exciting prospect for the future of AI.

Paper Details

Title: UNBOX: Unveiling Black-box visual models with Natural-language

Authors: Simone Carnemolla, Chiara Russo, Simone Palazzo, Quentin Bouniot, Daniela Giordano, Zeynep Akata, Matteo Pennisi, Concetto Spampinato

Published: 2023

arXiv: 2603.08639


Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.