IndoorR2X: How LLMs Let Drones Team Up with Your Smart Home

IndoorR2X introduces a framework enabling LLM-powered robots to coordinate with static IoT sensors. This enhances indoor autonomy and task planning, reducing exploration and boosting reliability by leveraging existing smart home infrastructure.

TL;DR: Forget robots talking only to each other.

IndoorR2X shows how LLM-powered drones can coordinate with your smart home's existing IoT devices, like security cameras and smart sensors, to gain a global understanding of their environment. This Robot-to-Everything (R2X) approach drastically improves multi-robot efficiency and reliability for indoor tasks by giving them a persistent, building-wide "eye."

Your Drone Just Got a Smart Home Upgrade

For too long, our autonomous indoor robots, including mini drones, have operated in relative isolation. They navigate using their own onboard sensors, building a limited, ego-centric view of the world. But what if your drone could tap into the same network that powers your smart thermostat, your security cameras, and your smart speakers? A new paper, IndoorR2X, from researchers at Google, CMU, and others, demonstrates a framework where Large Language Models (LLMs) enable multi-robot teams to do exactly that, orchestrating complex tasks by leveraging existing IoT infrastructure.

The Problem with Being a Lone Wolf

Current multi-robot systems often rely solely on Robot-to-Robot (R2R) communication to piece together a shared understanding of an environment. This works, but it's inefficient. Each robot still needs to explore extensively to cover blind spots, and even then, partial observability remains a major hurdle. Imagine a drone searching for a lost item in a cluttered office – it needs to physically fly into every room and around every obstacle.

This constant exploration wastes battery, time, and computational resources, and scaling it to larger teams introduces significant coordination overhead. We're missing a persistent, building-wide context that goes beyond what any single mobile robot can perceive.

How Your Drone Learns to Talk to Everything

IndoorR2X tackles this by introducing the Robot-to-Everything (R2X) paradigm. Instead of just R2R, mobile robots – think your indoor inspection drone – integrate their onboard sensor data with observations from static Internet of Things (IoT) devices already present in the environment. This means your drone can "see" through the eyes of a wall-mounted IP camera, get status updates from a smart lock, or even detect temperature changes from a smart thermostat.

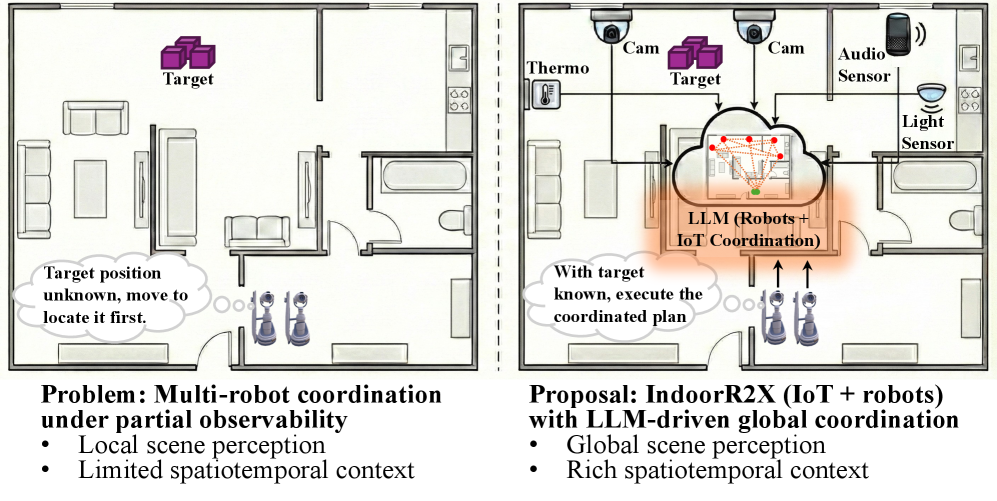

Figure 1: Motivation for IndoorR2X. Augmenting robot perception with global IoT context via LLMs for efficient coordination.


All these heterogeneous observations – from moving robots and fixed IoT sensors – are funneled into a central coordination hub. Here, a global semantic world model is constructed, detailing object locations, device statuses, and environmental conditions across the entire building. The crucial brain of this operation is an LLM-based online planner. This LLM processes the rich, global semantic state and generates high-level, parallel action plans for each robot. For instance, if a task involves "cleaning the kitchen," the LLM knows the kitchen layout from IoT data, where the cleaning supplies are, and which robot is best positioned to start, all without needing extensive robot-side exploration first.
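To make the hub idea concrete, here is a minimal sketch of a world model that merges robot and IoT observations and renders them as text for a planner prompt. It is not the paper's implementation: the `Observation` and `WorldModel` classes, the device names, and the "newest timestamp wins" rule are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    source: str       # hypothetical ID, e.g. "robot_1" or "cctv_kitchen"
    entity: str       # object or device being described
    attribute: str    # e.g. "location" or "status"
    value: str
    timestamp: float  # seconds; newer observations override older ones


class WorldModel:
    """Keeps the latest known value for each (entity, attribute) pair."""

    def __init__(self) -> None:
        self._facts = {}

    def update(self, obs: Observation) -> None:
        key = (obs.entity, obs.attribute)
        current = self._facts.get(key)
        # Assumed fusion rule: ignore reports older than what we already hold.
        if current is None or obs.timestamp >= current.timestamp:
            self._facts[key] = obs

    def get(self, entity: str, attribute: str):
        obs = self._facts.get((entity, attribute))
        return obs.value if obs else None

    def to_prompt(self) -> str:
        """Render the state as plain text that could be placed in an LLM prompt."""
        lines = [
            f"- {o.entity}.{o.attribute} = {o.value} (from {o.source})"
            for _, o in sorted(self._facts.items())
        ]
        return "Known state:\n" + "\n".join(lines)


model = WorldModel()
model.update(Observation("robot_1", "milk", "location", "kitchen_counter", 10.0))
model.update(Observation("cctv_living_room", "tv", "status", "on", 12.0))
model.update(Observation("cctv_kitchen", "milk", "location", "fridge", 15.0))
print(model.to_prompt())
```

Here the kitchen camera's later report replaces the robot's older sighting of the milk, so the planner would be told the milk is in the fridge without any robot revisiting the kitchen.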

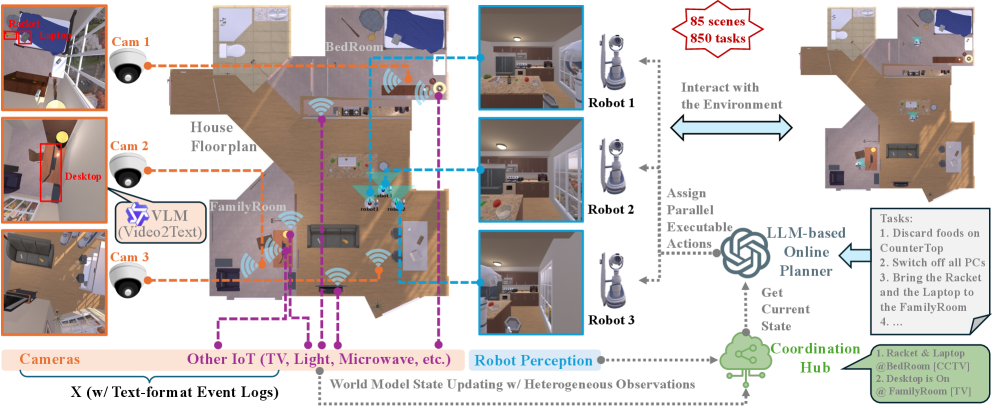

Figure 2: Our IndoorR2X framework. CCTV observations and other IoT device signals are collected to augment the world model beyond the perception range of the robots’ ego cameras. These heterogeneous observations are synchronized through a coordination hub, where an LLM-based online planner generates parallel actions for each robot and executes them to perform their respective tasks. As an example scenario, robots are assigned to perform household tasks in the morning. After potential overnight changes to object locations or device statuses (e.g., TVs), robots first update their indoor world model by leveraging the “X” observations.


The framework then executes these plans, continuously updating the world model with new observations from both robots and IoT devices. This loop allows for dynamic replanning as conditions change, making the system adaptive and robust.
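The observe, plan, execute cycle can be sketched in a few lines. This is a toy under stated assumptions: the `plan` function is a simple stand-in for the LLM planner, the world is reduced to a mapping from objects to rooms, and `sense` is a hypothetical callback that returns whatever robots and IoT devices reported at a given step.

```python
State = dict[str, str]  # object name -> room name


def plan(state: State, goals: State, robots: list[str]) -> list[tuple[str, str, str]]:
    """Stand-in for the LLM planner: hand one pending goal to each robot."""
    pending = [(obj, room) for obj, room in goals.items() if state.get(obj) != room]
    return [(robot, obj, room) for robot, (obj, room) in zip(robots, pending)]


def run(state: State, goals: State, robots: list[str], sense, max_steps: int = 10) -> int:
    """Fold in fresh observations, replan, execute; return the steps used."""
    for step in range(max_steps):
        state.update(sense(step))          # new robot and IoT observations
        actions = plan(state, goals, robots)
        if not actions:                    # every goal is satisfied
            return step
        for _robot, obj, room in actions:  # robots act in parallel
            state[obj] = room              # toy execution: the move always succeeds
    return max_steps


def sense(step: int) -> State:
    # Hypothetical event: at step 1 a camera sees that someone moved the cup.
    return {"cup": "bedroom"} if step == 1 else {}


state = {"cup": "living_room", "book": "kitchen", "toy": "hall"}
goals = {"cup": "kitchen", "book": "study", "toy": "family_room"}
steps = run(state, goals, ["r1", "r2"], sense)
print(steps, state)
```

Because the cup is moved after the first round, the planner schedules it again on the next pass. That is the kind of replanning the loop is meant to allow.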

The Numbers Don't Lie: Efficiency and Reliability Boost

The researchers put IndoorR2X through its paces in a configurable simulation environment, evaluating it across various sensor layouts, robot teams, and task suites. The results are compelling:

- Improved Efficiency: IoT-augmented world modeling significantly boosted multi-robot efficiency. Robots spent less time exploring, leading to shorter task completion times and reduced total travel distance.

- Enhanced Reliability: The system demonstrated higher task success rates compared to

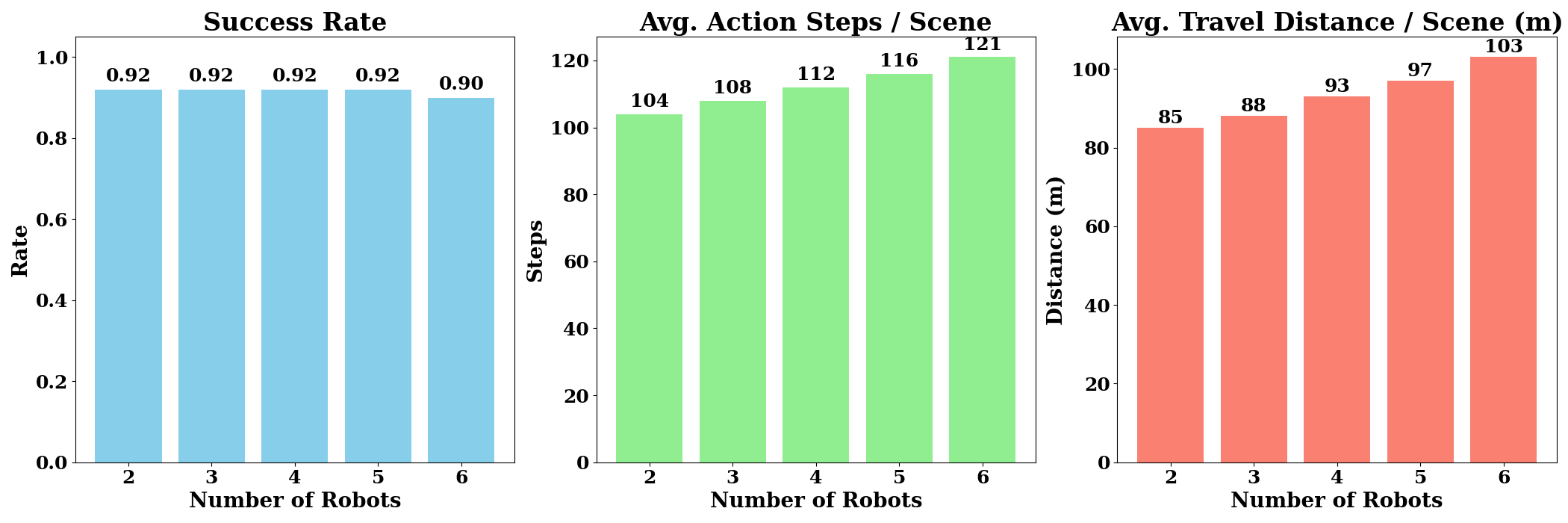

R2R-only approaches, especially in complex environments with partial observability. - Scalability: The framework showed good scalability, maintaining a stable success rate even with up to five robots. However, as expected, coordination overhead (total distance traveled) increased with larger fleet sizes, suggesting optimization opportunities for very large swarms.

Figure 3: Scalability analysis. Success rate (left) and efficiency metrics (center/right) as a function of team size (N=2 to 6). While success remains stable up to N=5, the coordination overhead (total distance traveled) increases with fleet size.


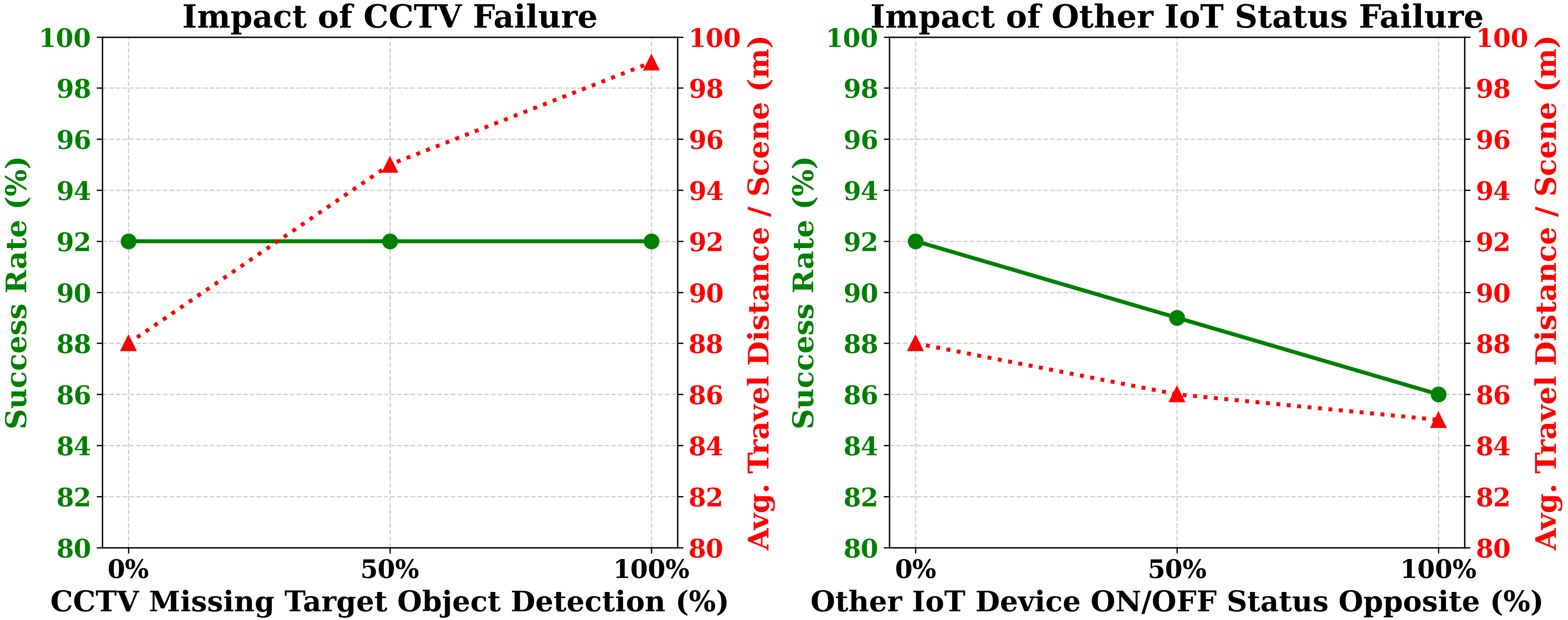

The paper also investigates robustness to "X" (IoT) failures. The system proved resilient to missing detections from IoT devices, maintaining a constant success rate, albeit with increased robot travel distance as they had to compensate. However, incorrect semantic status reports (false positives) from IoT sensors significantly impacted success, leading to unrecoverable planning errors. This highlights the critical importance of reliable IoT data.

Figure 4: Robustness to “X” failures. The system is resilient to missing detections (left), maintaining a constant success rate at the cost of increased travel. However, incorrect semantic status reports (right) significantly impact success, as false positives can lead to unrecoverable planning errors.


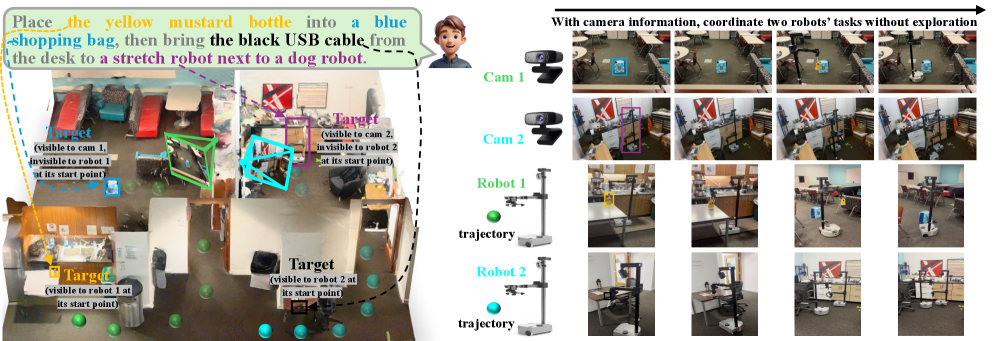

Qualitative demonstrations in simulation showed three robots and IoT sensors coordinating tasks like disposing of perishables and consolidating items in a family room. A real-world experiment with two Stretch robots and two web cameras also validated the concept in a more constrained, three-room setting, demonstrating out-of-sight visibility.

Figure 6: Illustration of our real-world experiment. Two mobile Stretch robots jointly perform tasks in a three-room environment, utilizing two web cameras for out-of-sight visibility. A third Stretch robot stands stationary by a robot dog as a target.


Why Your Drone Needs This Feature

This is big for indoor drones. Imagine a future where your mini drone isn't just a flying camera but an active participant in your smart environment.

- Automated Inspections: Drones could perform detailed structural inspections in commercial buildings, using static CCTV feeds to guide their path and focus on areas flagged by other sensors (e.g., thermal sensors detecting hot spots).

- Inventory Management: In warehouses, drones could work with fixed overhead cameras and RFID readers to quickly locate and verify inventory, minimizing flight time by knowing exactly where to look.

- Security & Surveillance: A drone could patrol a smart office, leveraging motion sensors and door contacts to prioritize areas of interest, providing a dynamic, mobile perspective to complement static security cameras.

- Smart Home Assistants: Your drone could proactively tidy up, find lost keys, or check if appliances are off, all by querying your existing smart home hub and coordinating with other devices.

- Reduced Drone Complexity: By offloading "seeing" to static IoT, drones can potentially carry lighter sensor payloads, extending flight time or allowing for more specialized tools.

This research moves drones from isolated, reactive machines to truly integrated, intelligent agents within our connected spaces. It's not about replacing, but augmenting.

Where We're Not Quite There Yet

While IndoorR2X presents a compelling vision, several practical hurdles remain.

First, the system's reliance on the accuracy of IoT semantic status reports is a significant vulnerability. As Figure 4 showed, false positives from a static sensor can completely derail the LLM's planning, leading to unrecoverable errors. Ensuring the integrity and reliability of diverse IoT data streams in real-world, dynamic environments is a monumental task.
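One possible mitigation, which the paper does not describe and is offered here only as an illustrative assumption, is to trust an IoT status only when several independent sources agree and to send a robot to check everything else. The function name `trusted_facts`, the `min_sources` threshold, and the device names below are all hypothetical.

```python
from collections import defaultdict


def trusted_facts(reports, min_sources=2):
    """Split reports into corroborated facts and entities needing a robot check.

    reports: iterable of (source, entity, value) tuples.
    """
    votes = defaultdict(lambda: defaultdict(set))
    for source, entity, value in reports:
        votes[entity][value].add(source)

    trusted, to_verify = {}, []
    for entity, by_value in votes.items():
        value, sources = max(by_value.items(), key=lambda kv: len(kv[1]))
        # Trust only unanimous values backed by enough distinct sources.
        if len(by_value) == 1 and len(sources) >= min_sources:
            trusted[entity] = value
        else:
            to_verify.append(entity)
    return trusted, sorted(to_verify)


reports = [
    ("cctv_kitchen", "stove", "off"),
    ("smart_plug_3", "stove", "off"),
    ("cctv_living_room", "tv", "on"),   # single source: not corroborated
    ("cam_hall", "door", "open"),       # conflicts with the lock's report
    ("lock_1", "door", "closed"),
]
trusted, to_verify = trusted_facts(reports)
print(trusted, to_verify)
```

The trade-off mirrors Figure 4: treating an uncertain report as missing costs extra travel, but it avoids planning around a false positive.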

Second, the computational demands of LLM-driven planning are substantial. Most of the paper's evaluation runs in simulation, and the planner sits in a central coordination hub; serving a fleet of drones with low latency would require powerful edge computing or a robust cloud connection. This is where work like "TinyML Enhances CubeSat Mission Capabilities" becomes relevant, highlighting the need for efficient AI on resource-constrained devices to make sophisticated drone autonomy practical.

Third, the real-world experiments in the paper, while illustrative, are limited to two Stretch robots in a controlled, three-room environment. Scaling this to complex, multi-floor buildings with diverse IoT ecosystems and dozens of mobile robots introduces complexities in sensor calibration, data synchronization, and privacy concerns that are not fully explored.

Finally, while the LLM plans actions, the paper doesn't explicitly detail how the robots learn from their own planning failures or refine their behaviors. Integrating concepts from "The Robot's Inner Critic: Self-Refinement of Social Behaviors through VLM-based Replanning" would add another layer of robustness, allowing the system to learn and adapt beyond just reacting to new sensor data.

Can You Build This in Your Garage?

For the ambitious hobbyist or academic lab, the core IndoorR2X simulation framework provides a solid starting point. The paper states it offers "configurable simulation environments, sensor layouts, robot teams, and task suites," which is excellent for systematic evaluation. While the full LLM integration might require significant computational resources (such as API access to GPT-4 or a similarly capable model), one could experiment with simpler language models or rule-based systems to emulate the planning logic.
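As one example of a rule-based stand-in for the planner, the sketch below greedily hands each task to the nearest robot that is still free. This is a deliberately simple heuristic, not the paper's LLM planner, and the robot names, task names, and coordinates are made up for illustration.

```python
import math


def assign_tasks(robots, tasks):
    """Greedily give each task to the closest robot that is still free.

    robots: dict of robot name -> (x, y) position.
    tasks:  dict of task name -> (x, y) position.
    Returns a dict of robot -> task; leftover tasks wait for the next round.
    """
    free = dict(robots)
    plan = {}
    for task, pos in tasks.items():
        if not free:
            break
        robot = min(free, key=lambda r: math.dist(free[r], pos))
        plan[robot] = task
        del free[robot]
    return plan


robots = {"r1": (0, 0), "r2": (5, 5)}
tasks = {"trash_perishables": (4, 4), "tidy_family_room": (1, 0)}
plan = assign_tasks(robots, tasks)
print(plan)
```

A greedy rule like this ignores task ordering and dependencies, which is exactly the kind of reasoning the LLM planner is meant to supply, so it works best as a baseline to compare against.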

Hardware-wise, replicating the real-world experiment would involve mobile robots like Stretch (expensive ground-based mobile manipulators, not mini drones) and standard web cameras. The IoT aspect could be simulated with an MQTT broker and simple Python scripts reporting "sensor data." The challenge for a drone hobbyist lies in porting the concepts from ground robots to flight platforms, which means solving robust localization and navigation for drones in complex indoor environments, especially when relying on external camera feeds. Open-source robotics middleware such as ROS would help integrate the different sensor modalities and robot control.
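A simulated sensor script could look roughly like the sketch below. To keep it runnable without a broker, it uses a tiny in-process `FakeBroker` class (an assumption for this example, supporting exact topic matches only) in place of a real MQTT client; the topic names and JSON fields are likewise hypothetical.

```python
import json
import time
from collections import defaultdict


class FakeBroker:
    """In-process stand-in for an MQTT broker (exact topic match only)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, payload):
        for callback in self._subscribers[topic]:
            callback(topic, payload)


def sensor_message(device_id, status, now=None):
    """Encode one simulated sensor reading as a JSON string."""
    return json.dumps({
        "device": device_id,
        "status": status,
        "ts": time.time() if now is None else now,
    })


received = {}


def on_message(topic, payload):
    """Hub-side handler: decode the reading and store the latest status."""
    data = json.loads(payload)
    received[data["device"]] = data["status"]


broker = FakeBroker()
broker.subscribe("home/livingroom/tv", on_message)
broker.publish("home/livingroom/tv", sensor_message("tv", "on", now=0.0))
broker.publish("home/kitchen/stove", sensor_message("stove", "off", now=1.0))  # nobody listening
print(received)
```

With a real broker, the `subscribe` and `publish` calls would be replaced by those of an MQTT client library, while the JSON payload format could stay the same.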

The Wider Robotics Ecosystem

This work on R2X coordination for indoor robots naturally connects to broader trends in autonomous systems. The challenge of scaling coordination, particularly for large numbers of agents, is a hot topic. For instance, "MeanFlow Meets Control: Scaling Sampled-Data Control for Swarms" by Dong et al. explores efficient control for large-scale swarms, a necessary next step if IndoorR2X were to manage dozens or hundreds of mini drones. Understanding how to manage control updates intermittently, as that paper describes, would be crucial for efficient R2X deployment with many robots.

Furthermore, enabling robots to perform complex reasoning, like the LLM-driven planning in IndoorR2X, increasingly relies on advanced AI models. "Can Large Multimodal Models Inspect Buildings? A Hierarchical Benchmark for Structural Pathology Reasoning" showcases how LMMs can enable drones to handle high-level reasoning tasks such as structural analysis, demonstrating the wider applicability of these powerful models beyond just navigation. It underlines that drones are becoming platforms for sophisticated AI, not just data collectors.

Finally, to make this level of autonomy truly robust, robots need more than just planning; they need to learn and adapt. "The Robot's Inner Critic: Self-Refinement of Social Behaviors through VLM-based Replanning" by Lim et al. proposes a system where robots critique and replan their own actions. Integrating such self-refinement into an IndoorR2X system would make drone teams more resilient to unexpected events or inaccurate IoT reports, pushing them closer to true autonomy.

The ability for drones to break free from their isolated perception bubbles and integrate with the fabric of our smart environments promises a future of unprecedented autonomy and capability.

Paper Details

Title: IndoorR2X: Indoor Robot-to-Everything Coordination with LLM-Driven Planning

Authors: Fan Yang, Soumya Teotia, Shaunak A. Mehta, Prajit KrisshnaKumar, Quanting Xie, Jun Liu, Yueqi Song, Li Wenkai, Atsunori Moteki, Kanji Uchino, Yonatan Bisk

Published: March 2026

arXiv: 2603.20182

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.