Matryoshka Gaussian Splatting: Adaptive 3D Vision for Smarter Drones

A new training framework, Matryoshka Gaussian Splatting (MGS), enables continuous level of detail for 3D Gaussian Splatting without sacrificing full-fidelity rendering quality. This means drones can dynamically adjust their 3D scene understanding on the fly.

TL;DR: Matryoshka Gaussian Splatting (MGS) provides continuous level of detail (LoD) for 3D scenes rendered with Gaussian Splatting. Crucially, it does this without compromising the quality of the full, high-fidelity model, allowing drones to adapt their visual processing from sparse outlines to detailed reconstructions based on immediate mission needs and available compute resources.

Your Drone's Next Vision Upgrade Just Dropped

For drone operators, builders, and engineers, understanding the world in 3D is paramount. Whether you're mapping a construction site, navigating a complex indoor environment, or performing a detailed inspection, the quality and speed of your drone's 3D perception directly impact mission success. A new paper, "Matryoshka Gaussian Splatting" (MGS), offers a notable advancement, providing a way for drones to achieve adaptive 3D vision. This means they can scale detail from a rough sketch to photorealistic accuracy, all from a single underlying model.

The LoD Conundrum: Why Flexibility Matters

Traditional 3D rendering approaches often present a tough choice. You can either generate a high-fidelity 3D model that's rich in detail but computationally expensive and slow to render, or a simplified, lower-fidelity model that's fast but sacrifices crucial visual information. For drones, this creates a constant tension between accuracy and real-time performance, constrained by limited onboard processing power and battery life. Existing level-of-detail (LoD) methods for 3D Gaussian Splatting (3DGS) have tried to bridge this gap, but haven't quite hit the mark.

Some offer discrete LoD steps, meaning you jump from, say, 10% detail to 50% detail with nothing in between. That lacks the granular control dynamic scenarios need. Others claim continuous LoD but come with a significant catch: noticeable quality degradation even at full capacity. The flexibility costs you your best possible visual output, a compromise no one wants when mission-critical decisions hinge on visual data. MGS addresses this directly, promising true continuous LoD without sacrificing peak quality.

How Matryoshka Gaussians Get Smarter

Essentially, Matryoshka Gaussian Splatting introduces a clever training framework for standard 3DGS pipelines. The brilliance here isn't a new architecture, but a more intelligent training approach. MGS learns a single, ordered set of Gaussians. Think of it like a set of Russian nesting dolls (Matryoshka dolls) – each doll contains a smaller, equally coherent doll within it. Here, each 'prefix' of Gaussians (the first k splats) forms a complete, if less detailed, reconstruction of the scene.

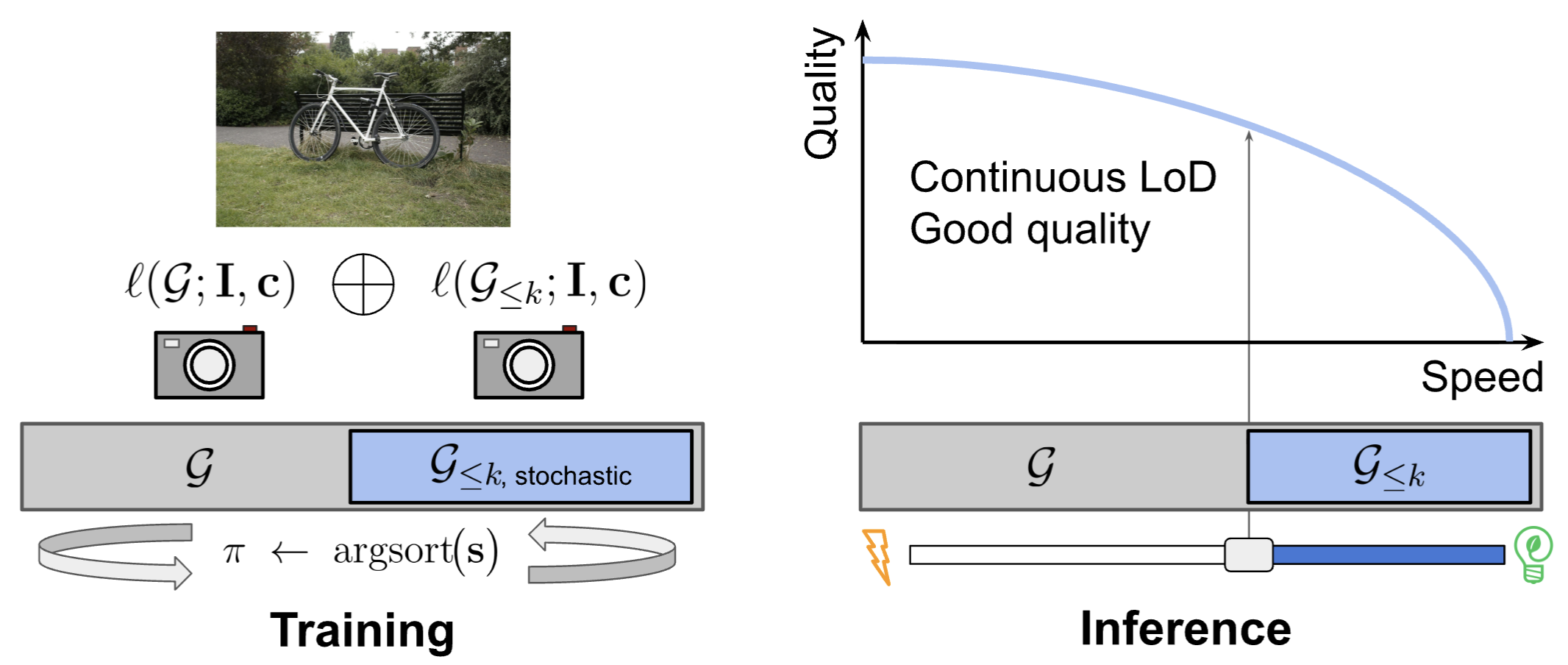

The key mechanism is "stochastic budget training." In each training iteration, the system randomly samples a 'splat budget', that is, how many Gaussians it should use. It then optimizes both that randomly chosen prefix and the full set of Gaussians simultaneously. This dual optimization requires only two forward passes per iteration, and it ensures that even a small subset of Gaussians can render a coherent scene while the full set still achieves peak quality. The ordering of the Gaussians is critical: more important (e.g., higher opacity) Gaussians are placed earlier in the sequence, as explored in the paper's ablations (Figure 6).
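To make the training idea concrete, here is a minimal sketch of one stochastic-budget step in plain Python. It is an illustration under stated assumptions, not the authors' code: `sample_budget`, `matryoshka_loss`, and `toy_render_loss` are hypothetical names, the uniform sampling range is assumed, and the 5:5 weighting is taken from the Figure 7 caption. A real pipeline would replace the toy loss with a differentiable 3DGS renderer and an image loss.

```python
import random

def sample_budget(num_gaussians, min_ratio=0.01, rng=random):
    """Draw a random splat budget k: how many leading Gaussians to keep."""
    ratio = rng.uniform(min_ratio, 1.0)  # assumption: uniform over [min_ratio, 1]
    return max(1, min(num_gaussians, round(ratio * num_gaussians)))

def matryoshka_loss(gaussians, render_loss, k, w_prefix=0.5, w_full=0.5):
    """Weighted sum of the first-k prefix loss and the full-set loss."""
    prefix_loss = render_loss(gaussians[:k])  # forward pass 1: prefix only
    full_loss = render_loss(gaussians)        # forward pass 2: all Gaussians
    return w_prefix * prefix_loss + w_full * full_loss

# Toy stand-in for "render and compare to ground truth": each "Gaussian" is
# just a number, and the loss is the squared error of their sum vs. a target.
def toy_render_loss(gaussians, target=10.0):
    return (sum(gaussians) - target) ** 2

gaussians = [4.0, 3.0, 2.0, 1.0]          # assumed already ordered by importance
k = sample_budget(len(gaussians), rng=random.Random(0))
loss = matryoshka_loss(gaussians, toy_render_loss, k)
```

Because the prefix term is evaluated at a different random `k` each iteration, every prefix length gets optimized over the course of training, while the full-set term keeps the complete model from degrading.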

Figure 2: Framework of Matryoshka Gaussian Splatting.

With this approach, rendering the first 10% of Gaussians gives you a usable, albeit lower-fidelity, scene. Render 50%, and it's much clearer. Render 100%, and you get the best possible quality, matching that of the backbone 3DGS model. This continuous scaling is what makes MGS well suited to adaptive drone applications.
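At render time, choosing a level of detail then reduces to slicing the ordered list. The sketch below assumes the Gaussians are already stored most-important-first; `prefix_for_ratio` is a hypothetical helper name, and integers stand in for real Gaussian parameters.

```python
def prefix_for_ratio(ordered_gaussians, ratio):
    """Return roughly the first ratio * N entries of an importance-ordered list."""
    if not 0.0 < ratio <= 1.0:
        raise ValueError("ratio must be in (0, 1]")
    k = max(1, round(ratio * len(ordered_gaussians)))  # always keep at least one
    return ordered_gaussians[:k]

# Toy scene: 10 Gaussians identified by index, most important first.
scene = list(range(10))
coarse = prefix_for_ratio(scene, 0.10)  # 1 Gaussian
medium = prefix_for_ratio(scene, 0.50)  # 5 Gaussians
full = prefix_for_ratio(scene, 1.00)    # all 10 Gaussians
```

Each smaller prefix is contained in every larger one, which is the nesting-doll property: moving to a higher level of detail only appends Gaussians and never swaps out ones already in use.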

![Figure 1](https://qawnehlcoileybzacnvr.supabase.co/storage/v1/object/public/article-covers/figures/matryoshka-gaussian-splatting-adaptive-3d-vision-for-smarter-drones-mn01hoee/fig-0.jpg)

Figure 1: Continuous LoD need not sacrifice full-capacity quality to enable budget trade-off. Our method, MGS (top), learns an ordered set of Gaussian primitives whose prefixes yield coherent reconstructions at any splat budget. Compared to CLoD-3DGS [26] (mid) and CLoD-GS [4] (bot), MGS achieves the highest fidelity at every operating point with quality degrading gracefully under budget reduction. Scene: Garden [1].

Performance That Delivers

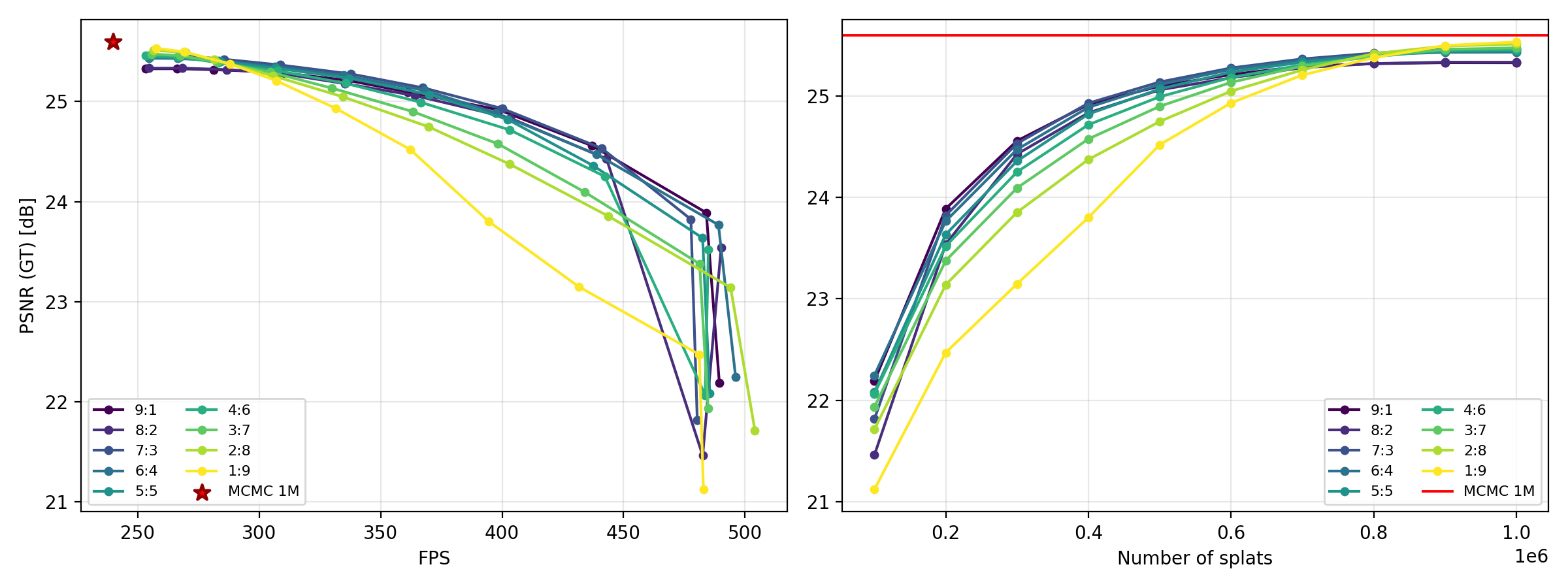

The researchers evaluated MGS across four benchmarks and against six baselines. The key findings:

- Uncompromised Full-Capacity Quality: MGS matches the peak rendering quality of its backbone 3DGS model. This is critical – you're not sacrificing your best output for the sake of flexibility.

- Superior Quality-Budget Trade-off: At every operating point (from 1% to 100% of splats), MGS consistently delivers higher visual quality (measured by PSNR) compared to other continuous LoD baselines. For example, at highly constrained budgets (5-10%), MGS maintained coherent reconstructions with PSNRs of 21–28 dB, while baselines suffered severe artifacts and quality collapse (11–17 dB).

- Wider FPS Range: MGS spans a much wider Frames Per Second (FPS) range than any baseline, indicating its ability to adapt to extremely diverse computational budgets.
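For readers less familiar with the PSNR figures quoted in the list above, PSNR is defined as 10 · log10(MAX² / MSE), where MSE is the mean squared error between the rendered and reference images. The sketch below computes it for flat lists of pixel values in [0, 1]; the `psnr` helper and the sample values are illustrative only.

```python
import math

def psnr(reference, rendered, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel lists."""
    if len(reference) != len(rendered):
        raise ValueError("images must have the same number of pixels")
    mse = sum((a - b) ** 2 for a, b in zip(reference, rendered)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)

reference = [0.5, 0.5, 0.5, 0.5]
rendered = [0.6, 0.6, 0.6, 0.6]    # every pixel off by 0.1, so MSE is about 0.01
score = psnr(reference, rendered)  # about 20 dB
```

Since the scale is logarithmic, a 10 dB gap corresponds to a tenfold difference in mean squared error, which is why the low-budget gap between MGS and the baselines is visually dramatic.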

![Figure 3](https://qawnehlcoileybzacnvr.supabase.co/storage/v1/object/public/article-covers/figures/matryoshka-gaussian-splatting-adaptive-3d-vision-for-smarter-drones-mn01hoee/fig-2.png)

Figure 3: Quality-budget trade-off on Mip-NeRF 360 [1], averaged across all nine scenes. Left: Quality (Q̄, Eq. 9) vs. FPS. Right: Quality vs. number of Gaussian splats. Curves trace continuous LoD models across prefix ratios 1%–100%, and trace discrete LoD models at their recommended operating points respectively. MGS (ours, dark blue) achieves the highest quality at every speed and splat budget, while spanning a much wider FPS range than any baseline.

![Figure 4](https://qawnehlcoileybzacnvr.supabase.co/storage/v1/object/public/article-covers/figures/matryoshka-gaussian-splatting-adaptive-3d-vision-for-smarter-drones-mn01hoee/fig-3.jpg)

Figure 4: Qualitative comparison of continuous LoD methods across four benchmarks. Renderings are shown at 5%, 10%, 30%, 60%, and 100% of the full splat budget. We compare MGS with CLoD-3DGS [26] and CLoD-GS [4]. Under highly constrained budgets (5–10%), MGS maintains coherent reconstructions with PSNR of 21–28 dB, while both baselines suffer from severe artifacts and quality collapse (11–17 dB).

Why This Matters for Your Drone Operations

This development holds significant implications for drone builders and operators. MGS reshapes how drones can process and understand 3D environments. Here's why:

- Adaptive Mission Planning: A drone mapping a large area might initially use a low-fidelity LoD for rapid reconnaissance, identifying points of interest quickly. Once a specific area needs detailed inspection, it can seamlessly ramp up to full fidelity for precise data collection, all within the same model.

- Resource Management: Onboard compute resources and battery life are always at a premium. MGS lets a drone dynamically adjust its visual processing load. If power is low or a fast response is needed (e.g., obstacle avoidance), it can render at a lower splat budget, saving CPU/GPU cycles. When hovering for a high-resolution photo, it can crank up the detail.

- Real-time SLAM and Navigation: For Simultaneous Localization and Mapping (SLAM) or autonomous navigation in complex environments, a drone needs fast, reliable scene understanding. MGS enables dynamic trade-offs between speed and accuracy, potentially leading to more robust, efficient navigation. For FPV racing, a low-latency, low-fidelity rendering could provide just enough information to navigate at speed, while a high-fidelity rendering could be used for post-race analysis or detailed environment reconstruction.

- Remote Operations & Data Streaming: When streaming 3D data back to a ground station, MGS could enable the drone to transmit only the necessary level of detail, optimizing bandwidth without sacrificing the option for full fidelity when needed.
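As a rough illustration of the resource-management idea in the list above, a drone could adjust its splat budget with a simple feedback rule driven by measured frame time. This is a hypothetical sketch: the paper, as summarized here, does not prescribe a runtime policy, and `update_splat_ratio`, its gain, and the timing values are all assumptions.

```python
def update_splat_ratio(ratio, frame_ms, target_ms, min_ratio=0.01, gain=0.5):
    """Nudge the prefix ratio toward a target frame time (proportional rule)."""
    error = (target_ms - frame_ms) / target_ms  # > 0 means spare time per frame
    new_ratio = ratio * (1.0 + gain * error)    # shrink when slow, grow when fast
    return max(min_ratio, min(1.0, new_ratio))  # clamp to [min_ratio, 1.0]

# Frames are taking 40 ms against a 20 ms target: cut detail.
slower = update_splat_ratio(0.8, frame_ms=40.0, target_ms=20.0)  # 0.4
# Frames are taking 10 ms against a 20 ms target: add detail, capped at 1.0.
faster = update_splat_ratio(0.8, frame_ms=10.0, target_ms=20.0)  # 1.0
```

The resulting ratio would then select how many leading Gaussians to render on the next frame. A production controller would likely also smooth the frame-time measurement to avoid visible flicker between detail levels.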

What's Still on the Horizon?

While Matryoshka Gaussian Splatting marks an impressive stride, it has practical limitations to keep in mind:

- Training Time: While the inference is flexible, the stochastic budget training method does add to the overall training time of the 3DGS model. This isn't a runtime limitation, but it does mean generating these adaptable models takes longer upfront.

- Dynamic Scenes: Like most 3DGS methods, MGS is primarily designed for static scenes. Reconstructing and rendering highly dynamic environments with moving objects remains a significant challenge, one that MGS, in its current form, doesn't directly tackle. For drones operating in bustling urban or industrial settings, this is a key area for future work.

- Onboard Inference Hardware: While MGS optimizes rendering, real-time, high-fidelity 3D Gaussian Splatting still demands significant GPU power. Integrating this into a small, power-constrained drone for complex, high-resolution tasks might require specialized edge AI accelerators or highly optimized custom hardware. The paper demonstrates efficiency on desktop GPUs, but scaling to drone-grade hardware for complex, real-time tasks remains a substantial hurdle.

- Model Size and Storage: While rendering efficiency improves, the full MGS model still contains all the Gaussians for maximum fidelity. For drones with very limited storage, the size of these comprehensive models could be a factor, since an uncompressed 3DGS scene with millions of Gaussians can occupy hundreds of megabytes.
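To put the storage point in numbers, here is a back-of-the-envelope estimate. It assumes the common uncompressed 3DGS layout of 59 float32 parameters per Gaussian (3 position, 3 scale, 4 rotation, 1 opacity, 48 spherical-harmonic color coefficients). The one-million-Gaussian scene is a hypothetical example, not a figure from the paper, and `prefix_size_mb` is an illustrative helper.

```python
# Assumed uncompressed layout: position, scale, rotation, opacity, SH color.
FLOATS_PER_GAUSSIAN = 3 + 3 + 4 + 1 + 48
BYTES_PER_FLOAT = 4  # float32

def prefix_size_mb(num_gaussians, ratio=1.0):
    """Approximate size in MB (10**6 bytes) of the leading `ratio` of a model."""
    k = max(1, round(num_gaussians * ratio))
    return k * FLOATS_PER_GAUSSIAN * BYTES_PER_FLOAT / 1e6

full_mb = prefix_size_mb(1_000_000)         # 236.0 MB for the whole model
coarse_mb = prefix_size_mb(1_000_000, 0.1)  # about 23.6 MB for a 10% prefix
```

The full model still has to live somewhere if full fidelity is ever needed, but only the chosen prefix has to be loaded into GPU memory or sent over a link at a given moment.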

Figure 7: Ablations on Prefix/Full Loss Weight distribution. While prefix-heavy or full-heavy weighting can marginally improve their respective endpoints, a 6:4 ratio provides the best area under the curve. For the sake of simplicity and balanced performance, we utilise a 5:5 ratio for our MGS approach and for all other ablation experiments.

Can You Build With This?

For hobbyists and builders, the feasibility of directly implementing MGS today depends on your existing expertise with 3D Gaussian Splatting. The method is a training framework built on 3DGS, which already has open-source implementations. If you're already familiar with training 3DGS models, adapting to MGS's stochastic budget training might be within reach. You'd need a capable GPU for training, similar to what's required for standard 3DGS. For real-time inference on a drone, you'd likely need a drone equipped with a powerful NVIDIA Jetson module or similar embedded GPU. Crucially, the research indicates no architectural modifications are needed, which lowers the barrier to adoption compared to entirely new hardware or software stacks. The authors have stated their intent to release code and pre-trained models, which would greatly accelerate community adoption.

The Broader 3D Ecosystem

This work fits neatly into the broader 3D ecosystem. For instance, DreamPartGen focuses on generating 3D objects as compositions of meaningful parts with semantic grounding. This could help supply the high-quality, semantically rich 3D content that an MGS-enabled drone might then efficiently render and interact with, using adaptive fidelity. Similarly, Under One Sun introduces Multi-Object Generative Perception for inferring radiometric constituents like reflectance and illumination from a single image. Perception data of this kind could complement MGS's adaptable 3D rendering, enhancing the drone's understanding of material properties and lighting conditions. Finally, Cubic Discrete Diffusion explores efficient representation of visual information using high-dimensional tokens. If MGS provides efficient 3D rendering, then such methods could offer complementary ways to efficiently generate or encode the underlying visual data or textures, potentially enabling even more compact storage or transmission for drone operations.

Matryoshka Gaussian Splatting represents a solid step toward truly adaptive, efficient, and high-quality 3D vision systems for drones, moving us closer to fully autonomous and intelligent aerial platforms.

Paper Details

Title: Matryoshka Gaussian Splatting

Authors: Zhilin Guo, Boqiao Zhang, Hakan Aktas, Kyle Fogarty, Jeffrey Hu, Nursena Koprucu Aslan, Wenzhao Li, Canberk Baykal, Albert Miao, Josef Bengtson, Chenliang Zhou, Weihao Xia, Cristina Nader Vasconcelos, Cengiz Oztireli

Published: Preprint (March 2026)

arXiv: 2603.19234

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.