Metis: Smarter Drones That Think Before They Act

New research introduces `HDPO` and `Metis`, an AI model that intelligently decides when to use external tools versus internal knowledge, drastically reducing unnecessary computations while boosting accuracy for autonomous systems.

TL;DR: New research presents HDPO, a framework that teaches AI models to choose wisely between internal knowledge and external tools. The resulting model, Metis, reduces tool invocations by orders of magnitude while simultaneously elevating reasoning accuracy, promising faster, more reliable decision-making for drones.

Our drones are getting smarter, capable of increasingly complex autonomous operations. But true autonomy means more than just flying a programmed path or processing data. It means knowing when to process data locally, when to offload to the cloud, when to trust internal sensors, and when to seek external information. This paper tackles exactly that: giving AI agents, and by extension, our drones, the meta-cognition to make these "wise" choices.

The Cost of Blind Action

Current agentic multimodal models, the sophisticated brains behind advanced drone autonomy, suffer from a fundamental flaw: they're often tool-happy. They reflexively invoke external tools (like code interpreters or search engines) even when the answer is clear from their internal visual context. Blind tool invocation isn't just wasteful; it introduces severe latency, consumes extra power, and injects noise that can derail accurate reasoning. For a drone, this translates to slower response times, shorter flight durations due to increased power draw, and potentially critical decision errors in dynamic environments.

Existing attempts to fix this problem by penalizing tool use with a single reward signal fail. These methods either aggressively suppress essential tool use, or the penalty is too mild and gets lost in the noise of the accuracy reward during optimization. It’s an irreconcilable dilemma with no effective middle ground, leaving us with either under-utilized tools or over-utilized, inefficient ones.
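The dilemma can be made concrete with a toy calculation. The sketch below is illustrative only: the reward form (correctness minus a per-call penalty), the group normalisation, and the four hypothetical rollouts are assumptions for the sake of the example, not the formulation used by any specific prior method.

```python
import statistics


def coupled_advantages(rollouts, penalty):
    """Group-normalised advantages under a single coupled reward.

    rollouts: list of (correct: bool, tool_calls: int) for one prompt.
    Assumed reward: correctness - penalty * tool_calls.
    """
    rewards = [float(correct) - penalty * calls for correct, calls in rollouts]
    mean = statistics.mean(rewards)
    std = statistics.pstdev(rewards) or 1.0  # avoid division by zero
    return [(r - mean) / std for r in rewards]


# Hypothetical group of four rollouts for the same prompt.
rollouts = [
    (True, 0),   # correct, no tools
    (True, 4),   # correct, four tool calls
    (False, 0),  # wrong, no tools
    (False, 4),  # wrong, four tool calls
]

mild = coupled_advantages(rollouts, penalty=0.01)
harsh = coupled_advantages(rollouts, penalty=0.5)

# Mild penalty: the gap between the two correct rollouts is tiny
# compared with the gap between correct and wrong rollouts.
print("mild :", [round(a, 2) for a in mild])

# Harsh penalty: the correct rollout that used tools now scores
# below the wrong rollout that used none.
print("harsh:", [round(a, 2) for a in harsh])
```

With the mild penalty the efficiency signal is a small fraction of the accuracy signal; with the harsh one, a correct answer that needed tools is ranked below a wrong answer that skipped them. Both failure modes come from forcing two objectives through one number.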

Decoupling Wisdom and Accuracy

The core innovation here is HDPO (Hybrid Decoupled Policy Optimization), which redefines how AI agents learn to be efficient. Instead of lumping accuracy and tool-use efficiency into one competing reward, HDPO separates them. It creates two distinct optimization channels: one for maximizing task correctness and another for enforcing execution economy only within accurate trajectories. The agent first learns to solve the task correctly, then learns to do it efficiently. The "conditional advantage estimation" ensures that efficiency is only rewarded when the agent is already performing accurately, preventing the common dilemma where mild penalties for tool use are overshadowed by accuracy rewards.

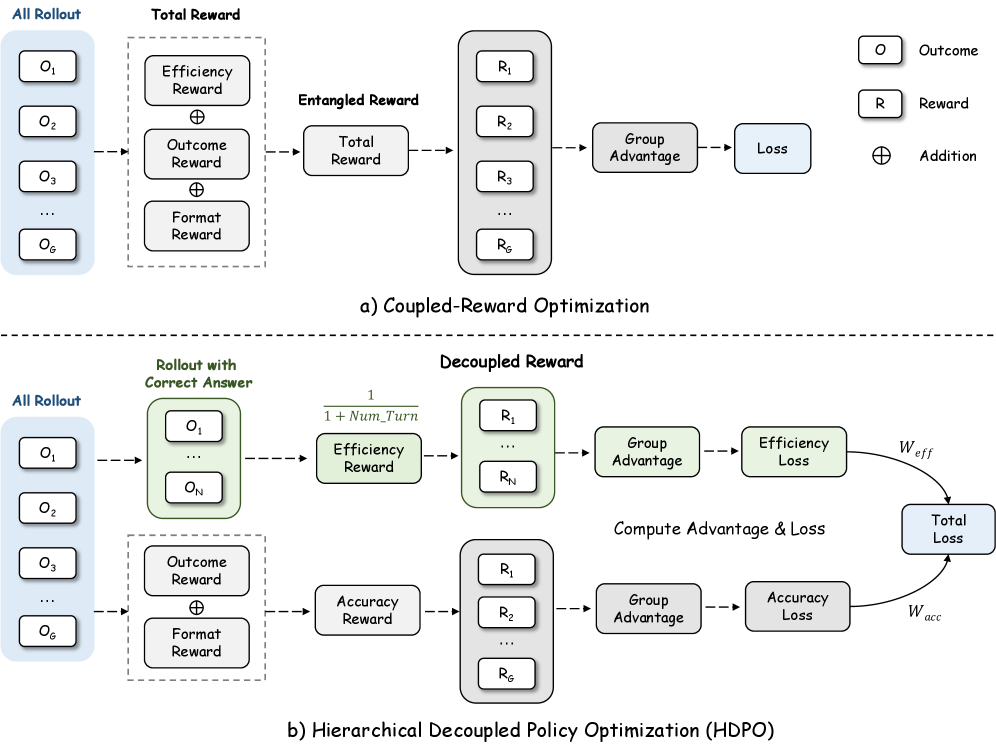

Figure 2 illustrates this decoupling perfectly:

Figure 2: Comparison between coupled-reward optimization and HDPO. Existing methods entangle accuracy and efficiency into a single reward signal, while HDPO decouples them into separate branches and combines them only at the final loss, enabling more strategic tool use.
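As a rough illustration of the decoupling idea, here is a minimal sketch. This article does not reproduce the paper's equations, so the details below (group normalisation, the `efficiency_weight` parameter, negative tool-call count as the efficiency score) are assumptions chosen to show the shape of the idea rather than the authors' implementation.

```python
import statistics


def _normalise(values):
    """Zero-mean, unit-variance scaling; all zeros if there is no spread."""
    mean = statistics.mean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]


def decoupled_advantages(rollouts, efficiency_weight=0.5):
    """rollouts: list of (correct: bool, tool_calls: int) for one prompt."""
    # Accuracy channel: every rollout is compared on correctness alone.
    acc_adv = _normalise([float(correct) for correct, _ in rollouts])

    # Efficiency channel: only correct rollouts are compared with each
    # other (fewer tool calls is better). Wrong rollouts get no signal.
    correct_idx = [i for i, (correct, _) in enumerate(rollouts) if correct]
    eff_adv = [0.0] * len(rollouts)
    if len(correct_idx) >= 2:
        scores = _normalise([-rollouts[i][1] for i in correct_idx])
        for i, score in zip(correct_idx, scores):
            eff_adv[i] = score

    # The two channels are combined only at this final step.
    return [a + efficiency_weight * e for a, e in zip(acc_adv, eff_adv)]


# Hypothetical rollouts: (correct, tool_calls).
group = [(True, 0), (True, 4), (False, 0), (False, 4)]
advantages = decoupled_advantages(group)
print(advantages)
```

In this toy setup the correct, tool-free rollout ranks highest, the correct rollout that used tools still ranks above both wrong ones, and the wrong rollouts are not rewarded for skipping tools. That ordering is the behaviour the conditional efficiency channel is meant to produce.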

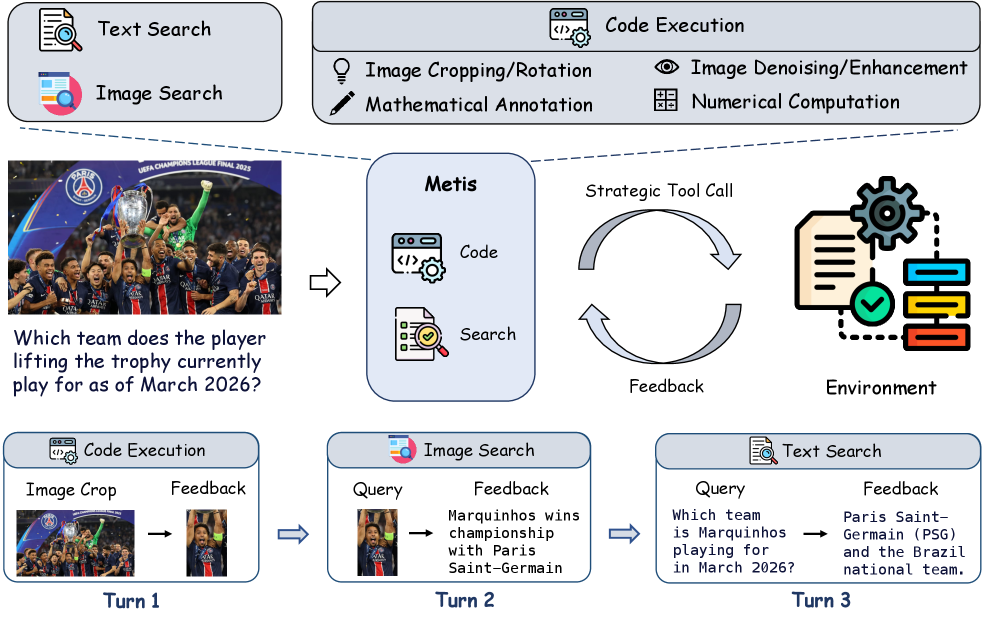

The resulting model, Metis, is designed as a strategic multimodal reasoning agent. As shown in Figure 3, Metis selectively invokes tools like code execution, text search, and image search during multi-turn reasoning. It doesn't just call tools by default; it adaptively determines when a tool will genuinely provide useful evidence and when it can reason directly from the available context.

Figure 3: Overview of Metis. A strategic multimodal reasoning agent that selectively invokes code execution, text search, and image search tools during multi-turn reasoning. Rather than invoking tools by default, Metis adaptively determines when tool interactions provide genuinely useful evidence, and otherwise reasons directly from the available context to obtain the final answer.

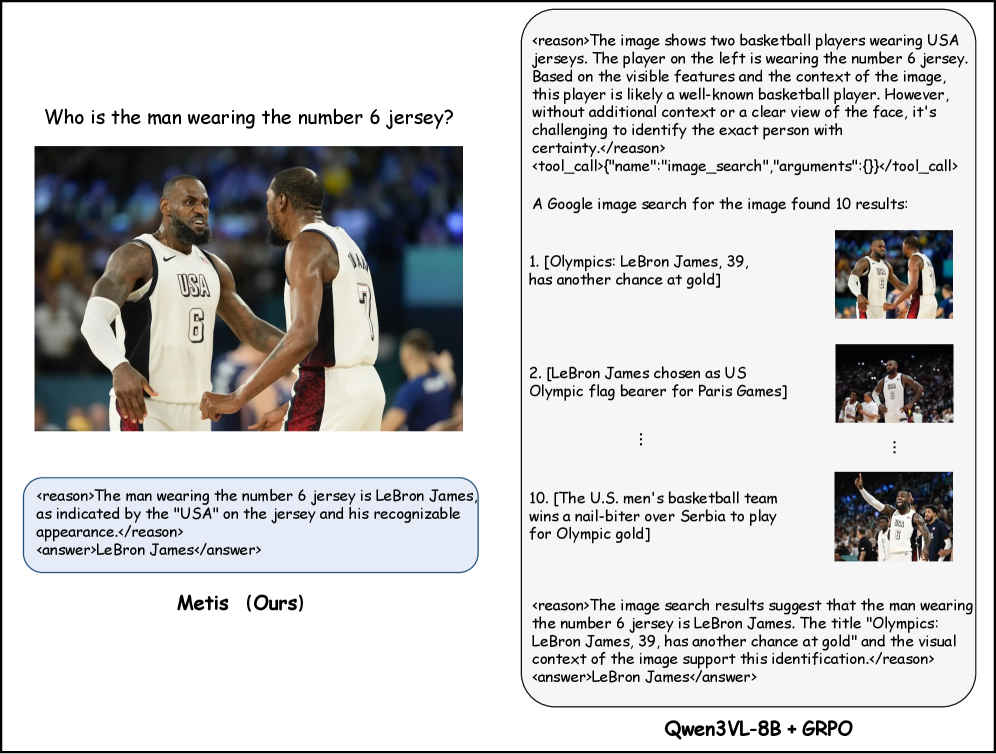

This cognitive curriculum is key. For example, Figure 4 demonstrates Metis's ability to answer a query directly from visual context without external tools, showcasing its meta-cognitive restraint.

Figure 4: Direct reasoning from visual context. The query can be resolved through visual understanding and prior knowledge alone. Metis abstains from tool invocation and answers directly, exemplifying the meta-cognitive restraint instilled by HDPO.

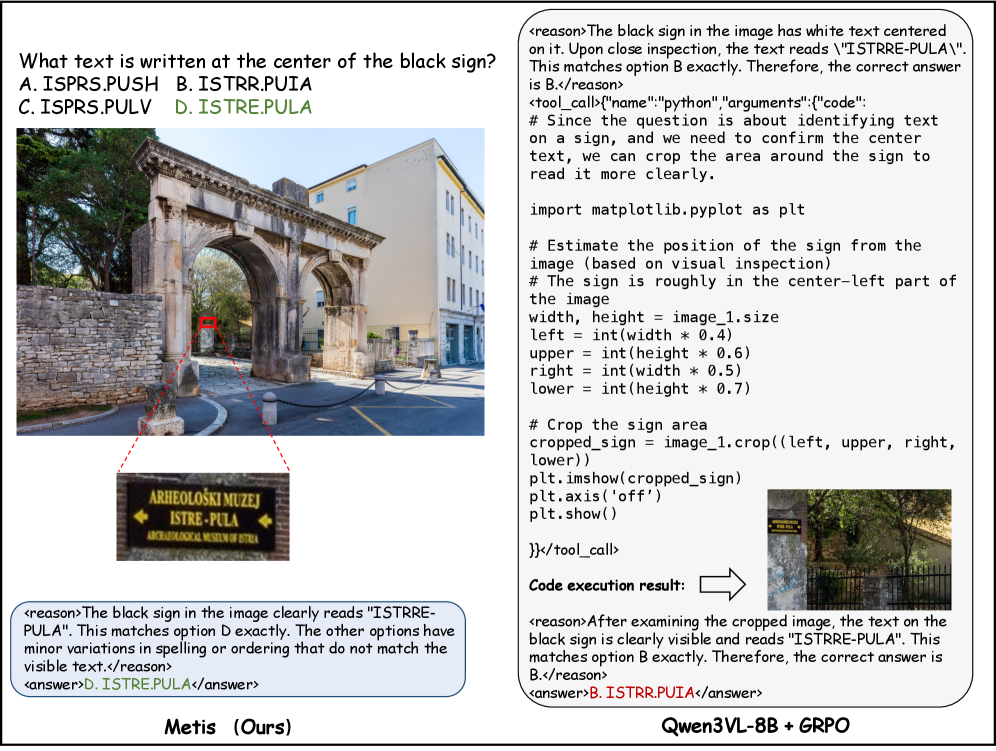

Figure 6 shows the same restraint: Metis accurately extracts information from legible on-screen text in an image without resorting to computational tools, avoiding unnecessary processing overhead.

Figure 6: Direct reasoning from visual inspection. The on-screen text is clearly legible from the raw image. Metis correctly extracts the answer without invoking code execution or search tools, avoiding unnecessary computational overhead.

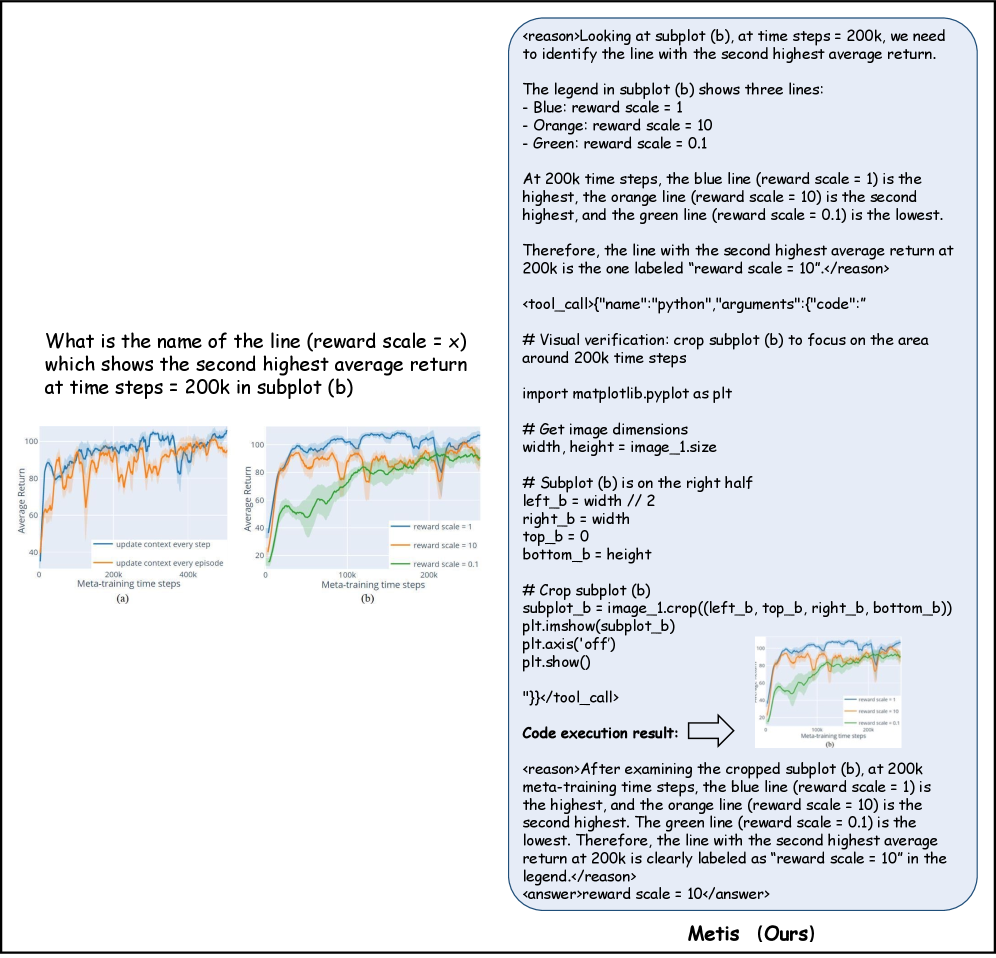

Conversely, when a task genuinely requires external processing, Metis knows when to engage. Figure 5 shows Metis invoking code execution for fine-grained visual analysis when a specific subplot region needs enlargement for accurate curve comparison. This highlights the "wisely" part—using tools when necessary, not just because they're available.

Figure 5: Targeted code execution for fine-grained visual analysis. The question requires comparing curves in a specific subplot region that is difficult to resolve at the original image scale. Metis invokes code to crop and enlarge the relevant area, enabling precise identification of the curve behavior near the queried time step.

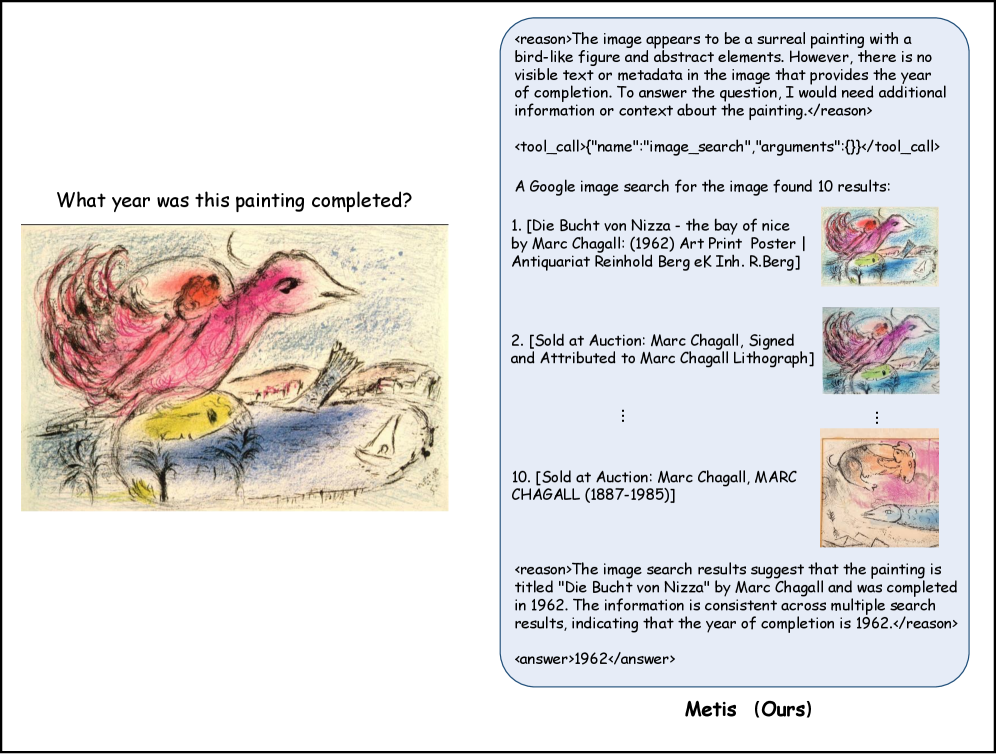

Similarly, when visual identification alone is insufficient, Metis intelligently resorts to external search. Figure 7 demonstrates Metis initiating an image search to identify an artwork that cannot be reliably recognized from its visual features alone, then extracting the required information from the search results.

Figure 7: Strategic image search for visual identification. The artwork cannot be reliably identified from visual features alone. Metis invokes image search to match the visual content against external references, then retrieves the completion year from the search results.

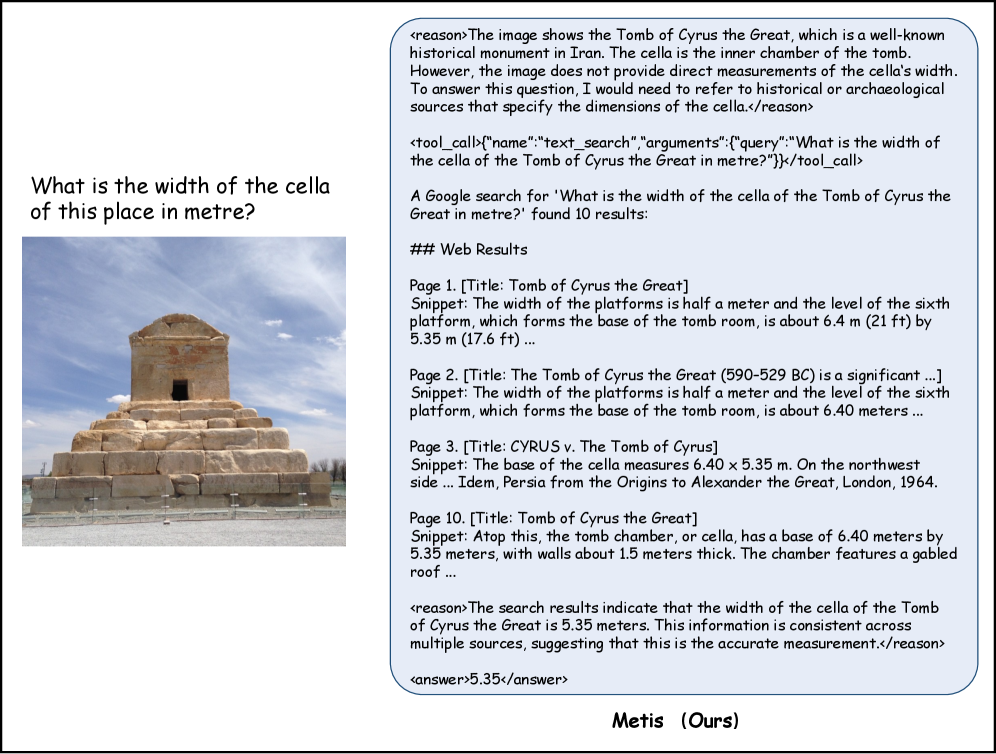

And for factual knowledge gaps, Figure 8 shows Metis strategically invoking a text search. By analogy, if a drone needs to know the precise dimensions of a structure it's observing, and that information isn't visually apparent, a Metis-like agent would recognize the "epistemic gap" and query an external knowledge base.

Figure 8: Strategic text search for factual knowledge. While the monument is visually identifiable, the queried measurement (cella width) cannot be inferred from the image. Metis recognizes this epistemic gap and invokes text search to retrieve the precise factual information from external sources.

This adaptive tool selection is what sets Metis apart, leading to substantial gains in efficiency and accuracy.

Real-World Impact: Efficiency and Accuracy Boost

The empirical evidence is compelling. Metis, powered by HDPO, significantly outperforms existing methods in both efficiency and accuracy. This isn't a trade-off; instead, it's an improvement on both fronts.

- Tool Invocations: Metis reduces tool invocations by "orders of magnitude" compared to baseline methods. This represents a massive win for efficiency.
- Reasoning Accuracy: Simultaneously, it achieves the best overall performance, elevating reasoning accuracy across benchmarks.

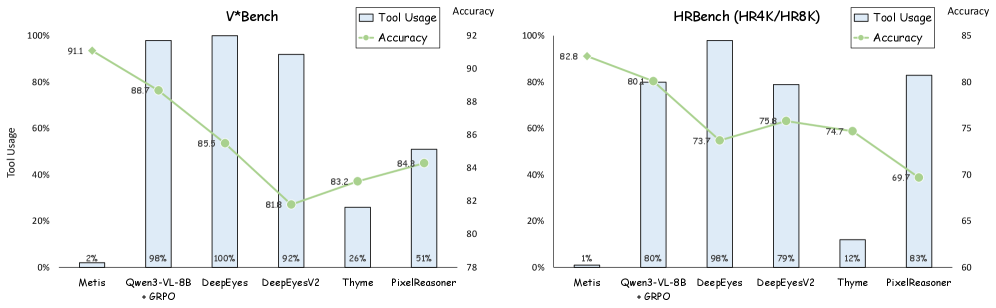

Figure 1 clearly visualizes this dual improvement:

Figure 1: Comparison of tool-use efficiency and task performance. Existing methods rely heavily on tool calls, reflecting limited efficiency awareness. In contrast, our method uses tools far more selectively while achieving the best overall performance, showing that strong accuracy and high efficiency can be attained simultaneously.

For drone operations, this research is a big deal. Consider the direct applications:

- Onboard Processing Efficiency: A drone equipped with a Metis-like agent could intelligently decide whether to process sensor data (e.g., visual input for obstacle avoidance) using its onboard edge AI hardware or offload it to a ground station or cloud if the task is too complex or requires external data. This conserves precious battery life and reduces communication latency.
- Adaptive Autonomy: Consider a drone inspecting infrastructure. If it sees a clear crack, it processes it locally. If it spots an ambiguous anomaly requiring cross-referencing with historical maintenance logs or complex structural analysis, it intelligently queries a remote knowledge base or invokes a specialized simulation tool.
- Reduced Latency: By minimizing unnecessary tool calls, drones can react faster to dynamic environments, crucial for high-speed flight or collision avoidance scenarios.
- Robust Decision Making: Fewer extraneous tool calls mean less noise in the reasoning process, leading to more reliable and accurate mission execution. A drone could "know" when its visual SLAM system is sufficient for navigation and when it needs to activate LiDAR for more detailed mapping in a complex, dimly lit environment.
- Customizable AI Agents: Builders and engineers can create drone AI that is specifically tuned for efficiency in certain scenarios, maximizing flight time or operational speed without sacrificing task performance.
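To make the onboard trade-off concrete, here is a hypothetical hand-written gating rule. Metis learns this kind of decision through training rather than following a fixed rule, so the sketch only illustrates the inputs such a decision weighs. The `Tool` fields, the confidence threshold, the latency budget, and all numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Tool:
    name: str
    latency_ms: float     # assumed round-trip latency per call
    energy_mj: float      # assumed energy cost per call
    expected_gain: float  # assumed confidence gain, between 0 and 1


def choose_action(confidence, tools, threshold=0.8, latency_budget_ms=200.0):
    """Return None to answer from onboard context, or the Tool to invoke."""
    # Already confident enough: no tool call is needed.
    if confidence >= threshold:
        return None
    # Keep tools that fit the latency budget and would close the gap.
    helpful = [
        t for t in tools
        if t.latency_ms <= latency_budget_ms
        and confidence + t.expected_gain >= threshold
    ]
    # No suitable tool: fall back to onboard reasoning.
    if not helpful:
        return None
    # Among suitable tools, pick the one with the lowest energy cost.
    return min(helpful, key=lambda t: t.energy_mj)


# Hypothetical tool set for an inspection drone.
tools = [
    Tool("lidar_scan", latency_ms=50, energy_mj=900, expected_gain=0.3),
    Tool("cloud_lookup", latency_ms=400, energy_mj=300, expected_gain=0.4),
]

print(choose_action(0.9, tools))  # confident: answer directly
print(choose_action(0.6, tools))  # tight budget: only lidar_scan qualifies
print(choose_action(0.6, tools, latency_budget_ms=500))  # cloud_lookup is cheaper
```

A learned policy replaces the fixed threshold and cost table with behaviour shaped by reward, but the quantities in play (confidence, latency, energy) are the same ones a drone designer would need to expose to it.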

The Road Ahead: Limitations and Challenges

While HDPO and Metis represent a significant step forward, it's important to acknowledge the current limitations:

- Computational Cost of Meta-Cognition: While Metis reduces external tool calls, the internal meta-cognitive process itself (deciding when to use a tool) still incurs some computational overhead. The paper doesn't explicitly detail the latency or power cost of Metis's internal decision-making compared to a "blind" agent before external tools are invoked.
- Pre-defined Tools: The Metis agent relies on a set of pre-defined tools (code execution, text search, image search). Its "wisdom" is bounded by the capabilities of these tools. Integrating new or dynamic tools would require further research.
- Generalizability to Real-World Uncertainty: The evaluations are based on specific multimodal reasoning benchmarks. Real-world drone environments are far more chaotic and unpredictable. How Metis handles novel, ambiguous, or rapidly changing scenarios where its internal context or known tools might be insufficient is an open question.
- Hardware Integration: The paper focuses on the algorithmic and model aspects. For real-world drone deployment, integrating this type of meta-cognitive agent efficiently onto edge AI processors with strict SWaP-C (Size, Weight, Power, and Cost) constraints would be a significant engineering challenge. The 'tools' a drone might use (e.g., activating a specific sensor, running a heavy-duty CV algorithm, transmitting data) have very different cost profiles than abstract 'code execution' or 'text search' in a simulated environment.

Building Smarter Drone Brains

For hobbyists, replicating Metis from scratch would be a substantial undertaking. As a large multimodal model, it requires significant computational resources to train, likely multiple GPUs or TPUs. However, the core principles of HDPO could inspire more constrained, domain-specific implementations. If the authors release Metis weights or a simplified HDPO implementation, more accessible experimentation would become possible. For now, the paper describes a research breakthrough rather than an off-the-shelf solution. Local deployment would likely call for edge hardware such as NVIDIA Jetson or Intel Movidius platforms, while training remains a cloud-scale job.

The paper significantly advances the field of agentic multimodal models. It directly addresses the "blind tool invocation" problem that plagues many current Large Language Models (LLMs) and Multimodal Large Language Models (MLLMs). For instance, the challenge highlighted in "Seeing but Not Thinking: Routing Distraction in Multimodal Mixture-of-Experts" by Xu et al. (arXiv:2604.08541)—where models perceive accurately but fail to reason—is precisely what Metis aims to mitigate by providing a more discerning reasoning process. By ensuring Metis doesn't get sidetracked by unnecessary external calls, it implicitly reduces opportunities for such 'routing distractions'.

Furthermore, Metis's ability to selectively use tools complements the development of generalist models like OpenVLThinkerV2 by Hu et al. (arXiv:2604.08539). While OpenVLThinkerV2 focuses on building a robust, underlying multimodal reasoning capability across various visual tasks, Metis adds the critical layer of meta-cognition on top. A drone's 'brain' needs both powerful general reasoning (like OpenVLThinkerV2) and the wisdom to manage those capabilities efficiently (like Metis).

Finally, for drones to effectively execute complex missions, they often need to process and generate information with high numerical precision. This is where work like "When Numbers Speak: Aligning Textual Numerals and Visual Instances in Text-to-Video Diffusion Models" by Sun et al. (arXiv:2604.08546) becomes relevant. If a Metis-equipped drone decides to generate a simulation or a mission plan using an external generative model, ensuring that model accurately represents numerical details is crucial for reliable 'tool use'. Metis provides the wisdom to invoke the right tool, and papers like Sun et al. ensure that the invoked tool performs reliably.

Ultimately, HDPO brings us closer to truly autonomous drones that aren't just intelligent, but wise—making smarter, faster, and more efficient decisions in the air.

Paper Details

Title: Act Wisely: Cultivating Meta-Cognitive Tool Use in Agentic Multimodal Models

Authors: Shilin Yan, Jintao Tong, Hongwei Xue, Xiaojun Tang, Yangyang Wang, Kunyu Shi, Guannan Zhang, Ruixuan Li, Yixiong Zou

Published: April 2026

arXiv: 2604.08545

Written by Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.