Stop Wandering: Metacognitive AI Makes Drones Smarter, Not Just Faster

A new paper introduces MetaNav, an AI agent using "metacognitive reasoning" to dramatically improve vision-language navigation for drones, cutting VLM queries by over 20% while boosting robustness.

TL;DR: This paper introduces MetaNav, a novel AI agent that equips autonomous systems, like drones, with "metacognitive reasoning" for Vision-Language Navigation. By allowing agents to monitor their own exploration and adapt strategies, MetaNav drastically reduces inefficient behaviors like revisiting, leading to state-of-the-art performance and a significant 20.7% reduction in expensive VLM queries.

Teaching Drones to Think About Their Thinking

We've all seen drones navigate complex spaces, but what happens when the command isn't just "go to the red box" but "find the spare parts in the storage room and bring back the heaviest one"? Current autonomous systems often struggle with such ambiguity and scale, wasting precious battery life and mission time by getting lost or repeatedly checking the same spots. Truly intelligent, human-commanded drones need to move beyond simple pathfinding to genuine understanding and efficient execution.

The Cost of Aimless Exploration

Today's Vision-Language Navigation (VLN) agents, even those leveraging powerful foundation models, often lack self-awareness. They rely on greedy frontier selection (always picking the next seemingly best spot) and on passive spatial memory. This leads to frustrating inefficiencies: drones get stuck in local oscillations, needlessly revisit explored areas, and burn through computational resources with redundant Vision-Language Model (VLM) queries. For anyone building a long-endurance inspection drone or a complex delivery system, these flaws translate directly into shorter flight times, failed missions, and higher operational costs.

MetaNav's Smart Navigation Playbook

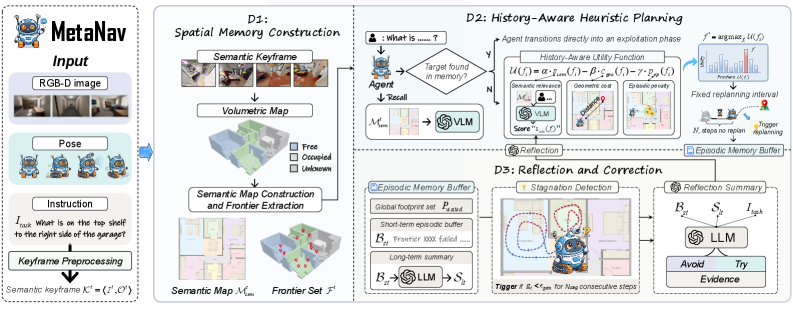

Enter MetaNav, a system designed to give VLN agents a form of "metacognition"—the ability to think about their own thinking. It tackles these problems by integrating three key components: Spatial Memory, History-Aware Heuristic Planning, and Reflective Correction.

First, the Spatial Memory Construction (D1) module gives the drone persistent awareness of its environment. Using RGB-D input, it continuously builds a 3D semantic map, identifying navigable areas and potential "frontiers" for exploration. This isn't just a basic occupancy grid; it’s rich with semantic information, helping the drone understand what it's seeing, not just where it is.
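To make the idea of a "frontier" concrete, here is a minimal sketch of frontier extraction on a 2D occupancy grid. It is not MetaNav's implementation: the paper builds a 3D semantic map from RGB-D input, while this toy version assumes a flat grid, and the cell labels (`FREE`, `OCCUPIED`, `UNKNOWN`) and the function name `find_frontiers` are illustrative choices.

```python
from typing import List, Tuple

# Hypothetical cell labels for a toy 2D occupancy grid.
FREE, OCCUPIED, UNKNOWN = 0, 1, -1


def find_frontiers(grid: List[List[int]]) -> List[Tuple[int, int]]:
    """Return free cells that touch at least one unknown cell (4-connectivity)."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            # A free cell next to unexplored space is a candidate to explore.
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break
    return frontiers


grid = [
    [0, 0, -1],
    [0, 1, -1],
    [0, 0, 0],
]
print(find_frontiers(grid))  # [(0, 1), (2, 2)]
```

A real system would cluster neighbouring frontier cells and attach semantic labels to them; this sketch only shows the geometric boundary test.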

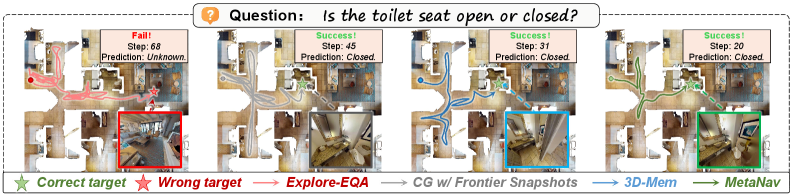

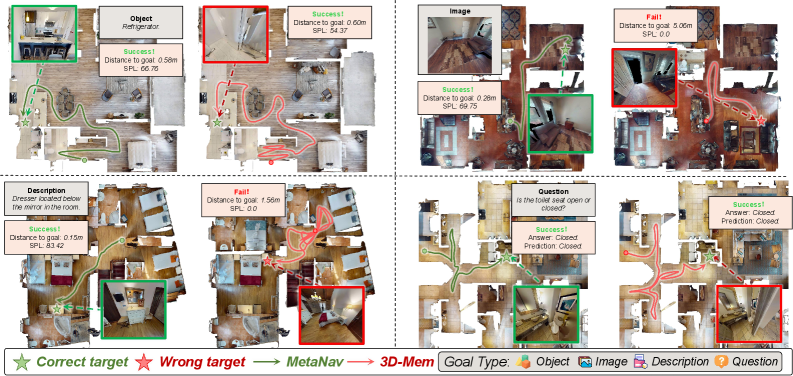

Figure 1. Qualitative trajectory comparison. Baselines suffer from local oscillation or failure when trapped by spatial ambiguities; MetaNav leverages episodic reflection to break deadlocks and generate efficient paths.

Next, the History-Aware Heuristic Planning (D2) takes over. Instead of blindly picking the nearest or most semantically relevant frontier, MetaNav uses a sophisticated utility function. This function considers semantic relevance (how well a frontier matches the instruction), geometric cost (distance to the frontier), and an episodic penalty. This penalty actively discourages the agent from revisiting previously explored or recently failed areas, directly addressing redundant exploration. The agent then executes the chosen path for a fixed replanning interval before re-evaluating.
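The three-term utility described above can be sketched as a weighted sum. The linear form, the weights (`w_sem`, `w_dist`, `w_hist`), and the use of a simple visit count as the episodic penalty are all assumptions made for illustration; the article does not give the paper's exact formula.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Frontier:
    name: str
    position: Tuple[float, float]
    semantic_score: float  # assumed to lie in [0, 1], e.g. a VLM relevance score


def utility(frontier: Frontier, agent_pos: Tuple[float, float],
            visit_counts: Dict[str, int],
            w_sem: float = 1.0, w_dist: float = 0.1, w_hist: float = 0.5) -> float:
    """Semantic relevance minus geometric cost minus episodic penalty."""
    distance = math.dist(agent_pos, frontier.position)
    penalty = visit_counts.get(frontier.name, 0)  # times this frontier failed or was revisited
    return w_sem * frontier.semantic_score - w_dist * distance - w_hist * penalty


def select_frontier(frontiers: List[Frontier], agent_pos: Tuple[float, float],
                    visit_counts: Dict[str, int]) -> Frontier:
    """Pick the frontier with the highest utility."""
    return max(frontiers, key=lambda f: utility(f, agent_pos, visit_counts))


frontiers = [
    Frontier("kitchen_door", (3.0, 4.0), 0.95),
    Frontier("hallway", (1.0, 0.0), 0.5),
]
# With no history, the more relevant frontier wins despite being farther away.
print(select_frontier(frontiers, (0.0, 0.0), {}).name)                   # kitchen_door
# After two failed visits, the penalty steers the agent elsewhere.
print(select_frontier(frontiers, (0.0, 0.0), {"kitchen_door": 2}).name)  # hallway
```

The point of the example is the third term: without it, a greedy planner keeps returning to the frontier that looks best, which is the oscillation behaviour the article describes.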

The truly novel aspect is Reflection and Correction (D3). This module maintains an episodic memory of past actions and their outcomes. It actively monitors the agent's progress, specifically detecting "stagnation"—situations where the drone isn't making meaningful progress or is trapped in a loop. When stagnation is detected, an LLM (Large Language Model) is invoked. This LLM doesn't just give new instructions; it generates corrective rules that are then injected back into the History-Aware Planning module (D2). These rules might be "avoid narrow corridors" or "prioritize open spaces," effectively adapting the drone's frontier selection strategy based on its past failures. This is where the metacognition truly shines, allowing the drone to diagnose and fix its own strategic shortcomings.
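A minimal sketch of the detect-then-reflect loop follows. It assumes information gain is measured as the number of newly observed map cells per step, and that stagnation means the total gain over a short sliding window falls below a threshold; the window size, the threshold, and the names `StagnationMonitor`, `reflect`, and `fake_llm` are hypothetical. The LLM is replaced by a stub so the example runs offline.

```python
from collections import deque
from typing import Callable, Deque, List


class StagnationMonitor:
    """Flags stagnation when total gain over a sliding window is too low."""

    def __init__(self, window: int = 3, min_gain: float = 5.0):
        self.gains: Deque[float] = deque(maxlen=window)
        self.min_gain = min_gain

    def update(self, new_cells_observed: float) -> bool:
        """Record one step; return True only once the window is full and gain is low."""
        self.gains.append(new_cells_observed)
        window_full = len(self.gains) == self.gains.maxlen
        return window_full and sum(self.gains) < self.min_gain


def reflect(episode_log: List[str], ask_llm: Callable[[str], str]) -> str:
    """Summarise recent steps and ask the LLM for one corrective rule."""
    prompt = "Recent steps:\n" + "\n".join(episode_log) + "\nSuggest one corrective rule."
    return ask_llm(prompt)


def fake_llm(prompt: str) -> str:
    # Stand-in for a real LLM call (assumption: returns a short rule string).
    return "avoid narrow corridors"


monitor = StagnationMonitor(window=3, min_gain=5.0)
rules: List[str] = []  # rules a planner could consult when scoring frontiers
log: List[str] = []
for step, gain in enumerate([40.0, 12.0, 2.0, 1.0, 0.0]):
    log.append(f"step {step}: gained {gain} cells")
    if monitor.update(gain):
        rules.append(reflect(log, fake_llm))

print(rules)  # ['avoid narrow corridors']
```

Only the last step triggers reflection here, which mirrors the design goal: the expensive LLM call happens when progress stalls, not on every step.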

Figure 2. System overview of MetaNav. Spatial Memory Construction (D1) builds a persistent 3D semantic map and extracts frontiers from RGB-D input. History-Aware Heuristic Planning (D2) selects a frontier via a utility function combining semantic relevance, geometric cost, and episodic penalty, then executes it for a fixed replanning interval. Reflection and Correction (D3) maintains episodic memory, detects stagnation via information gain, and invokes an LLM to inject corrective rules into D2. Arrows indicate data flow; dashed arrows denote LLM/VLM queries.

The Numbers Don't Lie: Smarter, Faster

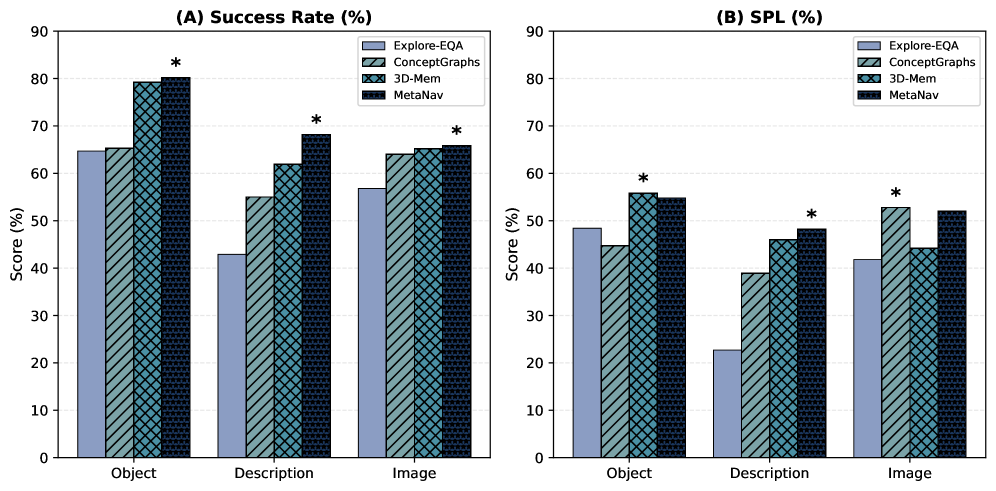

MetaNav achieves state-of-the-art performance across multiple benchmarks, including GOAT-Bench, HM3D-OVON, and A-EQA. More importantly for drone operators, it does so with fewer VLM queries and more direct paths.

- VLM Query Reduction: MetaNav reduces the number of expensive VLM queries by an average of 20.7%. This is a significant win for battery life and on-device processing.

- Improved Success Rates: On GOAT-Bench, MetaNav outperforms baselines significantly across various instruction modalities, demonstrating its robustness to different command types (Figure 3).

- Efficiency Gains: The system's path efficiency is visibly better. Figure 6 shows MetaNav generating cleaner, more direct paths compared to baselines that often get caught in local oscillations or target confusion.

Figure 3. Performance across instruction modalities on GOAT-Bench.

The authors also varied parameters such as the replanning interval and short-term memory capacity, and report that MetaNav's performance is not overly sensitive to them, which suggests a robust design (Figures 4 and 5).

Figure 4. Effect of replanning interval on GOAT-Bench and HM3D-OVON.

Figure 5. Effect of short-term memory capacity K on GOAT-Bench.

Figure 6. Trajectory comparison across four goal modalities. 3D-Mem (red) shows local oscillation and target confusion; MetaNav (green) produces efficient paths.

Real-World Impact for Autonomous Drones

For hobbyists and engineers working with drones, MetaNav directly addresses critical pain points. Consider an inspection drone tasked with "check all the stress points on the bridge underbelly." With metacognitive reasoning, it wouldn't waste time re-scanning already inspected areas or getting stuck in structural alcoves. Instead, it would learn from its own exploration, dynamically adjusting its search pattern to be more efficient. This translates to:

- Extended Mission Endurance: Fewer VLM queries and shorter, more direct paths mean significantly less power consumption, crucial for battery-limited UAVs.

- Robustness in Complex Environments: Drones can autonomously navigate challenging, ambiguous spaces, such as cluttered warehouses or disaster zones, without human intervention to correct failed strategies.

- True Autonomy: This moves drones closer to understanding complex human instructions and executing them intelligently, rather than just following a pre-programmed route or simple SLAM path. It unlocks possibilities for search and rescue, complex asset management, and even drone-based scientific exploration where adaptability is key.

The Road Ahead: Challenges and What's Next

While MetaNav is a significant step forward, it's important to be pragmatic about its current state and future challenges:

- LLM/VLM Dependencies: The approach heavily relies on Large Language Models (LLMs) and Vision-Language Models (VLMs) for both semantic understanding and reflective correction. Running these models effectively on resource-constrained drone hardware, especially at the edge, remains a substantial challenge. While VLM queries are reduced, they are not eliminated.

- Real-time Adaptation to Dynamic Changes: The paper focuses on static or semi-static 3D environments. How MetaNav would handle highly dynamic environments with moving obstacles or rapidly changing conditions is not fully explored. The RGB-D input implies relatively close-range sensing, which might limit its utility in very large-scale outdoor scenarios without additional sensor fusion.

- Generalization of Corrective Rules: While the LLM generates corrective rules, their long-term effectiveness and generalization across vastly different environments (e.g., from an indoor warehouse to a forest) need further validation. The quality of these rules depends on the LLM's understanding and on the quality of the stagnation detection.

- Computational Overhead: Although VLM queries are reduced, the overhead of running an LLM for reflection and correction, even if infrequent, adds computational complexity. For truly tiny drones, every FLOP counts.

Is This a DIY Project?

For the average hobbyist, replicating MetaNav in its entirety is a tall order. The system relies on sophisticated components like RGB-D cameras, robust SLAM capabilities for 3D semantic mapping, and powerful LLMs/VLMs. While foundation models are becoming more accessible, integrating them into a real-time drone system with custom reflective logic requires significant engineering effort and computational resources often beyond typical hobbyist setups.

However, elements of this research could inspire more constrained projects. Implementing "History-Aware Planning" with simpler heuristics and a basic memory of visited nodes is certainly feasible on Raspberry Pi-powered drones running ROS. For instance, you could add a basic anti-revisiting penalty to your path planning algorithm. On the perception side, projects like "Deep Neural Network Based Roadwork Detection for Autonomous Driving" by Wullrich et al. demonstrate that robust, real-time perception (e.g., using YOLO with LiDAR) is achievable. While their focus is autonomous driving, the underlying principles of using edge AI for environmental understanding are directly applicable to drones needing to "see" and "understand" their immediate environment to support navigation. For managing the memory aspect in long-running missions, insights from papers like "Novel Memory Forgetting Techniques for Autonomous AI Agents" by Fofadiya and Tiwari could inform how you design a more efficient, less bloated episodic memory for your drone, preventing performance degradation over time. And to improve your drone's ability to interpret complex instructions beyond simple "go to X," the work by He et al. in "Beyond Referring Expressions: Scenario Comprehension Visual Grounding" highlights the importance of enhancing semantic comprehension, which is crucial for any sophisticated VLN.
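As a starting point for a hobbyist build, here is a minimal sketch of a bounded "recently visited" memory feeding a revisit penalty into a grid step chooser. It is a simplification and not MetaNav's method: it assumes a 2D grid with no obstacles, Manhattan distance to a known goal, and a fixed-size memory, and the names `RecentVisits`, `next_cell`, and `revisit_cost` are illustrative.

```python
from collections import deque
from typing import Deque, Tuple

Cell = Tuple[int, int]


class RecentVisits:
    """Short-term memory that forgets the oldest cell once capacity is reached."""

    def __init__(self, capacity: int = 4):
        self.cells: Deque[Cell] = deque(maxlen=capacity)

    def add(self, cell: Cell) -> None:
        self.cells.append(cell)

    def count(self, cell: Cell) -> int:
        return self.cells.count(cell)


def next_cell(current: Cell, goal: Cell, memory: RecentVisits,
              revisit_cost: int = 3) -> Cell:
    """Choose the neighbour with the lowest distance-plus-revisit cost."""
    neighbors = [(current[0] + dr, current[1] + dc)
                 for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1))]

    def cost(cell: Cell) -> int:
        manhattan = abs(goal[0] - cell[0]) + abs(goal[1] - cell[1])
        return manhattan + revisit_cost * memory.count(cell)

    return min(neighbors, key=cost)


memory = RecentVisits(capacity=4)
print(next_cell((0, 0), (0, 3), memory))  # (0, 1): the greedy step toward the goal

# Suppose (0, 1) turned out to be a dead end that the drone entered twice.
memory.add((0, 1))
memory.add((0, 1))
print(next_cell((0, 0), (0, 3), memory))  # (1, 0): the penalty forces a detour
```

Because the memory is a fixed-length `deque`, old visits drop out automatically, so a cell that was a dead end long ago becomes eligible again without any explicit forgetting logic.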

MetaNav pushes autonomous drones beyond mere navigation to a realm of self-aware exploration, making truly intelligent, efficient missions a tangible reality for the future of aerial robotics.

Paper Details

Title: Stop Wandering: Efficient Vision-Language Navigation via Metacognitive Reasoning

Authors: Xueying Li, Feng Lyu, Hao Wu, Mingliu Liu, Jia-Nan Liu, Guozi Liu

Published: April 3, 2024 (arXiv v1)

arXiv: 2604.02318

Written by

The Flight Desk. Sharing knowledge about drones and aerial technology.
