Unmasking the Invisible: Polarization Powers Drone Camouflage Detection

New research introduces CPGNet, an asymmetric RGB-polarization framework that significantly improves camouflaged object detection by using polarization cues to guide RGB feature learning, outperforming existing methods.

TL;DR: New research introduces CPGNet, an asymmetric RGB-polarization framework that significantly improves camouflaged object detection. It uses polarization cues to conditionally guide RGB feature learning, outperforming existing methods by seeing past camouflage where traditional cameras struggle. This has major implications for drone applications in search and rescue, environmental monitoring, and security, though it requires specialized hardware and high-quality polarization data.

Drones are increasingly our eyes in the sky, offering unprecedented perspectives for everything from infrastructure inspection to environmental monitoring. But what if they could see past what's intentionally hidden? That's the compelling promise of recent advancements in camouflaged object detection (COD), a field critical for applications where objects are designed to blend seamlessly into their surroundings. A new paper, "Conditional Polarization Guidance for Camouflaged Object Detection," by Zhang et al., presents a significant leap forward in this domain. This isn't about better zoom or higher resolution; it's about fundamentally changing how drones perceive their environment, using a clever trick of light to unmask the invisible.

The Blurring Problem

Spotting objects deliberately blended into their surroundings is hard for any vision system, human or machine. Our brains are adept at pattern recognition, but even we are fooled by well-executed camouflage. Traditional RGB cameras fare no better: when a target's color and texture closely match the background, appearance alone offers almost nothing to separate the two. Polarization provides optical cues that can distinguish objects even when they visually merge with their environment, and existing polarization-based methods have shown promise. However, these systems often rely on complex visual encoders and bulky fusion mechanisms, which translates to more computational overhead, higher power consumption, and increased weight. Those are significant drawbacks for drones, where every gram and milliampere counts toward flight time and payload capacity. Furthermore, many current approaches fail to fully exploit polarization's potential to actively guide an RGB system's learning process, treating it as an additive feature rather than a core signal that could fundamentally improve perception.

How Polarization Unmasks the Hidden

So, how does polarization help? Light is a wave whose electric field oscillates perpendicular to its direction of travel, and in ordinary unpolarized light that oscillation has no preferred orientation. When light reflects off a surface, it can become partially polarized, meaning its oscillations favor a particular orientation. Camouflaged objects, even if they match their background in color and texture, often have different surface properties or orientations, causing light to reflect off them with a distinct polarization signature. This subtle difference, invisible to the naked eye and standard RGB cameras, becomes the key.
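To make the idea of a polarization signature concrete: polarization cameras commonly record intensity behind linear polarizers at 0°, 45°, 90°, and 135°. From those four images, the standard linear Stokes parameters give the Degree of Linear Polarization (DoLP) and the Angle of Linear Polarization (AoLP). The sketch below applies those textbook formulas with NumPy; the function names are illustrative and are not taken from the paper.

```python
import numpy as np

def stokes_from_intensities(i0, i45, i90, i135):
    """Linear Stokes parameters from intensities behind 0/45/90/135 degree polarizers."""
    i0, i45, i90, i135 = (np.asarray(x, dtype=np.float64) for x in (i0, i45, i90, i135))
    s0 = 0.5 * (i0 + i45 + i90 + i135)  # total intensity
    s1 = i0 - i90                       # horizontal minus vertical
    s2 = i45 - i135                     # +45 degrees minus 135 degrees
    return s0, s1, s2

def dolp_aolp(s0, s1, s2, eps=1e-8):
    """DoLP in [0, 1] and AoLP in radians, within [-pi/2, pi/2]."""
    dolp = np.sqrt(s1**2 + s2**2) / np.maximum(s0, eps)  # eps guards against division by zero
    aolp = 0.5 * np.arctan2(s2, s1)
    return np.clip(dolp, 0.0, 1.0), aolp

# Fully horizontally polarized light of unit intensity (Malus's law gives 1, 0.5, 0, 0.5).
s0, s1, s2 = stokes_from_intensities(1.0, 0.5, 0.0, 0.5)
dolp, aolp = dolp_aolp(s0, s1, s2)
```

For the horizontally polarized input above, DoLP comes out as 1 and AoLP as 0; for unpolarized light (equal intensity at all four angles) DoLP is 0. Two surfaces with the same color can therefore still differ in these two maps.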

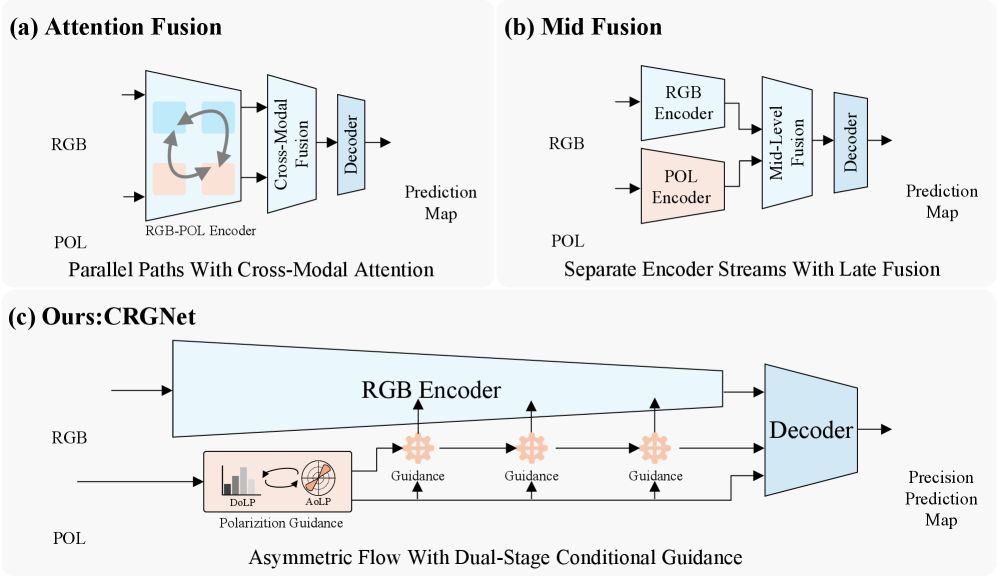

To tackle the limitations of previous methods, the researchers propose CPGNet, an asymmetric RGB-polarization framework. The core idea isn't to simply merge RGB and polarization data; instead, polarization cues conditionally guide the learning of RGB features. Think of it like a seasoned guide whispering crucial hints to a novice, rather than just handing them a map and expecting them to find a hidden trail. The polarization data acts as an intelligent filter, highlighting areas of interest that RGB alone would miss.

At its heart is a lightweight Polarization Integration Module (PIM) that jointly models RGB and polarization cues. This module generates 'polarization guidance'—a kind of prior knowledge that tells the network where to look for subtle discrepancies in the scene. Unlike conventional feature fusion, this guidance dynamically modulates RGB features, directing the network's attention to the faint outlines, textures, or material differences that betray a camouflaged object. This dynamic interaction ensures that the RGB system isn't just passively receiving more data, but actively learning to interpret its own visual input through the lens of polarization.
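The difference between fusing features and guiding them can be shown with a toy example. The sketch below is not the authors' PIM; it is a simplified, hypothetical illustration in which a single-channel guidance map rescales and shifts RGB features per channel, compared with plain channel concatenation. The names `modulate_with_guidance`, `gamma`, and `beta` are assumptions made for this example; in a real network the per-channel parameters would be learned.

```python
import numpy as np

def fuse_by_concatenation(rgb_feat, pol_feat):
    """Conventional fusion: stack both feature maps along the channel axis."""
    return np.concatenate([rgb_feat, pol_feat], axis=0)

def modulate_with_guidance(rgb_feat, guidance, gamma, beta):
    """Conditional guidance: a spatial map rescales and shifts each RGB channel.

    rgb_feat: (C, H, W) features; guidance: (H, W) map in [0, 1];
    gamma, beta: (C,) per-channel parameters (fixed here, learned in practice).
    """
    g = np.asarray(guidance, dtype=np.float64)[None, :, :]
    scale = 1.0 + np.asarray(gamma, dtype=np.float64)[:, None, None] * g
    shift = np.asarray(beta, dtype=np.float64)[:, None, None] * g
    return rgb_feat * scale + shift

rgb_feat = np.ones((2, 3, 3))
guidance = np.zeros((3, 3))
guidance[1, 1] = 1.0  # polarization flags only the center pixel
guided = modulate_with_guidance(rgb_feat, guidance, gamma=[1.0, 0.0], beta=[0.0, 0.5])
```

Where the guidance map is zero, the RGB features pass through unchanged; only flagged locations are amplified or shifted. The output keeps the RGB channel count, whereas concatenation grows it, which is one reason guidance-style designs can stay lightweight.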

Figure 1. Comparison of RGB–polarization integration paradigms. (a) Attention fusion enhances RGB features using polarization-aware attention. (b) Mid-level fusion directly merges RGB and polarization features. (c) Our method treats polarization as conditional guidance for RGB representation learning, rather than explicit feature fusion.

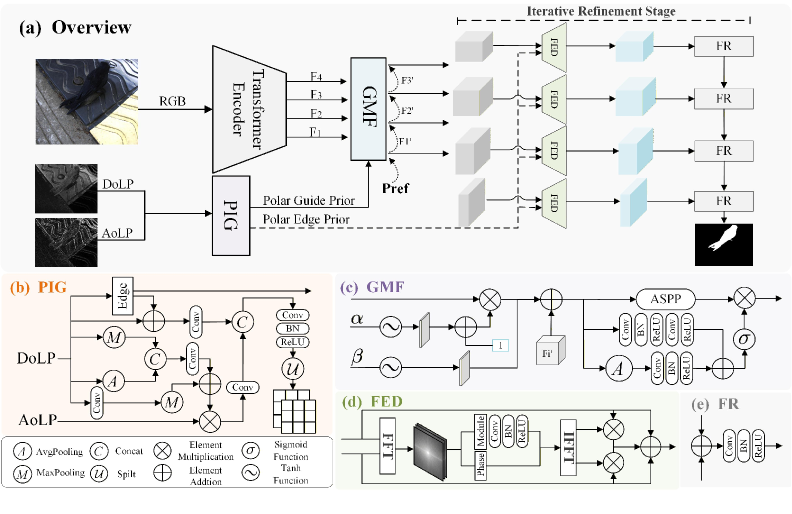

Beyond this basic guidance, CPGNet introduces a polarization edge-guided frequency refinement strategy, implemented in the Edge-guided Frequency Module (EFM). This module specifically enhances high-frequency components (the sharp details and edges) using polarization information as a constraint. This is critical for breaking down camouflage patterns, as subtle edge differences are often key to revealing a hidden object. Finally, an iterative feedback decoder refines these predictions from coarse to fine, ensuring both accuracy and complete segmentation.
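As a rough intuition for edge-guided frequency refinement, the sketch below isolates the high-frequency part of a feature map with an FFT high-pass filter and adds it back only where a polarization map (here DoLP) shows edges. This is a hand-written approximation for illustration, not the paper's EFM; the `cutoff` and `strength` parameters and all function names are hypothetical.

```python
import numpy as np

def high_frequency(feat, cutoff=0.1):
    """High-frequency part of a 2D map: zero out FFT bins below `cutoff` cycles/pixel."""
    h, w = feat.shape
    fy = np.fft.fftfreq(h)[:, None]
    fx = np.fft.fftfreq(w)[None, :]
    radius = np.sqrt(fx**2 + fy**2)
    spectrum = np.fft.fft2(feat)
    spectrum[radius < cutoff] = 0
    return np.real(np.fft.ifft2(spectrum))

def edge_map(img):
    """Gradient magnitude normalized to [0, 1]; all zeros for a flat image."""
    gy, gx = np.gradient(np.asarray(img, dtype=np.float64))
    mag = np.hypot(gx, gy)
    peak = mag.max()
    return mag / peak if peak > 0 else mag

def edge_guided_refine(feat, dolp, cutoff=0.1, strength=1.0):
    """Boost high-frequency detail only where the polarization map has edges."""
    return feat + strength * edge_map(dolp) * high_frequency(feat, cutoff)

rng = np.random.default_rng(0)
feat = rng.standard_normal((8, 8))
dolp = np.zeros((8, 8))
dolp[:, 4:] = 1.0  # a vertical polarization boundary in the middle of the frame
refined = edge_guided_refine(feat, dolp)
```

In this toy setup only the columns next to the polarization boundary change; the rest of the feature map is left alone, which mirrors the idea of using polarization as a constraint on where detail gets sharpened.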

Figure 2. (a) Overview of the proposed CPGNet. (b) Polarization Integration Module (PIM). (c) Polarization Guidance Enhancement (PGE). (d) Edge-guided Frequency Module (EFM). (e) Feature Refinement (FR). CPGNet progressively injects polarization guidance into hierarchical RGB features and refines predictions in a coarse-to-fine manner.

Seeing is Believing: The Results

The qualitative results are striking. CPGNet was tested on various polarization datasets, including PCOD-1200 and RGBP-Glass, and even on non-polarization RGB-Depth datasets. The model consistently outperformed state-of-the-art methods. In practice, this means clearer, more complete object boundaries and more accurate segmentation, even in highly challenging scenes where camouflage is expertly applied. The network doesn't just guess; it sees.

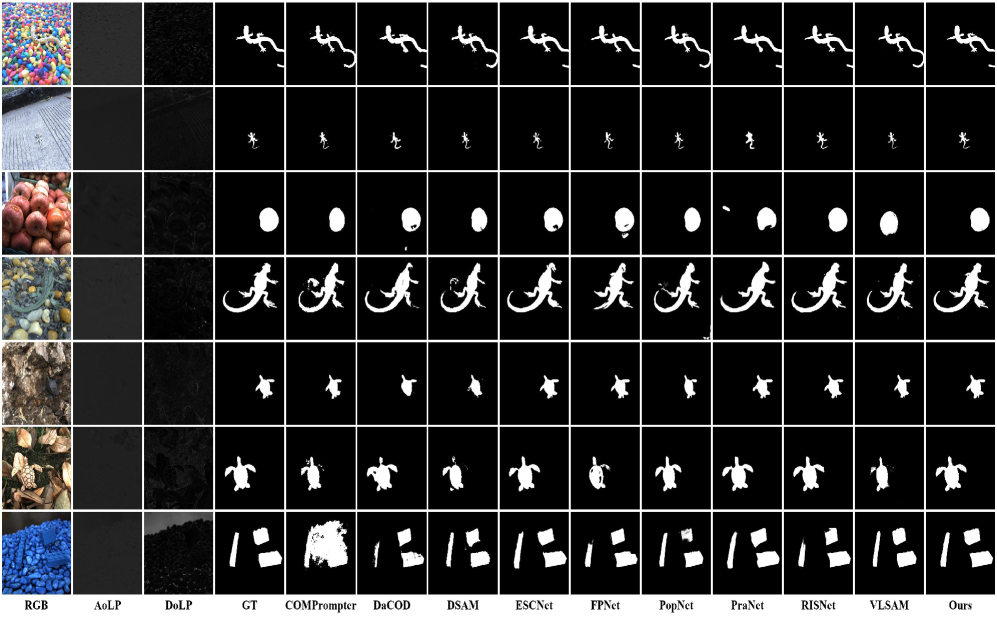

Figure 3. Qualitative comparison with SOTA methods on PCOD-1200. CPGNet produces more complete predictions and cleaner boundaries in challenging camouflage scenes.

For instance, Figure 3 clearly shows CPGNet delivering sharper, more confident predictions compared to other models, which often produce fragmented or blurry detections. This isn't just an academic improvement; it translates directly to actionable intelligence for drone operators.

Why This Matters for Drones

This technology holds significant implications for drone applications, potentially transforming how autonomous systems interact with complex environments. For search and rescue operations, a CPGNet-equipped drone could more easily spot individuals wearing camouflage clothing or hidden within debris, drastically reducing search times in critical situations. In environmental monitoring, it could identify invasive species blending into native flora, or detect naturally camouflaged wildlife for population studies, offering a non-intrusive yet highly effective observation tool. For industrial inspection, it could reveal hidden faults or defects obscured by grime or texture on surfaces, improving safety and maintenance efficiency by catching issues that might otherwise go unnoticed. And, of course, in security and defense, the ability to unmask camouflaged assets or personnel would provide a critical advantage, enhancing situational awareness and threat detection. A drone that can reliably spot a hidden trap, a concealed sensor, or even a person deliberately trying to evade detection moves us closer to truly intelligent, perceptive autonomous drones, capable of operating with a new level of awareness.

The Catch: Limitations and What's Next

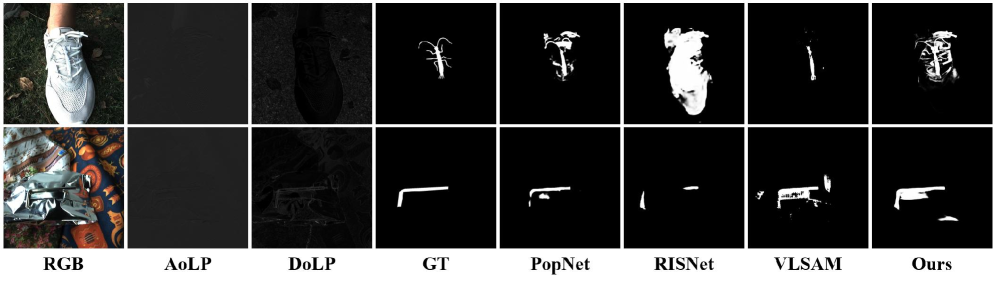

No technology is a silver bullet, and CPGNet comes with practical considerations that are important for real-world deployment. The most critical limitation, acknowledged by the authors, is its reliance on high-quality polarization data. As Figure 7 illustrates, when Degree of Linear Polarization (DoLP) or Angle of Linear Polarization (AoLP) measurements are weak, noisy, or unstable, the derived guidance can become unreliable. This directly impacts localization and segmentation accuracy, meaning environmental conditions like heavy fog, rain, or very low light could significantly degrade performance. Ensuring robust polarization data collection in diverse and challenging weather remains a key hurdle.
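One reason weak polarization hurts is that AoLP is computed from the ratio of two Stokes components, so when DoLP is near zero the angle is essentially determined by noise. A deployment might therefore down-weight guidance where the signal is weak. The sketch below is a hypothetical heuristic, not something described in the paper; the thresholds `min_dolp` and `min_intensity` are placeholder values that would need tuning per sensor.

```python
import numpy as np

def polarization_confidence(dolp, s0, min_dolp=0.05, min_intensity=0.02):
    """Per-pixel weight in [0, 1]: low where DoLP is weak or the pixel is too dark.

    dolp: degree of linear polarization in [0, 1]; s0: total intensity scaled to [0, 1].
    Both thresholds are illustrative assumptions, not values from the paper.
    """
    dolp = np.asarray(dolp, dtype=np.float64)
    s0 = np.asarray(s0, dtype=np.float64)
    weight = np.clip(dolp / min_dolp, 0.0, 1.0)  # ramps up to 1 once DoLP reaches min_dolp
    return weight * (s0 > min_intensity)          # zero out pixels that are too dark

def usable_fraction(confidence, threshold=0.5):
    """Share of pixels whose polarization confidence reaches the threshold."""
    return float(np.mean(np.asarray(confidence) >= threshold))

dolp = np.array([0.0, 0.04, 0.2])
s0 = np.array([1.0, 1.0, 0.01])
conf = polarization_confidence(dolp, s0)
share = usable_fraction(conf)
```

A low usable fraction over a frame could serve as a cue to fall back on RGB-only detection or to flag the result as uncertain, for example in fog or at dusk.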

Figure 7. Failure cases caused by degraded polarization quality. When DoLP/AoLP measurements are weak, noisy, or unstable, the derived guidance may become unreliable, leading to inaccurate localization and incomplete segmentation.

Furthermore, deploying this on a drone requires specialized hardware: a polarization camera. While these are becoming more common (e.g., cameras built around Sony's IMX250MZR polarization sensor, such as the FLIR Blackfly S BFS-U3-51S5P), they still add cost, complexity, and often weight compared to standard RGB sensors. This additional hardware can impact a drone's payload capacity, flight duration, and overall budget. Although CPGNet aims for reduced computational overhead compared to previous polarization fusion methods, running sophisticated deep learning models on edge devices like small drones still presents challenges for real-time processing and power budgets. Achieving high frame rates with minimal power draw on a constrained embedded system will require further optimization. For widespread adoption, these practical considerations around data quality, hardware integration, and computational efficiency will need continuous refinement.

DIY Feasibility: For the Dedicated Builder

For the ambitious hobbyist or drone builder, replicating this kind of system isn't trivial, but it's certainly within the realm of possibility for a dedicated engineer with deep learning experience. The core challenge lies in acquiring a suitable polarization camera, which represents a significant upfront investment. Once that hardware is in hand, the framework itself is software-based. While the paper doesn't explicitly state open-source availability, the methodology is clearly detailed, suggesting it could be implemented using popular deep learning frameworks like PyTorch or TensorFlow. However, be prepared for a steep learning curve if you're new to neural network architectures and the intricacies of training. Expect to dive deep into model design and potentially significant data collection and training if you aim to adapt it to novel camouflage scenarios. This isn't a weekend project, but a serious undertaking for pushing the boundaries of drone vision.
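A first practical step for a builder is usually separating the raw sensor frame into its per-angle images. Many polarization sensors place four differently oriented micro-polarizers (0°, 45°, 90°, 135°) over each 2x2 pixel block. The sketch below splits such a mosaic with strided slicing; the specific layout in `ASSUMED_LAYOUT` is an assumption for illustration and should be checked against the documentation of the camera in use.

```python
import numpy as np

# Assumed (row, col) position inside each 2x2 block -> polarizer angle in degrees.
# This default is hypothetical: verify the real layout in the sensor documentation.
ASSUMED_LAYOUT = {(0, 0): 90, (0, 1): 45, (1, 0): 135, (1, 1): 0}

def split_polarization_mosaic(raw, layout=ASSUMED_LAYOUT):
    """Split a raw mosaic frame into one quarter-resolution image per polarizer angle."""
    raw = np.asarray(raw)
    if raw.ndim != 2 or raw.shape[0] % 2 or raw.shape[1] % 2:
        raise ValueError("expected a 2D frame with even height and width")
    # Every second pixel, starting at the block offset, belongs to the same angle.
    return {angle: raw[r::2, c::2] for (r, c), angle in layout.items()}

raw = np.arange(16).reshape(4, 4)  # stand-in for a raw sensor frame
channels = split_polarization_mosaic(raw)
```

Each returned image has half the width and height of the raw frame. Production pipelines often interpolate the missing samples instead of simply subsampling, which this sketch does not attempt.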

Expanding Drone Intelligence

This work on advanced camouflage detection doesn't exist in a vacuum. Once a drone can reliably spot hidden objects, the next question arises: what do we do with that information? This connects directly to other cutting-edge research, forming a broader ecosystem of drone intelligence. For instance, the paper “EC-Bench: Enumeration and Counting Benchmark for Ultra-Long Videos” explores the challenge of counting and tracking objects in extremely long video feeds. If a drone can find camouflaged items, being able to accurately count them over extended missions—perhaps for inventory management or monitoring subtle changes in a population—is the logical next step, building on CPGNet's detection capabilities. Similarly, “Better than Average: Spatially-Aware Aggregation of Segmentation Uncertainty Improves Downstream Performance” highlights the critical importance of understanding the confidence of a detection. When dealing with something as tricky as camouflage, knowing how certain a drone is about its findings is paramount for making sound decisions in the field, especially in high-stakes scenarios. Finally, bridging the gap from seeing to thinking is crucial. The work in “Video Models Reason Early: Exploiting Plan Commitment for Maze Solving” delves into how video models develop reasoning and planning capabilities. For an autonomous drone, detecting a camouflaged object isn't the end goal; it's the input for a complex decision-making process—whether to investigate further, report its findings, or avoid a potential hazard. CPGNet provides the 'eyes'; these other papers work on the 'brain', collectively advancing the intelligence of autonomous systems.

This research pushes drone vision closer to true perception, giving drones an edge against the visually elusive. The future of drone autonomy looks to be one where nothing stays hidden for long.

Paper Details

Title: Conditional Polarization Guidance for Camouflaged Object Detection

Authors: Qifan Zhang, Hao Wang, Xiangrong Qin, Ruijie Li

Published: Not explicitly stated in abstract, typical for arXiv pre-prints.

arXiv: 2603.30008

Written by

The Flight Desk: Sharing knowledge about drones and aerial technology.
