StreamReady: Drones Get a Sense of Timing for Smarter Alerts

StreamReady, a new AI framework, gives drones a 'sense of timing,' enabling them to answer questions precisely when visual evidence appears. This improves real-time utility and accuracy in streaming video analysis.

TL;DR: A new paper unveils StreamReady, a framework teaching AI models not just what to see in long videos, but when to answer. This moves drones beyond reactive reporting to truly intelligent, timely communication, measured by a new metric: the Answer Readiness Score (ARS).

Drones see everything, recording, processing, and identifying objects or events. But knowing when to alert you to something critical is a different challenge. An alert sent too early is speculative; one sent too late is useless. A team including Shehreen Azad, Vibhav Vineet, and Yogesh Singh Rawat just published StreamReady, a system giving AI that precise sense of timing in streaming video. This isn't just about better object detection; it's about intelligent temporal reasoning – knowing when to act on what's seen.

The Problem: Drones Don't Know When to Shut Up (Or Speak Up)

Current drone vision systems often struggle with the 'when' aspect of real-time intelligence. They excel at identifying a target or an anomaly in a video feed, but lack a robust mechanism to determine the optimal moment to deliver that information. Consider a drone monitoring a construction site for safety violations: spotting a worker without a hard hat is one thing. Reporting it the instant the violation occurs, rather than before enough evidence is gathered or after they've moved on, is another entirely. Existing models, even sophisticated Vision-Language Models (VLMs), are often trained on static images or pre-segmented video clips where the 'when' is implicitly handled by the dataset's structure. Faced with a continuous, unsegmented stream, these models might speculate too early or report too late, diminishing their operational utility. This isn't a minor inefficiency; it's a fundamental limitation for applications where timing is paramount, like search and rescue, dynamic surveillance, or autonomous navigation. Without this temporal precision, even the most accurate object detection becomes less valuable, as the information arrives out of sync with real-world events.

How StreamReady Achieves On-Time Intelligence

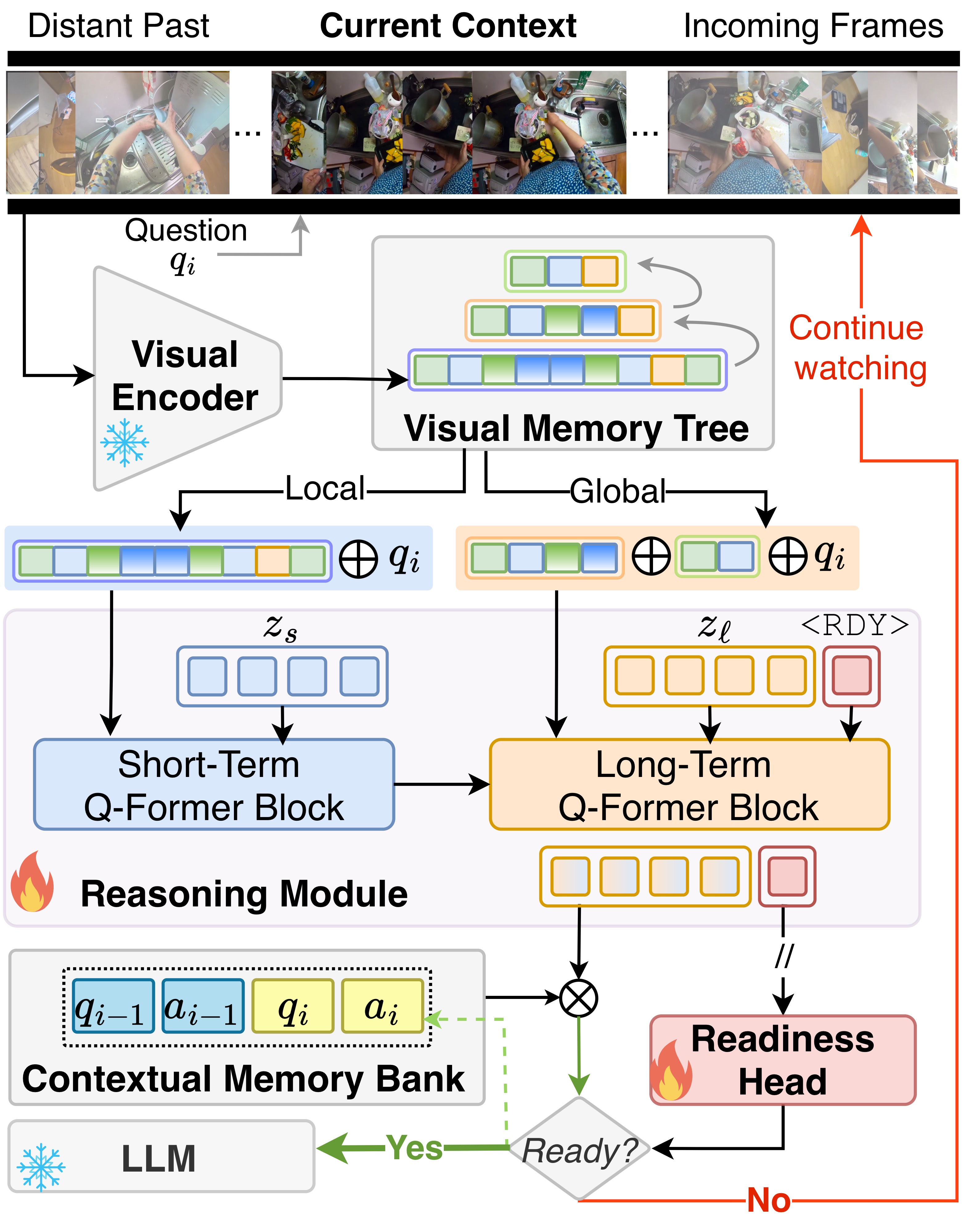

StreamReady tackles this problem with a readiness-aware formulation. At its core is the Answer Readiness Score (ARS), a timing-aware objective that penalizes both early and late answers. The model is explicitly trained not just to be correct, but to deliver the correct answer at the right moment.
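To make the idea of penalizing both early and late answers concrete, here is a minimal sketch of a timing score. It is not the paper's ARS definition: the function name `timing_score`, the linear decay, and the `early_penalty` and `late_penalty` parameters are all assumptions chosen for illustration.

```python
def timing_score(answer_time, window_start, window_end,
                 early_penalty=0.5, late_penalty=0.2):
    """Return a score in [0, 1] for when an answer was given.

    Hypothetical formula for illustration only, not the paper's ARS.
    Times are in seconds. The score is 1.0 inside the evidence window
    and drops linearly per second outside it.
    """
    if window_start > window_end:
        raise ValueError("window_start must not exceed window_end")
    if answer_time < window_start:
        # Early: the supporting evidence had not appeared yet.
        gap = window_start - answer_time
        return max(0.0, 1.0 - early_penalty * gap)
    if answer_time > window_end:
        # Late: the evidence window had already closed.
        gap = answer_time - window_end
        return max(0.0, 1.0 - late_penalty * gap)
    return 1.0


if __name__ == "__main__":
    # Evidence is visible between 10 s and 15 s of the stream.
    for t in (9.0, 12.0, 17.0):
        print(t, timing_score(t, 10.0, 15.0))
```

Using separate penalties for the two sides is a design choice in this sketch: it lets a speculative early answer cost more (or less) than a delayed one, depending on the application.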

Unlike simpler models that process frames in isolation, StreamReady encodes streaming videos into a visual memory tree. This innovative structure allows the system to reason through both short-term, immediate observations and long-term contextual information, building a richer understanding of the ongoing events. The real trick lies in a learnable <RDY> token, which, guided by a dedicated readiness head, acts as a sophisticated gatekeeper. The system holds its response until this readiness head confirms that sufficient visual evidence has been observed to confidently answer. This prevents premature speculation and ensures the model has 'seen enough' before committing to a response. Only then is the enriched, long-term visual representation – combined with contextual information from past interactions – passed to a Large Language Model (LLM) for generating the final answer.
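For a feel of how a tree-shaped memory can keep both recent detail and older context, here is a toy sketch. It is an assumption-heavy illustration, not StreamReady's actual architecture: the `MemoryTree` class, the `fanout` parameter, and the use of simple averaging to summarize older frames are all hypothetical.

```python
class MemoryTree:
    """Toy hierarchical memory (hypothetical, not the paper's design).

    Level 0 holds the most recent frame features. Whenever a level
    collects `fanout` nodes, they are averaged into one summary node
    that moves up a level, so higher levels cover older, longer spans.
    """

    def __init__(self, fanout=4):
        if fanout < 2:
            raise ValueError("fanout must be at least 2")
        self.fanout = fanout
        self.levels = [[]]  # levels[0] is the finest-grained level

    def add_frame(self, feature):
        """Store one frame feature (a sequence of floats)."""
        self._push(0, list(feature))

    def _push(self, level, node):
        if level == len(self.levels):
            self.levels.append([])
        self.levels[level].append(node)
        if len(self.levels[level]) == self.fanout:
            # Level is full: average its nodes into a single parent.
            children = self.levels[level]
            parent = [sum(vals) / len(children) for vals in zip(*children)]
            self.levels[level] = []
            self._push(level + 1, parent)

    def short_term(self):
        """Recent, unsummarized frame features."""
        return list(self.levels[0])

    def long_term(self):
        """Summary nodes covering older parts of the stream."""
        return [node for level in self.levels[1:] for node in level]


if __name__ == "__main__":
    tree = MemoryTree(fanout=2)
    for value in (1.0, 3.0, 5.0):
        tree.add_frame([value])
    print("short-term:", tree.short_term())
    print("long-term:", tree.long_term())
```

The point of the structure is that memory grows slowly even as the stream gets long, because old frames are folded into coarser summaries instead of being stored one by one.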

Figure 2: Framework Overview. StreamReady encodes streaming videos into a visual memory tree and reasons through short and long-term branches. A learnable <RDY> token, guided by a readiness head, gates the reasoning output until sufficient evidence is observed. Once ready, the long-term representation, enriched with contextual information from past QA pairs, is sent to the LLM for answering, enabling readiness-aware streaming behavior.
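The gating behavior described above can be sketched as a simple loop that stays silent until a readiness score crosses a threshold. This is only an illustration of the control flow: in the paper the readiness signal is learned, while here `readiness_scores` is just a list of numbers, and `gated_answer`, `answer_fn`, and `threshold` are hypothetical names.

```python
def gated_answer(readiness_scores, answer_fn, threshold=0.8):
    """Hold the answer until readiness reaches the threshold, then answer once.

    Hypothetical sketch, not the paper's implementation.
    readiness_scores: iterable of per-step scores in [0, 1], assumed to
        come from some readiness predictor.
    answer_fn: callable that takes the step index and returns an answer.
    Returns (step, answer), or (None, None) if the stream ends first.
    """
    for step, score in enumerate(readiness_scores):
        if score >= threshold:
            # Enough evidence: release the answer and stop waiting.
            return step, answer_fn(step)
    return None, None


if __name__ == "__main__":
    scores = [0.1, 0.4, 0.85, 0.9]
    print(gated_answer(scores, lambda s: f"answered at step {s}"))
```

Returning `(None, None)` when the threshold is never reached keeps the "no answer yet" case explicit, which matters for a system that is supposed to stay quiet rather than guess.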

To evaluate this capability, the authors introduced ProReady-QA, a new benchmark specifically designed with annotated answer evidence windows and proactive multi-turn questions. The benchmark pushes models to not just understand video, but to understand when they have enough information to respond accurately and proactively.
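As a rough picture of what an annotated answer evidence window makes possible, the sketch below labels a response as early, on time, or late. The record layout is an assumption: `StreamingQAItem`, its field names, and `classify_timing` are hypothetical and do not reflect the actual ProReady-QA file format.

```python
from dataclasses import dataclass


@dataclass
class StreamingQAItem:
    """Hypothetical benchmark record; not the real ProReady-QA schema."""
    question: str
    evidence_start: float  # seconds into the stream where evidence appears
    evidence_end: float    # seconds into the stream where evidence ends


def classify_timing(item, answer_time):
    """Label an answer time relative to the item's evidence window."""
    if answer_time < item.evidence_start:
        return "early"
    if answer_time > item.evidence_end:
        return "late"
    return "on_time"


if __name__ == "__main__":
    item = StreamingQAItem("Is the worker wearing a hard hat?", 42.0, 50.0)
    for t in (30.0, 45.0, 61.0):
        print(t, classify_timing(item, t))
```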

The Numbers Don't Lie

StreamReady doesn't just offer a theoretical improvement; it delivers concrete performance gains. On the new ProReady-QA benchmark, StreamReady-Large achieved an impressive 77.8 ARS-Acc, significantly outperforming prior state-of-the-art methods like Video-LLaMA (55.4 ARS-Acc) and Stream-LLM (64.1 ARS-Acc). This isn't merely a win on their custom benchmark; the paper reports StreamReady consistently outperforms prior methods across eight additional streaming and offline long-video benchmarks. Such robust performance across diverse datasets suggests a broadly generalizable capability.

Why Your Drone Needs a Sense of Timing

The implications of a drone that understands when to speak are profound. This isn't just an academic exercise; StreamReady has direct, tangible implications for drone operations across various sectors:

- Precision Inspection: Consider a drone inspecting a power line for damage. Instead of reporting every flicker or shadow, StreamReady could enable it to report a specific crack the moment it's definitively identified, reducing false positives and ensuring timely maintenance action.
- Enhanced Surveillance: For security or search & rescue drones, knowing when a person enters a restricted zone or when a specific gesture is made is critical. StreamReady could drastically improve the actionability of these alerts.
- Autonomous Navigation & Interaction: Future drones interacting with dynamic environments, like navigating a cluttered warehouse or assisting workers, will need to make real-time decisions based on evolving visual cues. Timely comprehension of events (e.g., when a path clears or when an obstacle appears) is paramount.
- Event Logging & Forensics: For any drone mission, accurate timestamping of significant events is crucial. A readiness-aware system could help ensure that event logs are accurate not just in content, but also in their temporal placement, which is vital for post-mission analysis or legal contexts.

This tech gives drones a sense of timing, making them smarter and more reliable for critical tasks.

DIY Feasibility: Not Yet, But Watch This Space

Realistically, StreamReady is an advanced research framework, not a plug-and-play library for your hobby drone build. Implementing it would require substantial computational resources (think NVIDIA A100 GPUs) and deep expertise in Vision-Language Models, Large Language Models, and complex neural network architectures. The paper doesn't mention open-source code releases at this stage, nor does it propose a simplified version for edge devices. This is foundational research. However, the concepts here are likely to trickle down into more accessible forms over time, influencing future SDKs and specialized AI modules for drones. We've seen this pattern before with complex AI models, where initial breakthroughs in high-performance labs eventually lead to more democratized tools. For now, consider this a glimpse into the next generation of intelligent drone autonomy.

While StreamReady focuses on the 'when,' other research is simultaneously refining the 'what' and 'why' of AI vision. For instance, Haoyang Li et al. with FVG-PT are refining Vision-Language Models to better focus on foreground elements, which could complement a system like StreamReady that depends on perceiving the relevant visual evidence. Meanwhile, Nanjun Li et al. are tackling real-time human pose estimation with ER-Pose, a critical 'what' for many drone applications from security to search and rescue. And for understanding the 'why' behind these complex decisions, Simone Carnemolla et al. are developing UNBOX, a method to unveil black-box visual models with natural language explanations. Together, these advancements paint a picture of increasingly capable and transparent drone intelligence.

The ability for a drone to not just observe, but to understand when its observations become actionable, fundamentally changes its role from a data collector to an intelligent, proactive assistant. This timing shift in AI marks a significant step towards truly autonomous and dependable drone operations.

Paper Details

- Title: StreamReady: Learning What to Answer and When in Long Streaming Videos
- Authors: Shehreen Azad, Vibhav Vineet, Yogesh Singh Rawat
- Published: March 13, 2026
- arXiv: 2603.08620

Written by

The Flight Desk

Sharing knowledge about drones and aerial technology.
