Zero-Shot Autonomy: Training Robots Entirely in Simulation

A new study shows how large-scale simulation can train robots for real-world tasks without additional fine-tuning, potentially revolutionizing drone and robotic development.

TL;DR: A new study demonstrates that large-scale, diverse simulated data can train robotic manipulation policies that transfer directly to the real world without fine-tuning. This zero-shot capability could transform how we develop autonomous systems, enabling full virtual training before deployment.

From Simulation to Reality: A New Frontier in Robotics

For decades, roboticists have pursued seamless sim-to-real transfer: training robots in virtual environments and deploying them in the physical world without a hitch. Yet the so-called "sim-to-real gap" has kept that goal out of reach. Subtle differences in physics, lighting, and sensor noise between simulation and reality often require costly real-world fine-tuning. A new paper from the MolmoB0T team challenges this paradigm, showing that with enough diverse simulated data, zero-shot transfer for complex manipulation tasks is not only possible but effective. If the approach generalizes, it could reshape how drones and robots are trained and sharply reduce the amount of real-world training they need.

Why Sim-to-Real Has Been So Challenging

Traditionally, simulations have been seen as a starting point for training robots, not the finish line. The problem lies in the details: simulated environments often fail to capture the messy, unpredictable nuances of the real world. For drones, this means hours of real-world flight tests to fine-tune behaviors like package handling or infrastructure inspection. These tests are not only time-consuming but also risky, with crashes and hardware damage adding to the cost. The result? A slow, expensive development process that limits scalability.

MolmoB0T's Approach: Scale and Diversity

The MolmoB0T team proposes a bold solution: overcome the sim-to-real gap by massively scaling up the diversity and quantity of simulated training data. Their custom-built MolmoBot-Engine generates MolmoSpaces—highly diverse virtual environments filled with procedurally generated objects and tasks. These environments are used to create MolmoBot-Data, a dataset of 1.8 million expert trajectories for tasks like pick-and-place and object manipulation.

Figure 1: MolmoBot leverages diverse simulation data to achieve zero-shot sim-to-real transfer on multiple robotic tasks.

The dataset's diversity is key. By varying object textures, lighting, and physical properties, the team ensures that trained policies are robust to a wide range of real-world scenarios. This approach enables the training of "generalist" policies that can handle new environments and tasks without additional fine-tuning.
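The idea of varying textures, lighting, and physical properties is often called domain randomization. The sketch below is a minimal illustration of that idea, not the MolmoBot-Engine API: the `SceneParams` fields, the value ranges, and the size of the texture library are all assumptions chosen for the example.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SceneParams:
    """One randomized scene configuration (all fields are illustrative)."""
    texture_id: int         # index into a hypothetical texture library
    light_intensity: float  # relative to a nominal value of 1.0
    friction: float         # surface friction coefficient
    object_mass_kg: float   # mass of the manipulated object


def sample_scene(rng: random.Random, num_textures: int = 1000) -> SceneParams:
    """Draw one scene by sampling each property independently.

    The ranges are placeholders; a real pipeline would tune them per task.
    """
    return SceneParams(
        texture_id=rng.randrange(num_textures),
        light_intensity=rng.uniform(0.3, 2.0),
        friction=rng.uniform(0.4, 1.2),
        object_mass_kg=rng.uniform(0.05, 1.0),
    )


def sample_scenes(n: int, seed: int = 0) -> list[SceneParams]:
    """Generate n scenes reproducibly from a fixed seed."""
    rng = random.Random(seed)
    return [sample_scene(rng) for _ in range(n)]


if __name__ == "__main__":
    for scene in sample_scenes(3):
        print(scene)
```

Each sampled scene would then be handed to a simulator to record expert trajectories. Seeding the generator keeps a dataset reproducible, which helps when comparing policies trained on different amounts of data.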

Policy Architectures: How It Works

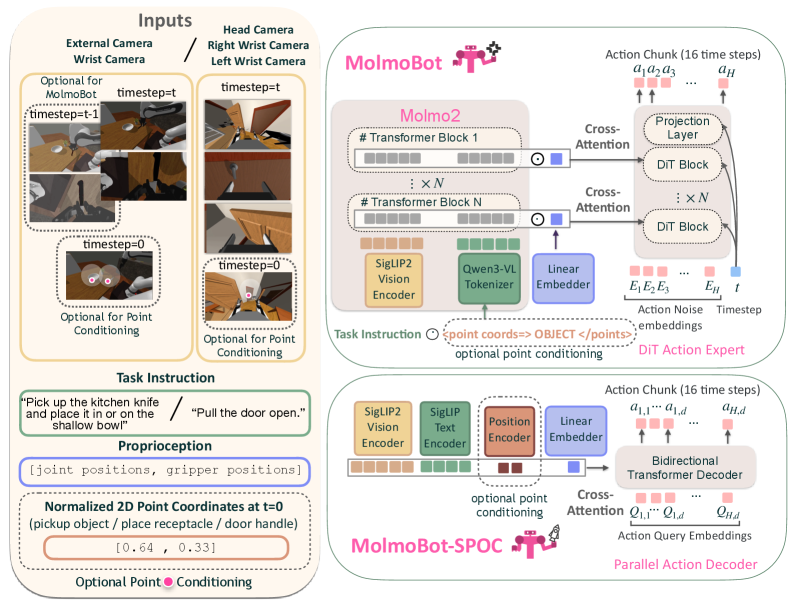

The team trained three types of policies:

- MolmoBot: A multi-frame vision-language model that processes camera views, proprioceptive data, and language instructions to predict actions.

- MolmoBot-Pi0: A baseline model replicating the π_0 architecture for comparison.

- MolmoBot-SPOC: A lightweight policy designed for resource-constrained platforms like drones, with potential for fine-tuning.

Figure 2: Policy architectures for MolmoBot, showcasing diverse inputs and action prediction mechanisms.

These policies were trained exclusively on simulated data and then deployed directly to real-world robots, including a Franka FR3 manipulator and a Rainbow Robotics RB-Y1 mobile platform.
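To make the "train in simulation, deploy directly" idea concrete, here is a hedged sketch of a policy interface and control loop. It is not the authors' code: `Observation`, `Policy`, `HoldStillPolicy`, and `rollout` are hypothetical names, and the observation fields simply mirror the inputs described above (camera frames, proprioception, a language instruction).

```python
from dataclasses import dataclass
from typing import Callable, Protocol, Sequence


@dataclass
class Observation:
    """Inputs a policy sees at each step (field types are placeholders)."""
    frames: Sequence[bytes]            # recent camera frames, e.g. encoded images
    joint_positions: Sequence[float]   # proprioceptive state
    instruction: str                   # natural-language task description


class Policy(Protocol):
    def act(self, obs: Observation) -> Sequence[float]:
        """Return one action, here a per-joint command."""
        ...


class HoldStillPolicy:
    """Stand-in policy that commands zero motion for every joint."""

    def act(self, obs: Observation) -> Sequence[float]:
        return [0.0] * len(obs.joint_positions)


def rollout(
    policy: Policy,
    get_obs: Callable[[], Observation],
    send_action: Callable[[Sequence[float]], None],
    is_done: Callable[[], bool],
    max_steps: int = 100,
) -> int:
    """Run the policy in a closed loop and return the number of steps taken.

    The loop only talks to the three callbacks, so the same code can drive
    a simulator or a physical robot without changes to the policy.
    """
    for step in range(max_steps):
        if is_done():
            return step
        send_action(policy.act(get_obs()))
    return max_steps
```

Under this design, zero-shot deployment amounts to swapping the simulator callbacks for real-robot ones while the policy weights stay untouched.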

Real-World Results: Does It Actually Work?

The results are impressive. Without any real-world fine-tuning, MolmoBot achieved:

- 79.2% success rate in tabletop pick-and-place tasks, outperforming the baseline π_0.5 model, which managed only 39.2%.

- Strong performance in mobile manipulation tasks like door opening and drawer handling using the RB-Y1 platform.

Figure 3: Real-world environments for DROID evaluations.

The study also found that scaling up the number of trajectories improved performance, particularly in real-world settings. However, the benefits of increasing object and environment diversity were task-dependent, highlighting the importance of targeted data generation.
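For readers who want to put the headline numbers in context, the sketch below computes the gap between the two reported success rates and shows how a confidence interval depends on the number of trials. The trial count is not given in this article, so any count passed to `wilson_interval` is a hypothetical input; the function names are illustrative.

```python
import math


def compare_rates(rate_a: float, rate_b: float) -> tuple[float, float]:
    """Return (absolute gap in percentage points, ratio a/b), rounded."""
    return round(rate_a - rate_b, 1), round(rate_a / rate_b, 2)


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial success rate.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


if __name__ == "__main__":
    gap, ratio = compare_rates(79.2, 39.2)
    print(f"gap: {gap} percentage points, ratio: {ratio}x")
    # Hypothetical trial counts, only to show how the interval narrows.
    for trials in (50, 500):
        low, high = wilson_interval(round(0.792 * trials), trials)
        print(f"n={trials}: [{low:.3f}, {high:.3f}]")
```

The reported rates differ by 40.0 percentage points, or about a 2.02x ratio. How much weight that gap deserves depends on how many trials sit behind each number, which is why the interval is worth checking against the paper's evaluation protocol.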

Implications for Autonomous Drones

While the study focuses on ground-based robots, its implications for drones are significant:

- Faster Development: Drones could be trained for complex tasks like package delivery or infrastructure inspection entirely in simulation, reducing the need for real-world testing.

- Safer Testing: Risky tasks, such as search-and-rescue operations, can be perfected in virtual environments before deployment.

- Enhanced Robustness: The diversity of MolmoBot-Data ensures that trained policies are resilient to variations in lighting, object appearance, and minor environmental changes, which is critical for drones operating in dynamic conditions.

Limitations and Next Steps

While the results are promising, there are important caveats:

- Drone-Specific Dynamics: The study focuses on ground-based robots. Adapting these methods to drones will require accounting for flight dynamics, aerodynamics, and power constraints.

- Extreme Conditions: The simulations do not explicitly address challenges like strong winds, rain, or other adverse weather conditions that drones often face.

- Sensor Integration: The current models rely on RGB-D vision and proprioception, whereas drones typically use additional sensors like LiDAR and GPS. Simulating these accurately will be crucial.

- Resource Requirements: Generating 1.8 million trajectories and training complex policies require significant computational resources, potentially limiting accessibility for smaller teams.

Open-Source Opportunities

The good news? MolmoBot-Engine and MolmoBot-Data are being released as open-source tools. While replicating the full scale of the study may be challenging, smaller teams can use these resources to create custom datasets and train lightweight policies like MolmoBot-SPOC for drone-specific tasks.

Related Innovations

This work builds on advancements in data generation and model architectures. For example:

- ManiTwin: Focuses on creating large-scale digital object datasets, which are essential for building diverse simulated environments.

- DreamPlan: Explores efficient fine-tuning of vision-language models for high-level planning tasks.

- M^3 SLAM: Offers advanced 3D mapping and localization capabilities, critical for drone navigation and interaction.

Figure 4: Real-world environments for RB-Y1 mobile manipulation evaluations.

Taken together, these innovations point toward autonomous drones and robots that can be trained largely in simulation before they ever operate in the field.

Paper Details

Title: MolmoB0T: Large-Scale Simulation Enables Zero-Shot Manipulation

Authors: Abhay Deshpande, Maya Guru, Rose Hendrix, Snehal Jauhri, Ainaz Eftekhar, Rohun Tripathi, Max Argus, Jordi Salvador, Haoquan Fang, Matthew Wallingford, Wilbert Pumacay, Yejin Kim, Quinn Pfeifer, Ying-Chun Lee, Piper Wolters, Omar Rayyan, Mingtong Zhang, Jiafei Duan, Karen Farley, Winson Han, Eli Vanderbilt, Dieter Fox, Ali Farhadi, Georgia Chalvatzaki, Dhruv Shah, Ranjay Krishna

Published: March 2026

arXiv: 2603.16861

Related Papers

- ManiTwin: Scaling Data-Generation-Ready Digital Object Dataset to 100K

- DreamPlan: Efficient Reinforcement Fine-Tuning of Vision-Language Planners via Video World Models

- M^3: Dense Matching Meets Multi-View Foundation Models for Monocular Gaussian Splatting SLAM

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.