ActiveGlasses: Learning Drone Skills Directly From Human Vision

Researchers developed ActiveGlasses, a system that lets robots learn complex manipulation tasks from human demonstrations using head-mounted stereo cameras. This enables zero-shot transfer of skills, including active vision, to robotic platforms.

TL;DR: ActiveGlasses lets you teach robots, including potential future drones, complex manipulation tasks simply by performing them while wearing smart glasses. The system records your ego-centric view and head movements, then directly transfers these "active vision" behaviors and manipulation skills to a robot arm, enabling it to solve tasks, even with occlusions, without further training.

See It, Do It: Teaching Robots with Your Own Eyes

Forget complex programming or tedious teleoperation. What if teaching your drone to pick up a delicate component, or navigate a cluttered space, was as simple as doing it yourself while wearing a pair of smart glasses? That's the core promise of ActiveGlasses, a new system from researchers at Shanghai Jiao Tong University. This system aims to democratize robot training by leveraging natural human demonstration, directly translating your actions and perceptions into robot capabilities.

The Bottleneck: Why Current Robot Training Falls Short

Training robots for real-world manipulation tasks is a significant bottleneck, especially for drones where payload, power, and autonomy are critical. Traditional methods often rely on specialized, bulky handheld controllers or tedious teleoperation setups that increase operator burden and don't naturally bridge the "embodiment gap" between human and robot. Such methods make capturing the nuanced, coordinated way humans perceive and interact with their environment incredibly difficult, often leading to brittle, domain-specific solutions. Furthermore, current approaches struggle with scalability and often fail to teach robots "active vision"—the crucial ability to adjust their viewpoint to better understand a scene, especially when dealing with occlusions or precise interactions. This limitation prevents drones from performing complex, human-like tasks autonomously.

How ActiveGlasses Makes Robots See and Learn

The core idea behind ActiveGlasses is elegant in its simplicity and effectiveness: it leverages the human operator's natural perception and movement as the direct, high-fidelity training signal. The system centers around a pair of commercially available XREAL Glasses augmented with a compact ZED Mini stereo camera. This setup, lightweight and unobtrusive, captures both ego-centric stereo video—providing depth and visual context from the human's perspective—and 6-DoF head movement data as a human performs a task with their bare hands. There are no cumbersome gloves, no markers, and no specialized hand controllers required, just natural human action. This design minimizes operator burden, a key advantage over many existing robot demonstration systems.

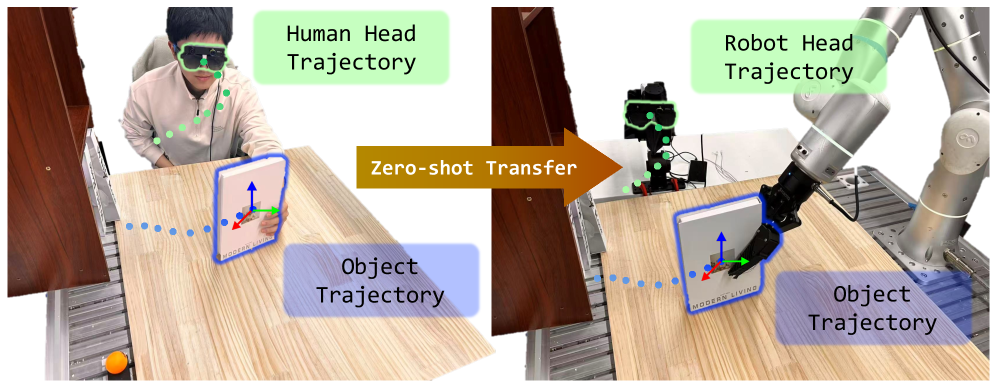

Figure 1: ActiveGlasses allows operators to collect manipulation demonstrations with bare hands, recording stereo observations and head movement for zero-shot transfer to robots.

During the demonstration phase, the operator actively explores the environment, moving their head to gain better views, track objects, and avoid occlusions, just as they would in real life. This "active vision" component is crucial, as it provides the contextual cues necessary for complex interaction. The ActiveGlasses system then extracts object trajectories from these demonstrations. These trajectories, combined with the visual data, feed into an object-centric 3D policy. This policy, a modified version of the RISE framework, is trained to jointly predict both the manipulation actions (e.g., gripper movements and poses) and the corresponding head movements needed to achieve the task goal. The output is a future 6-DoF object trajectory in the task space, so the policy effectively predicts what the robot should manipulate and how it should look at it.
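To make the joint prediction target concrete, here is a minimal sketch of how a recorded demonstration could be sliced into training samples. It is an illustration under stated assumptions, not the authors' data pipeline: the names `DemoFrame`, `TrainingSample`, and `make_samples` are hypothetical, poses are simplified to plain tuples, and the stereo imagery that the real policy observes is left out.

```python
from dataclasses import dataclass
from typing import List, Tuple

# A 6-DoF pose as (x, y, z, roll, pitch, yaw); meters and radians are assumed.
Pose6D = Tuple[float, float, float, float, float, float]


@dataclass
class DemoFrame:
    """One time step of a recorded demonstration (hypothetical layout)."""
    timestamp: float
    head_pose: Pose6D    # head pose reported by the glasses
    object_pose: Pose6D  # manipulated object's pose in the task frame


@dataclass
class TrainingSample:
    """Observation at step t plus the two future trajectories to predict."""
    observation: DemoFrame
    future_object_poses: List[Pose6D]  # manipulation target
    future_head_poses: List[Pose6D]    # active-vision target


def make_samples(frames: List[DemoFrame], horizon: int) -> List[TrainingSample]:
    """Slice a demonstration into (observation, future trajectory) pairs."""
    samples = []
    # Stop early enough that every sample has a full horizon of future steps.
    for t in range(len(frames) - horizon):
        future = frames[t + 1 : t + 1 + horizon]
        samples.append(TrainingSample(
            observation=frames[t],
            future_object_poses=[f.object_pose for f in future],
            future_head_poses=[f.head_pose for f in future],
        ))
    return samples


# Toy demonstration: the object slides along x while the head yaws to follow it.
demo = [
    DemoFrame(0.1 * i, (0.0, 0.0, 1.5, 0.0, 0.0, 0.05 * i), (0.1 * i, 0.0, 0.8, 0.0, 0.0, 0.0))
    for i in range(10)
]
samples = make_samples(demo, horizon=4)
print(len(samples), len(samples[0].future_object_poses))
```

Each sample pairs one observation with two aligned futures, which mirrors the idea of predicting manipulation and viewpoint together rather than training them as separate problems.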

![Our system uses XREAL Glasses combined with a ZED Mini stereo camera, enabling egocentric stereo video and 6DoF head movement data collection. During demonstration, the operator actively explores task-relevant regions in the environment and complete manipulation tasks without any hand-held devices. We proposed an object-centric 3D Policy modified from RISE[28], which predicts future 6-DOF object trajectory in the task space. After training, the policy is deployed zero-shot on a real-world robot. A 6-DoF robotic arm synchronously executes the operator’s head motions during inference, allowing the robot to reproduce active vision.](https://qawnehlcoileybzacnvr.supabase.co/storage/v1/object/public/article-covers/figures/activeglasses-learning-drone-skills-directly-from-human-vision-mntt69kh/fig-1.png) Figure 2: The ActiveGlasses system uses XREAL Glasses with a ZED Mini stereo camera for egocentric data collection and an object-centric 3D policy for zero-shot robot deployment.

Once the policy is trained, the magic of "zero-shot transfer" comes into play. The exact same ZED Mini camera and its mounting mechanism move from the human operator's head to a dedicated 6-DoF robotic perception arm. This perception arm then precisely mimics the human's demonstrated head movements, effectively reproducing the "active vision" behavior. Concurrently, the robot's manipulation arm executes the learned task, with its actions tightly coordinated with these dynamic viewpoint adjustments. As a result, tasks requiring dynamic viewing—like peering around an obstruction to grasp an object, or accurately positioning a component—become achievable, a significant leap from static camera setups.
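Reproducing a demonstrated viewpoint on the perception arm comes down to re-expressing a camera pose in the robot's base frame. The sketch below shows that frame change with plain 4x4 homogeneous transforms. It assumes a known, fixed calibration between the task frame and the arm base, and it restricts rotation to yaw to stay short; `make_transform`, `compose`, and `camera_target_in_robot_base` are hypothetical helper names, not part of the ActiveGlasses code.

```python
import math
from typing import List

Matrix = List[List[float]]


def make_transform(x: float, y: float, z: float, yaw: float) -> Matrix:
    """Homogeneous transform: rotation by yaw about z, plus a translation."""
    c, s = math.cos(yaw), math.sin(yaw)
    return [
        [c, -s, 0.0, x],
        [s, c, 0.0, y],
        [0.0, 0.0, 1.0, z],
        [0.0, 0.0, 0.0, 1.0],
    ]


def compose(a: Matrix, b: Matrix) -> Matrix:
    """Matrix product a @ b for 4x4 transforms."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def camera_target_in_robot_base(base_from_task: Matrix, task_from_camera: Matrix) -> Matrix:
    """Express a demonstrated camera pose in the perception arm's base frame."""
    return compose(base_from_task, task_from_camera)


# Assumed calibration: the task frame origin sits 0.5 m in front of the arm base.
base_from_task = make_transform(0.5, 0.0, 0.0, 0.0)
# Demonstrated camera pose: 0.4 m above the task origin, yawed 90 degrees.
task_from_camera = make_transform(0.0, 0.2, 0.4, math.pi / 2)

target = camera_target_in_robot_base(base_from_task, task_from_camera)
position = [row[3] for row in target[:3]]
print([round(p, 3) for p in position])
```

A real deployment would use full 3D rotations and would still need inverse kinematics to turn the target pose into joint commands; both are outside the scope of this sketch.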

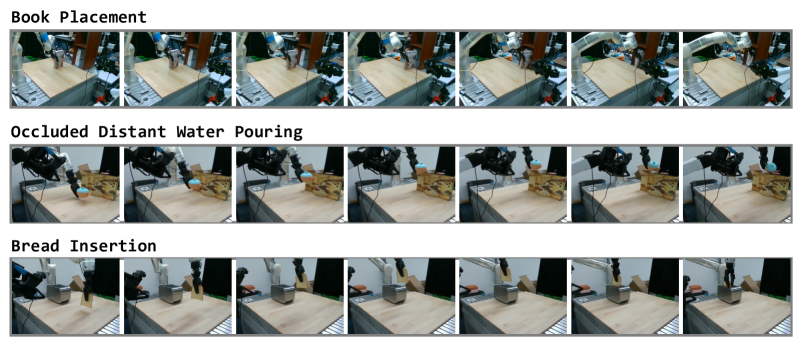

Figure 5: Three challenging manipulation tasks demonstrate the need for active viewpoint adjustment, including scenarios with occlusion and precise interaction.

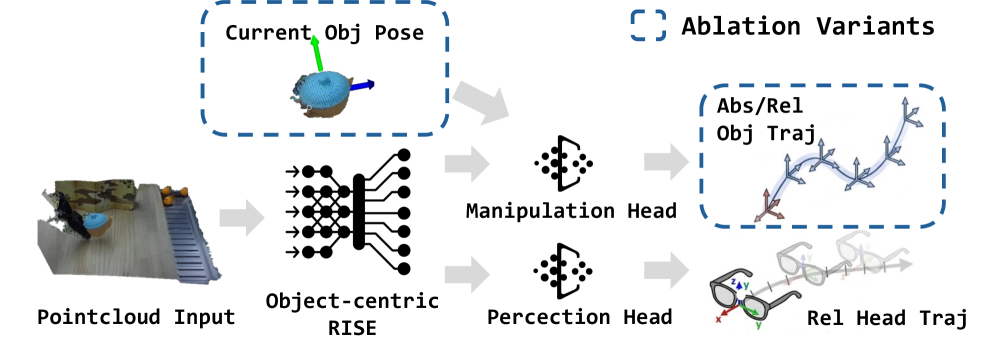

The policy itself is a crucial component of the system. It uses a diffusion-based approach, which is well suited to generating complex action sequences. The authors designed it to predict future 6-DoF object trajectories directly in the task space. They also explored different architectural configurations, including using separate diffusion heads for the manipulation actions and the perception (head) movements, and studied the impact of providing the current object pose as an additional conditioning signal to the policy. These ablations test how much each design choice contributes to task performance.

Figure 8: Ablation studies on policy design explore separate diffusion heads for manipulation and perception, and the impact of object pose conditioning and action representation.
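For readers unfamiliar with diffusion policies, the following toy sketch shows the general shape of the idea: start from Gaussian noise and denoise it step by step into an action, with one head for manipulation and another for perception. It is not the authors' model. The linear noise schedule, the step count, and the `oracle_head` function are assumptions made for illustration; `oracle_head` stands in for a trained network by returning the exact noise for a known target, and it ignores the visual observations a real policy would condition on.

```python
import math
import random
from typing import Callable, List

Vector = List[float]
NoisePredictor = Callable[[Vector, int], Vector]

NUM_STEPS = 50
# Linear beta schedule (a common default, assumed here).
BETAS = [1e-4 + (0.02 - 1e-4) * t / (NUM_STEPS - 1) for t in range(NUM_STEPS)]
ALPHAS = [1.0 - b for b in BETAS]
ALPHA_BARS: List[float] = []
_running = 1.0
for _alpha in ALPHAS:
    _running *= _alpha
    ALPHA_BARS.append(_running)


def oracle_head(target: Vector) -> NoisePredictor:
    """Stand-in for a trained head: returns the exact noise for a known target."""
    def predict(x_t: Vector, t: int) -> Vector:
        scale = math.sqrt(ALPHA_BARS[t])
        sigma = math.sqrt(1.0 - ALPHA_BARS[t])
        return [(x - scale * x0) / sigma for x, x0 in zip(x_t, target)]
    return predict


def sample(head: NoisePredictor, dim: int, rng: random.Random) -> Vector:
    """DDPM ancestral sampling: begin with Gaussian noise, denoise step by step."""
    x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    for t in reversed(range(NUM_STEPS)):
        eps = head(x, t)
        coef = BETAS[t] / math.sqrt(1.0 - ALPHA_BARS[t])
        mean = [(xi - coef * ei) / math.sqrt(ALPHAS[t]) for xi, ei in zip(x, eps)]
        if t > 0:
            noise_scale = math.sqrt(BETAS[t])
            x = [m + noise_scale * rng.gauss(0.0, 1.0) for m in mean]
        else:
            x = mean  # no noise is added on the final step
    return x


rng = random.Random(0)
# Two separate heads: one for the object (manipulation) waypoint, one for head motion.
manipulation_head = oracle_head([0.30, 0.00, 0.10])
perception_head = oracle_head([0.00, 0.25, 0.50])
object_waypoint = sample(manipulation_head, dim=3, rng=rng)
head_waypoint = sample(perception_head, dim=3, rng=rng)
print([round(v, 3) for v in object_waypoint], [round(v, 3) for v in head_waypoint])
```

Because the oracle always points at its target, both samplers recover their targets despite the random starting noise. A learned head would instead produce a distribution over plausible trajectories given the current observation.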

Hard Numbers: ActiveGlasses Delivers

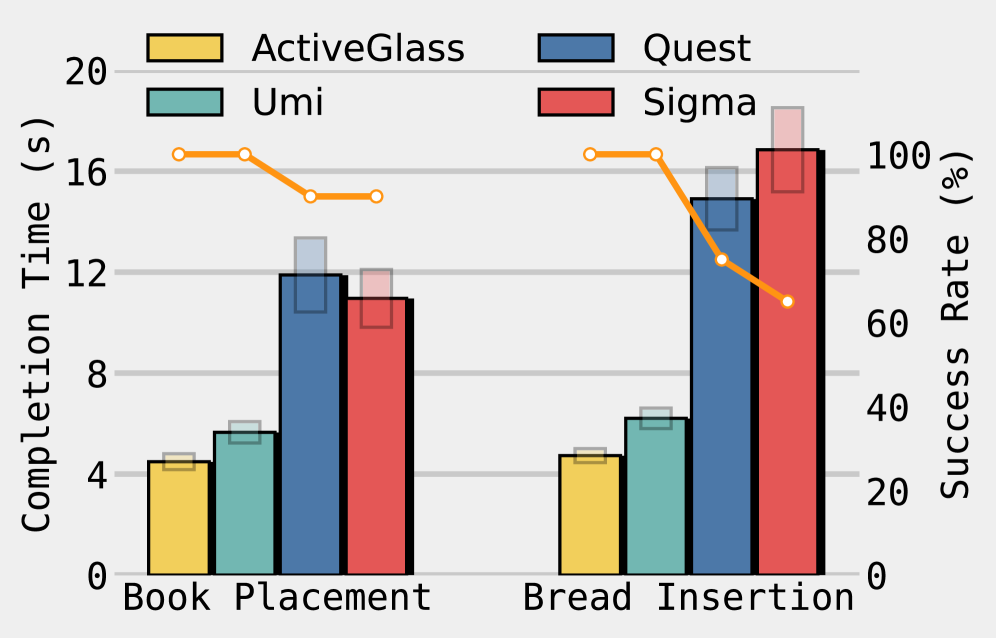

The empirical results are compelling and demonstrate a clear advantage for ActiveGlasses over traditional approaches. The system consistently outperformed strong baselines, including RISE without active vision, in challenging tasks specifically designed to test active vision and precise interaction:

- Book Placement: ActiveGlasses achieved an impressive 90% success rate, a significant leap compared to baselines (e.g., a version of RISE without active vision scored around 50%). It also completed tasks faster, averaging just 14 seconds.

- Occluded Distant Pour Water: This task involved a target cup hidden behind a screen. ActiveGlasses reached an 85% success rate by actively adjusting its viewpoint to perceive the pouring target, whereas passive vision systems struggled significantly.

- Bread Insertion: For this fine manipulation task requiring precise camera orientation to observe a toaster slot, ActiveGlasses demonstrated an 80% success rate.
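The reported figures can be put side by side with a few lines of arithmetic. This sketch uses only the numbers quoted above; the baseline value is the approximate "around 50%" figure, and the mean is a simple unweighted average that assumes the three tasks count equally.

```python
# Success rates as quoted in this article (the baseline figure is approximate).
activeglasses = {
    "Book Placement": 0.90,
    "Occluded Distant Pour Water": 0.85,
    "Bread Insertion": 0.80,
}
baseline_book_placement = 0.50  # RISE without active vision, "around 50%"

absolute_gain = activeglasses["Book Placement"] - baseline_book_placement
relative_gain = activeglasses["Book Placement"] / baseline_book_placement
mean_success = sum(activeglasses.values()) / len(activeglasses)

print(f"Book Placement gain: {absolute_gain * 100:.0f} points ({relative_gain:.1f}x)")
print(f"Mean success over three tasks: {mean_success * 100:.0f}%")
```

On Book Placement this works out to a gain of roughly 40 percentage points, or about 1.8 times the baseline, with an average success rate of 85% across the three tasks.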

The system showcased robust zero-shot transfer, meaning no additional training was needed on the robot itself after the human demonstration, greatly reducing deployment effort. Critically, it generalized across two different robot platforms, indicating its adaptability beyond a single hardware setup. This capability to complete tasks faster and more reliably in scenarios requiring dynamic viewpoint adjustment underlines the strength of the coordinated active vision approach.

Figure 6: Policy performance showing completion time (bar) and success rate (line) across various tasks, highlighting the effectiveness of ActiveGlasses compared to baselines.

Why This Matters for Your Drone Operations

For the drone community, ActiveGlasses represents a significant step towards more intuitive and capable aerial manipulation. Consider the possibilities: teaching a drone with a gripper to inspect a delicate turbine blade for micro-fractures, pick up a specific sample from a hazardous environment, or even assist in intricate construction tasks by precisely placing components. All of this, simply by demonstrating the action from your perspective. Such technology could drastically reduce the complexity and cost of deploying drones for intricate tasks that currently require highly specialized programming or remote pilot expertise. Drones could learn to navigate complex industrial environments, perform precise object manipulation for search and rescue operations (e.g., retrieving small items from debris), or carry out maintenance on hard-to-reach infrastructure. The lightweight nature of the ZED Mini camera (just 65 g) also makes it particularly suitable for integration into smaller, agile drone platforms, opening up new possibilities for airborne robotics where payload capacity is always at a premium. This system advances how we can teach drones, moving from explicit coding to implicit learning through observation.

The Road Ahead: Limitations and Next Steps

While ActiveGlasses presents a compelling vision for robot learning, it's important to acknowledge its current scope and limitations. The system isn't a silver bullet for all robot training needs, particularly when considering the unique challenges of drone deployment:

- Limited Task Scope: The demonstrations and tasks presented are currently confined to tabletop manipulation. Scaling this approach to larger, more dynamic, or unstructured real-world drone environments presents significant challenges, especially for tasks involving large workspaces or variable terrains.

- Embodiment Gap for Free-Flying Drones: While ActiveGlasses effectively bridges the embodiment gap for a fixed robot arm, a drone's active vision isn't just about head movement; it's also about whole-body movement and maintaining stable flight while manipulating. Adapting the 6-DoF perception arm's movements to a free-flying drone's kinematics, dynamics, and control loops is a non-trivial next step that would require substantial research into integrated flight and manipulation control.

- Lack of Force/Tactile Feedback: The system primarily extracts object trajectories from visual observations. The nuances of human force application, compliance, or tactile feedback during manipulation are not directly captured or transferred. This limitation affects its applicability to tasks requiring fine motor control, delicate interactions, or robust error recovery based on physical contact.

- Generalization to Novel Objects and Environments: While zero-shot transfer for learned skills is demonstrated, the paper doesn't deeply explore generalization to entirely novel objects with significantly different geometries or textures, or to environments that differ substantially from the training setup, without requiring new demonstrations. Real-world drone tasks often involve highly variable and unpredictable objects and scenes.

- Perception Arm Complexity: While the human wears simple glasses, the robot requires a separate 6-DoF perception arm to reproduce the active vision. Integrating such an arm onto an already payload-constrained drone, alongside a manipulation arm, adds significant weight, complexity, and power draw, which are critical considerations for aerial platforms.

Can You Build This Yourself?

Replicating the full ActiveGlasses system for a hobbyist drone builder isn't a weekend project, but the underlying hardware components are quite accessible. The capture rig pairs commercially available XREAL Glasses with a ZED Mini stereo camera, recording egocentric stereo video and 6-DoF head movement, and both devices are available off-the-shelf. The core policy is a modified version of the RISE framework, a published research method, suggesting that advanced enthusiasts with a strong background in deep learning and robotics could potentially adapt or contribute to similar open-source implementations. The main hurdle for DIY application would be acquiring and integrating the robot hardware for deployment, namely a manipulation arm plus a precise 6-DoF perception arm to carry the camera, and then adapting the learned policies to a drone's unique locomotion and manipulation capabilities. While the conceptual framework is clearly laid out, significant engineering effort would be required to transition this from a fixed-base robot arm to a free-flying aerial platform.

Beyond Just Seeing: The Broader AI Landscape

The work on ActiveGlasses lays a strong foundation for direct, intuitive skill transfer to robots. However, a robot that can do needs a brain that can think. Here, 'Act Wisely: Cultivating Meta-Cognitive Tool Use in Agentic Multimodal Models' becomes relevant. It delves into how an autonomous agent, like a drone, decides when and how to deploy its learned skills, arbitrating between its internal knowledge and external tools such as its active vision system or a gripper. This 'thinking' layer complements ActiveGlasses' 'doing.' Similarly, to provide that overarching intelligence, 'OpenVLThinkerV2: A Generalist Multimodal Reasoning Model for Multi-domain Visual Tasks' presents a broader AI framework. Such a generalist model could serve as the drone's comprehensive 'brain,' integrating the specific manipulation skills learned via ActiveGlasses into a full understanding of its environment and higher-level mission goals. And to ensure the active vision system has the best possible input, 'Self-Improving 4D Perception via Self-Distillation' offers methods for drones to continuously enhance their understanding of 3D objects and their movement over time, without needing expensive manual annotations. This process directly feeds into the quality of data the ActiveGlasses system would use, ensuring the highest fidelity input for learned manipulation tasks and robust operation in dynamic drone environments.

The Future is Demonstrated

ActiveGlasses shifts the paradigm from programming complex drone actions to simply demonstrating them with your own eyes, paving the way for a generation of truly intuitive, adaptable, and highly capable aerial robots.

Paper Details

Title: ActiveGlasses: Learning Manipulation with Active Vision from Ego-centric Human Demonstration

Authors: Yanwen Zou, Chenyang Shi, Wenye Yu, Han Xue, Jun Lv, Ye Pan, Chuan Wen, Cewu Lu

Published: 2024-04-12

arXiv: 2604.08534

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.