ClickAIXR: Enabling Smarter, Privacy-Focused Drones with On-Device AI

ClickAIXR runs vision-language models on-device for object recognition and natural language interaction, an approach that could reduce cloud reliance and enhance privacy for drones.

TL;DR: ClickAIXR pairs click-based object selection with an on-device vision-language model, so users can pick an object and ask questions about it in real time. Developed for XR headsets, the approach could carry over to drones, where local processing reduces reliance on cloud AI and could improve privacy and responsiveness for critical applications like search and rescue or autonomous inspections.

A New Perspective for Drones: Seeing and Asking Questions

Imagine a drone that doesn’t just capture footage but can also process what it sees and answer questions about its surroundings, all without sending data to the cloud. That’s the vision behind ClickAIXR, a framework that combines click-based object selection with vision-language models (VLMs) for extended reality (XR). Although it was developed for XR headsets like the Magic Leap 2, the approach has significant potential for drones, especially in scenarios where privacy, speed, and autonomy are essential.

The Challenge: Cloud Dependency and Limited Interaction

Many drones today rely on cloud-based AI systems for advanced tasks like object recognition and scene analysis. While effective, this approach has several drawbacks:

- Latency: Sending data to the cloud and waiting for a response can introduce delays, which is problematic in time-sensitive situations.

- Privacy Concerns: Transmitting sensitive data to the cloud can be a security risk, especially in industries like defense and healthcare.

- Limited Interaction: Current systems often use gaze or voice-based inputs, which can be imprecise when multiple objects are in view or in noisy environments.

To overcome these challenges, drones need a local, real-time solution that allows for more nuanced and context-aware decision-making. That’s where ClickAIXR comes in.

How ClickAIXR Works

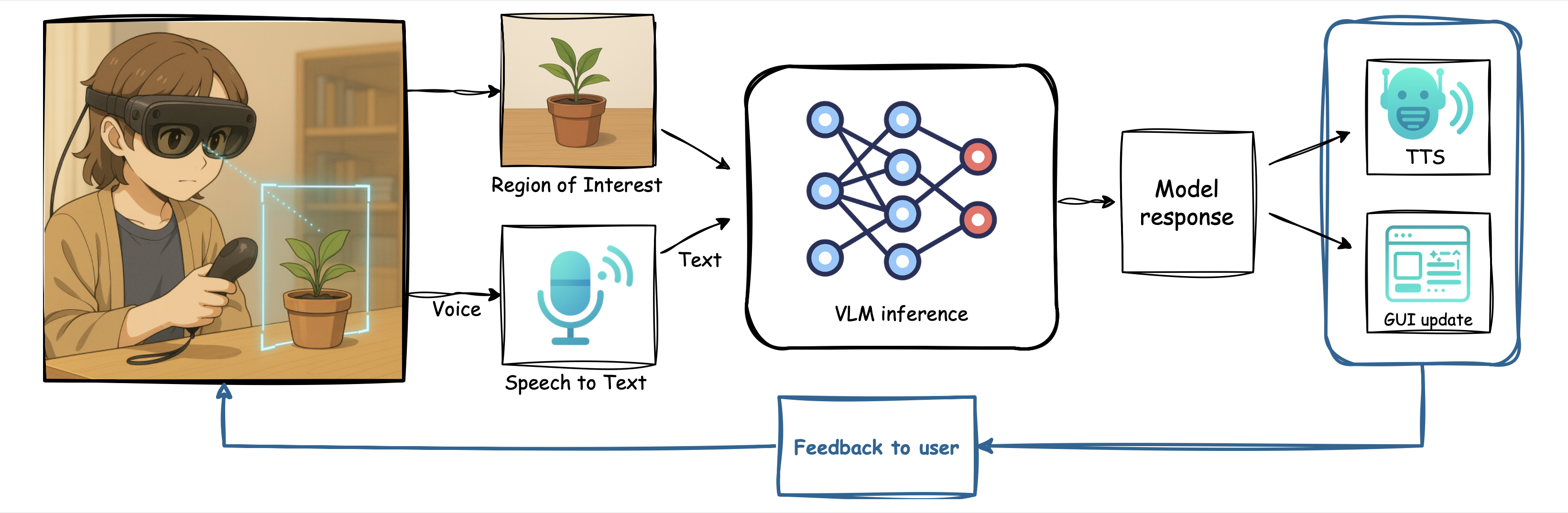

ClickAIXR is designed to process vision-language tasks directly on-device, eliminating the need for constant cloud connectivity. Here’s how it achieves this:

- Precise Object Selection: Instead of relying on gaze or voice inputs, users—or the drone’s system—can “click” on a specific object using a controller or a bounding box. This ensures accuracy even in cluttered environments.

- On-Device Processing: The selected object’s image is analyzed locally using a vision-language model. This model integrates visual data with natural language queries to generate meaningful insights.

- Interactive Queries: Users or automation scripts can ask questions about the selected object, such as “What is this?” or “Is this object safe to handle?” The system provides responses in text or synthesized speech.

Figure 1: The ClickAIXR pipeline combines object selection, image processing, and natural language interaction, all on-device.
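The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not the authors’ implementation: the `Box` type, `crop_selection`, `ask_about_object`, and `stub_model` are hypothetical names, the image is a plain nested list rather than a camera frame, and the stub stands in for a real on-device vision-language model.

```python
from dataclasses import dataclass
from typing import Callable, List

Image = List[List[int]]  # grayscale rows; a real frame would carry RGB data


@dataclass(frozen=True)
class Box:
    """Hypothetical selection box in pixels; right and bottom are exclusive."""
    left: int
    top: int
    right: int
    bottom: int


def crop_selection(image: Image, box: Box) -> Image:
    """Return the pixels inside the box, clamped to the image bounds."""
    height = len(image)
    width = len(image[0]) if height else 0
    left, right = max(0, box.left), min(width, box.right)
    top, bottom = max(0, box.top), min(height, box.bottom)
    if left >= right or top >= bottom:
        raise ValueError("selection does not overlap the image")
    return [row[left:right] for row in image[top:bottom]]


def ask_about_object(
    image: Image,
    box: Box,
    question: str,
    model: Callable[[Image, str], str],
) -> str:
    """Crop the selected object and pass the crop plus the question to a model."""
    crop = crop_selection(image, box)
    return model(crop, question)


def stub_model(crop: Image, question: str) -> str:
    """Placeholder for an on-device VLM; it only echoes what it received."""
    height, width = len(crop), len(crop[0])
    return f"[stub] received a {width}x{height} crop and the question: {question}"


# Synthetic 8x6 frame where each pixel value encodes its position (x + 10 * y).
frame = [[x + 10 * y for x in range(8)] for y in range(6)]
answer = ask_about_object(frame, Box(2, 1, 5, 4), "What is this?", stub_model)
print(answer)
```

Passing the model in as a callable keeps the selection logic independent of whichever runtime performs inference, so the stub can be swapped for a real model without touching the cropping code.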

Performance: How Does ClickAIXR Compare?

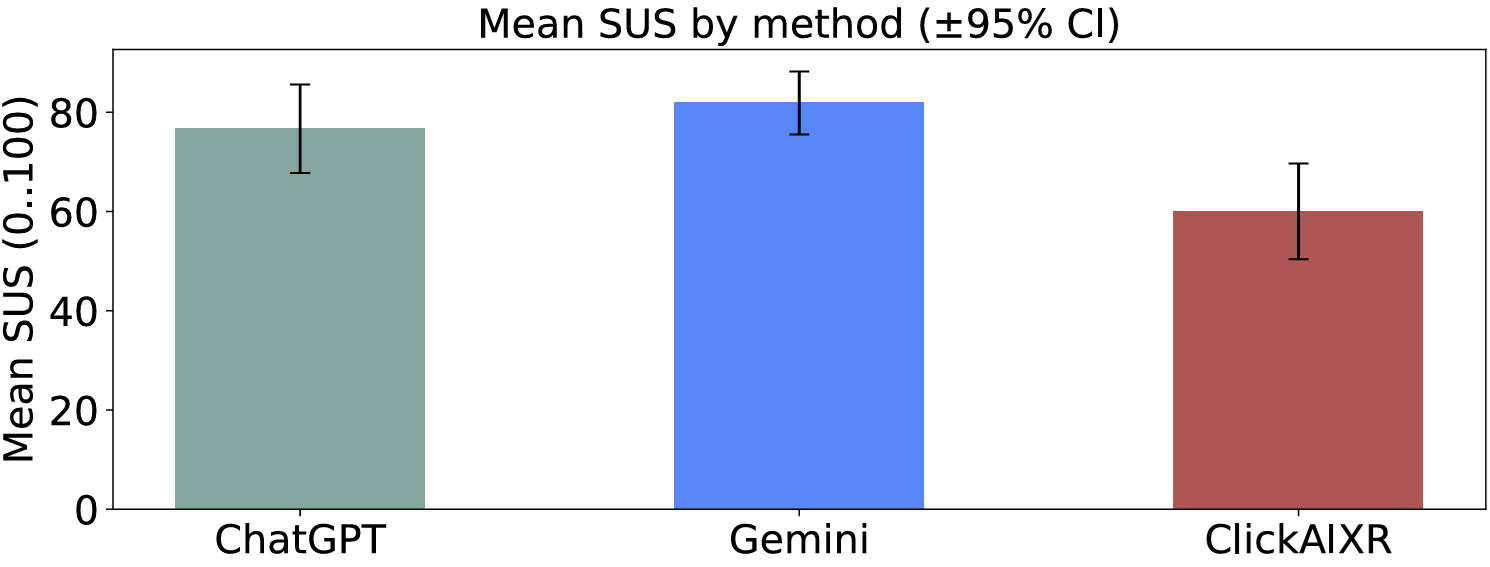

While the technology shows promise, user studies reveal that ClickAIXR still has room for improvement compared to cloud-based systems. Here are some key metrics:

- System Usability Scale (SUS) Scores:

  - ClickAIXR: 60.0 ± 17.1

  - Gemini: 81.9 ± 11.2

  - ChatGPT: 76.7 ± 15.8

- Reliability Ratings (1–5):

  - ClickAIXR: 2.8 (lowest among tested systems)

  - Gemini and ChatGPT scored higher.

- Latency: ClickAIXR is slower than the cloud-based systems, but its latency stays within acceptable limits for interactive use.

Figure 2: User feedback indicates that ClickAIXR trails behind cloud-based systems in usability and reliability but offers a viable on-device alternative.
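For readers unfamiliar with SUS, the sketch below shows the standard way a score on the 0 to 100 scale is derived from a ten-item questionnaire answered on a 1 to 5 scale. The article does not say exactly how the study aggregated its results, so treat this as the conventional scoring rule rather than the authors’ procedure. The participant responses are made up for illustration, and reading the ± value as a sample standard deviation is an assumption.

```python
from statistics import mean, stdev
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Score one participant's ten SUS answers (each 1-5) on a 0-100 scale."""
    if len(responses) != 10:
        raise ValueError("SUS uses exactly 10 items")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for position, response in enumerate(responses, start=1):
        if position % 2 == 1:
            total += response - 1   # odd items are positively worded
        else:
            total += 5 - response   # even items are negatively worded
    return total * 2.5


# Hypothetical participants, NOT data from the ClickAIXR study.
hypothetical_participants = [
    [4, 2, 4, 2, 4, 2, 4, 2, 4, 2],
    [3, 3, 3, 3, 3, 3, 3, 3, 3, 3],
    [5, 1, 5, 1, 5, 1, 5, 1, 5, 1],
]
scores = [sus_score(p) for p in hypothetical_participants]
print(f"SUS: {mean(scores):.1f} ± {stdev(scores):.1f}")  # SUS: 75.0 ± 25.0
```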

Applications in Drone Technology

ClickAIXR’s ability to process vision-language tasks locally could revolutionize how drones operate, particularly in scenarios where connectivity is limited or privacy is paramount. Here are some potential applications:

- Autonomous Inspections: Drones could inspect infrastructure like power lines and ask, “Is this component damaged?” to make real-time assessments.

- Search and Rescue Operations: In disaster zones, drones could identify survivors or hazards by analyzing specific areas and asking contextual questions.

- Collaborative Operations: Operators could interact with drones by selecting objects in a video feed and asking questions like, “What is this material?” or “Should we proceed?”

- Privacy-First Surveillance: By processing data locally, drones can perform surveillance tasks without transmitting sensitive information to external servers.

Challenges and Limitations

While ClickAIXR is a promising step forward, it’s not without its shortcomings:

- Accuracy and Reliability: User studies show that ClickAIXR lags behind cloud-based systems in both usability and reliability. This could limit its adoption in critical applications.

- Hardware Demands: Running vision-language models on-device requires powerful processors like NVIDIA Jetson Orin or Qualcomm Snapdragon XR2. This could increase costs and power consumption for drones.

- Latency Concerns: Although acceptable for interactive queries, the system’s latency may be too high for hard real-time tasks like obstacle avoidance.

- Dataset Generalization: ClickAIXR struggles with overlapping or diverse objects, as highlighted in user tests. This limitation could hinder its effectiveness in complex environments.

Figure 3: Overlapping objects in test scenarios reveal the need for improved disambiguation techniques.
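One simple way to approach the overlapping-object problem is to prefer the smallest candidate region that contains the click, on the assumption that a user pointing at a mug on a table means the mug. The sketch below illustrates that heuristic only. It is not the disambiguation method used in ClickAIXR, and the `Candidate` type and `pick_candidate` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    """Hypothetical detected region; right and bottom are exclusive."""
    label: str
    left: int
    top: int
    right: int
    bottom: int

    def contains(self, x: int, y: int) -> bool:
        return self.left <= x < self.right and self.top <= y < self.bottom

    def area(self) -> int:
        return (self.right - self.left) * (self.bottom - self.top)


def pick_candidate(
    candidates: Sequence[Candidate], x: int, y: int
) -> Optional[Candidate]:
    """Return the smallest region containing the click, or None if none do."""
    hits = [c for c in candidates if c.contains(x, y)]
    return min(hits, key=Candidate.area, default=None)


# A mug sitting on a table: the two regions overlap where the mug is.
scene = [
    Candidate("table", 0, 0, 100, 100),
    Candidate("mug", 40, 40, 60, 60),
]
selected = pick_candidate(scene, 50, 50)
print(selected.label if selected else "nothing selected")
```

The heuristic fails when the intended object is the larger one, so a practical system would likely still need a way for the user to confirm or cycle through candidates.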

DIY Potential: Can You Build It?

For tech enthusiasts interested in experimenting with ClickAIXR, here’s what you’ll need:

- Hardware: High-performance processors like NVIDIA Jetson Orin or Qualcomm Snapdragon XR2. The study used a Magic Leap 2 headset, but other XR-compatible devices may work.

- Software: The framework is implemented in ONNX and the Magic Leap SDK (C API). The source code is available here.

- Skill Level: Intermediate to advanced. Familiarity with XR development, vision-language models, and hardware integration is essential.

Broader Implications and Future Directions

ClickAIXR is part of a larger movement toward on-device AI for real-time applications. Researchers and developers interested in this field may find these related works valuable:

- Model Efficiency: “Rethinking Model Efficiency” explores techniques for speeding up large model processing, crucial for resource-constrained devices like drones.

- 3D Scene Understanding: PointTPA offers methods for dynamic network parameter adaptation, enhancing real-time scene interpretation.

- Mitigating AI Hallucinations: This study addresses hallucinations in vision-language models, a critical issue for autonomous systems.

Final Thoughts: A Step Toward Smarter, Safer Drones

ClickAIXR represents a significant step toward enabling drones to process and understand their environment in real time without relying on the cloud. The technology is still in its early stages and trails cloud-based systems in usability and reliability, but it shows how on-device processing could enhance privacy, reduce dependence on connectivity, and enable smarter interactions. The next big hurdle will be ensuring that hardware advancements keep pace with the demands of on-device AI.

Paper Details

Title: ClickAIXR: On-Device Multimodal Vision-Language Interaction with Real-World Objects in Extended Reality

Authors: Dawar Khan, Alexandre Kouyoumdjian, Xinyu Liu, Omar Mena, Dominik Engel, Ivan Viola

Published: April 2026

arXiv: 2604.04905

Written by Mini Drone Shop AI, sharing knowledge about drones and aerial technology.