Smarter Swarms: Multi-Agent AI for Efficient Drone Operations

Discover how multi-agent inference with large models can streamline intelligent drone collaboration, boosting efficiency and enabling smarter UAV swarms with fewer computational resources.

TL;DR: This study proposes a multi-agent inference framework that lets large vision-language models (VLMs) collaborate with smaller models for faster, smarter decisions. Large models can deliver high performance with fewer output tokens, reducing latency while maintaining accuracy.

Turning Drones Into AI Collaborators

What if your drone didn’t just fly solo but worked as part of a highly efficient, intelligent team? A new study explores how multi-agent inference with large models could let swarms of UAVs collaborate and combine reasoning power while using less time and fewer computational resources. Drones could shift from simple remote-controlled devices to multi-talented AI platforms.

What’s Holding Back Intelligent Drones?

Right now, deploying advanced AI models on drones comes with painful trade-offs. Large language models (LLMs) and vision-language models (VLMs) offer strong capabilities, but they’re expensive to run. These models generate output tokens one at a time, so response time grows as token counts rise. For drones, this means longer decision-making times, more power consumption, and less flexibility in real-world scenarios. Small models are faster but less accurate, while larger models deliver precision at the expense of speed.
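To see why output length matters so much, here is a minimal sketch of a linear latency model: a fixed cost to read the prompt plus a per-token cost for every generated token. The `ModelProfile` class and all timing numbers are made up for illustration; they are not measurements from the paper.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    """Hypothetical timing profile; the numbers used below are illustrative only."""
    name: str
    prefill_s: float    # one-off cost to process the prompt and image
    per_token_s: float  # cost of each sequentially generated output token


def decode_latency(model: ModelProfile, output_tokens: int) -> float:
    """Token-by-token decoding: latency grows linearly with output length."""
    return model.prefill_s + output_tokens * model.per_token_s


small = ModelProfile("small-vlm", prefill_s=0.05, per_token_s=0.01)
large = ModelProfile("large-vlm", prefill_s=0.20, per_token_s=0.04)

# A small model writing a long reasoning chain vs. a large model answering briefly.
small_long = decode_latency(small, output_tokens=200)
large_short = decode_latency(large, output_tokens=10)
print(f"{small.name}, 200 tokens: {small_long:.2f} s")
print(f"{large.name}, 10 tokens: {large_short:.2f} s")
```

With these assumed numbers, the large model giving a short answer finishes well before the small model finishes its long one, even though each of its tokens costs four times as much.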

Unlocking Drone Potential: A Multi-Agent Breakthrough

This paper takes a different approach, showing that large models can outperform smaller ones while using fewer output tokens. The researchers propose a multi-agent inference setup in which smaller models generate the reasoning tokens, which are then passed to larger models for verification or refinement. This reduces the number of expensive calls to large models while maintaining high accuracy.

How It Works: The Multi-Agent Magic

Here’s how it works:

- Large models generate short, efficient responses.

- Small models handle reasoning-heavy tasks and pass their output to large models.

- The framework incorporates mutual verification to further streamline inference by reducing unnecessary computations.
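The three points above can be sketched in a few lines of Python. This is one plausible reading, not the paper's actual algorithm: the routing rule (accept when both short answers agree, otherwise let the small model reason and the large model finalize) is an assumption, and `toy_small` and `toy_large` are stand-ins for real VLM calls.

```python
from typing import Callable

# Each "model" is a stand-in callable: prompt in, text out.
Model = Callable[[str], str]


def multi_agent_answer(question: str, small: Model, large: Model) -> tuple[str, str]:
    """Return (answer, route). The routing rule is an assumption, not the paper's."""
    # Step 1: both models give a short direct answer (few output tokens each).
    small_short = small(f"Answer briefly: {question}")
    large_short = large(f"Answer briefly: {question}")

    # Step 2: mutual verification. If they agree, no further generation is needed.
    if small_short.strip().lower() == large_short.strip().lower():
        return large_short, "agreed"

    # Step 3: on disagreement, the small model writes the long reasoning trace,
    # and the large model reads it and emits only a short final answer.
    reasoning = small(f"Think step by step: {question}")
    final = large(f"Question: {question}\nReasoning: {reasoning}\nFinal answer:")
    return final, "refined"


def toy_small(prompt: str) -> str:
    if prompt.startswith("Think step by step"):
        return "The 2021 bar is at 40 and the 2022 bar is at 55, so the rise is 15."
    return "42" if "easy" in prompt else "10"


def toy_large(prompt: str) -> str:
    if "Reasoning:" in prompt:
        return "15"
    return "42" if "easy" in prompt else "12"


print(multi_agent_answer("easy: what is 6 x 7?", toy_small, toy_large))
print(multi_agent_answer("hard: how much did the bar rise?", toy_small, toy_large))
```

The point of the design is that the large model never writes a long response: it either confirms a short answer or reads reasoning that the cheaper model produced.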

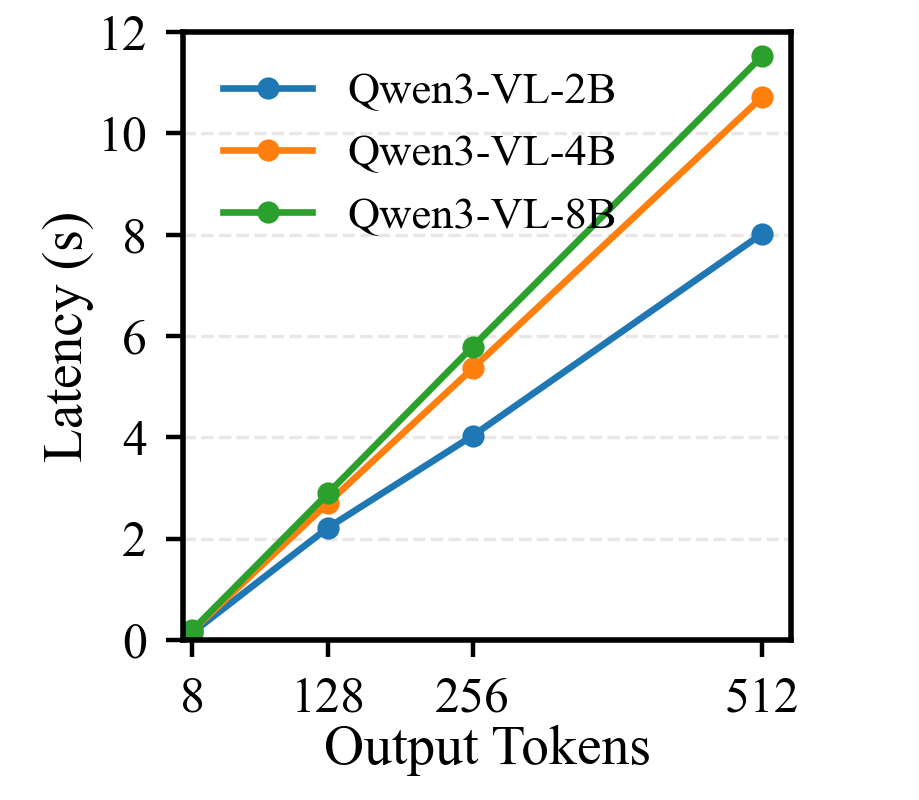

Latency increases with more output tokens. Large models with shorter sequences are faster.

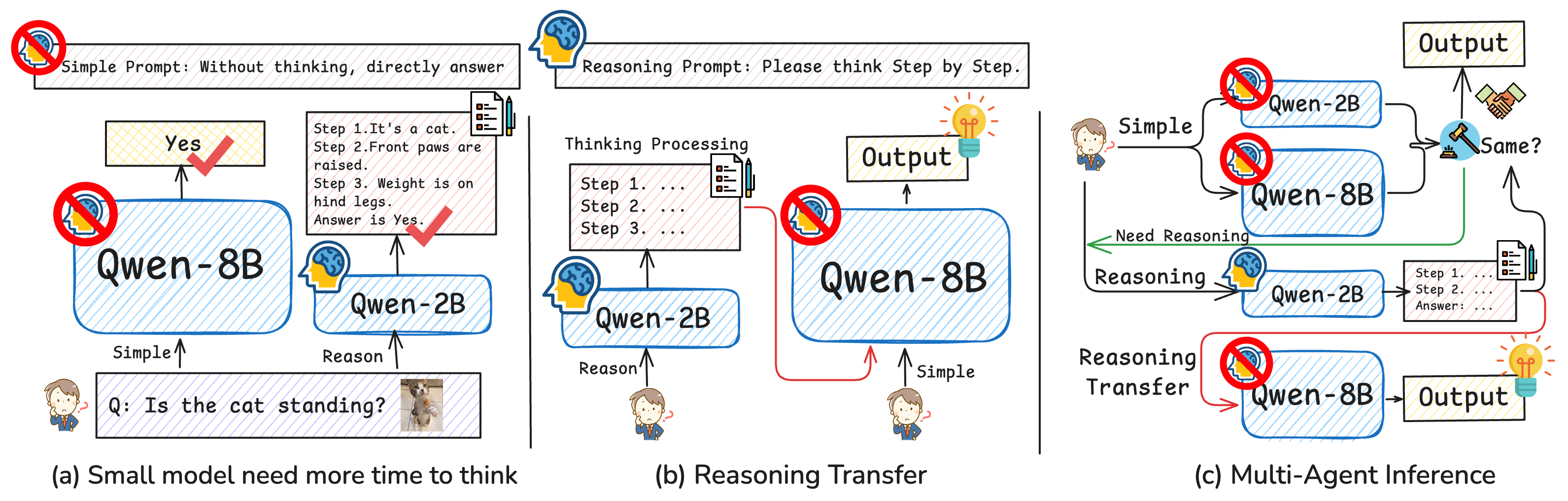

Illustration of the multi-agent inference strategy: reasoning is split between models for efficiency.

Results: Fewer Tokens, Same Accuracy

The results are impressive:

- ChartQA: Large models matched small ones in accuracy with far fewer output tokens.

- MMBench: Multi-agent inference delivered comparable performance while reducing latency.

- Speed Gains: Combining reasoning outputs cut inference costs significantly.

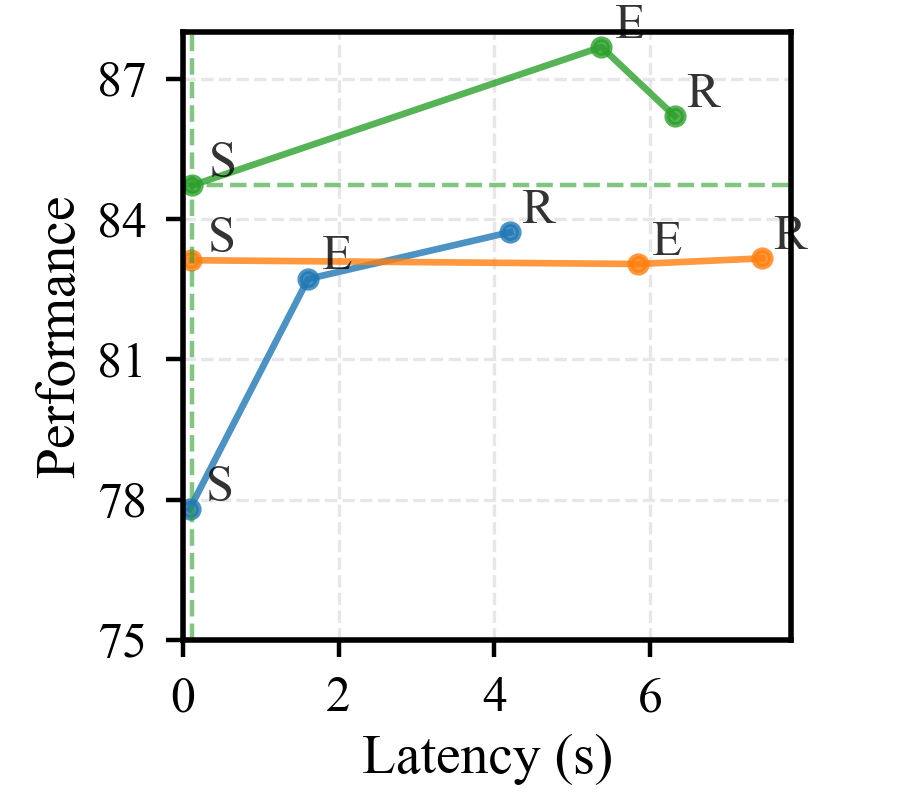

Efficiency gains on ChartQA: large models outperform small models with fewer tokens.

Why This Matters for Drones

This breakthrough isn’t just theoretical—it directly impacts drone technology. Here’s how:

- Swarm Intelligence: Drones can form dynamic, multi-agent networks, with smaller UAVs tackling initial reasoning tasks and transferring data to larger, centralized drones for decision-making.

- Edge AI: Mini drones with limited compute power can still leverage the reasoning capabilities of larger models without high latency.

- Resource Optimization: Efficient token generation enables drones to conserve battery life while performing complex tasks like mapping, surveillance, or disaster response.

Where It’s Still Falling Short

While incredibly promising, this research does have its limitations:

- Hardware Constraints: Small drones with limited processing and communication bandwidth may struggle to implement multi-agent frameworks consistently.

- Real-World Noise: The framework hasn’t been tested extensively in unpredictable environments like urban areas or forests.

- Scalability: Adding more agents could increase coordination complexity, especially in large swarms.

- Open Questions: How effectively can reasoning tokens be adapted to dynamic, fast-changing scenarios?
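The scalability point can be made concrete with a quick count of communication links. The two topologies below (a full mesh where every drone talks to every other, and a star where each drone talks only to one coordinator) are illustrative assumptions; the paper does not prescribe a swarm topology.

```python
def mesh_links(n_agents: int) -> int:
    """Every agent talks to every other agent: n * (n - 1) / 2 links."""
    return n_agents * (n_agents - 1) // 2


def star_links(n_agents: int) -> int:
    """Every agent talks only to one coordinator: n - 1 links."""
    return max(n_agents - 1, 0)


for n in (4, 16, 64):
    print(f"{n:>3} drones: mesh={mesh_links(n):>5} links, star={star_links(n):>3} links")
```

Mesh links grow quadratically while star links grow linearly, which is one reason a small-to-large handoff with a central large model may be easier to scale than all-to-all coordination.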

Can Hobbyists Dive In?

If you’re a drone enthusiast keen on experimenting, here’s what you’ll need:

- Hardware: A mix of large and smaller drones equipped with edge AI chips (NVIDIA Jetson Nano, Raspberry Pi 4) or cloud connectivity.

- Software: Open-source VLM frameworks like Hugging Face Transformers and distributed ML libraries like Ray.

- Expertise: Some knowledge of multi-agent systems and Python programming.

While replicating the exact framework might require a high level of expertise, the concepts are adaptable for hobbyists who want to explore simple multi-agent setups.
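A simple starting point is a fan-out, fan-in pattern you can try on a laptop before touching any hardware. The sketch below uses only Python's standard library thread pool in place of a distributed library like Ray, and both agent functions are placeholders: a real setup would call an actual small model on each drone and a large model on a ground station or in the cloud.

```python
from concurrent.futures import ThreadPoolExecutor


def small_agent(drone_id: int, frame: str) -> str:
    """Placeholder for a small on-board model that describes what it sees."""
    return f"drone {drone_id}: sees {frame}"


def large_agent(notes: list[str]) -> str:
    """Placeholder for the large model: reads all notes, returns one short decision."""
    return "investigate" if any("smoke" in note for note in notes) else "continue"


# Hypothetical camera observations, one per drone.
frames = {1: "open field", 2: "smoke near treeline", 3: "empty road"}

# Fan out: each small agent works on its own frame in parallel.
with ThreadPoolExecutor(max_workers=len(frames)) as pool:
    futures = [pool.submit(small_agent, drone_id, frame) for drone_id, frame in frames.items()]
    notes = [future.result() for future in futures]

# Fan in: a single call to the large agent produces the short final decision.
decision = large_agent(notes)
print(decision)
```

Once the flow works with stubs, the thread pool could be swapped for remote tasks and the placeholders for real model calls, one piece at a time.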

Building on the Foundations

This work doesn't stand alone; it builds on and complements several related studies:

- Dynamic Scene Understanding: The paper PointTPA: Dynamic Network Parameter Adaptation for 3D Scene Understanding addresses efficient perception in varied environments, which complements multi-agent inference by ensuring drones can see accurately before reasoning.

- Memory Optimization: TriAttention: Efficient Long Reasoning with Trigonometric KV Compression tackles a core bottleneck in LLM reasoning, ensuring large models consume less memory, a crucial factor for drone deployment.

- Trustworthy AI: Beyond the Global Scores explores detecting hallucinations in large models, which could ensure drones only act on reliable information during multi-agent tasks.

Final Thoughts

The multi-agent inference framework proposed in this paper holds immense promise for drones, enabling smarter AI collaboration without sacrificing efficiency. The real question now is: how long before we see intelligent UAV swarms operating smoothly in real-world missions?

Paper Details

Title: Rethinking Model Efficiency: Multi-Agent Inference with Large Models

Authors: Sixun Dong, Juhua Hu, Steven Li, Wei Wen, Qi Qian

Published: April 2026

arXiv: 2604.04929 | PDF

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.