Sharper Skies: New AI Sees Small Drone Targets Amidst Clutter

A new AI model, SFFNet, significantly improves object detection in complex drone imagery by effectively separating targets from background noise and leveraging multi-scale information. It offers various scales, balancing accuracy and efficiency for diverse drone applications.

TL;DR: A new AI model, SFFNet, dramatically improves drone object detection by intelligently separating targets from complex backgrounds and leveraging multi-scale information. It offers flexible versions, balancing accuracy and efficiency for various drone applications.

Your Drone's Next Vision Upgrade: Cutting Through the Clutter

Ever wish your drone could see things more clearly, especially those tiny, distant objects hidden in a complex urban sprawl or a dense forest? For hobbyists flying FPV, or engineers building autonomous inspection systems, accurate object detection isn't just a nice-to-have; it's fundamental for safety, navigation, and mission success. A new paper, SFFNet: Synergistic Feature Fusion Network With Dual-Domain Edge Enhancement for UAV Image Object Detection, pushes us closer to that reality, proposing an AI solution specifically engineered to make drone vision sharper and more reliable.

The Blurry Truth: Why Drones Struggle to See

Current object detection systems often fall short when deployed on drones, and it's not always the camera's fault. The primary culprits are usually two-fold: the sheer complexity of aerial backgrounds and the vast imbalance in target scales. Think about it: a drone camera might capture a vast cityscape where a tiny person or a small vehicle needs identifying. Traditional methods frequently get bogged down in background noise, treating it as signal, or they simply can't effectively leverage the rich multi-scale information present in a single image. Such limitations lead to missed detections, false positives, and ultimately, less reliable autonomous operations. The challenge isn't just about 'seeing' an object, but discerning it from everything else, regardless of how small or far away it is.
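To make the scale problem concrete, here is a minimal sketch using intersection over union (IoU), the overlap score detection benchmarks commonly use to decide whether a predicted box counts as a hit. The box sizes and the 3-pixel localization error are assumptions chosen for illustration, not values from the paper.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    return inter / (area_a + area_b - inter)


def shifted_iou(side, shift):
    """IoU between a square box and the same box shifted diagonally."""
    truth = (0, 0, side, side)
    guess = (shift, shift, side + shift, side + shift)
    return iou(truth, guess)


# Same 3-pixel error, two very different target sizes (assumed values).
small = shifted_iou(side=10, shift=3)    # e.g. a distant pedestrian
large = shifted_iou(side=100, shift=3)   # e.g. a nearby vehicle
print(f"10 px box:  IoU = {small:.2f}")
print(f"100 px box: IoU = {large:.2f}")
```

With a typical matching threshold of 0.5 IoU, the small box would be scored as a miss while the large one passes comfortably, even though the localization error is identical. That asymmetry is one reason tiny aerial targets are so unforgiving.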

How SFFNet Sharpens the View

To tackle these challenges, the researchers behind SFFNet introduced a synergistic feature fusion network with dual-domain edge enhancement. In plain terms, they built a smart AI that looks at images in two complementary ways to find edges and features, then merges that information intelligently. Here's a breakdown:

Dual-Domain Edge Enhancement with MDDC

First, they designed the Multi-scale Dynamic Dual-domain Coupling (MDDC) module. This is where the 'dual-domain' magic happens. Instead of just looking at pixels (the spatial domain), MDDC also analyzes the image in the frequency domain. Why? Because background noise often appears differently in the frequency domain, making it easier to filter out. By processing in both domains, MDDC effectively decouples multi-scale object edges from complex background noise. It's like having two sets of eyes, one for the big picture and one for the intricate details, filtering out distractions before they even reach the main processing unit.
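The frequency-domain intuition can be sketched on a single row of pixels. The snippet below is not the MDDC module; it is a toy high-pass filter, written with a naive pure-Python DFT so it runs without extra libraries (a real pipeline would use an FFT routine). The row length, the smooth background, the 3-pixel target, and the cutoff are all assumptions for illustration.

```python
import cmath
import math


def dft(signal):
    """Naive discrete Fourier transform; O(n^2), fine for one short row."""
    n = len(signal)
    return [
        sum(signal[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def idft(spectrum):
    """Inverse of dft(), returning only the real part."""
    n = len(spectrum)
    return [
        sum(spectrum[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)).real / n
        for t in range(n)
    ]


def high_pass(signal, cutoff):
    """Zero every frequency bin closer than `cutoff` to DC, then invert."""
    n = len(signal)
    spectrum = dft(signal)
    for k in range(n):
        # Bin n-k holds the matching negative frequency of bin k.
        if min(k, n - k) < cutoff:
            spectrum[k] = 0
    return idft(spectrum)


n = 64
# Assumed toy data: a smooth illumination gradient as the background ...
background = [100 + 20 * math.sin(2 * math.pi * t / n) for t in range(n)]
# ... plus a 3-pixel-wide bright target at columns 30-32.
row = [b + (30 if 30 <= t <= 32 else 0) for t, b in enumerate(background)]

filtered = high_pass(row, cutoff=2)
peak = max(range(n), key=lambda t: abs(filtered[t]))
print("strongest response at column", peak)
```

In this toy case the slowly varying background lives entirely in the lowest frequency bins, so removing them leaves a signal dominated by the small target and its edges. Real aerial clutter is far messier, which is presumably why the paper couples the frequency branch with a spatial one rather than relying on a fixed cutoff.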

Figure 2: Overview of the SFFNet framework. The framework integrates a backbone network with MDDC for efficient multi-scale dual-domain feature extraction, and realizes collaborative feature fusion in the neck through SFPN. This design ensures the accurate positioning and detection of small objects, effectively addressing the challenges in complex environments.

Figure 3: The detailed structure of the MDDC module. The MDDC module initially performs multi-scale decomposition on the input feature map to construct the base representation. Subsequently, through DEIE, it achieves complementary feature fusion in both the spatial and frequency domains. In the frequency domain branch of DEIE, features undergo spectral decomposition, removing low-frequency noise disturbances while enhancing high-frequency feature representations.

Synergistic Feature Pyramid Network (SFPN)

Next, to truly understand the objects it's seeing, SFFNet uses a Synergistic Feature Pyramid Network (SFPN). This component is all about enhancing the model's ability to represent both the geometric shape and the semantic meaning of objects across different scales. SFPN employs linear deformable convolutions, which are flexible enough to adaptively capture irregular object shapes. Think of it as a deformable lens that can adjust its focus to perfectly outline an object, no matter how unusual its form. Furthermore, it includes a Wide-area Perception Module (WPM), which builds long-range contextual associations around targets. This means it doesn't just see a car; it understands it's a car on a road, surrounded by other cars, giving it a richer understanding of the scene.
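The core idea behind deformable sampling can be sketched in a few lines. This is the generic mechanism (a regular kernel grid plus per-tap offsets, read with bilinear interpolation), not the paper's linear deformable convolution; in a real network the offsets are predicted by a learned layer, whereas here they are set by hand. The image, weights, and offsets are assumptions for illustration.

```python
import math


def bilinear(image, y, x):
    """Sample a 2D list at fractional (y, x); out-of-range pixels count as 0."""
    y0, x0 = math.floor(y), math.floor(x)
    dy, dx = y - y0, x - x0

    def px(r, c):
        inside = 0 <= r < len(image) and 0 <= c < len(image[0])
        return image[r][c] if inside else 0.0

    return ((1 - dy) * (1 - dx) * px(y0, x0) + (1 - dy) * dx * px(y0, x0 + 1)
            + dy * (1 - dx) * px(y0 + 1, x0) + dy * dx * px(y0 + 1, x0 + 1))


def deformable_response(image, center, weights, offsets):
    """Weighted sum over a 3x3 grid whose taps are moved by per-tap offsets."""
    cy, cx = center
    grid = [(gy, gx) for gy in (-1, 0, 1) for gx in (-1, 0, 1)]
    total = 0.0
    for (gy, gx), w, (oy, ox) in zip(grid, weights, offsets):
        total += w * bilinear(image, cy + gy + oy, cx + gx + ox)
    return total


# Toy image: a one-pixel-wide diagonal line, standing in for a slanted object.
image = [[1.0 if r == c else 0.0 for c in range(7)] for r in range(7)]

# A kernel that only listens to its middle row (left, center, right taps).
weights = [0, 0, 0, 1 / 3, 1 / 3, 1 / 3, 0, 0, 0]

no_offsets = [(0, 0)] * 9
# Hand-set offsets: lift the left tap one row up, drop the right tap one row down.
bent_offsets = [(0, 0)] * 3 + [(-1, 0), (0, 0), (1, 0)] + [(0, 0)] * 3

rigid = deformable_response(image, (3, 3), weights, no_offsets)
bent = deformable_response(image, (3, 3), weights, bent_offsets)
print(f"rigid kernel: {rigid:.2f}, deformed kernel: {bent:.2f}")
```

With zero offsets the kernel behaves like an ordinary fixed grid and only catches one pixel of the diagonal. Once the taps are allowed to move, the same three weights line up with the slanted shape and the response triples.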

Figure 4: The detailed structure of the WPM. WPM employs a parallel structure consisting of a large kernel convolution, a small kernel convolution, and two strip convolutions.
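A quick back-of-envelope count suggests why strip convolutions are an attractive way to widen the field of view. The kernel sizes below are assumptions, since the article does not state the ones SFFNet uses, and the count assumes depthwise kernels (one filter per channel).

```python
def weights_per_channel(height, width):
    """Weight count of one depthwise kernel (one filter per channel)."""
    return height * width


def wpm_branch_costs(large=7, small=3, strip=11):
    """Per-channel weights for four parallel branches.

    The kernel sizes are assumptions for illustration; the article does not
    state the sizes SFFNet uses.
    """
    return {
        f"large {large}x{large}": weights_per_channel(large, large),
        f"small {small}x{small}": weights_per_channel(small, small),
        f"strip 1x{strip}": weights_per_channel(1, strip),
        f"strip {strip}x1": weights_per_channel(strip, 1),
    }


costs = wpm_branch_costs()
for name, count in costs.items():
    print(f"{name:>12}: {count} weights per channel")

strip_pair = costs["strip 1x11"] + costs["strip 11x1"]
full_square = weights_per_channel(11, 11)
print(f"two strips: {strip_pair} weights, full 11x11 kernel: {full_square} weights")
```

Under these assumed sizes, a horizontal and a vertical strip together reach 11 pixels in each direction for 22 weights per channel, where a full 11x11 kernel would need 121. The trade-off is that strips only see along a cross, which is one plausible reason to run them in parallel with square kernels.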

Performance that Counts: Hard Numbers

The researchers put SFFNet through its paces on two notoriously challenging aerial datasets: VisDrone and UAVDT. These datasets are packed with small objects, dense scenes, and varying conditions that really test a model's mettle.

What did they find? SFFNet doesn't just improve; it sets new benchmarks.

- VisDrone Dataset: The most capable variant, SFFNet-X, achieved an impressive 36.8 AP (Average Precision). This marks a significant jump compared to previous state-of-the-art models.

- UAVDT Dataset: On this dataset, SFFNet-X hit 20.6 AP, again demonstrating superior performance.

- Scalability: Importantly, SFFNet isn't a one-size-fits-all solution. The authors designed six different scale detectors (N/S/M/B/L/X), allowing developers to choose between high accuracy (SFFNet-X) and lightweight efficiency (SFFNet-N/S) depending on their drone's hardware constraints and mission requirements. The lightweight SFFNet-N and SFFNet-S models maintain a strong balance between accuracy and computational footprint, which is crucial for edge devices.

Figure 1: The relationship between the AP value and the number of parameters for different object detection algorithms on the VisDrone dataset. The points near the upper left corner of the figure indicate that the model can achieve higher accuracy while maintaining a lower number of parameters. Our algorithm (marked “Ours”) outperforms others by achieving a higher AP value with fewer parameters.

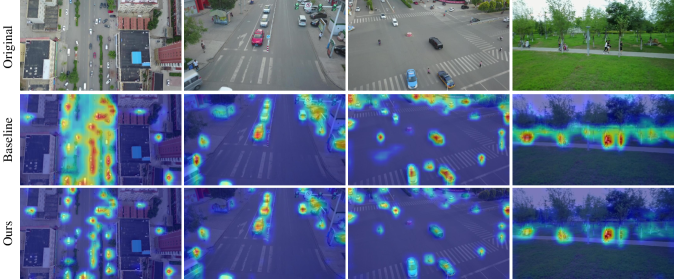
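The "upper left corner" reading of an accuracy-versus-parameters plot amounts to finding the models that no other model beats on both axes at once. The sketch below shows that check; the detector names and numbers are made up for illustration and are NOT figures from the paper.

```python
def pareto_front(models):
    """Names of models that no other model beats on both size and accuracy.

    A model is dominated when another has no more parameters and no less AP,
    and is strictly better on at least one of the two.
    """
    front = []
    for name, params, ap in models:
        dominated = any(
            p <= params and a >= ap and (p < params or a > ap)
            for _, p, a in models
        )
        if not dominated:
            front.append(name)
    return front


# Made-up (name, parameters in millions, AP) points, NOT results from the paper.
candidates = [
    ("det_a", 3.0, 20.0),
    ("det_b", 10.0, 25.0),
    ("det_c", 12.0, 24.0),   # bigger and less accurate than det_b
    ("det_d", 30.0, 31.0),
    ("det_e", 45.0, 30.0),   # bigger and less accurate than det_d
]
print(pareto_front(candidates))   # ['det_a', 'det_b', 'det_d']
```

When picking a variant for a specific drone, the same filter can be applied to measured numbers from your own hardware, then narrowed to whatever fits the parameter or memory budget.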

Qualitative results further illustrate the improvements. Figure 6 shows SFFNet outperforming baseline models in dense areas, under noisy weather conditions, and with blur from camera rotation. Figure 7's heatmaps clearly demonstrate how SFFNet suppresses irrelevant background noise and focuses more precisely on target objects. These aren't just theoretical gains; they translate directly to better real-world performance.

Figure 6: The comparison of detection visualization results between the baseline model and SFFNet. The first three rows are from the VisDrone dataset, and the last three are from the UAVDT dataset. Our model performs better in handling dense areas from various perspectives (rows 2–4), noise occlusion resulting from specific weather conditions (row 5), and blur caused by camera rotation (rows 1 and 6).

Figure 7: The comparison of heatmap visualization results between the baseline model and SFFNet on the VisDrone dataset. Our model not only suppresses irrelevant background noise but also focuses more on detecting the target objects.

Unlocking Sharper Vision: Why This Matters for Drones

SFFNet has significant practical implications for anyone involved with drones:

- Enhanced Safety: For autonomous flight, better object detection means drones can more reliably identify obstacles, other aircraft, or even people, reducing collision risks. Such reliability is critical for urban air mobility or package delivery drones.

- Precision Inspections: Drones used for infrastructure inspection (e.g., power lines, wind turbines, bridges) can more accurately spot defects, cracks, or anomalies, even small ones, from a greater distance or in challenging lighting.

- Search and Rescue: In scenarios like disaster response, drones equipped with SFFNet could more effectively locate survivors or points of interest in complex, debris-strewn environments, especially small targets that are easily missed by current systems.

- Environmental Monitoring: Tracking wildlife, detecting illegal dumping, or monitoring agricultural health is more precise when the drone can reliably differentiate targets from background noise.

- New Applications: This enhanced capability opens doors for applications previously limited by visual accuracy, potentially enabling more sophisticated surveillance, traffic monitoring, or even autonomous drone sports where precise object tracking is paramount.

The Fine Print: Limitations and What's Next

While SFFNet marks a notable step forward, it's important to consider its boundaries:

- Real-world Variability: The researchers tested the models on challenging datasets, but real-world conditions can introduce even more extreme variables like unexpected weather, optical distortions, or novel object types not seen during training. Generalization to truly unseen environments always presents a hurdle.

- Computational Overhead for X-variant: While the N and S models are lightweight, the top-performing SFFNet-X still demands significant computational resources. Deploying this full model on smaller, power-constrained drones might require specialized edge AI hardware or offboard processing.

- Dynamic Scenes: The paper shows improvements in handling blur from camera rotation, but rapid, unpredictable motion of both the drone and targets still poses challenges for any vision system. Maintaining high frame rates and low-latency detection in highly dynamic scenes remains an active research area.

- Beyond Detections: While SFFNet excels at detecting objects, it doesn't inherently understand the broader context or intent. This is where other AI models become crucial.

Is it DIY-Friendly? Getting Your Hands Dirty

For the builders and hobbyists, the good news is that the authors plan to make the code available on GitHub (https://github.com/CQNU-ZhangLab/SFFNet). This means you could potentially experiment with integrating SFFNet into your own drone vision projects. However, training or fine-tuning the X variant would likely require substantial GPU resources (think NVIDIA RTX 3090 or better). The lighter N and S versions, once trained, could potentially run on powerful embedded platforms like NVIDIA Jetson series boards (Orin Nano/NX) or even advanced Raspberry Pi variants with AI accelerators, given sufficient optimization. This is a research paper, so expect some technical heavy lifting to get it production-ready on a custom drone.
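Before committing to a board, it helps to measure what a detector actually costs per frame. The harness below is a minimal sketch that times any Python callable; `fake_detect` is a hypothetical stand-in, since the article does not describe the repository's inference API, and the frame data is dummy input.

```python
import statistics
import time


def benchmark(detect, frames, warmup=3):
    """Time `detect` on each frame; return (median latency in ms, frames per second)."""
    for frame in frames[:warmup]:
        detect(frame)                 # warm-up calls are not timed
    latencies = []
    for frame in frames:
        start = time.perf_counter()
        detect(frame)
        latencies.append(time.perf_counter() - start)
    median_s = statistics.median(latencies)
    return median_s * 1000.0, 1.0 / median_s


def fake_detect(frame):
    """Hypothetical stand-in for a real detector: just burns a little CPU."""
    return sum(pixel * pixel for pixel in frame)


# Dummy "frames"; swap in real images and a real model call on your hardware.
frames = [list(range(10_000)) for _ in range(20)]
latency_ms, fps = benchmark(fake_detect, frames)
print(f"median latency: {latency_ms:.2f} ms, about {fps:.0f} FPS")
```

The median is used rather than the mean so that an occasional slow frame (a thermal throttle, a background process) does not distort the picture. On an embedded board, run it for long enough to reach steady-state temperature before trusting the number.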

This work on SFFNet complements other ongoing research in making drones smarter. For instance, while SFFNet helps drones see better, complementary multi-encoder vision-language models, as explored in "CoME-VL: Scaling Complementary Multi-Encoder Vision-Language Learning," could help drones understand what they see with richer context, bridging raw visual data and meaningful linguistic interpretations. Such capabilities would enable more intelligent decision-making, moving beyond just detecting an object to understanding its purpose or state. Furthermore, ensuring a drone's interpretations are reliable remains critical. "Understanding the Role of Hallucination in Reinforcement Post-Training of Multimodal Reasoning Models" addresses the issue of 'hallucination' in multimodal reasoning. Once a drone has processed visual data, its subsequent actions must be based on accurate information, which is paramount for autonomous drone safety and effectiveness.

Ultimately, SFFNet offers a robust framework for significantly improving object detection from a drone's perspective, directly impacting how reliably autonomous drones can operate. What will you build with a drone that sees this clearly?

Paper Details

Title: SFFNet: Synergistic Feature Fusion Network With Dual-Domain Edge Enhancement for UAV Image Object Detection

Authors: Wenfeng Zhang, Jun Ni, Yue Meng, Xiaodong Pei, Wei Hu, Qibing Qin, Lei Huang

Published: April 4, 2026

arXiv: 2604.03176

Written by Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.