DRIVE-Nav: Smarter Drone Navigation for Complex Language Commands

A new framework, DRIVE-Nav, allows drones to efficiently navigate unknown environments using natural language commands, significantly improving path efficiency and reliability over existing methods.

TL;DR: This paper introduces DRIVE-Nav, a new approach for Open-Vocabulary Object Navigation (OVON) that helps drones find language-specified targets in unknown spaces more efficiently. Instead of relying on raw, unstable frontier points, DRIVE-Nav organizes exploration around persistent directions, drastically reducing redundant revisits and improving path selection. It's a pragmatic step toward drones that truly understand and act on complex verbal instructions.

Beyond Basic Flight: Drones That Understand

We're past the point where drones just fly. Now, the goal is true autonomy: systems that understand complex commands and navigate unfamiliar environments to achieve them. This research, DRIVE-Nav, tackles a significant hurdle in making that a reality for our mini drones: Open-Vocabulary Object Navigation (OVON). This isn't just about following GPS waypoints or avoiding obstacles; it's about a drone hearing "find the package on the kitchen counter" and intelligently finding that counter in an unknown house. It’s a foundational step towards drones becoming truly helpful, intelligent agents, not just remote-controlled cameras.

Why Current Drone Brains Get Lost

Current zero-shot navigation methods for OVON often struggle. They typically reason over "frontier points," the boundaries between explored and unexplored territory. While seemingly logical, this approach has serious drawbacks. Because the drone decides based on incomplete observations, its route selections are unstable: it keeps re-evaluating the same areas and performs unnecessary actions. This translates directly to wasted battery life, slower mission completion, and a higher chance of mission failure. For a mini drone, every joule of energy and every second of flight time matters. We need a system that thinks ahead, not just reacts to the immediate unknown.
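To make "frontier points" concrete, here is a minimal sketch of classic frontier detection on a 2D occupancy grid. It is not taken from the paper: the cell labels (`UNKNOWN`, `FREE`, `OCCUPIED`) and the function name `find_frontier_cells` are hypothetical, and the grid is a toy example. A cell counts as a frontier if it is free and touches at least one unknown cell.

```python
from typing import List, Tuple

# Hypothetical cell labels for a toy occupancy grid (assumption, not from the paper).
UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def find_frontier_cells(grid: List[List[int]]) -> List[Tuple[int, int]]:
    """Return (row, col) of every free cell with at least one unknown 4-neighbour."""
    rows, cols = len(grid), len(grid[0])
    frontiers = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != FREE:
                continue
            # Check the four axis-aligned neighbours for unexplored space.
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break
    return frontiers


grid = [
    [FREE, FREE, UNKNOWN],
    [FREE, OCCUPIED, UNKNOWN],
    [FREE, FREE, FREE],
]
print(find_frontier_cells(grid))  # [(0, 1), (2, 2)]
```

Notice that the frontier list changes every time a new observation fills in unknown cells, which is one way to picture why plans built directly on these points can keep shifting.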

DRIVE-Nav's Intelligent Compass

DRIVE-Nav shifts the paradigm from reactive frontier exploration to proactive, direction-based reasoning. Instead of blindly chasing every frontier, it focuses on identifying and inspecting persistent, promising directions. The system extracts and tracks these directional candidates from weighted Fast Marching Method (FMM) paths. Once a drone reaches a decision point, it re-clusters local bearings, identifying new, distinct "direction categories" if angular gaps exceed a 45-degree threshold.
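The gap-based grouping can be pictured with a short sketch. This is one plausible reading of the 45-degree rule, not the authors' implementation: the function names `cluster_bearings` and `circular_mean_deg` are hypothetical, and the bearings are made-up values in degrees. Bearings are sorted around the circle, and a new direction category starts wherever the gap to the next bearing exceeds the threshold.

```python
import math
from typing import List


def cluster_bearings(bearings_deg: List[float],
                     gap_threshold_deg: float = 45.0) -> List[List[float]]:
    """Group bearings into direction categories, splitting at gaps above the threshold."""
    if not bearings_deg:
        return []
    angles = sorted(b % 360.0 for b in bearings_deg)
    n = len(angles)
    # gaps[i] is the angular distance from angles[i] to the next bearing, wrapping at 360.
    gaps = [(angles[(i + 1) % n] - angles[i]) % 360.0 for i in range(n)]
    split_after = [i for i, g in enumerate(gaps) if g > gap_threshold_deg]
    if not split_after:
        return [angles]  # no large gap, so everything is one direction
    # Start just after a large gap so no cluster is cut in half by the 0/360 wrap.
    start = (split_after[-1] + 1) % n
    clusters: List[List[float]] = [[]]
    for k in range(n):
        i = (start + k) % n
        clusters[-1].append(angles[i])
        if gaps[i] > gap_threshold_deg and k < n - 1:
            clusters.append([])
    return clusters


def circular_mean_deg(angles_deg: List[float]) -> float:
    """Representative heading of a cluster, computed on the unit circle."""
    s = sum(math.sin(math.radians(a)) for a in angles_deg)
    c = sum(math.cos(math.radians(a)) for a in angles_deg)
    return math.degrees(math.atan2(s, c)) % 360.0


clusters = cluster_bearings([350, 5, 10, 90, 100, 200])
print(clusters)  # [[350.0, 5.0, 10.0], [90.0, 100.0], [200.0]]
print([round(circular_mean_deg(c), 1) for c in clusters])
```

The wrap-around handling is the design choice worth noting: 350 and 5 degrees are only 15 degrees apart, so they land in the same category even though they sit at opposite ends of the sorted list.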

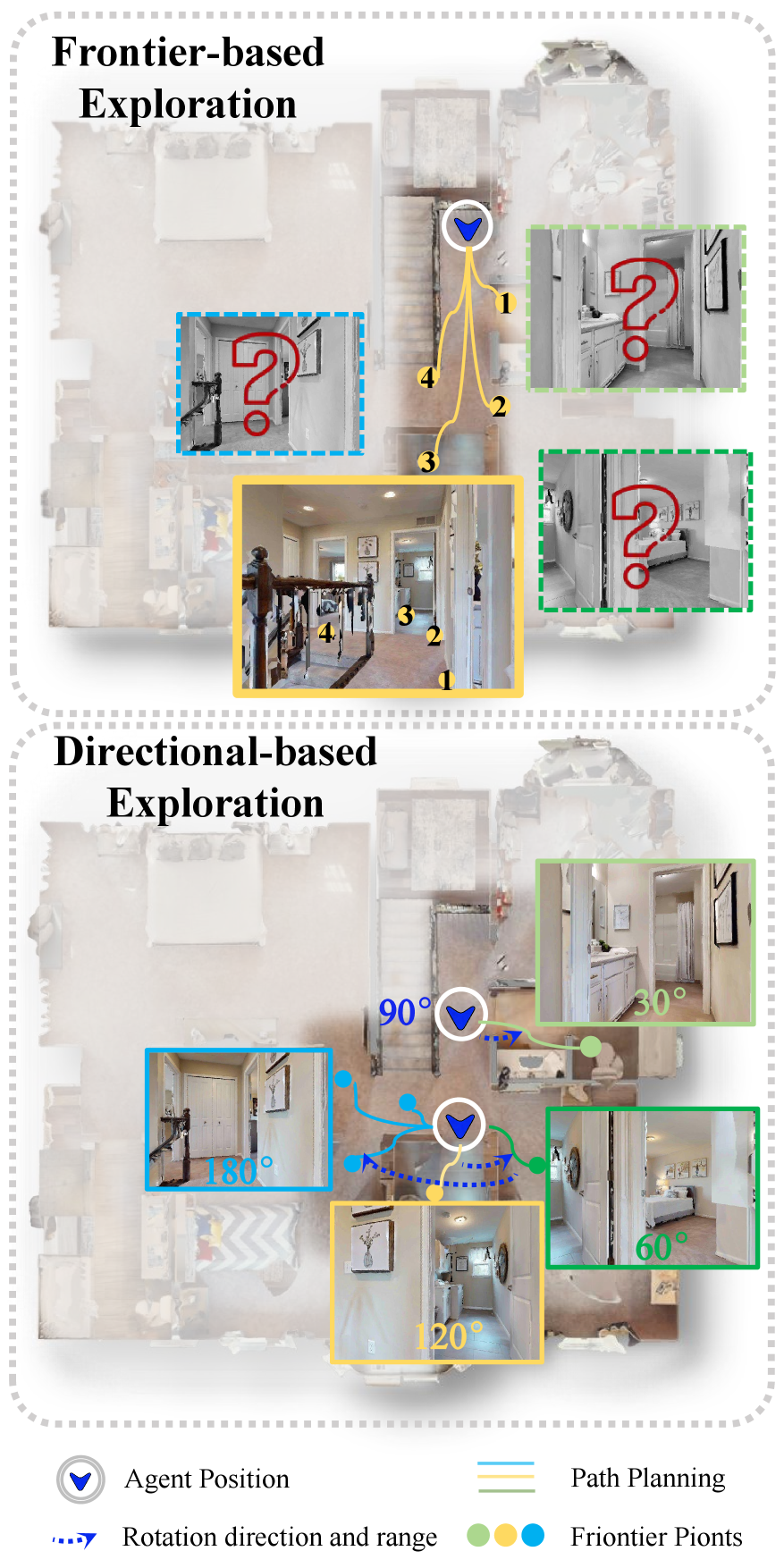

Figure 1: Comparison of exploration paradigms. Frontier-based exploration selects among frontier points without directly observing the unexplored regions beyond them, whereas direction-based exploration actively inspects junction information before making exploration decisions.

The core idea here is "inspect before you commit." The drone doesn't just pick a direction; it actively inspects encountered directions more completely within a forward 240-degree view range. This comprehensive inspection, combined with prompt enrichment and cross-frame verification, improves the reliability of understanding what it's seeing and where it should go. The system maintains representative views for semantic inspection, ensuring robust vision-language grounding.
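Cross-frame verification can be sketched as a simple vote over recent frames. The article does not spell out the paper's exact rule, so everything here is an assumption for illustration: the class name `CrossFrameVerifier`, the window of 5 frames, the 3-hit requirement, and the 0.5 score threshold are all hypothetical. The idea is only that a single confident-looking frame should not be enough to declare the target found.

```python
from collections import deque


class CrossFrameVerifier:
    """Confirm a target only after it is detected in enough recent frames.

    All parameters are hypothetical defaults, not values from the paper.
    """

    def __init__(self, window: int = 5, min_hits: int = 3,
                 score_threshold: float = 0.5) -> None:
        self.min_hits = min_hits
        self.score_threshold = score_threshold
        # deque with maxlen drops the oldest frame automatically.
        self.hits = deque(maxlen=window)

    def update(self, score: float) -> bool:
        """Record one frame's detection score; return True once the target is confirmed."""
        self.hits.append(score >= self.score_threshold)
        return sum(self.hits) >= self.min_hits


verifier = CrossFrameVerifier()
results = [verifier.update(s) for s in [0.9, 0.2, 0.7, 0.1, 0.8]]
print(results)  # [False, False, False, False, True]
```

A sliding window like this trades a little latency for stability: the drone commits to the target only after the detection has survived several viewpoints.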

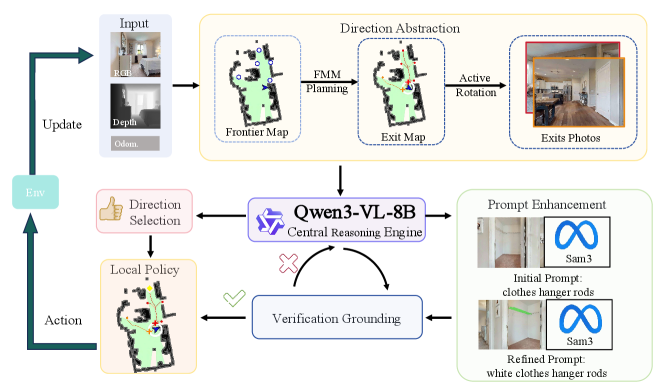

Figure 2: Overview of the proposed closed-loop navigation pipeline. RGB-D observations are converted into persistent exits for direction selection, prompt enhancement, and cross-frame target verification.

This closed-loop pipeline (Figure 2) continuously processes RGB-D observations, converting them into "persistent exits" which are then used for selecting the next direction. This persistent directional reasoning is key. It helps the agent avoid getting stuck in loops or revisiting areas unnecessarily, a common pitfall for current methods.

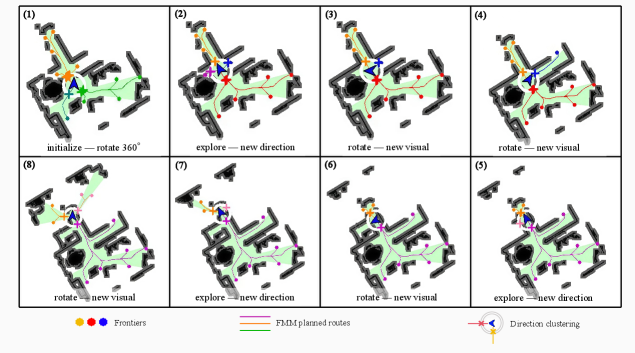

Figure 3: Directional abstraction during a representative exploration episode. As the agent reaches key decision points, local directional bearings are re-clustered. When adjacent angular gaps exceed the 45° threshold, new direction categories are identified. The agent then alternates between inspection and forward exploration.

For example, when a drone enters a hallway with multiple openings, DRIVE-Nav identifies these as distinct directions, then systematically inspects each one to determine the most promising path towards the target. This strategic approach, as shown in Figure 3, drastically cuts down on wasted exploration.

The Numbers Don't Lie

DRIVE-Nav isn't just a theoretical improvement; it delivers tangible gains. Tested on standard benchmarks like HM3D-OVON, HM3Dv2, and MP3D, it shows strong overall performance and consistent efficiency improvements.

- HM3D-OVON: Achieved 50.2% Success Rate (SR) and 32.6% Success weighted by Path Length (SPL). This is a solid improvement over the previous best method, with a 1.9% SR increase and a substantial 5.6% SPL gain. That 5.6% SPL gain means shorter, more efficient paths.

- HM3Dv2 & MP3D: Delivered the best SPL on both these datasets, confirming its efficiency across different environments.

- Real-world deployment: The framework also transferred successfully to a physical humanoid robot, demonstrating its robustness beyond simulation.

These results are significant. Higher SPL means less flight time, less energy consumption, and quicker task completion—all critical for mini drones.
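For readers new to these metrics, here is a minimal sketch of how SR and SPL are commonly computed in embodied navigation benchmarks. It uses the widely used definition of SPL (each successful episode scores the ratio of shortest-path length to the larger of the agent's path and the shortest path, and failures score zero); whether each benchmark's evaluation code matches this exactly is an assumption. The `Episode` fields and the sample numbers are hypothetical, and path lengths are assumed to be positive.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Episode:
    success: bool           # did the agent reach the target?
    shortest_path_m: float  # geodesic shortest path from start to target
    agent_path_m: float     # distance the agent actually travelled


def success_rate(episodes: List[Episode]) -> float:
    """Fraction of episodes that ended in success."""
    return sum(e.success for e in episodes) / len(episodes)


def spl(episodes: List[Episode]) -> float:
    """Success weighted by Path Length, averaged over all episodes."""
    total = 0.0
    for e in episodes:
        if e.success:
            # A perfect path scores 1.0; detours shrink the score toward 0.
            total += e.shortest_path_m / max(e.agent_path_m, e.shortest_path_m)
    return total / len(episodes)


episodes = [
    Episode(True, 10.0, 10.0),   # optimal path, contributes 1.0
    Episode(True, 10.0, 20.0),   # twice as long as needed, contributes 0.5
    Episode(False, 8.0, 30.0),   # failure, contributes 0
    Episode(False, 5.0, 12.0),   # failure, contributes 0
]
print(success_rate(episodes))  # 0.5
print(spl(episodes))           # 0.375
```

In this toy run both methods of counting agree that half the episodes succeeded, but SPL is lower because one success took a detour. That is why an SPL gain signals shorter routes rather than just more finds.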

Practical Intelligence for Your Fleet

So, what does this mean for the mini drone world? This research moves us closer to truly intelligent drones that can perform complex tasks with minimal human intervention. Think about:

- Automated inventory management: A drone could be commanded, "Find the missing item X in warehouse Y," and it would autonomously navigate the complex shelves and aisles to locate it.

- Search and rescue: Instead of pre-mapping, a drone could be sent into a damaged building with a command like "Locate survivors near the collapsed wall in sector Alpha," and intelligently explore to find the target.

- Home assistance/security: A domestic drone could be asked, "Find my keys in the living room" or "Check if the back door is closed."

The ability to process natural language and make intelligent navigation decisions in unknown spaces opens up a new realm of applications, moving beyond mere surveillance or delivery to known points.

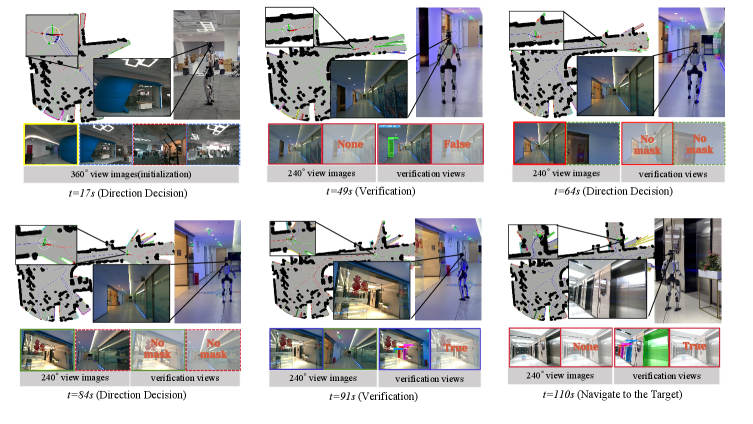

Figure 4: Real-world deployment of DRIVE-Nav for finding an elevator. The sequence shows the full navigation episode, including 360° initialization, successive 240° direction decisions, cross-frame verification, and final approach to the target.

Figure 4 illustrates a real-world scenario where DRIVE-Nav successfully navigates to an elevator. This isn't just a lab demo; it's a demonstration of practical, deployable intelligence.

Still Room to Grow

While DRIVE-Nav is a major leap, it's not without its current limitations. The paper acknowledges that the system still relies on structured directional reasoning. This means environments with highly ambiguous or unstructured paths might still pose challenges where distinct "directions" are harder to define. Also, the computational overhead for the multi-stage inspection and verification, while efficient compared to dense frontier methods, still requires non-trivial processing power. For extremely resource-constrained mini drones, optimizing the inference time for real-time decisions would be crucial. Furthermore, while it transfers to a physical robot, the specific hardware and sensor suite used for a humanoid might differ significantly from a typical mini drone, requiring careful adaptation and optimization of the vision-language models for drone-specific constraints like camera angle, motion blur, and power budget.

Can You Build It?

Replicating DRIVE-Nav as a hobbyist project would be challenging but not impossible for a dedicated builder with a strong background in robotics, computer vision, and machine learning. You'd need a drone platform capable of carrying an RGB-D camera (like Intel RealSense or Azure Kinect), a decent onboard compute unit (e.g., NVIDIA Jetson series or a Raspberry Pi 5 with a dedicated neural compute stick), and sufficient battery life. The core algorithms would need to be implemented, likely leveraging open-source frameworks like ROS for robotics middleware, PyTorch or TensorFlow for the deep learning components, and potentially OpenCV for image processing. The paper's project page provides more details, but it does not explicitly state that a full open-source implementation of DRIVE-Nav is available, so expect significant development effort.

The Broader AI Landscape

DRIVE-Nav doesn't operate in a vacuum. Its success relies on advancements in related fields. For instance, the vision-language understanding at its core benefits from research like "FocusVLA: Focused Visual Utilization for Vision-Language-Action Models." This paper demonstrates how VLA models can be made more efficient by focusing on relevant visual details, directly complementing how DRIVE-Nav interprets commands and environmental cues. Furthermore, teaching a drone to learn these complex navigation policies can be significantly accelerated by approaches like "SOLE-R1: Video-Language Reasoning as the Sole Reward for On-Robot Reinforcement Learning." SOLE-R1 shows how Video-Language Models can act as powerful supervisors, making the learning process for autonomous agents more robust. Finally, once DRIVE-Nav decides where to go, efficiently executing that path is crucial. Papers like "Dynamic Lookahead Distance via Reinforcement Learning-Based Pure Pursuit for Autonomous Racing" offer insights into optimizing path-tracking algorithms, ensuring the drone smoothly and dynamically follows its intelligently planned route. These interconnected areas are all pushing the boundaries of what autonomous systems can achieve.

This isn't just about finding objects; it's about building a drone that truly understands its mission, even in uncharted territory.

Paper Details

Title: DRIVE-Nav: Directional Reasoning, Inspection, and Verification for Efficient Open-Vocabulary Navigation

Authors: Maoguo Gao, Zejun Zhu, Zhiming Sun, Zhengwei Ma, Longze Yuan, Zhongjing Ma, Zhigang Gao, Jinhui Zhang, Suli Zou

Published: Preprint (March 2026)

arXiv: 2603.28691

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.