M^3: Real-Time, Photorealistic 3D Maps from a Single Drone Camera

A new SLAM technique called M^3 leverages Gaussian Splatting to create incredibly detailed, photorealistic 3D models of environments using only a monocular camera, significantly boosting drone autonomy and mapping precision.

TL;DR: M^3 enhances monocular SLAM with Gaussian Splatting, improving drone pose accuracy and producing photorealistic 3D maps from a single camera. It cuts ATE RMSE by 64.3% relative to VGGT-SLAM 2.0 and gains 2.11 dB PSNR over ARTDECO on ScanNet++, making advanced drone perception both more precise and more attainable.

Your Drone's New Reality: Instant 3D Worlds

For anyone passionate about drones—be it hobbyists, builders, or engineers—the vision of a drone that doesn't just fly, but genuinely understands its surroundings in vivid, photorealistic detail, has always been compelling. We're not talking about basic obstacle avoidance here; we mean creating an instant, high-fidelity digital replica of the world, all captured by the simplest sensor: a single camera. That ambitious goal just took a massive leap forward with the introduction of M^3.

The Challenge: Pinpoint Precision from a Single Eye

Building a detailed 3D environment in real-time from just one uncalibrated camera feed is incredibly difficult. While today's multi-view foundation models are powerful, they often struggle to provide the geometric precision essential for reliable SLAM (Simultaneous Localization and Mapping). These models typically generate pixel-level matches that just aren't sharp enough for the rigorous geometric calculations needed. The result? Less accurate drone positioning and fuzzy, unreliable 3D maps. For smaller drones, where every gram, watt, and dollar counts, a single camera is the ideal sensor. But until now, achieving high fidelity with it has been a major bottleneck. We've needed pinpoint accuracy without the bulk of multiple cameras or expensive LiDAR.

How M^3 Crafts Superior 3D Worlds

This is precisely where M^3 makes its mark. The core breakthrough involves enhancing existing multi-view foundation models with a specialized Matching head. This Matching head is engineered to generate highly detailed, dense correspondences—the kind of precise data absolutely vital for accurate geometric optimization. By weaving this into a robust Monocular Gaussian Splatting SLAM framework, M^3 unlocks unparalleled levels of detail and accuracy. For the uninitiated, Gaussian Splatting is a relatively new, game-changing technique that models a 3D scene as a collection of 3D Gaussians. Think of these as tiny, pliable, semi-transparent ellipsoids that are meticulously optimized to render views from any angle with stunning photorealism.
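To make the ellipsoid picture concrete, here is a minimal Python sketch of how depth-sorted Gaussians could be blended into a single pixel with the standard front-to-back alpha compositing rule used in Gaussian Splatting. It is not M^3's renderer: the function names, the dictionary layout of each splat, and the sample values are assumptions made for illustration, and the Gaussians are treated as already projected into 2D image space.

```python
import math


def gaussian_alpha(pixel, mean, cov, opacity):
    """Opacity contributed by one projected 2D Gaussian at a pixel."""
    dx, dy = pixel[0] - mean[0], pixel[1] - mean[1]
    (a, b), (c, d) = cov
    det = a * d - b * c
    # Squared Mahalanobis distance: offset^T * inverse(cov) * offset.
    dist_sq = (d * dx * dx - (b + c) * dx * dy + a * dy * dy) / det
    return opacity * math.exp(-0.5 * dist_sq)


def composite_pixel(pixel, splats):
    """Blend splats front to back: C = sum(c_i * alpha_i * T_i)."""
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # fraction of light not yet absorbed
    for splat in sorted(splats, key=lambda s: s["depth"]):
        alpha = gaussian_alpha(pixel, splat["mean"], splat["cov"], splat["opacity"])
        weight = alpha * transmittance
        color = [acc + weight * ch for acc, ch in zip(color, splat["color"])]
        transmittance *= 1.0 - alpha
    return color, transmittance


# Hypothetical scene: an opaque blue splat behind a half-transparent red one.
identity = ((1.0, 0.0), (0.0, 1.0))
splats = [
    {"mean": (0.0, 0.0), "cov": identity, "opacity": 1.0,
     "color": (0.0, 0.0, 1.0), "depth": 5.0},
    {"mean": (0.0, 0.0), "cov": identity, "opacity": 0.5,
     "color": (1.0, 0.0, 0.0), "depth": 2.0},
]
color, remaining = composite_pixel((0.0, 0.0), splats)
print(color, remaining)
```

At the shared center, the red splat contributes half of the pixel and the blue one fills the rest. In a real system, each Gaussian's position, shape, opacity, and color are the parameters being optimized against the camera images.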

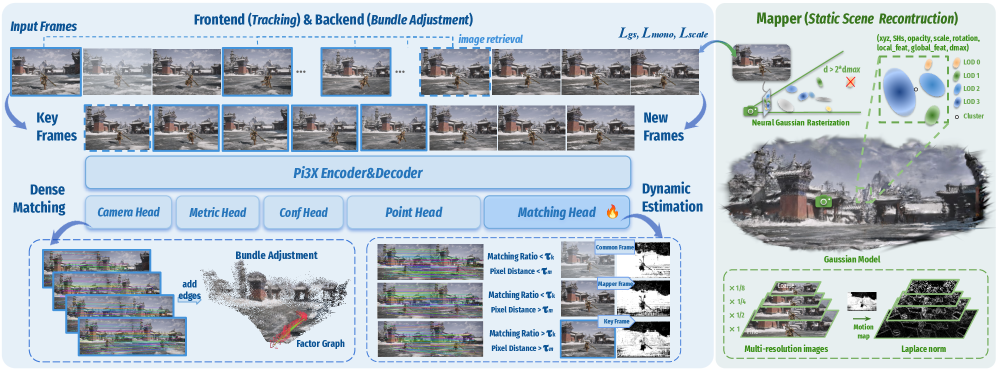

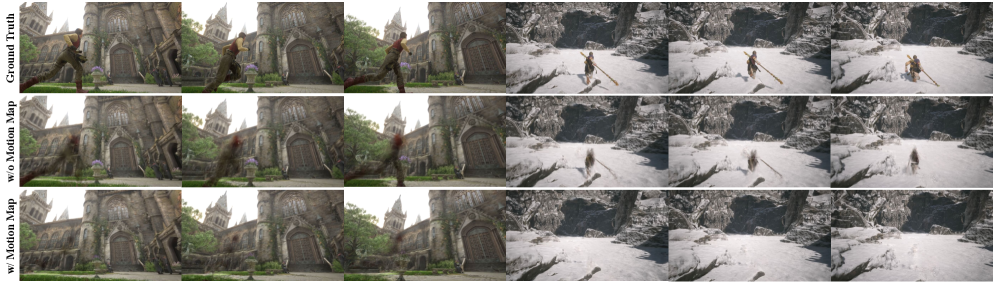

But M^3 doesn't stop at superior matching. It also boosts tracking stability with two ingenious mechanisms: dynamic area suppression and cross-inference intrinsic alignment. Dynamic area suppression, illustrated in Figure 5, is especially critical for drones navigating real-world settings with moving elements. It smartly identifies and filters out these dynamic regions from the static scene reconstruction, effectively preventing 'ghosting' and structural glitches in the final map. Figure 2 provides a comprehensive look at the M^3 pipeline, showcasing its joint tracking and global optimization for pose estimation, alongside its scene reconstruction mapper.
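The idea behind dynamic area suppression can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the article does not say how M^3 computes its Motion Map, so the per-pixel motion scores, the 0.5 threshold, and both function names below are hypothetical. The sketch shows only the masking step, where pixels flagged as moving are left out of the photometric error that drives the static reconstruction.

```python
def static_mask(motion_map, threshold=0.5):
    """True where a pixel is treated as static (motion score below threshold)."""
    return [[score < threshold for score in row] for row in motion_map]


def masked_photometric_error(rendered, observed, motion_map, threshold=0.5):
    """Mean absolute difference over static pixels only."""
    mask = static_mask(motion_map, threshold)
    total, count = 0.0, 0
    for r_row, o_row, m_row in zip(rendered, observed, mask):
        for r, o, keep in zip(r_row, o_row, m_row):
            if keep:
                total += abs(r - o)
                count += 1
    return total / count if count else 0.0


# Hypothetical 2x2 grayscale frame: a moving object covers the top-right pixel.
rendered = [[0.2, 0.2], [0.2, 0.2]]
observed = [[0.2, 0.9], [0.2, 0.2]]
motion = [[0.1, 0.9], [0.0, 0.2]]

with_mask = masked_photometric_error(rendered, observed, motion)
without_mask = masked_photometric_error(rendered, observed, motion, threshold=2.0)
print(with_mask, without_mask)
```

With the mask applied, the moving pixel no longer pulls the map toward a transient object, which is the mechanism that would prevent ghosting in the static reconstruction.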

Figure 2: The M3 Pipeline. The framework consists of joint tracking and global optimization for pose estimation and a mapper for scene reconstruction. For monocular sequences, Pi3X processes retrieved historical keyframes and new frames in one inference to facilitate factor graph construction and keyframe selection. Following the Neural Gaussian and LOD architecture of ARTDECO [artdeco], Gaussians are initialized via Laplacian norm and optimized jointly with camera poses.

Figure 5: Effect of the Motion Map in dynamic environments. By detecting dynamic regions, the Motion Map enables moving objects to be excluded from the static reconstruction, thereby improving structural consistency in dynamic scenes.

The Proof is in the Pixels: Unpacking M^3's Performance

The numbers don't lie: M^3 truly delivers. Comprehensive tests across various indoor and outdoor environments confirm its leading accuracy in both estimating a drone's position and reconstructing the scene. This isn't just a small step forward; it's a monumental leap:

- Pinpoint Pose Estimation: M^3 slashes the ATE RMSE (Absolute Trajectory Error Root Mean Square Error) by an impressive 64.3% when stacked against VGGT-SLAM 2.0. In plain terms, your drone will know its exact location with unprecedented precision.

- Stunning Scene Reconstruction: On the demanding ScanNet++ dataset, M^3 outshines ARTDECO by 2.11 dB in PSNR (Peak Signal-to-Noise Ratio). For those unfamiliar, PSNR is a standard measure of image quality, and a 2.11 dB jump signifies a visibly clearer, more accurate reconstruction.
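For readers who want to see what these two metrics measure, here is a small Python sketch using only the standard library. It assumes the estimated trajectory has already been aligned to the ground truth (monocular evaluations normally perform an alignment step first, which is omitted here), and the function names and sample trajectories are illustrative rather than taken from the paper.

```python
import math


def ate_rmse(estimated, ground_truth):
    """Root mean square of per-frame position errors (trajectories pre-aligned)."""
    squared = [
        sum((e - g) ** 2 for e, g in zip(est, gt))
        for est, gt in zip(estimated, ground_truth)
    ]
    return math.sqrt(sum(squared) / len(squared))


def psnr(mse, peak=1.0):
    """Peak Signal-to-Noise Ratio in dB for a given mean squared error."""
    return 10.0 * math.log10(peak ** 2 / mse)


def mse_ratio_for_psnr_gain(gain_db):
    """Factor by which MSE shrinks when PSNR rises by gain_db."""
    return 10.0 ** (-gain_db / 10.0)


# Hypothetical trajectories: every estimated position is off by 5 units.
estimated = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
ground_truth = [(3.0, 4.0, 0.0), (4.0, 4.0, 0.0)]
print(ate_rmse(estimated, ground_truth))

# What the reported 2.11 dB gain implies about mean squared error.
print(mse_ratio_for_psnr_gain(2.11))
```

Because PSNR is logarithmic, a 2.11 dB gain corresponds to the mean squared rendering error falling to roughly 0.615 of its previous value, or about 38% lower.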

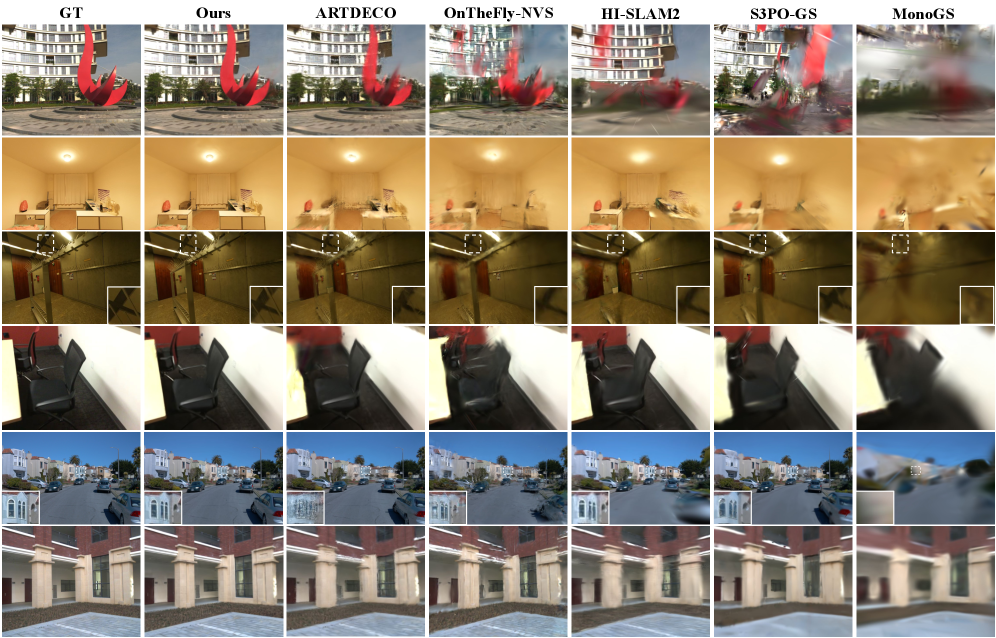

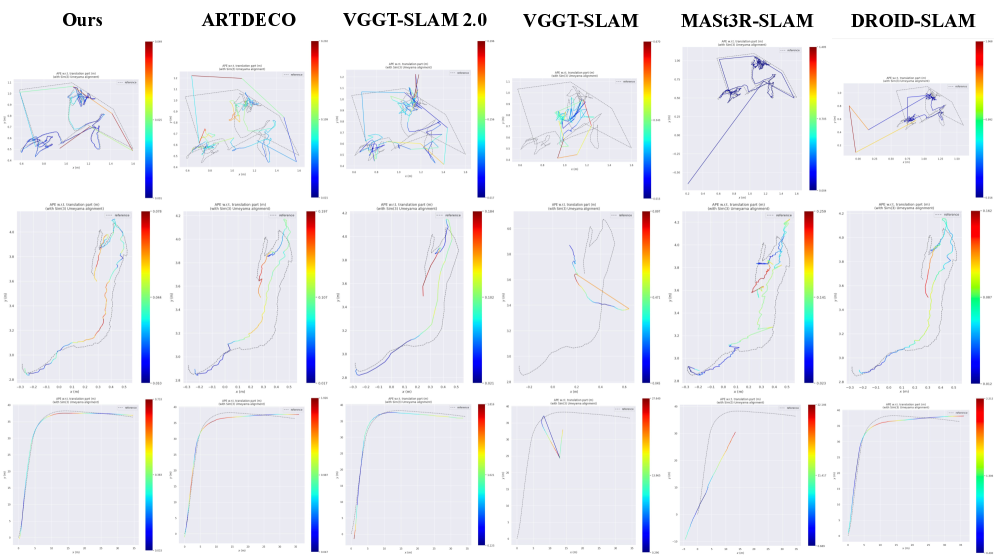

Visual comparisons, like those in Figure 3, further highlight these advancements, demonstrating M^3's ability to maintain high-fidelity rendering even in tough conditions. Moreover, as seen in Figure 6, its generated trajectories align remarkably well with ground truth, showing no significant drift or inconsistencies.

Figure 3: Qualitative comparisons of rendering against on-the-fly reconstruction baselines across diverse datasets. M3 preserves high-fidelity rendering details in challenging environments, particularly in regions highlighted by white rectangles.

Figure 6: Qualitative comparisons of camera trajectories against on-the-fly reconstruction baselines across diverse datasets. Our visualized trajectories align most closely with the ground truth (GT), exhibiting no significant divergence or inconsistency.

Why This Matters: A New Era for Drone Autonomy

The potential for drone autonomy is immense. M^3 isn't just an academic achievement; it's a gateway to practical applications like:

- Precision Inspections: Drones could conduct incredibly detailed structural assessments of critical infrastructure—think bridges, power lines, or wind turbines—creating photorealistic 3D models on the fly. This means identifying anomalies with previously impossible clarity.

- Rapid Search and Rescue: Imagine drones swiftly mapping disaster areas with high fidelity, providing emergency teams with immediate, actionable 3D intelligence of collapsed structures or treacherous terrains.

- Seamless AR/VR Integration: Drones could capture entire environments in real-time, enabling immersive augmented reality overlays or the instant generation of virtual reality training simulations.

- Robust Navigation: For areas where GPS signals fail, such as indoors or in dense urban canyons, this level of precise monocular SLAM translates to far more reliable autonomous navigation, lessening the need for bulky external sensors.

Crucially, this technology makes sophisticated spatial understanding achievable for lighter, more energy-efficient drones, unlocking innovations that once demanded heavier, multi-sensor setups.

The Road Ahead: Current Hurdles and Future Needs

While M^3 shows immense promise, it's vital to acknowledge its current limitations. As with any cutting-edge research, there are challenges to address:

- Heavy Computational Load: Gaussian Splatting is famously demanding on computing resources. Even with M^3's efficiency optimizations, achieving this level of real-time reconstruction on a small drone's edge AI hardware will still necessitate powerful, specialized processors or smart edge offloading techniques.

- Monocular Scale Ambiguity: While SLAM aids significantly, monocular vision alone inherently struggles to determine absolute scale without additional external information. The paper primarily focuses on relative accuracy and reconstruction quality, but incorporating external scale cues (like an altimeter reading or known object dimensions) would be essential for many real-world deployments.

- Handling Complex Dynamics: While dynamic area suppression effectively helps with static scene reconstruction, fully comprehending and reconstructing moving objects within the scene in real-time presents a more profound challenge.

- Environmental Sensitivity: Performance can still be impacted by difficult lighting conditions (e.g., intense glare, very dim light) or environments lacking distinct features, though the Matching head and foundation models likely provide some degree of resilience.
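The scale ambiguity point lends itself to a quick worked example. The Python sketch below shows one simple way an external cue could fix the scale: a least-squares fit between the heights in the SLAM trajectory and altimeter readings taken at the same moments. This is not something the paper describes. The function names and sample values are hypothetical, and the sketch assumes both height series are measured from the same zero point (for example, the takeoff position) along the same vertical axis.

```python
def estimate_scale(slam_heights, altimeter_heights):
    """Least-squares scale k minimizing sum((altimeter - k * slam) ** 2)."""
    numerator = sum(s * a for s, a in zip(slam_heights, altimeter_heights))
    denominator = sum(s * s for s in slam_heights)
    if denominator == 0.0:
        raise ValueError("SLAM heights are all zero; scale is unobservable")
    return numerator / denominator


def rescale_trajectory(positions, scale):
    """Convert unitless SLAM positions to metric units."""
    return [tuple(scale * coord for coord in pos) for pos in positions]


# Hypothetical climb: SLAM heights are unitless, altimeter readings are in meters.
slam_heights = [0.5, 1.0, 1.5]
altimeter_heights = [1.0, 2.0, 3.0]
scale = estimate_scale(slam_heights, altimeter_heights)

trajectory = [(0.0, 0.0, 0.5), (0.2, 0.0, 1.0), (0.4, 0.1, 1.5)]
print(scale, rescale_trajectory(trajectory, scale))
```

A single scalar is enough here because monocular SLAM recovers the shape of the trajectory and map up to one unknown global scale. The fit needs some vertical motion to work, which is why the zero-height case raises an error.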

DIY Dreams? Not Quite Yet for the Garage Workbench

For the typical drone hobbyist, attempting to replicate M^3 at home isn't feasible right now. This technology hinges on advanced multi-view foundation models and Gaussian Splatting optimization, which typically demand high-end GPUs for both training and real-time operation. The underlying software stack is intricate, likely involving custom neural network implementations and complex SLAM algorithms. While Gaussian Splatting is quickly gaining open-source traction, integrating it into a complete, robust SLAM system like M^3 still requires substantial expertise and computational power far beyond what most enthusiasts have at their disposal. Consider this a significant research breakthrough that will, over time, filter down into commercial products and more user-friendly open-source frameworks.

M^3 in Context: The Broader Landscape of Drone Perception

M^3 isn't an isolated achievement; it's a key piece in the larger puzzle of advanced drone autonomy. While M^3 excels at crafting a breathtakingly detailed overall 3D world, other research addresses equally crucial aspects of drone perception. For example, 'MessyKitchens: Contact-rich object-level 3D scene reconstruction' by Ansari et al. zeroes in on helping drones understand individual objects within complex, cluttered environments using just one camera—a critical step for drones that need to interact with their surroundings. Taking this further, 'SegviGen: Repurposing 3D Generative Model for Part Segmentation' by Li et al. explores how drones could grasp the internal structure of objects by segmenting them into their constituent parts, which is essential for intricate inspection or manipulation. Given that M^3's real-time, high-fidelity 3D mapping requires substantial computational muscle, 'Deep Reinforcement Learning-driven Edge Offloading for Latency-constrained XR pipelines' by Saha & Debroy offers a vital practical solution. This work shows how drones can tap into remote computational resources, allowing them to run advanced AI like M^3 without compromising real-time performance or battery life. And finally, to effectively train and rigorously test drones capable of M^3's sophisticated spatial understanding, realistic simulation environments are indispensable. 'ManiTwin: Scaling Data-Generation-Ready Digital Object Dataset to 100K' by Wang et al. lays the groundwork for scaling digital object datasets, paving the way for richer, more diverse simulated worlds where drones can hone their navigation and interaction abilities.

The Future is Clearer, and More Detailed

M^3 significantly expands the capabilities of what a single camera can achieve for drone perception. This isn't merely about generating superior maps; it's about empowering a new generation of drones to genuinely see, comprehend, and engage with their environment in ways that were once confined to science fiction.

Paper Details

Title: M^3: Dense Matching Meets Multi-View Foundation Models for Monocular Gaussian Splatting SLAM

Authors: Kerui Ren, Guanghao Li, Changjian Jiang, Yingxiang Xu, Tao Lu, Linning Xu, Junting Dong, Jiangmiao Pang, Mulin Yu, Bo Dai

Published: Preprint (arXiv)

arXiv: 2603.16844

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.