Smart Drone Swarms: Multi-Agent AI for Faster, Smarter Operations

Large models with short outputs and multi-agent frameworks could transform drone swarms by improving decision-making speed and efficiency.

TL;DR: Large AI models can be faster and just as effective as smaller ones when output tokens are minimized. A new multi-agent inference framework combines large and small models for smarter, faster reasoning.

AI Models That Think Fast and Act Smart

For drone engineers and hobbyists, the performance of autonomous systems depends on fast and accurate decision-making. We're exploring how large AI models, when paired with multi-agent setups, can boost efficiency without sacrificing intelligence. Specifically, the idea is to make drones smarter and faster by optimizing how reasoning is processed and shared across models.

The Bottleneck: Token Overload

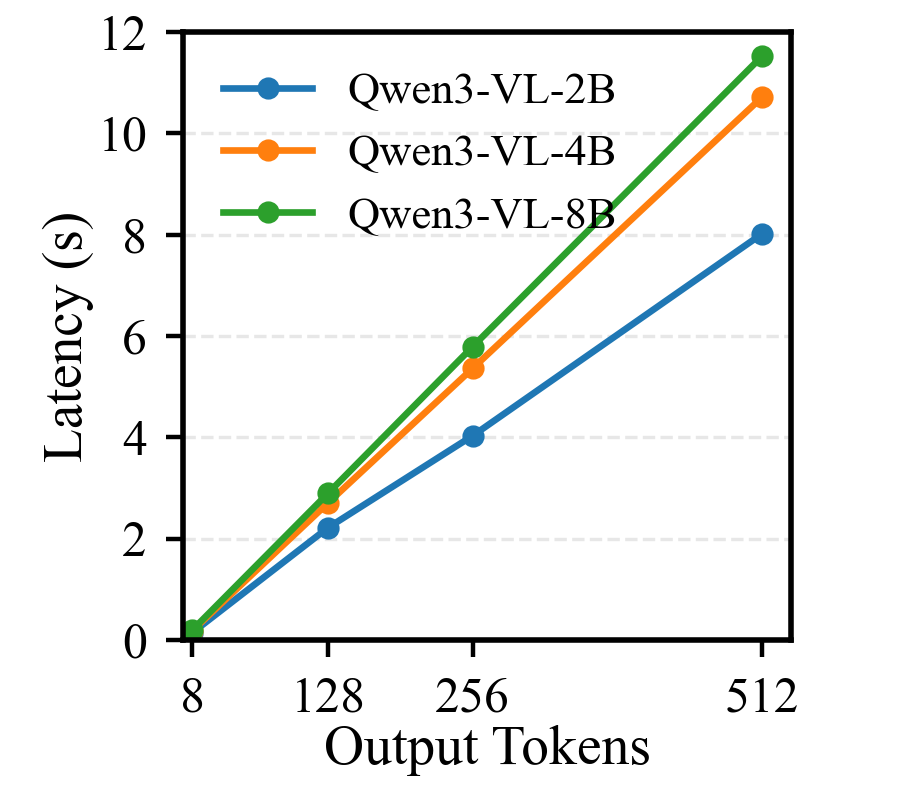

Autonomous drones rely on AI models for tasks like navigation, object detection, and swarm coordination, often using vision-language models (VLMs). But here’s the problem: these models generate outputs sequentially, token by token, in an autoregressive process. The longer the output, the slower the response, which is a critical limitation for real-time drone operations. Smaller models produce each token faster, but they often lack the nuanced reasoning needed for complex tasks.
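To make the bottleneck concrete, here is a rough back-of-the-envelope latency model: a fixed cost to process the input plus a per-token cost for each output token. The function name and all timing numbers below are hypothetical, picked only to illustrate the shape of the trade-off, and are not taken from the paper.

```python
def decode_latency_ms(prefill_ms: float, per_token_ms: float, output_tokens: int) -> float:
    """Estimate latency as input-processing time plus sequential per-token decode time."""
    return prefill_ms + per_token_ms * output_tokens


# Hypothetical timings (assumptions for illustration, not measurements).
large_short = decode_latency_ms(prefill_ms=120.0, per_token_ms=40.0, output_tokens=5)
small_long = decode_latency_ms(prefill_ms=40.0, per_token_ms=10.0, output_tokens=200)

print(f"large model, 5 output tokens:   {large_short:.0f} ms")
print(f"small model, 200 output tokens: {small_long:.0f} ms")
```

Under these assumed numbers, the large model with a short answer finishes well before the small model with a long one, even though each of its tokens costs four times as much.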

A Smarter Approach: Combining Models

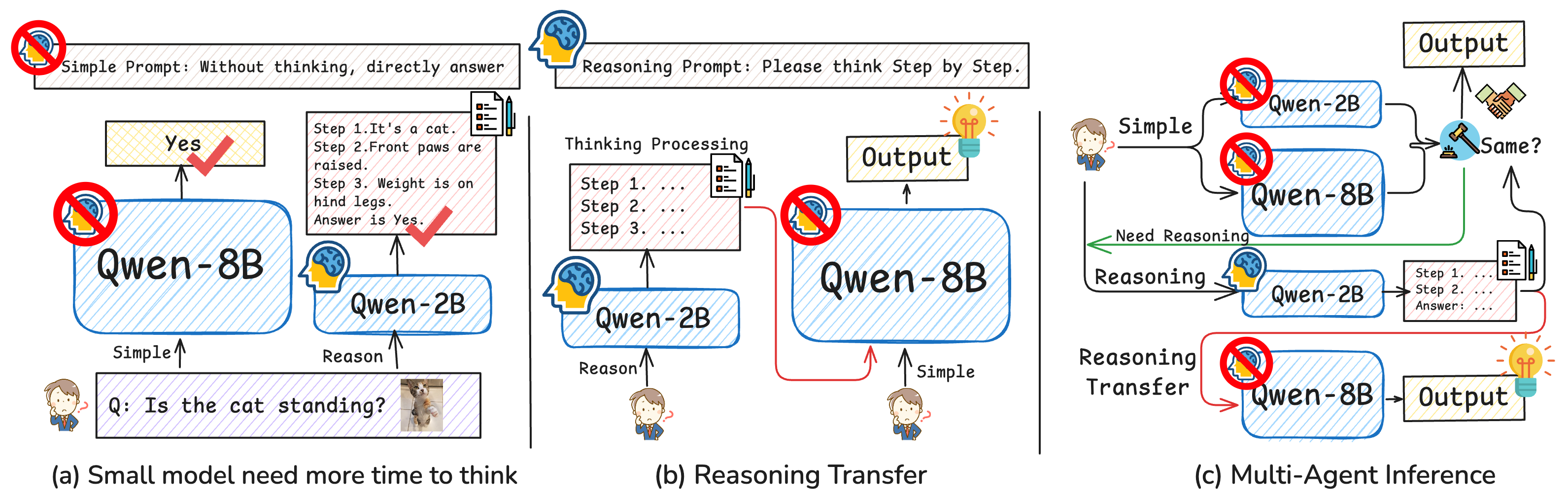

The authors propose combining large and small models in a multi-agent framework. Large models generate concise, high-quality outputs, saving time without compromising performance. Meanwhile, small models handle reasoning for situations where additional information is needed. When necessary, reasoning tokens generated by the smaller model are transferred to the larger model for enhanced decision-making.
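The flow described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the `Answer` type, the confidence score, the `threshold` value, and the stub models are all assumptions made for the example, since this summary does not specify how the framework decides when extra reasoning is needed.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Answer:
    text: str
    confidence: float  # assumed self-reported score in [0, 1]


# Hypothetical interfaces: any callables with these shapes will work.
LargeModel = Callable[[str, Optional[str]], Answer]  # (query, reasoning) -> short answer
SmallModel = Callable[[str], str]                    # query -> reasoning text


def answer_query(query: str, large: LargeModel, small: SmallModel,
                 threshold: float = 0.8) -> Answer:
    """Ask the large model for a short answer; fall back to small-model reasoning if unsure."""
    first = large(query, None)
    if first.confidence >= threshold:
        return first  # the concise answer is good enough, no reasoning tokens generated
    reasoning = small(query)        # small model produces the longer reasoning text
    return large(query, reasoning)  # large model answers again with the reasoning attached


# Stub models standing in for real VLM calls.
def stub_large(query: str, reasoning: Optional[str] = None) -> Answer:
    if reasoning is None:
        return Answer("unsure", 0.4)
    return Answer("turn left", 0.9)


def stub_small(query: str) -> str:
    return "Obstacle ahead on the right; the left corridor is clear."


result = answer_query("Which way should the drone turn?", stub_large, stub_small)
print(result.text)
```

The point of the design is that the expensive, long generation only happens on the queries that need it, and it happens on the cheaper model.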

Here’s a look at the key findings:

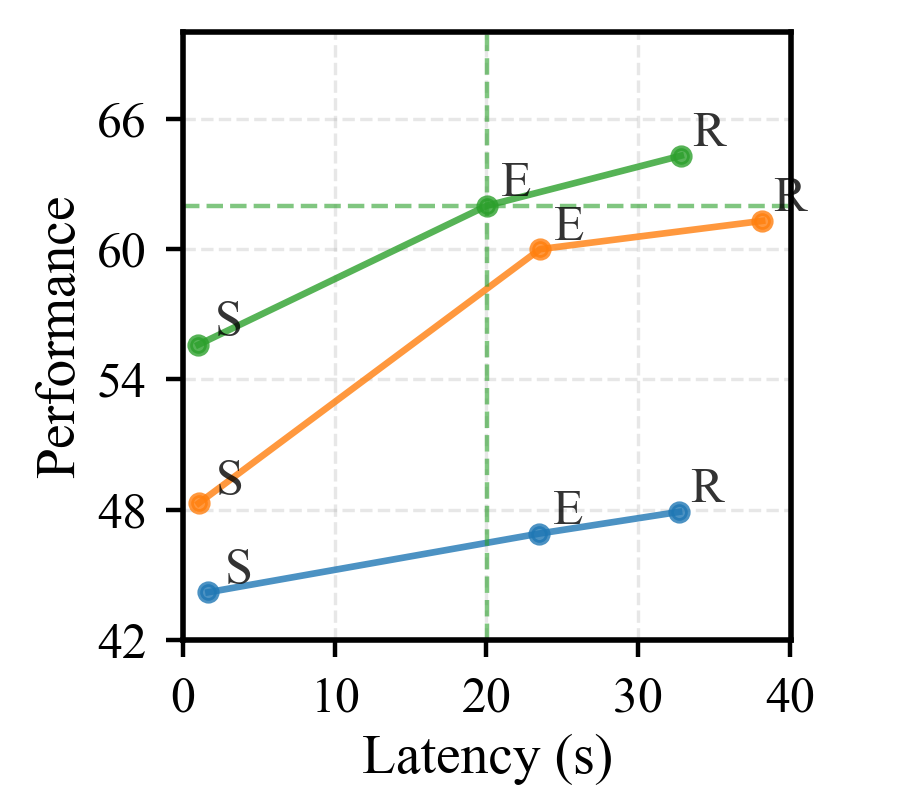

Figure: Large models with shorter outputs outperform smaller models with long outputs in terms of latency.

Figure: Illustration of the proposed multi-agent inference framework, showing how reasoning tokens are shared and verified between models.

Performance in Practice

This isn't just a theoretical concept; the researchers put their approach to the test on real-world benchmarks. Here are the key results:

-

On the

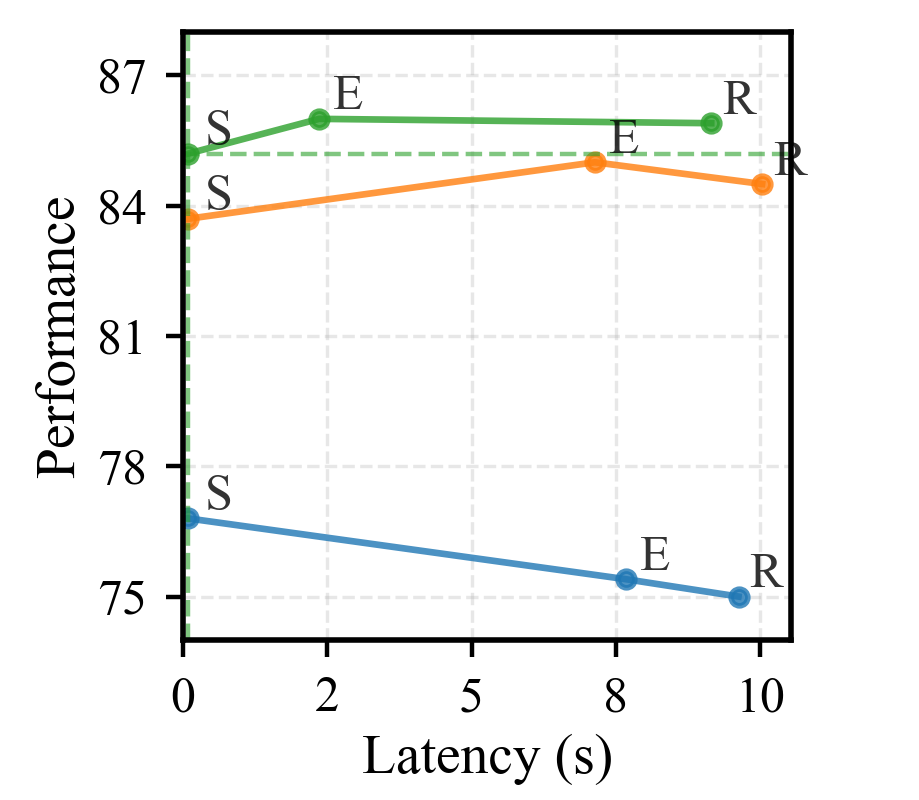

MMBenchbenchmark, the multi-agent system matched large models’ accuracy while cutting latency by nearly 40%. Figure: Performance on the MMBench benchmark, showing latency reduction.

Figure: Performance on the MMBench benchmark, showing latency reduction. -

In

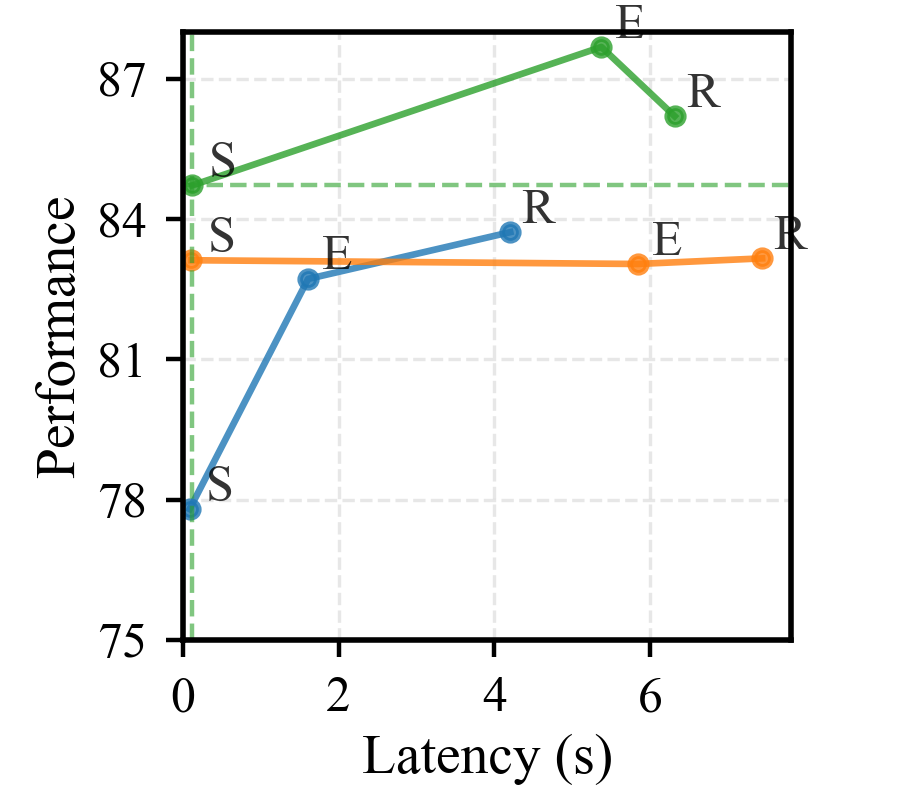

ChartQAtasks, reasoning token reuse enabled 90% of the large model’s performance but required fewer model calls, saving computational costs. Figure: Performance on ChartQA tasks, highlighting efficiency gains.

Figure: Performance on ChartQA tasks, highlighting efficiency gains. -

On

MMMU, mutual verification between agents reduced unnecessary computation. Figure: Performance on MMMU, demonstrating reduced computation.

Figure: Performance on MMMU, demonstrating reduced computation.

Why This Matters for Drone Swarms

Drone swarms depend on speed and coordination, especially in dynamic environments. This framework could optimize drone swarm operations by enabling:

- Real-time swarm intelligence: Large models can quickly deliver concise instructions to individual drones, while smaller models refine reasoning for unexpected scenarios.

- Energy efficiency: Generating fewer tokens reduces latency and power consumption, a win for battery-operated drones.

- Enhanced coordination: Sharing reasoning tokens across drones could support smarter decision-making without needing centralized computation.

Reality Check: What’s Missing

No paper is perfect, and this one has its gaps:

- Hardware constraints: The approach assumes robust on-device processing capabilities, which might be a stretch for smaller drones with limited hardware.

- Scalability: Multi-agent frameworks need careful tuning to avoid bottlenecks when scaled to large swarms.

- Complex scenarios: The paper doesn’t address edge cases like adversarial environments where models might struggle.

- Communication overhead: Transferring reasoning tokens between models adds a layer of complexity, especially for drones relying on low-bandwidth networks.
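For a feel of the communication overhead point, a rough estimate of the time needed to ship reasoning tokens over a slow link can be sketched as follows. The payload size per token and the link speed are assumptions for illustration only, and the estimate ignores protocol overhead, retransmissions, and compression.

```python
def transfer_time_s(num_tokens: int, bytes_per_token: float, link_kbps: float) -> float:
    """Estimate seconds needed to send reasoning tokens as text over a link of given speed."""
    payload_bits = num_tokens * bytes_per_token * 8
    return payload_bits / (link_kbps * 1000)


# Hypothetical case: 200 reasoning tokens, ~4 bytes each, over a 50 kbit/s radio link.
t = transfer_time_s(num_tokens=200, bytes_per_token=4, link_kbps=50)
print(f"estimated transfer time: {t * 1000:.0f} ms")
```

Even under these simplified assumptions, the transfer adds a delay on the order of a hundred milliseconds, which has to be weighed against the latency saved by generating fewer tokens.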

Can You Build This at Home?

If you’re a hobbyist looking to implement this, here’s the deal:

- Hardware: You’ll need drones equipped with edge AI hardware like NVIDIA Jetson modules or Google Coral accelerators.

- Software: Frameworks like PyTorch or TensorFlow for model deployment, and possibly a multi-agent coordination tool.

- Resources: The paper doesn’t provide open-source implementations, so you’d need to re-create the system from scratch based on their descriptions.

Related Innovations

This research ties into broader trends in AI and drone technology. For example:

- The Vero paper explores visual reasoning across diverse tasks, which is crucial for drones interacting with dynamic environments.

- ClickAIXR highlights on-device multimodal interaction, aligning with the need for edge processing in drones.

- Finally, LVLM Hallucinations tackles AI reliability, a major concern for autonomous systems relying on VLMs.

The Path Forward for Drone AI

The future of drone swarms isn’t just about hardware upgrades; it’s about optimizing intelligence. Multi-agent inference with large models could be the key to smarter, faster, and more efficient drones. The question is: how quickly can we move this from research labs to the skies?

Paper Details

Title: Rethinking Model Efficiency: Multi-Agent Inference with Large Models

Authors: Sixun Dong, Juhua Hu, Steven Li, Wei Wen, Qi Qian

Published: April 2026

arXiv: 2604.04929 | PDF

Written by Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.