Smarter Drone Vision: Less Memory, Better Real-Time Perception

A new paper introduces SimpleStream, a surprising baseline for real-time video understanding. It shows that feeding a VLM only the most recent frames often outperforms complex memory systems, offering efficiency benefits for drone AI.

TL;DR: Forget complex memory systems for streaming video. A new paper demonstrates that simply feeding an off-the-shelf Vision-Language Model (VLM) the most recent 4-16 frames (SimpleStream) often matches or surpasses the performance of much more intricate, memory-intensive approaches, delivering better real-time perception. This means more accessible, efficient AI vision for drones.

For drone enthusiasts, builders, and engineers, the promise of truly autonomous flight often hinges on sophisticated AI vision. We've been told that advanced perception requires increasingly complex neural networks, especially when dealing with continuous video streams. But what if much of that complexity is unnecessary, even detrimental? A recent paper by Shen, Tian, Yang, and Liu suggests we've been overcomplicating things, proposing a surprisingly simple method that could reshape how our drones 'see' the world in real-time.

The Overloaded Brain: Why Drone AI Gets Bogged Down

Current approaches to streaming video understanding for AI agents – including those on your drone – often get bogged down in intricate memory mechanisms. These systems are designed to recall events from minutes or even hours ago, constantly trying to compress and retrieve historical data. While the intent is noble, this complexity comes with significant downsides:

- Computational Cost: High processing power needed, translating to heavier, hotter, and more expensive onboard hardware.

- Memory Footprint: Large RAM requirements, pushing against the strict weight and power budgets of mini drones.

- Real-time Performance: The overhead of managing vast historical contexts can actually slow down the perception of immediate events, leading to poorer real-time responsiveness.

- Development Complexity: More moving parts make a system harder to debug and optimize.

We've been assuming more memory always means better performance, but this paper challenges that directly.

The Elegant Simplicity of SimpleStream

The core idea, dubbed SimpleStream, is almost embarrassingly straightforward: Instead of building elaborate memory modules that try to summarize or compress long histories, you simply feed an off-the-shelf Vision-Language Model (VLM) a small, fixed window of the most recent video frames. That's it.
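The recent-frame window described above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' code: `RecentFrameWindow`, `answer_query`, and `fake_vlm` are hypothetical names, and the `vlm` argument stands in for whatever off-the-shelf model you actually call.

```python
from collections import deque
from typing import Any, Callable, Deque, List


class RecentFrameWindow:
    """Keeps only the N most recent frames; older frames are dropped."""

    def __init__(self, num_frames: int = 4) -> None:
        if num_frames < 1:
            raise ValueError("num_frames must be at least 1")
        # A deque with maxlen evicts the oldest item automatically on append.
        self._frames: Deque[Any] = deque(maxlen=num_frames)

    def push(self, frame: Any) -> None:
        self._frames.append(frame)

    def frames(self) -> List[Any]:
        """Return the buffered frames in chronological order, oldest first."""
        return list(self._frames)


def answer_query(
    window: RecentFrameWindow,
    question: str,
    vlm: Callable[[List[Any], str], str],
) -> str:
    # `vlm` is a hypothetical callable: (frames, question) -> answer text.
    return vlm(window.frames(), question)


def fake_vlm(frames: List[Any], question: str) -> str:
    # Stand-in for a real model so the sketch runs without any dependencies.
    return f"saw {len(frames)} frames, latest={frames[-1]}"


window = RecentFrameWindow(num_frames=4)
for frame_id in range(10):  # integers stand in for decoded video frames
    window.push(frame_id)

print(answer_query(window, "What is ahead?", fake_vlm))
```

There is no summarization, retrieval, or compression step here: the only state is the buffer itself, which never grows beyond `num_frames` entries.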

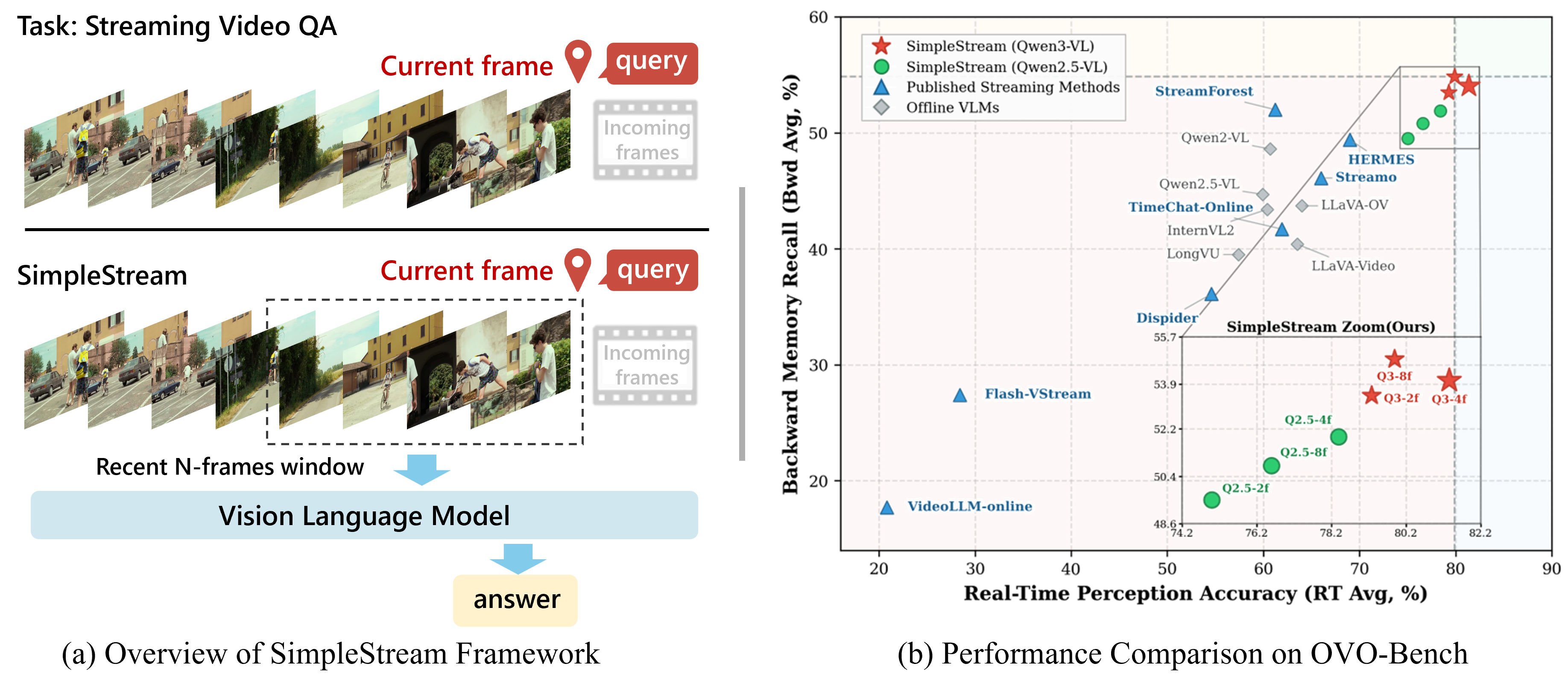

Figure 1: SimpleStream's elegant approach (a) feeds only the most recent N frames to a VLM, consistently achieving top-right performance on perception-memory metrics (b).

This contrasts sharply with the memory-heavy strategies that dominate the current research landscape, which strive to maintain vast historical contexts, often at the expense of immediate relevance.

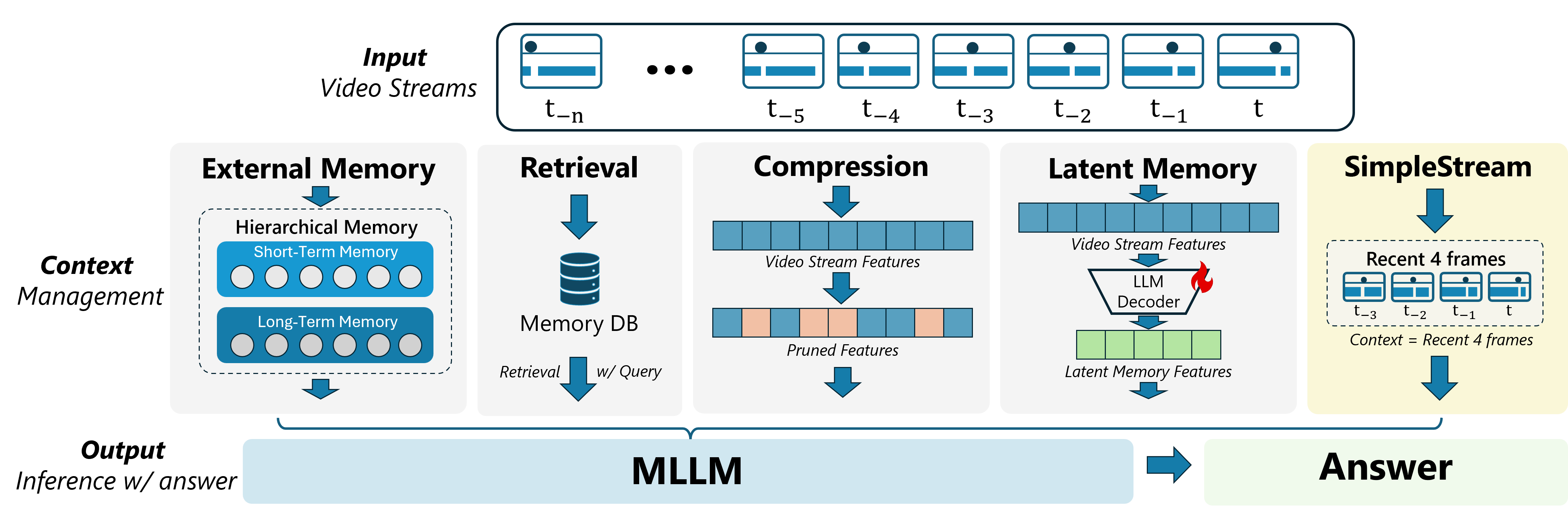

Figure 2: While most methods focus on complex historical information management, SimpleStream keeps it simple with a recent frame window.

The authors found that for many tasks, the information critical for understanding what's happening now is almost entirely contained within the last few seconds of video. Trying to process or retrieve information from too far back can actually dilute the model's focus on the present, impairing its real-time perception. This approach drastically reduces the computational and memory burden.
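A rough back-of-the-envelope calculation shows why a fixed window keeps the visual input bounded. The numbers below are assumptions chosen for illustration (256 visual tokens per frame, 1 sampled frame per second), not figures from the paper, and the "full history" case is a deliberately naive upper bound: it is the cost that memory modules try to avoid by compressing.

```python
def fixed_window_tokens(num_frames: int, tokens_per_frame: int) -> int:
    """Visual tokens sent to the VLM when only the last N frames are kept."""
    return num_frames * tokens_per_frame


def full_history_tokens(elapsed_s: float, sample_fps: float, tokens_per_frame: int) -> int:
    """Visual tokens if every sampled frame since the start were kept uncompressed."""
    return int(elapsed_s * sample_fps) * tokens_per_frame


TOKENS_PER_FRAME = 256  # assumption: depends on the VLM and input resolution
SAMPLE_FPS = 1.0        # assumption: frames sampled per second of video

window_cost = fixed_window_tokens(4, TOKENS_PER_FRAME)
for elapsed_s in (10, 60, 600):
    history_cost = full_history_tokens(elapsed_s, SAMPLE_FPS, TOKENS_PER_FRAME)
    print(f"{elapsed_s:>4}s  window={window_cost:>6}  full history={history_cost:>7}")
```

The window cost stays constant no matter how long the drone has been flying, while the naive history cost grows linearly with flight time.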

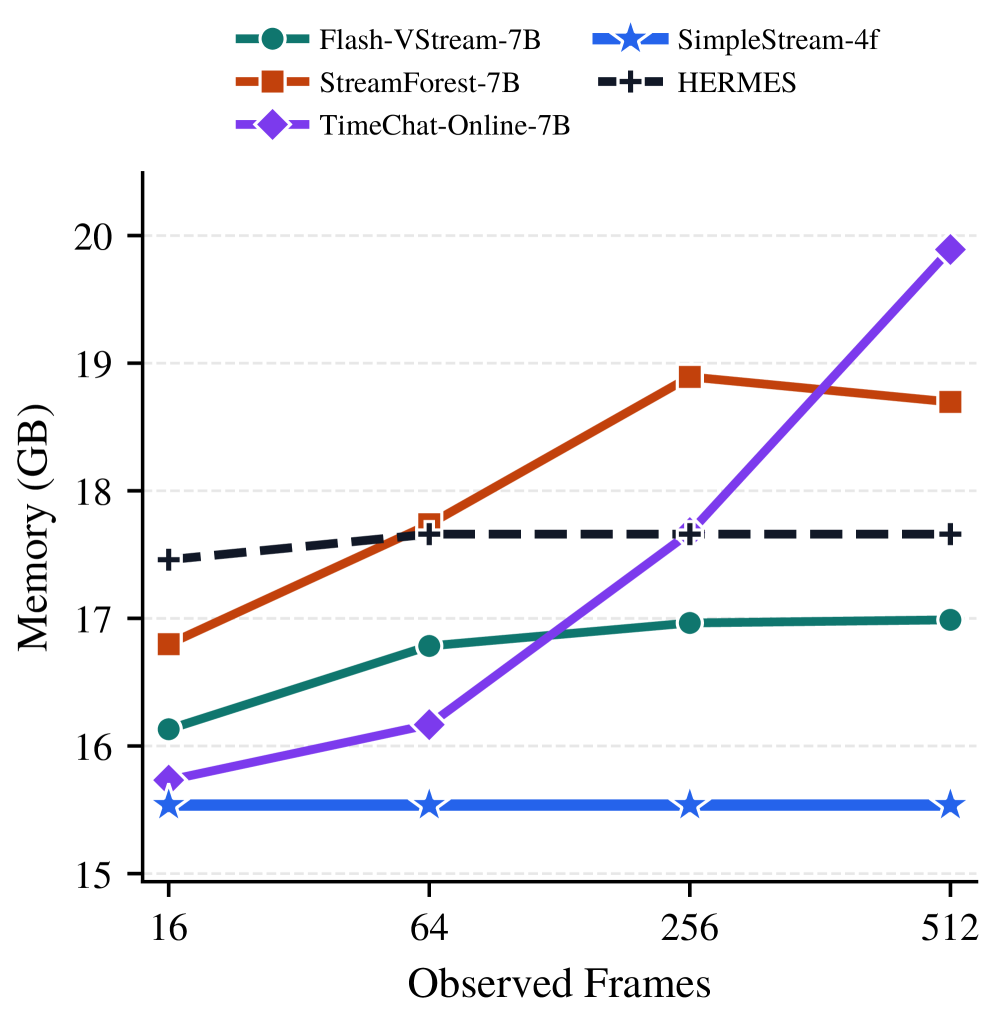

Figure 3: SimpleStream-4f maintains a remarkably low and stable GPU memory footprint, ideal for resource-constrained drone platforms.

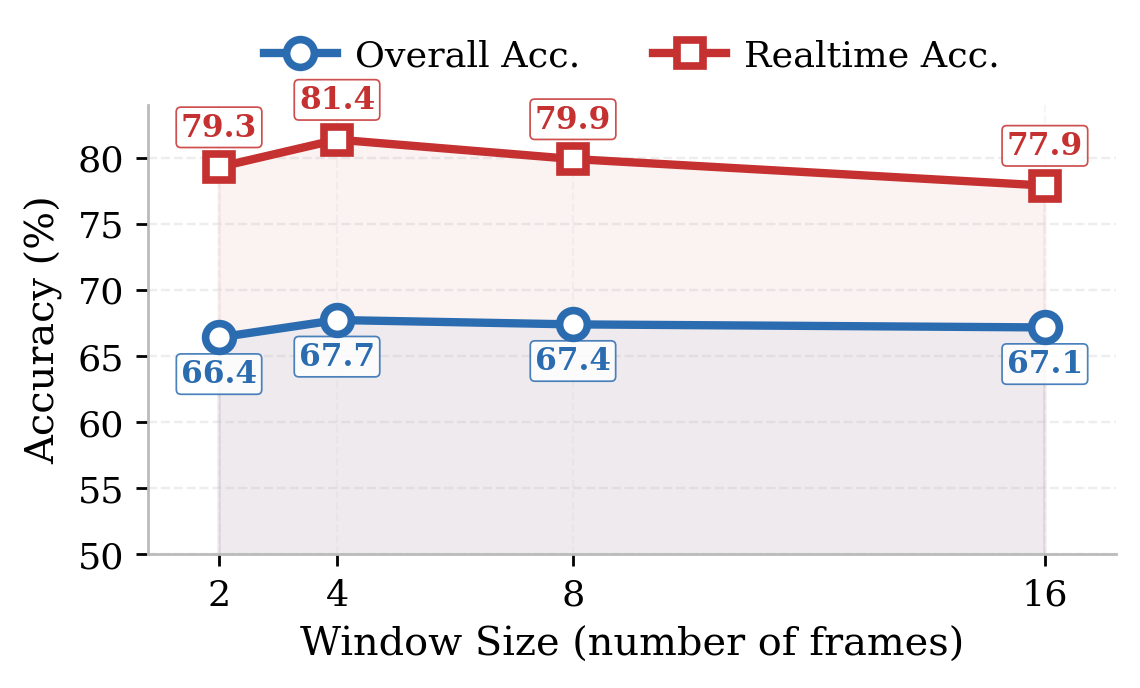

They tested various window sizes (number of recent frames) and found a sweet spot. For many models, 4 frames provided the optimal balance for real-time accuracy, with performance often declining if the window became too wide.

Figure 4: This ablation shows SimpleStream's highest real-time accuracy often occurs with just 4 recent frames, not monotonically increasing with window size.
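If you want to run a similar window-size check on your own model and footage, the sweep itself is simple. The sketch below is an assumption-laden illustration: `sweep_window_sizes` is a hypothetical helper, and `toy_accuracy` is a synthetic scoring function that merely peaks at 4 so the example runs; it is not data from the paper. In practice you would replace it with a function that evaluates your VLM on a validation set at the given window size.

```python
from typing import Callable, Dict, Iterable, Tuple


def sweep_window_sizes(
    candidates: Iterable[int],
    evaluate: Callable[[int], float],
) -> Tuple[int, Dict[int, float]]:
    """Score each candidate window size and return the best one with all scores."""
    scores = {n: evaluate(n) for n in candidates}
    best = max(scores, key=scores.get)
    return best, scores


def toy_accuracy(num_frames: int) -> float:
    # Synthetic placeholder, NOT a measured result: highest at 4 frames,
    # lower for both narrower and wider windows.
    return 1.0 / (1 + abs(num_frames - 4))


best_window, all_scores = sweep_window_sizes([1, 2, 4, 8, 16], toy_accuracy)
print(best_window, all_scores)
```

Because the best window appears to depend on the backbone, it is worth rerunning a sweep like this whenever you swap models instead of hard-coding 4.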

The Numbers Don't Lie: Simplicity Wins

The results are compelling and, frankly, a bit of a reality check for the field. SimpleStream consistently matches or outperforms far more complex models across two major streaming video benchmarks, OVO-Bench and StreamingBench.

- With only 4 recent frames, it achieved an average accuracy of 67.7% on OVO-Bench and 80.59% on StreamingBench.

- It consistently lies on the "upper-right frontier" in perception-memory comparisons, meaning it delivers strong perception with minimal memory overhead.

- Crucially, controlled experiments showed a clear "perception-memory trade-off": adding more historical context can improve overall recall, but often weakens real-time perception. This is a critical distinction for drone autonomy where immediate understanding is paramount.

- The GPU memory usage for SimpleStream-4f is consistently the lowest and flattest among all tested methods, making it highly suitable for edge AI deployments.

- The optimal window size isn't universal; while many models peaked at 4 frames, some larger Qwen3-VL checkpoints preferred 8 or 16 frames, suggesting a backbone-dependent relationship rather than a simple "more is better" rule.

Why This Matters for Your Drone Projects

For the drone community, these findings are significant. This isn't just an academic curiosity; it's a practical blueprint for more efficient and capable autonomous systems:

- Real-time Obstacle Avoidance: A drone needs to understand its immediate surroundings now, not remember what was behind it 30 seconds ago. SimpleStream's focus on real-time perception could translate into faster, more reliable collision avoidance.

- Efficient Object Tracking & Identification: Whether it's tracking wildlife, inspecting infrastructure, or monitoring an event, drones can identify and follow targets with less computational burden. This means longer flight times and smaller, lighter processing units.

- Search and Rescue: Rapidly understanding dynamic scenes, identifying people or objects in distress, without being distracted by irrelevant historical data.

- Resource-Constrained Hardware: Less GPU memory and processing means you can deploy sophisticated VLM capabilities on smaller NVIDIA Jetson modules or even custom FPGA boards, fitting within the tight power and weight budgets of mini drones.

- Simplified Development: With a simpler architecture, integrating AI vision into drone control systems becomes less complex, opening doors for more hobbyists and small teams to build advanced capabilities.

The Flip Side: Limitations and Unanswered Questions

While SimpleStream is a powerful baseline, it's not a silver bullet for every scenario. It's important to understand its boundaries:

- Long-range Memory Tasks: For tasks that genuinely require recalling specific events from a distant past (e.g., "Where did I see that specific bird nest 10 minutes ago?"), SimpleStream, by design, will struggle. It sacrifices long-term memory for immediate perception. The authors themselves suggest future benchmarks should separate these concerns.

- Specific Contexts: While it performs well on general streaming benchmarks, there might be niche applications where very specific historical context is crucial and a simple window isn't enough.

- Still Requires a VLM: While the memory mechanism is simplified, SimpleStream still relies on an off-the-shelf VLM. These models, while powerful, can still be computationally intensive depending on their size. The efficiency gain is in how data is fed, not necessarily in making the VLM itself lighter.

- Generalization to Novel Scenes: The paper doesn't deeply explore how well these models generalize to highly novel or rapidly changing environments without any historical context beyond the immediate window. A drone operating in a completely new, complex environment might benefit from some level of learned environmental understanding, even if implicit.

Building It Yourself: Feasibility for Hobbyists

The beauty of SimpleStream lies in its accessibility. For drone hobbyists and builders, this is genuinely good news:

- Replication: The core idea is simple: grab the latest N frames and feed them to a VLM. If you're already experimenting with OpenCV or PyTorch for video processing, adapting this concept is highly feasible.

- Hardware: The reduced memory footprint (as seen in Figure 3) and computational load make it viable for edge AI hardware. Think NVIDIA Jetson Nano or Jetson Orin series, which are popular for drone development due to their power efficiency and GPU capabilities.

- Open-Source Potential: While the paper doesn't explicitly release SimpleStream as a separate library (it's a baseline method), the components (VLMs, video processing pipelines) are largely open-source. Implementing a sliding window buffer and integrating it with models like Qwen-VL or LLaVA is well within the capabilities of a determined hobbyist or small team. This could be a fantastic starting point for custom drone vision projects.
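As a starting point for the sliding window buffer mentioned above, here is a hedged sketch of a streaming loop that subsamples an incoming frame source and queries a model on the most recent window. Everything named here (`stream_answers`, `stride`, `stub_vlm`) is hypothetical. The frame source is any Python iterable; on a real drone it could be frames read from OpenCV's `cv2.VideoCapture`, and `vlm` would wrap your chosen model's inference call.

```python
from collections import deque
from typing import Any, Callable, Iterable, Iterator, List, Tuple


def stream_answers(
    frames: Iterable[Any],
    vlm: Callable[[List[Any], str], str],
    question: str,
    window_size: int = 4,
    stride: int = 5,
) -> Iterator[Tuple[int, str]]:
    """Yield (frame_index, answer) pairs using only the latest sampled frames."""
    window: deque = deque(maxlen=window_size)
    for index, frame in enumerate(frames):
        if index % stride != 0:
            continue  # subsample: keep every `stride`-th frame only
        window.append(frame)
        # Design choice for this sketch: wait until the window is full
        # before querying, so every answer sees exactly `window_size` frames.
        if len(window) == window_size:
            yield index, vlm(list(window), question)


def stub_vlm(frames: List[Any], question: str) -> str:
    # Stand-in for a real model: reports which frames it was given.
    return f"{frames[0]}..{frames[-1]}"


# Integers stand in for decoded frames from a camera or video file.
results = list(stream_answers(range(30), stub_vlm, "Any obstacle ahead?"))
for index, answer in results:
    print(index, answer)
```

The `stride` and `window_size` values together set how many seconds of video the model sees; both are tuning knobs to adjust against your camera frame rate and the latency of the model.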

The Broader AI Landscape for Drones

This paper's findings resonate with broader trends in efficient AI. For instance, the emphasis on efficient navigation for drones is echoed in "Stop Wandering: Efficient Vision-Language Navigation via Metacognitive Reasoning" by Li et al. (https://arxiv.org/abs/2604.02318). By streamlining the visual input, SimpleStream could provide the precise, real-time environmental understanding needed to prevent drones from 'wandering' inefficiently, making their metacognitive reasoning more effective.

Similarly, the question of what to remember and what to forget is a central theme in "Novel Memory Forgetting Techniques for Autonomous AI Agents" by Fofadiya and Tiwari (https://arxiv.org/abs/2604.02280). SimpleStream essentially implements a very aggressive, yet effective, forgetting technique by only retaining the most recent context, validating the principle that not all memory is good memory.

For practical applications, consider "Deep Neural Network Based Roadwork Detection for Autonomous Driving" by Wullrich et al. (https://arxiv.org/abs/2604.02282). While focused on cars, its real-time detection of roadworks using YOLO and LiDAR highlights how efficient, immediate perception, as championed by SimpleStream, is crucial for critical tasks like obstacle detection or identifying specific features during a drone inspection.

For drones that need a more comprehensive 3D understanding, the efficient 2D perception from SimpleStream could be integrated into frameworks like "Modulate-and-Map: Crossmodal Feature Mapping with Cross-View Modulation for 3D Anomaly Detection" by Costanzino et al. (https://arxiv.org/abs/2604.02328), allowing for efficient processing of multiple sensor streams to build a robust 3D model of anomalies.

This paper is a clear call to action: before we add more complexity to drone AI, let's ensure we're getting the most out of the basics.

Paper Details

Title: A Simple Baseline for Streaming Video Understanding

Authors: Yujiao Shen, Shulin Tian, Jingkang Yang, Ziwei Liu

Published: April 2026 (based on arXiv ID)

arXiv: 2604.02317

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.