SoftMimicGen: Teaching Drones Delicate Manipulation with Synthetic Data

SoftMimicGen introduces an automated data generation system for robots manipulating deformable objects. It enables scalable learning for complex tasks such as folding and threading, reduces the need for extensive real-world trials, and opens new possibilities for drone manipulators.

TL;DR: Training robots to manipulate deformable objects usually requires vast amounts of real-world data, which is slow and expensive to collect. SoftMimicGen bypasses this by automatically generating high-fidelity synthetic data in simulation from a handful of human demonstrations, allowing robots to learn delicate tasks with objects like fabric and rope while greatly reducing the need for physical demonstrations.

Beyond Rigid Grasping: The Frontier of Soft Manipulation

For years, robots have excelled at handling rigid objects, moving them with precision and power. But the real world is messy and soft. Consider a drone that needs to pick a ripe strawberry without bruising it, or a surgical robot manipulating tissue. These tasks demand a 'soft touch': an understanding of how objects deform and react. The paper introduces SoftMimicGen, a system that tackles this challenge by generating, in a virtual environment, the vast datasets needed to teach robots these intricate skills, an approach that could extend to drone manipulators.

The Data Bottleneck for Deformable Objects

Training advanced robot manipulation policies often relies on large datasets, typically gathered through human demonstrations. This works reasonably well for rigid objects: their physics are comparatively predictable and easy to simulate, and their entire configuration is captured by a single six-degree-of-freedom pose. Deformable objects, such as fabric, rubber, or even human tissue, are a nightmare for data collection. Their effectively infinite degrees of freedom, complex contact interactions, and hard-to-predict behavior make real-world data collection time-consuming, expensive, and difficult to scale. Existing simulation approaches also struggle to accurately model these 'soft' scenarios and to generate diverse interaction data for them, leaving a crucial gap for real-world robotic applications.
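
To see why rigid objects are the easy case: a rigid object's state is one 6-DoF pose, so a demonstration recorded relative to the object can be replayed at a new object pose with a single homogeneous transform. The sketch below is a generic illustration of that idea, not code from SoftMimicGen; the helper names `pose` and `retarget_rigid` and the 4x4 world-frame pose convention are our own assumptions.

```python
import numpy as np

def pose(yaw_rad: float, xyz) -> np.ndarray:
    """Build a 4x4 homogeneous transform from a rotation about z and a translation."""
    c, s = np.cos(yaw_rad), np.sin(yaw_rad)
    T = np.eye(4)
    T[:3, :3] = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    T[:3, 3] = xyz
    return T

def retarget_rigid(ee_traj_src: np.ndarray, T_obj_src: np.ndarray, T_obj_tgt: np.ndarray) -> np.ndarray:
    """Replay end-effector poses (T, 4, 4) recorded around an object at T_obj_src
    for the same object placed at T_obj_tgt (all poses in the world frame)."""
    # Express each pose in the object frame, then map it back out at the new object pose.
    T_rel = T_obj_tgt @ np.linalg.inv(T_obj_src)
    return np.einsum("ij,tjk->tik", T_rel, ee_traj_src)

# Object rotated 90 degrees about z: a grasp at x = 1 m moves to y = 1 m.
traj = np.stack([pose(0.0, [1.0, 0.0, 0.2])])
new_traj = retarget_rigid(traj, pose(0.0, [0.0, 0.0, 0.0]), pose(np.pi / 2, [0.0, 0.0, 0.0]))
print(np.round(new_traj[0, :3, 3], 3))
```

For a towel or a rope there is no single pose to plug into `T_obj_tgt`, which is exactly the gap that a deformable-aware pipeline has to fill.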

Mimicking Reality: How SoftMimicGen Works

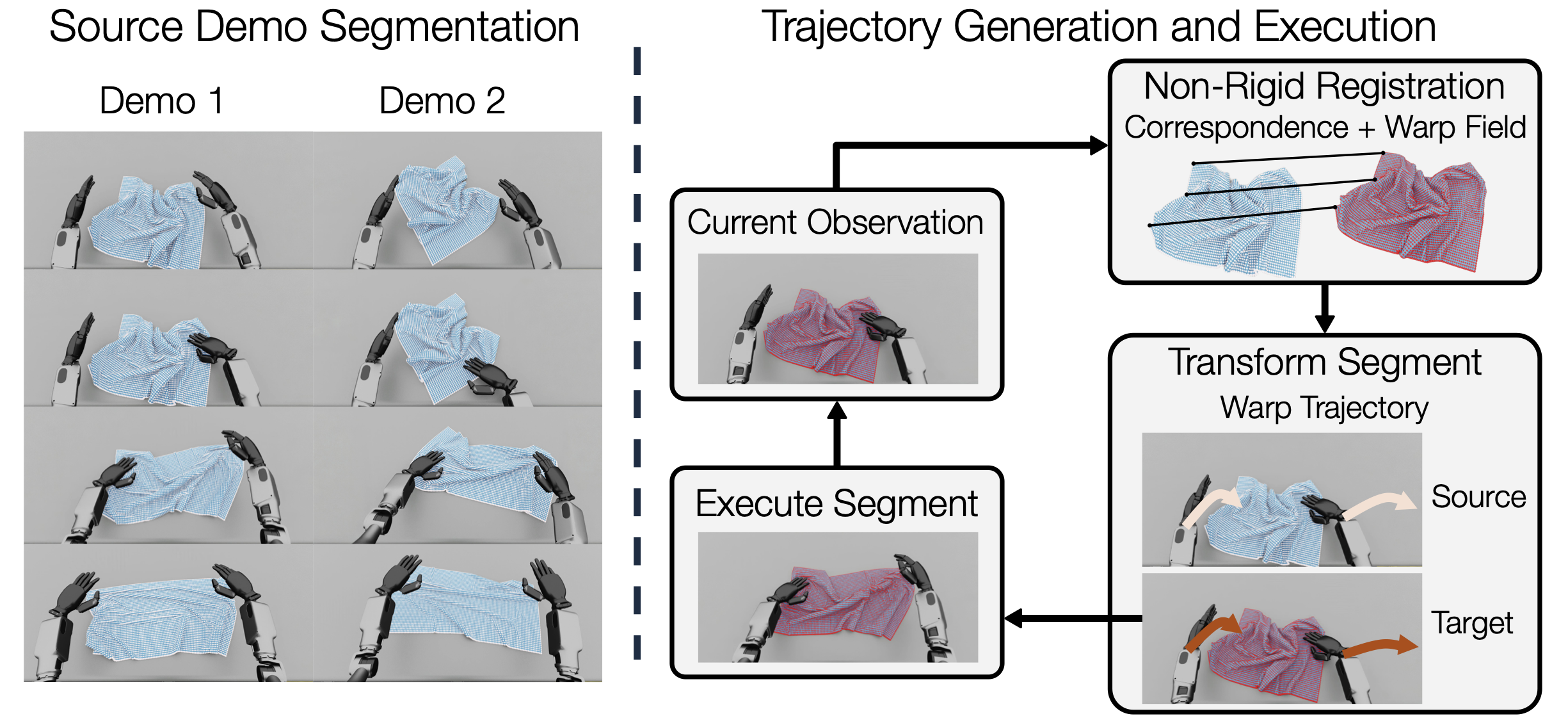

SoftMimicGen proposes an automated pipeline for generating synthetic data specifically for deformable object manipulation. It starts from a few human-teleoperated demonstrations, which are segmented into object-centric subtasks to form a library of source segments. When presented with a new target scene, the system observes the object's current deformable state and performs non-rigid registration between the source geometry and the target geometry, establishing correspondences and a warp field. It then selects the source segment with the lowest registration cost and applies the resulting warp field to that segment's end-effector trajectory. This geometric alignment lets actions recorded on one object configuration be adapted to a new, differently deformed state, which is the critical step for handling materials whose shape cannot be summarized by a single pose.
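
The article does not say how the warp field is represented. As a minimal sketch, assume registration has already produced matched point pairs between source and target geometry; a Gaussian radial-basis-function (RBF) interpolant of their displacements then gives a smooth warp that can be evaluated at every end-effector waypoint. RBF interpolation is a swapped-in technique for illustration, not necessarily what SoftMimicGen uses, and the names `fit_rbf_warp`, `sigma`, and `reg` are hypothetical.

```python
import numpy as np

def fit_rbf_warp(src_pts, tgt_pts, sigma=0.05, reg=1e-6):
    """Fit w(x) = x + sum_i k(x, s_i) a_i with a Gaussian kernel k so that w(s_i) ~= t_i.

    src_pts, tgt_pts: (N, 3) corresponding points on the source and target object.
    sigma: kernel width in meters (hypothetical default); reg: small ridge term for stability.
    """
    src = np.asarray(src_pts, dtype=float)
    disp = np.asarray(tgt_pts, dtype=float) - src  # displacement at each correspondence

    def kernel(a, b):
        d2 = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
        return np.exp(-d2 / (2.0 * sigma**2))

    # Solve (K + reg * I) A = D for the per-point RBF weights A, shape (N, 3).
    weights = np.linalg.solve(kernel(src, src) + reg * np.eye(len(src)), disp)

    def warp(x):
        x = np.atleast_2d(np.asarray(x, dtype=float))
        return x + kernel(x, src) @ weights  # add the interpolated displacement to each point

    return warp

# Hypothetical example: four matched points on a cloth, all shifted 2 cm in x.
src = np.array([[0.0, 0.0, 0.0], [0.1, 0.0, 0.0], [0.0, 0.1, 0.0], [0.1, 0.1, 0.0]])
tgt = src + np.array([0.02, 0.0, 0.0])
warp = fit_rbf_warp(src, tgt)
ee_traj = np.array([[0.05, 0.05, 0.10], [0.05, 0.05, 0.01]])  # approach, then near contact
warped_traj = warp(ee_traj)
```

Only positions are warped here; a fuller version would also have to rotate gripper orientations, for example using the warp's local Jacobian. Because the Gaussian displacement decays with distance, waypoints far from the object are left essentially untouched.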

Figure 1: SoftMimicGen System Pipeline. (Left) Human-teleoperated demonstrations are segmented into object-centric subtasks using manual annotations or heuristic signals, forming a library of source segments. (Right) Given a new target scene, SoftMimicGen (i) observes the current deformable state, (ii) performs non-rigid registration to establish correspondence and a warp field between the source and target geometries (correspondence + warp field), (iii) selects the source segment with the lowest registration cost and applies the resulting warp field to the end-effector trajectory (warp trajectory; source end-effector trajectory in light arrows, warped trajectory in darker arrows), and (iv) executes the warped trajectory in the target environment.
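
Step (iii) in the caption amounts to an argmin over the segment library. The sketch below uses a symmetric Chamfer distance between each segment's stored object point cloud and the observed target cloud as a stand-in cost; in the described system the cost comes from the non-rigid registration itself. The `SourceSegment` container, `chamfer_cost`, and `select_segment` are illustrative assumptions.

```python
from dataclasses import dataclass
import numpy as np

@dataclass
class SourceSegment:
    name: str
    object_points: np.ndarray  # (N, 3) object geometry when the segment starts
    ee_trajectory: np.ndarray  # (T, 3) end-effector positions for the segment

def chamfer_cost(a: np.ndarray, b: np.ndarray) -> float:
    """Symmetric mean squared nearest-neighbor distance between two point clouds."""
    d2 = np.sum((a[:, None, :] - b[None, :, :]) ** 2, axis=-1)
    return float(d2.min(axis=1).mean() + d2.min(axis=0).mean())

def select_segment(library, target_points, cost_fn=chamfer_cost):
    """Return the source segment whose geometry best matches the target, plus its cost."""
    costs = [cost_fn(seg.object_points, target_points) for seg in library]
    best = int(np.argmin(costs))
    return library[best], costs[best]
```

Passing `cost_fn` as a parameter keeps the selection logic independent of whichever registration residual a real pipeline reports.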

Once the warp field is applied, the system executes the warped trajectory in simulation. Repeating this for new target scenes lets SoftMimicGen generate a large number of diverse, high-fidelity manipulation trajectories without any human input beyond the initial few demonstrations. This automated expansion of the dataset is the key to overcoming the traditional data bottleneck, providing the breadth and variety of examples that robust policy learning needs. The authors demonstrate the system on 10 tasks spanning four robot embodiments: a single Franka arm, bimanual YAM arms, a dVRK surgical robot, and a GR1 humanoid.
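
Putting the steps together, the generation loop might look like the following sketch. The simulator hooks `observe`, `register`, and `execute` are hypothetical callables supplied by the user, not a real SoftMimicGen API, and discarding rollouts that `execute` reports as failures is our assumption; the article does not say how unsuccessful executions are handled.

```python
from typing import Callable, List, Sequence, Tuple
import numpy as np

# A registration result: a warp function for points plus its scalar cost.
Registration = Tuple[Callable[[np.ndarray], np.ndarray], float]

def generate_dataset(
    library: Sequence[dict],
    scenes: Sequence[object],
    observe: Callable[[object], np.ndarray],
    register: Callable[[np.ndarray, np.ndarray], Registration],
    execute: Callable[[object, np.ndarray], bool],
) -> List[dict]:
    dataset = []
    for scene in scenes:
        target = observe(scene)  # (i) observe the current deformable state
        # (ii) register every source segment's geometry against the target
        fits = [register(seg["object_points"], target) for seg in library]
        # (iii) keep the lowest-cost segment and warp its end-effector trajectory
        best = min(range(len(library)), key=lambda i: fits[i][1])
        warp, cost = fits[best]
        traj = warp(library[best]["ee_trajectory"])
        # (iv) execute in simulation; keeping only successes is an assumption
        if execute(scene, traj):
            dataset.append({"scene": scene, "source": best, "trajectory": traj, "cost": cost})
    return dataset
```

The human effort is fixed by the size of `library`, while the dataset grows with the number of sampled `scenes`.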

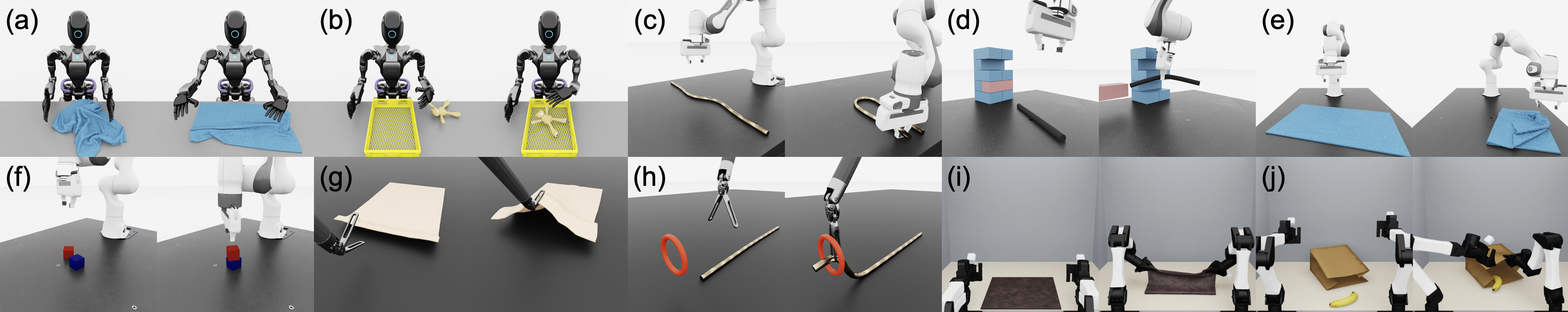

Figure 2: Simulation Tasks. SoftMimicGen is used to generate new demonstrations across 10 challenging tasks involving 4 distinct robot embodiments: (a-b) GR1 humanoid, (c-f) Franka arm, (g-h) dVRK surgical robot, and (i-j) bimanual YAM arms. These tasks demand high precision and fine-grained manipulation to be successfully executed.

Policies That Perform: The Proof is in the Data

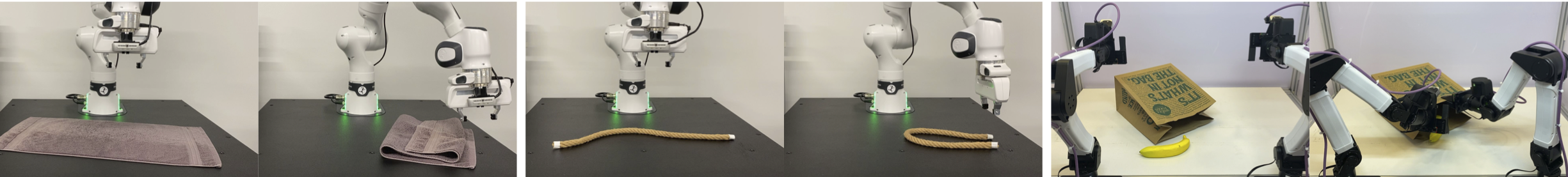

The core strength of SoftMimicGen lies in its ability to produce synthetic datasets that can effectively train high-performing policies. The authors applied their system to generate data across a suite of complex tasks, including threading, whipping, folding, and pick-and-place, involving objects like stuffed animals, ropes, tissues, and towels. Policies trained on this generated data successfully executed these delicate manipulation behaviors across multiple robot platforms, including in real-world deployments. This suggests that synthetic data can substantially reduce real-world data requirements and help policies generalize to scenarios not covered by the initial human demonstrations.

Figure 3: Real-World Deployment. Example rollouts of real-world deformable manipulation tasks using policies trained on SoftMimicGen-generated datasets.

Why This Matters for Drones: A Gentle Touch from Above

For drone enthusiasts and developers, SoftMimicGen opens up significant possibilities beyond traditional aerial robotics. Mini drones equipped with manipulator arms could perform tasks that require a delicate touch. This could include precision crop harvesting, where soft fruits need careful handling, or autonomous delivery of fragile goods where crushing is not an option. In industrial settings, drones could inspect and manipulate flexible cables or fabrics. The ability to simulate these complex interactions could let developers train drone manipulators for delicate tasks with fewer costly real-world failures and far fewer hours of human-supervised flight tests.

This technology could accelerate the development of drones for medical logistics, handling sensitive biological samples or assisting in tasks within sterile environments where physical contact needs to be precise and gentle. It pushes drones beyond just vision and movement, into a realm of nuanced physical interaction with the world around them.

The Road Ahead: Limitations and Unanswered Questions

While SoftMimicGen is a significant step forward, it's important to acknowledge its current boundaries. The system still requires an initial set of human-teleoperated demonstrations. Although few compared with traditional methods, these demonstrations still need to be captured accurately. The fidelity of the simulation itself is also critical; any inaccuracies in the physics engine or material properties could lead to a sim-to-real gap, where policies trained in simulation don't transfer perfectly to the real world. The computational cost of high-fidelity deformable body simulation and non-rigid registration can be substantial, which could limit running these steps in real time on resource-constrained drone hardware.

Furthermore, the current approach focuses on replicating and adapting known manipulation behaviors. It doesn't inherently discover novel manipulation strategies, which might be necessary for truly unprecedented tasks. Generalizing to highly novel objects, or to scenarios very different from those in the source demonstrations, will likely remain a challenge.

Written by

Mini Drone Shop AI
Sharing knowledge about drones and aerial technology.