Your Drone, Your Style: AI Learns Personalized Flight Preferences

New research demonstrates a Vision-Language-Action model that learns and applies individual preferences to vehicle control, paving the way for drones that fly exactly how you like, not just where you tell them.

Flying Your Way, Not Just the AI's Way

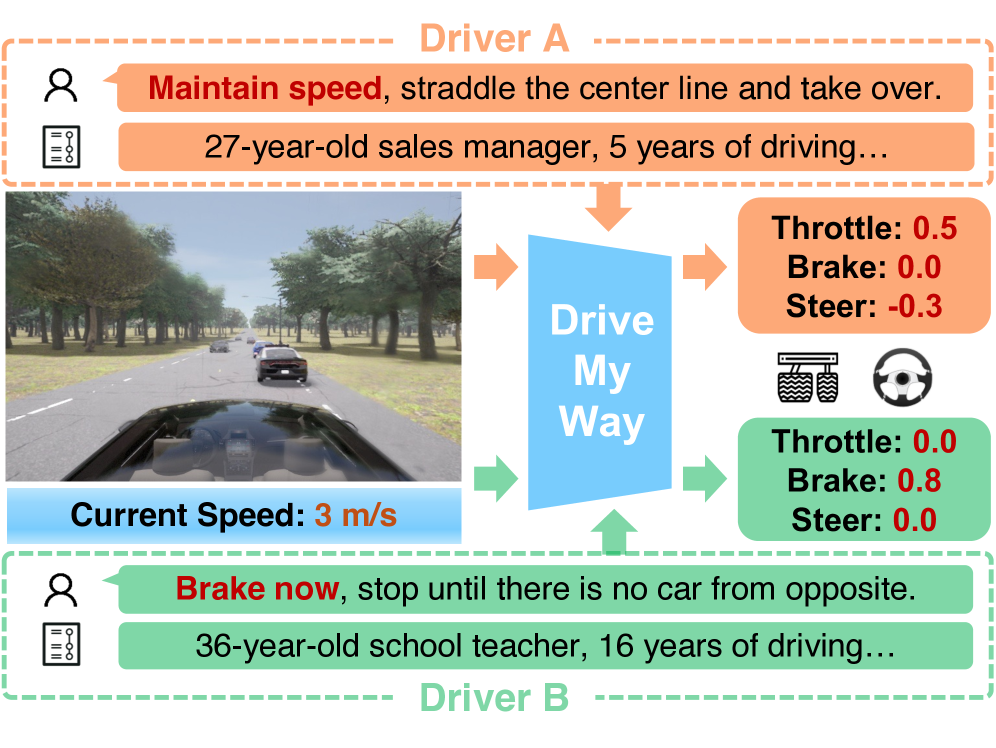

TL;DR: This research introduces "Drive My Way" (DMW), an AI framework that learns and applies a driver's unique long-term habits and short-term linguistic instructions to control a vehicle. It offers personalized autonomous driving by creating a user embedding to tailor acceleration, braking, and steering, moving beyond generic driving modes.

Forget drones that just follow a pre-programmed path or respond to basic joystick inputs. What if your drone could actually learn your unique flight style – not just what you want it to do, but how you prefer it to execute maneuvers? This isn't science fiction; new research from Zehao Wang and team, "Drive My Way," demonstrates a Vision-Language-Action (VLA) model that aligns with individual preferences, initially for cars, but with clear implications for autonomous drones.

The Generic vs. The Personal

Current autonomous systems, whether for cars or drones, largely operate on generic objectives. They aim for efficiency, safety, or speed, but rarely blend these with a specific user's preference. This means a drone might execute a turn safely, but not with the aggressive bank angle you prefer for FPV racing, or the gentle curve you like for cinematic shots.

The lack of personalization forces users to constantly override or manually adjust, making "autonomous" less autonomous and more like a sophisticated assistant that doesn't quite get you. Building truly personalized systems is complex; it requires understanding subtle cues and adapting to varying intentions, something fixed driving modes simply can't achieve. This is a crucial gap DMW aims to fill.

How DMW Learns Your Style

The "Drive My Way" (DMW) framework tackles this by learning a user's long-term driving habits and combining them with real-time natural language instructions. The core idea revolves around creating a unique "user embedding" – essentially a digital fingerprint of your driving or flying style. This embedding is derived from a personalized dataset that tracks how individual drivers behave across various scenarios.

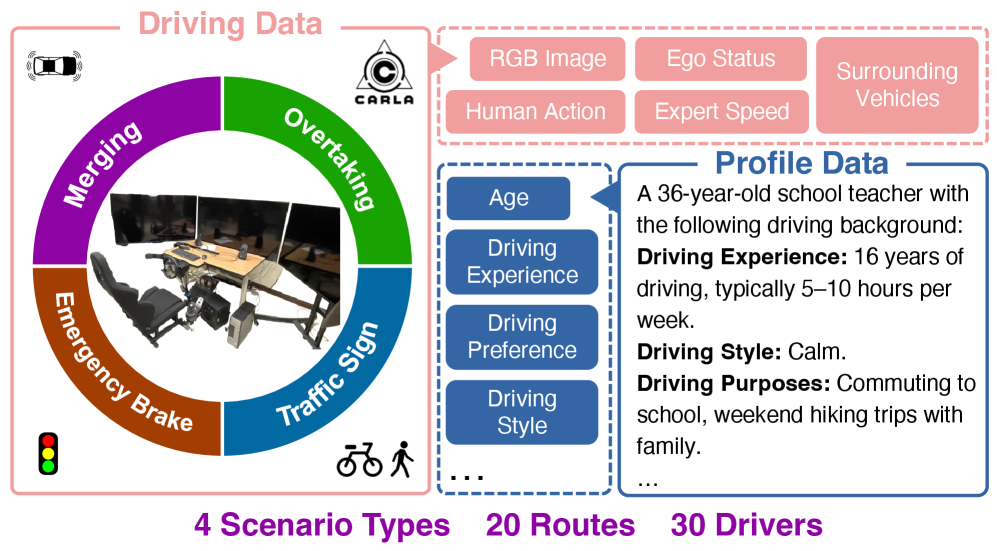

An overview of the Personal Driving Dataset, which consists of the driving data and structured driver profile data.

DMW uses a Vision-Language-Action (VLA) backbone. This system takes in several inputs: front-view camera images (for environmental context), natural language instructions (like "drive aggressively" or "take it easy"), route target points (the overall destination), and crucially, the learned user profile.

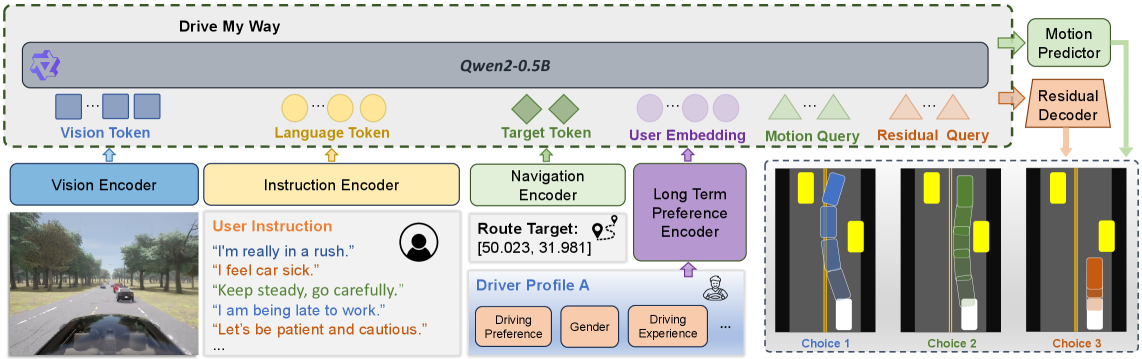

An overview of the DMW framework with a pretrained VLA backbone. The model takes in front-view camera images, instructions, route target points, and user profile as inputs, while the motion predictor outputs route and speed waypoints, which derive the base action (throttle, steer angle). The residual decoder outputs a discrete residual applied to the base to produce the final personalized action.

A motion predictor then outputs a base set of route and speed waypoints, translating into basic actions like throttle and steer angle. Here's where personalization kicks in: a "residual decoder" applies a discrete residual adjustment to these base actions, refining them to match the user's specific style embedded in their profile. This residual correction ensures the drone, or car, doesn't just execute the command, but executes it your way.
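
To make the residual step concrete, here is a minimal sketch of adding a discrete residual to a base action. The bin values, the `Action` type, and the `apply_residual` helper are all hypothetical and chosen only for illustration; the paper's actual bins and decoder are not described in this article.

```python
from dataclasses import dataclass

# Hypothetical residual bins; the real values used by DMW are not given here.
THROTTLE_BINS = [-0.2, -0.1, 0.0, 0.1, 0.2]
STEER_BINS = [-0.10, -0.05, 0.0, 0.05, 0.10]


@dataclass
class Action:
    throttle: float  # 0.0 (none) to 1.0 (full)
    steer: float     # -1.0 (full left) to 1.0 (full right)


def clamp(value: float, low: float, high: float) -> float:
    """Keep a value inside the closed interval [low, high]."""
    return max(low, min(high, value))


def apply_residual(base: Action, throttle_idx: int, steer_idx: int) -> Action:
    """Add the selected discrete residuals to the base action, then clip."""
    return Action(
        throttle=clamp(base.throttle + THROTTLE_BINS[throttle_idx], 0.0, 1.0),
        steer=clamp(base.steer + STEER_BINS[steer_idx], -1.0, 1.0),
    )


# The same base action, nudged two different ways.
base = Action(throttle=0.5, steer=0.0)
aggressive = apply_residual(base, throttle_idx=4, steer_idx=2)
gentle = apply_residual(base, throttle_idx=0, steer_idx=2)
print(aggressive, gentle)
```

In a real system the bin indices would come from the learned residual decoder rather than being picked by hand. Clipping after the addition keeps the personalized action inside the range the controller accepts.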

The VLA backbone at the heart of DMW is a pretrained neural network that interprets several input modalities at once. In the paper it processes front-view camera images, natural language instructions, and route target points; a drone adaptation would instead feed it onboard camera frames, a pilot's voice or text commands, and navigational data like GPS waypoints. This multimodal integration lets the system form a joint picture of the current situation and the user's intent. The "residual decoder" then modifies the base action according to the personalized user embedding. Rather than a fixed offset, the adjustment is learned and context-dependent, so that characteristics such as the acceleration curve and turning radius align with your style.

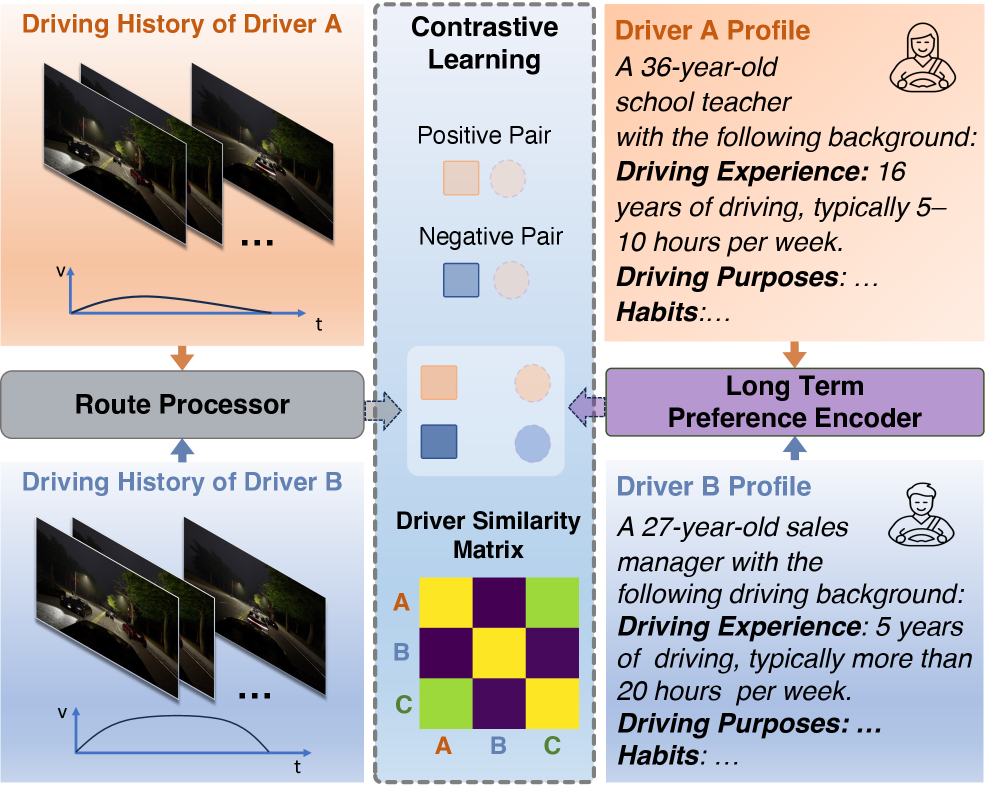

The long-term preference alignment is achieved through a contrastive learning mechanism. This trains the system to recognize and differentiate between various user styles, ensuring the user embedding accurately captures unique habits.

The contrastive learning mechanism on the long-term preference encoder and route processor.
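
As a rough illustration of contrastive learning over style embeddings, the sketch below uses InfoNCE, a common contrastive objective. This is a stand-in: the article does not state which loss the paper uses, and the toy 2-D embeddings and function names are assumptions made for the example.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE loss: -log(exp(s_pos / t) / sum_k exp(s_k / t))."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    max_logit = max(logits)  # subtract the max for numerical stability
    log_sum = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
    return log_sum - logits[0]


# Toy embeddings: two clips from driver A and one clip from driver B.
driver_a_clip1 = [1.0, 0.1]
driver_a_clip2 = [0.9, 0.2]
driver_b_clip = [0.1, 1.0]

# Low loss: the positive really is the same driver.
loss_matched = info_nce(driver_a_clip1, driver_a_clip2, [driver_b_clip])
# High loss: the "positive" is a different driver.
loss_mismatched = info_nce(driver_a_clip1, driver_b_clip, [driver_a_clip2])
print(loss_matched < loss_mismatched)
```

Minimizing a loss of this shape pulls embeddings of the same user together and pushes different users apart, which is the property the user embedding needs.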

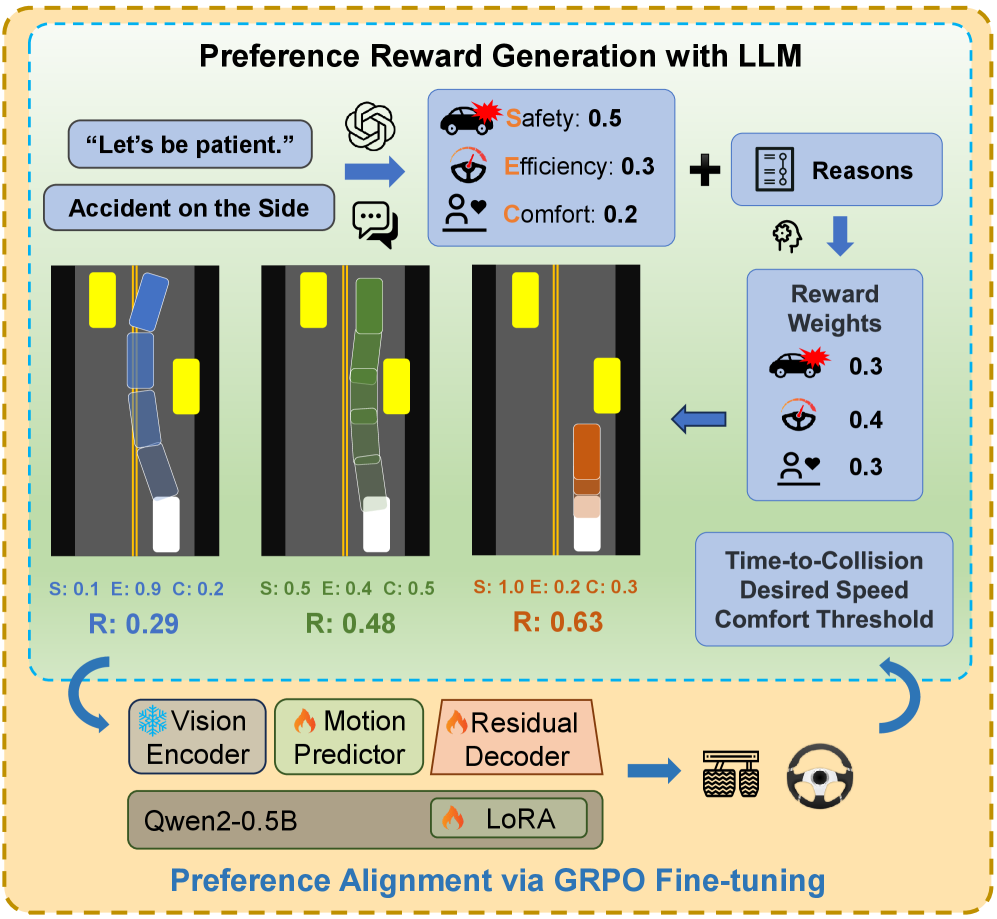

Short-term instructions are integrated via fine-tuning and reward generation. If you tell the system to "be aggressive," it adjusts its behavior in real-time within the bounds of your learned style. This dual approach – long-term habit learning plus short-term instruction adaptation – is key to DMW's flexibility.

The fine-tuning process and reward generation for short-term instruction alignment.
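
The article does not spell out how DMW generates its rewards, so the following is only a toy sketch of what a style-dependent reward could look like. The `style_reward` function, the speed cap, and the roughness weight are invented for illustration and are not taken from the paper.

```python
def style_reward(speeds_mps, instruction, speed_cap_mps=15.0, roughness_weight=0.1):
    """Score a rollout's speed profile against a style instruction (hypothetical)."""
    mean_speed = sum(speeds_mps) / len(speeds_mps)
    # Mean absolute speed change between steps, used as a crude roughness measure.
    steps = [abs(b - a) for a, b in zip(speeds_mps, speeds_mps[1:])]
    roughness = sum(steps) / len(steps) if steps else 0.0
    speed_ratio = min(mean_speed / speed_cap_mps, 1.0)
    if instruction == "aggressive":
        # Faster rollouts score higher.
        return speed_ratio
    if instruction == "conservative":
        # Slower and smoother rollouts score higher.
        return (1.0 - speed_ratio) - roughness_weight * roughness
    raise ValueError(f"unknown instruction: {instruction!r}")


fast = [10.0, 12.0, 14.0]
slow = [5.0, 5.5, 6.0]
print(style_reward(fast, "aggressive") > style_reward(slow, "aggressive"))
print(style_reward(slow, "conservative") > style_reward(fast, "conservative"))
```

The point of the sketch is that the same rollout receives a different score depending on the instruction, which gives fine-tuning a signal for following "aggressive" versus "conservative" commands.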

Drive My Way (DMW) achieves end-to-end personalized driving via both long-term preference alignment and short-term style instruction adaptation.

Quantifying Style: The Numbers

The DMW framework was evaluated on the Bench2Drive benchmark, specifically focusing on its ability to adapt to style instructions. The results are compelling:

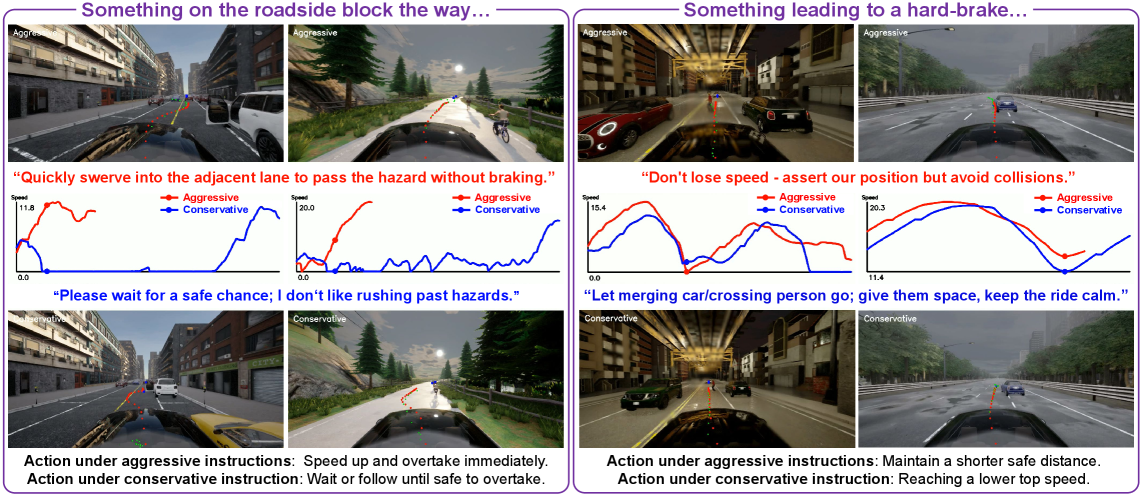

- Improved Style Instruction Adaptation: DMW significantly outperforms baseline VLA models in adapting to various driving styles like "aggressive" or "conservative."

- User Recognizability: Human user studies confirmed that the generated driving behaviors were consistently recognizable as each driver's unique style, demonstrating true personalization.

- Reduced Overrides: The abstract reports no override figures, so this is an inference rather than a measured result. A system that already matches a user's preferences should need less manual intervention and mode switching.

- Generalization Across Conditions: The personalized driving dataset was collected across multiple real drivers and conditions, suggesting the learned embeddings are robust and generalize well.

Driving preference under aggressive and conservative instructions. Red waypoints denote distance parametrized (every 1 m), navigation path and green waypoints denote time parametrized (every 0.25 s) trajectory.
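
Because the green waypoints are spaced 0.25 s apart in time, the distance between consecutive points encodes speed. The short sketch below shows that arithmetic; the waypoint coordinates are made-up examples, not data from the paper.

```python
import math

DT_S = 0.25  # time spacing of the time-parametrized waypoints, from the caption


def speeds_from_waypoints(waypoints, dt=DT_S):
    """Average speed (m/s) over each interval between consecutive (x, y) points."""
    return [math.dist(p, q) / dt for p, q in zip(waypoints, waypoints[1:])]


# Made-up straight-line trajectories: wider spacing means higher speed.
aggressive_traj = [(0.0, 0.0), (2.5, 0.0), (5.0, 0.0)]
conservative_traj = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]

print(speeds_from_waypoints(aggressive_traj))    # [10.0, 10.0]
print(speeds_from_waypoints(conservative_traj))  # [4.0, 4.0]
```

This is why the same route can look identical in the red, distance-parametrized path while the green points bunch together under a conservative instruction and spread out under an aggressive one.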

Why This Matters for Drones

The same approach could carry over to a new generation of autonomous drones, although DMW itself was demonstrated only on cars. Think beyond simply following a GPS path. An FPV racing drone could learn your specific cornering lines, throttle feathering, and dive profiles, then execute them autonomously with your signature flair. For cinematic drones, it means teaching the drone your preferred camera movements, smooth transitions, or dynamic tracking shots – not just hitting a waypoint, but composing the shot with your artistic touch. This could extend to industrial applications too, where drones performing inspections could learn an operator's preferred scanning patterns or stability requirements, improving consistency and efficiency based on expert human input. The potential for a drone that feels like an extension of your own piloting skill is immense.

The Unresolved: Limitations and What's Next

While DMW is a significant step, it faces several practical hurdles for real-world drone deployment.

- Data Collection Scale: The paper mentions a personalized driving dataset, but collecting similar detailed, multi-driver, multi-condition drone flight data would be a massive undertaking. Unlike cars, drones operate in 3D space with more complex dynamics and environmental interactions (e.g., wind, turbulence).

- Computational Overhead: VLA models, especially with real-time preference alignment, are computationally intensive. For a drone, this means significant processing power, which directly impacts weight, power consumption, and ultimately flight time. Edge AI optimization would be critical.

- Safety and Edge Cases: Personalization must always be balanced with safety. What happens when a user's "aggressive" preference conflicts with a critical safety protocol, like avoiding a sudden obstacle? The paper doesn't detail how these conflicts are resolved or how safety guarantees are maintained within a personalized framework.

- Transferability of Driving Preferences to Flight: While the concept holds, the specifics of "accelerate, brake, merge, yield, and overtake" in cars don't directly map to drone flight dynamics (pitch, roll, yaw, throttle, altitude). New preference vectors and mapping mechanisms would be needed for flight control. The authors acknowledge the human driving context, and adaptation to airborne systems would require specific research.

- Ethical and Bias Considerations: If the system learns preferences from real human pilots, it risks encoding and perpetuating biases. For instance, if a dataset primarily features aggressive pilots, a "safe" instruction might still result in overly dynamic flight compared to a truly cautious style. Ensuring fairness and preventing unintended negative behaviors from being learned and replicated is a complex ethical challenge that personalized AI systems must address.

Can You Build This at Home?

Replicating DMW for a hobbyist drone would be challenging but not entirely out of reach for advanced builders. The core VLA backbone likely leverages existing foundation models, which might be accessible. However, developing the "personalized driving dataset" for a drone, complete with diverse flight styles and conditions, is a major barrier. You'd need a robust data logging setup, possibly with a motion capture system or high-fidelity sensor fusion, to accurately capture your unique flight behaviors. The Bench2Drive benchmark is for cars, so a drone-specific benchmark would also be needed for evaluation. The authors have stated that their data and code will be available at https://dmw-cvpr.github.io/, which is a huge plus for anyone looking to experiment, though adapting it from car-specific implementations to drone flight dynamics would require significant engineering effort.

For hobbyists with deep pockets and significant expertise, setting up a DMW-like system for a drone involves several high-end components. Beyond the onboard single-board computer (like a Jetson AGX Orin or NVIDIA Clara Holoscan for higher-end processing, if available in a drone form factor), you'd need a high-quality stereo camera or LiDAR for robust environmental perception, especially for accurate depth estimation. Integrating this with an open-source flight controller like ArduPilot or PX4, modified to accept high-level VLA outputs, would be a major firmware project.

The data collection aspect remains the most daunting. You'd need a highly instrumented drone capable of logging precise flight states (attitude, velocity, acceleration, motor commands) synchronized with video feeds and verbal commands. This level of data logging typically requires specialized data acquisition hardware and careful calibration, pushing it beyond the typical hobbyist's bench.

The promise of open-sourced code from the authors is a good starting point, but the leap from car to drone domain is non-trivial, demanding expertise in both robotics and machine learning.
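
For the logging problem described above, one small building block is matching each video frame to the closest logged flight state. The sketch below assumes a hypothetical `FlightState` record and a log already sorted by timestamp; a real setup would also have to handle clock offsets between devices, which this example ignores.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class FlightState:
    t: float         # timestamp in seconds
    roll: float      # attitude angles in radians
    pitch: float
    yaw: float
    throttle: float  # 0.0 to 1.0


def nearest_state(states, frame_t):
    """Return the logged state whose timestamp is closest to a video frame time.

    Assumes `states` is non-empty and sorted by timestamp.
    """
    times = [s.t for s in states]
    i = bisect_left(times, frame_t)
    if i == 0:
        return states[0]
    if i == len(states):
        return states[-1]
    before, after = states[i - 1], states[i]
    # On a tie, prefer the earlier sample.
    return before if frame_t - before.t <= after.t - frame_t else after


log = [
    FlightState(0.0, 0.00, 0.00, 0.0, 0.40),
    FlightState(0.1, 0.05, 0.01, 0.0, 0.45),
    FlightState(0.2, 0.10, 0.02, 0.0, 0.50),
]
print(nearest_state(log, 0.14).t)
```

Pairing frames and states this way yields the (image, state, action) tuples a personalized flight dataset would need, though the quality of the result still depends on how well the underlying clocks are synchronized.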

Context: The Broader AI Landscape

This work on personalized autonomous systems doesn't exist in a vacuum. The need for vast, specialized datasets for training is a recurring theme, as seen in "SoftMimicGen: A Data Generation System for Scalable Robot Learning in Deformable Object Manipulation" by Moghani et al. (https://arxiv.org/abs/2603.25725). While focused on manipulation, SoftMimicGen tackles the very real problem of generating synthetic data efficiently, which is crucial for training complex models like DMW without intractable human effort in the drone world. Similarly, the underlying Vision-Language-Action models rely on robust multimodal reasoning. Zhang et al.'s "R-C2: Cycle-Consistent Reinforcement Learning Improves Multimodal Reasoning" (https://arxiv.org/abs/2603.25720) directly enhances the reliability of these core AI systems, ensuring a personalized drone accurately perceives and acts upon learned preferences. Furthermore, the visual perception component, powered by Vision Foundation Models (VFMs), benefits from advancements like "MuRF: Unlocking the Multi-Scale Potential of Vision Foundation Models" by Zou et al. (https://arxiv.org/abs/2603.25744). MuRF's ability to handle multi-scale inputs means a drone's VFM can better interpret its environment, from distant landmarks to close-up obstacles, which is fundamental for any personalized flight.

The move from "what" to "how" in autonomous systems marks a significant step toward truly intelligent, user-centric drone operation, bringing us closer to drones that don't just fly, but fly like you.

Paper Details

Title: Drive My Way: Preference Alignment of Vision-Language-Action Model for Personalized Driving

Authors: Zehao Wang, Huaide Jiang, Shuaiwu Dong, Yuping Wang, Hang Qiu, Jiachen Li

Published: March 2026 (based on arXiv date format in link)

arXiv: 2603.25740

Written by

Mini Drone Shop AI

Sharing knowledge about drones and aerial technology.